In the battle to bring AI home sooner, tech giants Microsoft, Google, Apple and Facebook have joined the likes of Nvidia, Qualcomm, Intel and Broadcom in building their own AI chips to power their hardware. Over the last few years, there has been frenetic activity in this space, with tech titans becoming chipmakers to support the AI capabilities of their products and improve their AI systems.

Legacy giants need chips in their data centres to train powerful neural networks, and they also need chips in their products to help devices run AI capabilities smoothly. Hence, the shift from mobile to AI is seeing tech titans produce AI-focused chips. Today, Google, Apple and Microsoft have some of the most visible projects in the AI space, with announcements made over the last two years.

Tech companies turning chipmakers

Leading the race is the search giant Google, whose Tensor Processing Unit (TPU) is built to accelerate neural network workloads. The chip helps Google deliver faster response times for search and voice search, and it played a pivotal role in AlphaGo beating a reigning champion at Go, the ancient Chinese board game. At a recent I/O conference, Google CEO Sundar Pichai revealed a second-generation AI chip, designed in-house, that can both train and execute the deep neural networks that power automated translation, image and speech recognition and even robotics. Google's move to produce Cloud TPUs puts it in direct competition with NVIDIA, the gaming giant that adapted its GPUs for AI data processing. Google has reportedly also opened Cloud TPUs to businesses and made the TensorFlow Research Cloud available free of charge for research work.

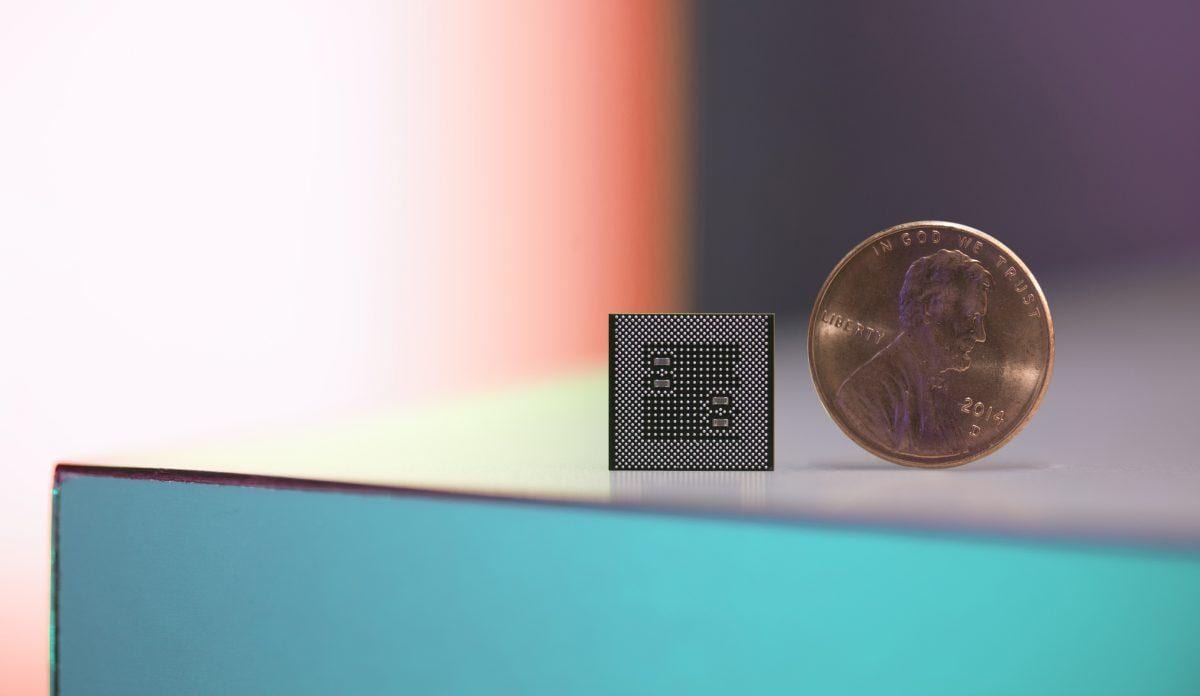

On the other hand, the Cupertino giant, a company that is highly secretive about its developments, was recently in the news for developing an Apple Neural Engine chip to add more advanced AI capabilities across its products. Most AI tasks, such as speech recognition, can drain the battery, and the iPhone maker plans to use the AI chip in conjunction with the standard processor and graphics chip to reduce the impact on battery life.

Redmond giant Microsoft, too, has designed its own chip to boost the AI capabilities of its latest invention, the HoloLens, a holographic computer and head-mounted display that enables users to interact with holograms.

Google and Microsoft’s projects are the most visible part of a new AI-chip industry springing up to challenge established semiconductor giants such as Intel and Nvidia.

From gaming to AI – here’s how NVIDIA started the revolution

The gaming giant won the AI chip market when it adapted the GPUs that powered video game graphics to handle other types of large computations. Today, GPUs have become the go-to hardware in data centres for running companies' machine learning systems, with NVIDIA's GPUs deployed by Microsoft, Amazon and Alibaba, among others.

Unlike other players such as Intel and Advanced Micro Devices, NVIDIA identified a high-growth opportunity in deploying GPUs for compute-intensive workloads and deep learning, and started releasing early versions of its CUDA platform to university researchers. The company bulked up on talent, snagging top performers from SGI (the computer graphics company now owned by HPE) and from leading graduate programs, and trained its eyes on making NVIDIA products that could support machine learning algorithms. Known for its killer marketing strategy, the Santa Clara-headquartered gaming company now also develops processors that power autonomous capabilities in cars.

Build vs Buy

Why are companies pushing to build their own chips when players such as Intel, NVIDIA and ARM already exist? The race for AI is driven by the underlying need for more computing power to train deep neural networks that can analyse vast amounts of data. This means supplementing the CPU with an additional processor: Google's TPU, or, in the case of Microsoft, a Field Programmable Gate Array (FPGA). Meanwhile, rumours are rife that Apple has bought its own silicon chip fabrication unit and shunned its process development partner in order to develop AI chips for its smartphones.

The biggest reason is that companies want more autonomy to tailor chip design and production to their own needs. Apple, for instance, wants to tune its hardware to the functionality it plans to build into its new line of iPhones. Google's TensorFlow Lite, a version of TensorFlow developed for mobile, addresses almost the same set of use cases that Apple is working on.

How are tech giants closing the gap in the battle of chips?

With AI-related growth taking off, especially in areas such as healthcare, automotive and retail, legacy companies are quick to snap up startups they see as AI enablers. These startups are also pegged as potential AI stocks that can change the market rapidly and bring next-generation technology to market faster. Case in point: semiconductor giant Intel acquired chipmaker Altera in 2015, an acquisition driven by the rise of AI.

Earlier this year, Intel paid a whopping $15.3 billion for Israeli chip startup Mobileye, and Intel is reportedly moving its autonomous driving team to the startup's headquarters to get the technology to market faster. Other promising startups in this arena are UK-based Graphcore, a machine learning chipmaker that recently closed a $30 million round of funding and counts DeepMind CEO Demis Hassabis among its backers, and Wave Computing, which is also focused on machine learning chips. Graphcore's USP lies in making chips that are designed around machine learning algorithms, as opposed to NVIDIA's chips, which were originally meant for powering video games.

Will it phase out cloud?

With Google putting all its might behind Cloud ML, Cloud TPUs could help it jostle with AWS for the position of number one cloud computing partner. Analysts point out that the next generation of web-based computing services could reduce the need for cloud servers. A host of next-gen technologies such as VR call for powerful AI chips in devices that are used for autonomous intelligence in a wide range of settings, including manufacturing, retail and logistics.

Instead of depending on data centres to process responses, companies want to outfit VR headsets with AI chips to reduce time lag and speed up the responses.

Steady growth in AI over the last few years, together with emerging technologies, has compelled tech behemoths to expand their ecosystems by flexing their muscles and experimenting with powerful AI chips, built in-house or in collaboration, to power their services and products.