Deep learning has emerged as a leading machine learning tool in computer vision and has attracted considerable attention in biomedical image analysis. There is a renewed interest in medical image computing, and here, deep learning has proved to be effective in its ability to handle complex microscopic images.

Google’s latest research covers two important aspects:

- Basics of machine learning for analysing microscope images

- Handling out-of-focus images with the help of a pre-trained TensorFlow model with plug-ins from the Google Accelerated Science team

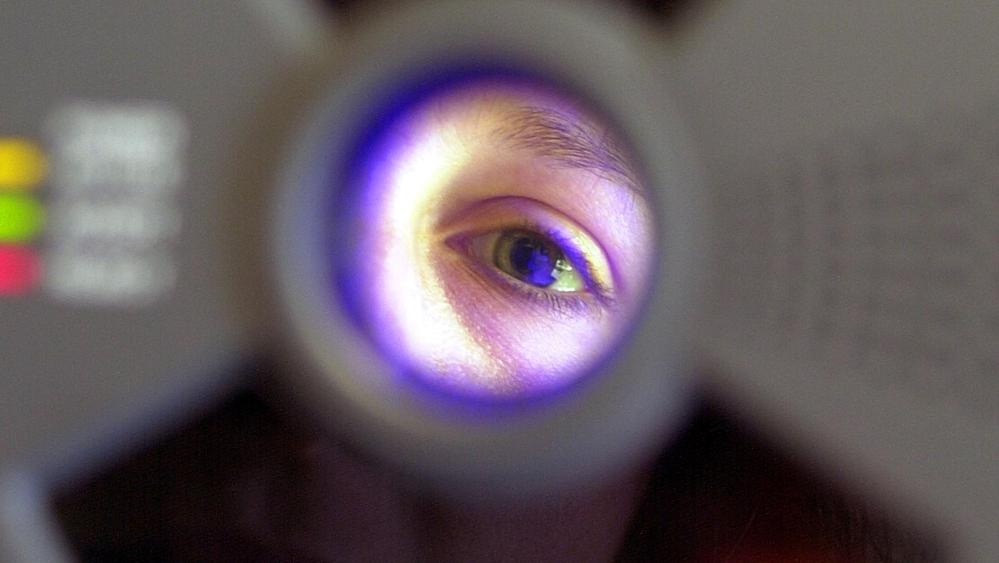

Deep neural networks already play an important role in microscopic image analysis tasks such as detection, segmentation, and classification. Google’s latest research offers a route for scientists eager to apply cutting-edge deep learning techniques to advanced image analysis work. It tackles an important challenge: out-of-focus images. According to a post by Google, even with autofocus systems on state-of-the-art microscopes, poor configuration or hardware incompatibility can produce substandard images, which leads to quality issues. Google’s deep learning researchers have automated a technique for rating focus quality, which enables the detection, troubleshooting and removal of such images.

The paper Assessing Microscope Image Focus Quality With Deep Learning introduces a deep neural network model that can determine, on a small 84 × 84 image patch (several times the area of a typical cell), the extent of the image blur and whether the blur is even well-defined, which it is not when the patch contains only background. The research is geared towards precise and accurate automatic assessment of microscope focus quality.

There is a range of commercial off-the-shelf solutions for low-quality image detection, but microscopic images pose a more complex challenge. According to the Google research paper:

- Most microscope images are shift and rotation invariant

- They vary in offset (black level), pixel gain and photon noise

- In fluorescence microscopy, one of the different microscopy imaging modalities, an image may correspond to one of many possible fluorescent markers each labeling a specific morphological feature

- For scientists, gathering high-quality optical microscopy images can be a challenge, since individual images can be out of focus and noisy

- These types of image degradation may affect only a small fraction of a dataset that is too large to survey manually, especially in high-content screening applications

Implementation

Google researchers started with a dataset consisting of in-focus and multiple out-of-focus images of U2OS cancer cells. The images had little in common: focus varied from image to image, and many regions consisted of just background. With this data, researchers trained a model that could identify, on a small 84 × 84 image patch, both the severity of the image blur and whether the image blur is even well-defined.
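To make the patch-based setup concrete, here is a minimal Python sketch of cutting an image into 84 × 84 tiles. The function names, the use of non-overlapping tiles and the decision to drop partial tiles at the edges are assumptions made for illustration; the article does not describe how the researchers actually sampled patches.

```python
from typing import Iterator, List, Sequence, Tuple

PATCH_SIZE = 84  # patch edge length in pixels, as quoted in the article


def patch_origins(height: int, width: int,
                  patch: int = PATCH_SIZE) -> Iterator[Tuple[int, int]]:
    """Yield the (row, col) top-left corner of every full, non-overlapping patch."""
    for row in range(0, height - patch + 1, patch):
        for col in range(0, width - patch + 1, patch):
            yield row, col


def extract_patches(image: Sequence[Sequence[float]],
                    patch: int = PATCH_SIZE) -> List[List[Sequence[float]]]:
    """Split a 2D image (a sequence of rows) into patch x patch tiles.

    Partial tiles at the right and bottom edges are dropped (an assumption).
    """
    height = len(image)
    width = len(image[0]) if height else 0
    return [
        [line[col:col + patch] for line in image[row:row + patch]]
        for row, col in patch_origins(height, width, patch)
    ]
```

Each tile could then be scored independently by a focus-quality model, which is what lets a patch-level classifier flag blurry regions within an otherwise sharp image.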

Here’s Where Google’s Research Differed In Technique:

- Instead of training the model on focal stacks of defocused images gathered from microscopes or training the model on manually labeled images, researchers used synthetically defocused versions of real images.

- This method enabled them to identify the absolute focus quality rather than relative measures of quality.

- Another key upside of the approach was that the researchers could generate a vast number of training examples needed for deep learning with just an in-focus image dataset

- Also, the researchers took advantage of the well-known behavior of light propagation in a microscope to achieve this.

- With this method, Google researchers also showed that out-of-focus images can be identified more accurately than with previous approaches, and that the model generalises to different image and cell types.

- While much research has already been done on automatic detection of low-quality images in photographic applications, researchers posit that microscope images differ from consumer photographic images. Most microscope images are shift and rotation invariant, and they vary in offset, pixel gain and dynamic range.

- The team integrated a pre-trained TensorFlow model with plugins in Fiji (ImageJ) and CellProfiler, open source scientific image analysis tools that can be used either through a graphical user interface or through scripts.
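The idea of producing labelled training data from in-focus images alone can be sketched as follows. This dependency-free Python illustration blurs an image with a uniform disk kernel, a common geometric-optics approximation of defocus. It is a stand-in and not the light-propagation model the researchers used, and the function names and blur radii are hypothetical.

```python
from typing import List, Sequence, Tuple

Image = List[List[float]]


def disk_kernel(radius: int) -> Image:
    """Return a uniform disk kernel of the given radius, normalised to sum to 1."""
    size = 2 * radius + 1
    kernel = [
        [1.0 if (y - radius) ** 2 + (x - radius) ** 2 <= radius ** 2 else 0.0
         for x in range(size)]
        for y in range(size)
    ]
    total = sum(map(sum, kernel))
    return [[value / total for value in row] for row in kernel]


def defocus(image: Sequence[Sequence[float]], radius: int) -> Image:
    """Blur a 2D image with a disk kernel, replicating edge pixels at the border."""
    kernel = disk_kernel(radius)
    height, width = len(image), len(image[0])
    out = [[0.0] * width for _ in range(height)]
    for y in range(height):
        for x in range(width):
            acc = 0.0
            for ky, kernel_row in enumerate(kernel):
                yy = min(max(y + ky - radius, 0), height - 1)
                for kx, weight in enumerate(kernel_row):
                    if weight:
                        xx = min(max(x + kx - radius, 0), width - 1)
                        acc += weight * image[yy][xx]
            out[y][x] = acc
    return out


def make_training_examples(image: Sequence[Sequence[float]],
                           radii: Sequence[int] = (0, 1, 2, 3)
                           ) -> List[Tuple[Image, int]]:
    """Pair each synthetically blurred copy with its blur radius as the label."""
    return [(defocus(image, radius), radius) for radius in radii]
```

Because the blur level is chosen by the code rather than estimated by a person, every generated example comes with an exact label, which is what makes an absolute (rather than relative) focus score trainable. In practice an array library would replace the nested loops.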

Outlook

Google has been at the forefront of numerous breakthroughs in image assessment and image recognition. In December 2017, the search giant introduced the Neural Image Assessment (NIMA) system, a technique that predicts the ratings humans would give an image, such as the mean score assigned when judging a photo. Where earlier approaches sorted images into just two categories, low and high quality, NIMA predicts a distribution of ratings, with the end goal of matching human perception. With continuous innovation in image assessment, Google’s quality assessment models will revolutionise not only fields like medical diagnostics but also biology and geosciences.