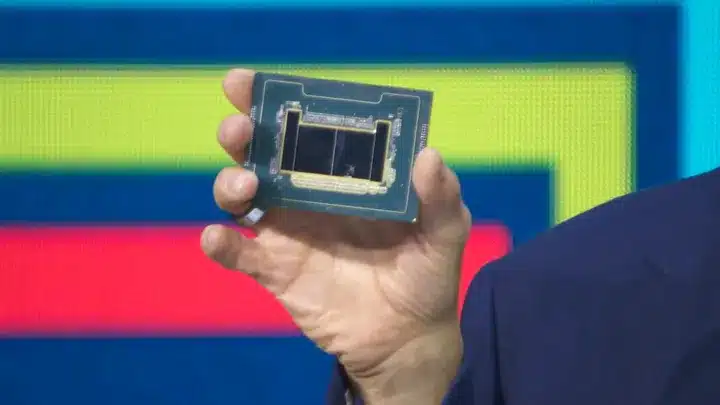

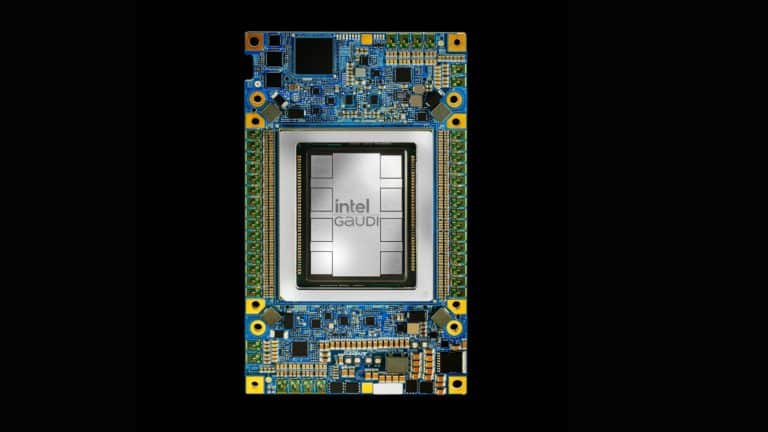

The computing and technology sector took centre stage at the ongoing CES 2019, and leading chipmaker Intel made a slew of announcements. One of the key announcements was a new class of chip aimed at speeding up inference for companies with heavy inference workloads. The chip, called the Nervana Neural Network Processor for Inference (NNP-I), marks Intel's push towards AI inference chips and has Facebook as one of its development partners. According to a statement by Intel, the company also expects to have a Neural Network Processor for Training, code-named "Spring Crest," available later this year.

According to a Research and Markets report, the global AI chips market is expected to grow by 39 percent by 2023. With enterprise AI becoming one of the fastest-growing market segments and AI chips increasingly being adopted in data centres, traditional chipmakers like Intel have invested significantly in AI chip development, especially on the inference side.

Late last year, Amazon announced its machine learning chip, AWS Inferentia, at its AWS re:Invent conference. Designed by an Amazon-owned Israeli company that builds semiconductor systems, the inference chip is geared towards large workloads. In an earlier article, we pointed out how the release of the inference chip signals AWS's intention to strengthen its hardware muscle and counter cloud competitors like Azure and Google Cloud Platform. Now, Intel is fighting a battle for market share by positioning itself as a leader in AI compute performance and winning over significant mindshare, turf that has so far clearly belonged to NVIDIA.

Intel Broadens Its Portfolio Of Products For The AI Landscape

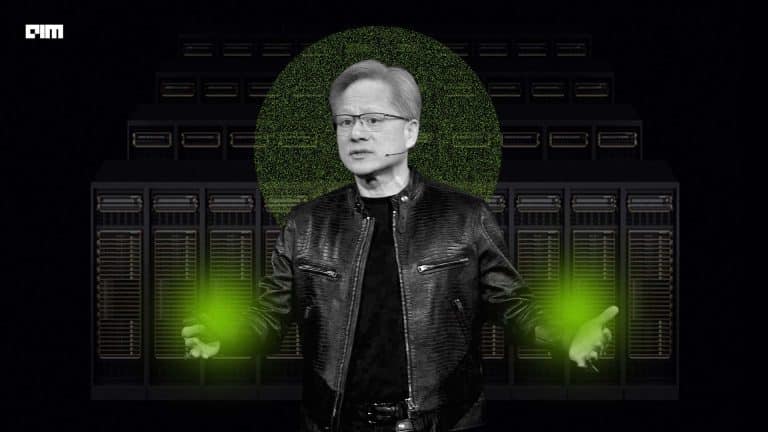

NNP-I to counter NVIDIA's dominance in the ML market: While NVIDIA leads in training, with its GPUs widely adopted across industries for training machine learning models, Google has emerged as another big player with TPUs, a type of ASIC designed specifically for deep learning. In the hotly contested chip market, Intel is looking to corner machine learning inference, a market which, according to analysts at Morningstar, is expected to reach $11.8 billion by 2021.

The move is in sync with Intel's strategy to broaden its AI portfolio: Intel has a robust AI roadmap, and the latest announcements at CES 2019 show the chipmaker's commitment to leading the machine learning market. According to one report, Intel's first move to compete with NVIDIA in the ML market came with its Xeon Phi architecture. That alone could not guarantee a larger market share, however, so the company invested in other architectures through a series of acquisitions in the computing space, most notably Mobileye for self-driving chips and Altera for FPGAs. Intel has also widened its focus to newer technologies and is reported to be working on neuromorphic chips and chips geared towards quantum computing.

Intel's inference chip has Facebook as a development partner: Given that the chip is designed to help enterprises and startups handle heavier workloads with accelerated inference, the collaboration signals an effort to broaden the chip's market prospects by bringing leading tech majors on board. In another collaboration, Chinese cloud giant Alibaba selected Intel's Xeon Scalable processors for their AI and memory capabilities. The two companies also announced a joint edge computing platform that will integrate Intel's hardware and AI capabilities with Alibaba Cloud's IoT products.

Intel, the bigger of the two giants, now latches on to newer technologies to unseat NVIDIA: Just as NVIDIA expanded its GPUs beyond gaming and rode the rise of machine learning and deep learning, Intel, with deep capabilities in server and edge computing, is now leaning on newer companies and talent to broaden its computing portfolio: self-driving (Mobileye), FPGAs (Altera), vision processing (Movidius) and a dedicated GPU segment, for which it hired Raja M Koduri from AMD to lead its Core and Visual Computing Group. Koduri's appointment signals Intel's intent to take on NVIDIA with its own dedicated graphics technology.

Outlook

With GPUs and AI processors dominating the larger market for machine learning computation, the chip race is set to become a two-horse race between the computing behemoths.