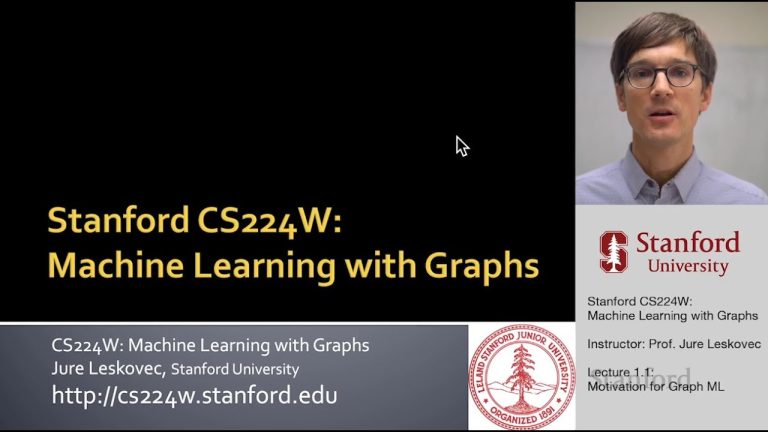

Over the last few years, AI has advanced significantly in the autonomous driving sector, and countries such as China and the United States have begun testing self-driving cars. Of the many challenges these cars face, one of the most significant is detecting or predicting an object approaching from around a corner. Anticipating objects moving towards the vehicle before or during a turn is crucial for the safety of the passengers inside. Researchers at Stanford University, together with artificial intelligence algorithms, have taken a step towards letting a camera ‘see’ an object that is around the corner or otherwise not directly visible.

This technology is not limited to helping self-driving cars see around corners; it also has security applications, including military use. Knowing where a threat is allows for better countermeasures. David Lindell, a Stanford University graduate, has pushed the limits of cameras so that, in future, AI systems could see something that is coming around the corner and is not in their direct line of sight.

How Does It Work?

In his TED Talk, David Lindell explained that these cameras use a laser to scan the side of a wall, such as the wall of a building, to build a 3D geometric model of the object or scene hidden around the corner. The research camera scans the part of the wall onto which light from the hidden scene falls. These cameras are fast enough to capture the movement of light itself, faster than ‘The Flash’.

Lindell and his fellow researchers paired this high-speed camera with a laser. The laser sends short pulses of light onto a wall facing the scene that is hidden around the corner, and the camera records the light that the scene scatters back onto the wall.

The light pulses scatter off the wall, and some of that light travels around the corner to the hidden scene. The scene scatters part of it back onto the wall, and a small fraction of those photons returns to the camera. Because so little light comes back, the measurement is repeated many times to build up a faint image.

In effect, the ultra-high-speed camera records individual photons directly: whatever light the hidden object scatters onto the wall is scattered again by the wall and captured by the camera.
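The bounce path described above implies a simple time-of-flight relation: the later a photon returns, the farther away the hidden surface that scattered it. The following is a minimal sketch, not the researchers' code. It assumes a confocal arrangement (laser and camera looking at the same spot on the wall) and an arrival time measured from the moment the pulse hits the wall; the names `C` and `hidden_distance` are illustrative only.

```python
# Speed of light in vacuum, in metres per second.
C = 299_792_458.0


def hidden_distance(arrival_time_s: float) -> float:
    """Estimate the distance from the wall spot to a hidden scatterer.

    Assumptions (not from the talk): the laser and camera share the same
    wall spot, and arrival_time_s is counted from the instant the pulse
    hits the wall, so the camera-to-wall leg is already removed.
    The light travels wall -> object -> wall, hence the division by two.
    """
    if arrival_time_s < 0:
        raise ValueError("arrival time must be non-negative")
    return C * arrival_time_s / 2.0


# A photon returning a few nanoseconds after the pulse hit the wall
# corresponds to a hidden surface roughly a metre away.
print(f"{hidden_distance(6.67e-9):.3f} m")
```

A real system also has to account for the camera-to-wall distance and for timing jitter in the detector, which this sketch ignores.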

Over numerous repetitions, the researchers record the arrival times of these photons and the positions on the wall they came from. These measurements can be used to create what David Lindell calls a ‘trillion frames per second’ video of the wall. Keep in mind that this video shows the wall, not the hidden scene itself; the camera is so fast that one can see waves of light from the hidden scene washing across the wall.
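To make the ‘trillion frames per second’ idea concrete, the sketch below bins photon arrival times for a single wall position into one-picosecond frames and works out how far light travels in one frame. It is an illustration under stated assumptions, not the team's pipeline: the bin width is simply taken from the ‘trillion frames per second’ figure, and `build_transient` and the sample arrival times are hypothetical.

```python
# Speed of light in vacuum, in metres per second.
C = 299_792_458.0

# One frame lasts one picosecond at a trillion frames per second.
BIN_WIDTH_S = 1e-12


def build_transient(arrival_times_s, n_bins, bin_width_s=BIN_WIDTH_S):
    """Count photons per time bin for one scanned wall position.

    Photons arriving before time zero or after the last bin are dropped.
    """
    counts = [0] * n_bins
    for t in arrival_times_s:
        index = int(t // bin_width_s)
        if 0 <= index < n_bins:
            counts[index] += 1
    return counts


# Hypothetical arrival times (seconds) collected over repeated laser pulses.
sample_times = [0.5e-12, 1.5e-12, 1.2e-12, 3.7e-12]
transient = build_transient(sample_times, n_bins=4)
print(transient)

# Distance light covers during a single frame, in millimetres.
frame_travel_mm = C * BIN_WIDTH_S * 1e3
print(f"light moves about {frame_travel_mm:.2f} mm per frame")
```

Repeating this for every scanned wall position gives one histogram per position, and stacking them yields the video of the wall that the reconstruction algorithm takes as input.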

These images and measurements are passed through a reconstruction algorithm, which recovers the 3D geometry of the hidden scene around the corner.

For this reconstruction, the researchers introduced a wave-based image formation model and used the f-k migration method.
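For readers unfamiliar with the name, f-k migration works in the frequency-wavenumber domain. As a rough sketch (the notation here is an assumption and is not taken from the talk): if the measurements are treated as a wave field that obeys the wave equation with propagation speed $v$, then the temporal frequency $f$ and the spatial wavenumbers $(k_x, k_y, k_z)$ are tied together by

```latex
f = v \sqrt{k_x^2 + k_y^2 + k_z^2}
```

In general terms, the Fourier transform of the measurements over wall position and time can then be resampled along the frequency axis onto $k_z$ using this relation, and an inverse Fourier transform gives an estimate of the hidden volume over $(x, y, z)$.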

Countering the Non-Line-Of-Sight (NLOS) Imaging Problem

NLOS imaging is a technique for recovering objects that lie outside the camera’s line of sight. A camera normally captures only what is directly in view, so occluded objects are lost. In this work, David Lindell and his team introduced a wave-based image formation model for the NLOS problem.

Though existing NLOS methods give good results, they come with some challenges.

The image formation and inversion models used by existing NLOS methods are slow and work only for a limited range of hidden surfaces. In addition, only a small amount of light makes it back to the detector, which results in high signal loss.

The wave-based image formation model is more robust to the reflective properties of different surfaces, and the powerful camera helps here too. The wave-based system also produces better-quality reconstructions and is easy to work with.

Outlook

This new study has many potential applications: not only self-driving cars, security and the military, but also robotic vision, medical imaging, remote sensing and other major domains. Work on helping cameras see around corners has been under way for years using other methods, such as backprojection and the light-cone transform, and this wave-based approach makes up for most of their limitations. It still faces challenges of its own: imaging scenes and objects at long distances, making efficient use of lasers that are low in power and safe for the eyes, and capturing photons that have bounced multiple times around the corner. It also remains to be seen whether the cameras can be made small and safe enough to be used inside the human body. With technologies like these, one has to wonder whether, once augmented with AI, they could take machines one step closer to the myth of surpassing humans.