With new technologies more accessible than ever, there has never been a better time to bring artificial intelligence into more fields of study. In medicine, it has the potential to pave the way for tools that serve clinicians and researchers by making training more effective.

One such tool emerged from research at the Neurosurgical Simulation and Artificial Intelligence Learning Centre at the Montreal Neurological Institute and McGill University Health Centre, where researchers used machine learning algorithms to guide virtual reality training platforms for neurosurgeons.

Since brain surgery leaves little margin for error, a lot depends on the skill of the neurosurgeon. The feedback provided by this platform helped to gather more insight into the surgeons' expertise.

ML Powered Neuro Expertise

The level of expertise in using miniature surgical tools (psychomotor skills) varies from surgeon to surgeon. The pressure applied to the brain must be kept low enough that damage is minimal. Algorithm-assisted training exposes areas for improvement, and surgeons can build their skills on this feedback.

For this study, a total of 50 individuals (14 neurosurgeons, 4 fellows, 10 senior residents, 10 junior residents, and 12 medical students) participated in 250 simulated tumour resections.

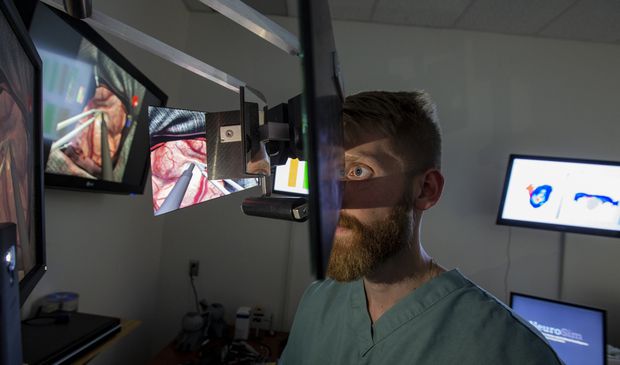

The simulator used for this experiment was the NeuroVR (CAE Healthcare), a high-fidelity neurosurgical simulator designed to recreate the visual and haptic experience of resecting a human brain tumour through an operative microscope.

To evaluate the surgeons' performance, metrics were grouped by the force applied to the underlying structures, damage to the underlying brain, blood loss, and quantity of tumour removed. A surgeon's level of expertise can then be estimated from how machine learning models classify them on these metrics.

Factors such as the way the tip of the instrument accelerates play a crucial role in determining the expertise. So, in the first step of the experiment, raw data were transformed into performance metrics to be used by the algorithm.

This process includes transforming instrument movement from the original x, y, and z coordinates into three-dimensional representations of velocity, acceleration, and jerk. The rate of change in the volume of tumour and healthy tissue, the rate of change of bleeding, and the number of attempts to stop bleeding were also generated.
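
The transformation from positions to velocity, acceleration, and jerk can be illustrated with repeated finite differences. This is only a minimal sketch, not the study's actual pipeline: the sample positions, the sampling interval `dt`, and the helper names `finite_difference` and `magnitude` are all assumptions made for illustration.

```python
from math import sqrt

def finite_difference(samples, dt):
    """Forward difference of (x, y, z) tuples sampled every dt seconds."""
    return [
        tuple((b - a) / dt for a, b in zip(p0, p1))
        for p0, p1 in zip(samples, samples[1:])
    ]

def magnitude(vec):
    """Euclidean length of a 3D vector."""
    return sqrt(sum(c * c for c in vec))

# Hypothetical tool-tip positions (not data from the study), assumed interval of 0.5 s.
dt = 0.5
positions = [
    (0.0, 0.0, 0.0),
    (1.0, 0.0, 0.0),
    (3.0, 0.0, 0.0),
    (6.0, 0.0, 0.0),
    (10.0, 0.0, 0.0),
]

velocity = finite_difference(positions, dt)      # first derivative of position
acceleration = finite_difference(velocity, dt)   # second derivative
jerk = finite_difference(acceleration, dt)       # third derivative

print([magnitude(v) for v in velocity])
print([magnitude(a) for a in acceleration])
print([magnitude(j) for j in jerk])
```

Each differencing step shortens the series by one sample. The same idea applies to scalar signals such as tumour volume or bleeding, where the rate of change is the difference between consecutive readings divided by `dt`.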

The results show that the K-nearest neighbour algorithm had an accuracy of 90% (45 of 50), the naive Bayes algorithm had an accuracy of 84% (42 of 50), the discriminant analysis algorithm had an accuracy of 78% (39 of 50), and the support vector machine algorithm had an accuracy of 76% (38 of 50).
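
As a quick check, the reported percentages follow directly from the counts of correctly classified participants out of 50. The short sketch below only reproduces that arithmetic from the figures quoted above; the variable names are illustrative.

```python
# Correctly classified participants per algorithm, as reported in the article.
correct = {
    "K-nearest neighbour": 45,
    "naive Bayes": 42,
    "discriminant analysis": 39,
    "support vector machine": 38,
}
total = 50  # number of participants

# Accuracy as a percentage of all participants.
accuracy = {name: 100 * n / total for name, n in correct.items()}
best = max(accuracy, key=accuracy.get)

for name, pct in accuracy.items():
    print(f"{name}: {pct:.0f}%")
print("Highest accuracy:", best)
```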

“Physician educators are facing increased time pressure to balance their commitment to both patients and learners,” says Dr Rolando Del Maestro, the lead author of the study.

The researchers, however, warn against jumping to conclusions until these models have been tested on novel data to check for overfitting. They suggest that a more comprehensive evaluation of participants, with an emphasis on demonstrated skills across assessment domains (e.g., visual rating scales and training evaluations, or assessment of visuospatial abilities), may result in improved algorithm performance.
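
One common way to estimate how a classifier behaves on participants it has not seen is leave-one-out evaluation: each participant is held out in turn and predicted from the rest. The sketch below applies it to a tiny K-nearest neighbour classifier. It is an illustration under stated assumptions, not the study's code: the two features, the labels, and the functions `knn_predict` and `leave_one_out_accuracy` are hypothetical.

```python
from collections import Counter

def knn_predict(train, query, k=3):
    """Majority label among the k training points closest to query."""
    nearest = sorted(
        train,
        key=lambda item: sum((a - b) ** 2 for a, b in zip(item[0], query)),
    )[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]

def leave_one_out_accuracy(data, k=3):
    """Hold out each sample once and predict it from the remaining ones."""
    hits = 0
    for i, (features, label) in enumerate(data):
        rest = data[:i] + data[i + 1:]
        hits += knn_predict(rest, features, k) == label
    return hits / len(data)

# Hypothetical (mean force, mean jerk) pairs with made-up labels.
data = [
    ((0.10, 0.20), "expert"), ((0.20, 0.10), "expert"),
    ((0.15, 0.25), "expert"), ((0.25, 0.20), "expert"),
    ((0.80, 0.90), "novice"), ((0.90, 0.80), "novice"),
    ((0.85, 0.75), "novice"), ((0.75, 0.90), "novice"),
]

print(leave_one_out_accuracy(data))
```

Held-out accuracy that falls well below accuracy on the training data is a typical sign of overfitting. Testing on data from entirely new participants or sites, as the researchers call for, is a stronger check than resampling within one cohort.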

AI For A Clinical Future

Although the study involved a complex neurosurgical tumour resection task, the protocol outlined can be applied to any digitized platform to assess performance in a setting in which technical skills are paramount.

The researchers firmly believe that by understanding the performance data used by the algorithm to render its decision, it is possible to design systems to deliver on-demand assessments at the convenience of the examinee and with minimal input from skilled instructors. Such systems may be subject to continuous improvement as increasing participant data are collected and integrated into the algorithm.

Simulators, while affording learners the opportunity to safely develop technical skills during the particularly dangerous and error-prone early phases of skill acquisition, do not obviate the need for learner feedback, which is often given by skilled instructors.

AI in critical areas should be considered only an augmentation of traditional methods, not a tool to promote their abandonment. Human assistance in achieving clinical expertise is unparalleled, and it is safe to say it will remain so.