PyTorch has become popular within organisations for developing deep learning products. But the lack of a dedicated PyTorch model server made it hard to build, scale, secure, and manage models in production, which kept companies from going all in. A robust model server loads one or more models and automatically generates a prediction API backed by a scalable web server. It also offers production-critical features such as logging, monitoring, and security.

Until now, TensorFlow Serving and Multi-Model Server catered to the needs of developers in production, but there was no model server that could effectively manage PyTorch workflows, which hindered users. To simplify model deployment, Facebook and Amazon collaborated to release TorchServe, a PyTorch model serving library that helps deploy trained PyTorch models at scale without having to write custom code.

TorchServe & TorchElastic

Motivated by a request from Alex Wong on GitHub, Facebook and AWS released the much-needed service for PyTorch users. TorchServe will be available as part of the PyTorch open-source project. Users can bring their models to production more quickly behind a low-latency prediction API, and can rely on the default handlers provided for the most common applications, such as object detection and text classification.

TorchServe also includes multi-model serving, model versioning for A/B testing, monitoring metrics, and RESTful endpoints for application integration. Developers can use the model server in various machine learning environments, including Amazon SageMaker, container services, and Amazon EC2 (Elastic Compute Cloud).
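To make the RESTful prediction endpoint and model versioning concrete, here is a minimal client sketch using only the Python standard library. It assumes a TorchServe instance is already running locally on its default inference port (8080) and that the model's handler returns JSON; the model name `densenet161` and the helper names `prediction_url` and `predict` are illustrative, not part of TorchServe itself.

```python
import json
import urllib.request
from typing import Optional

# Assumption: TorchServe is listening on its default local inference address.
INFERENCE_ADDRESS = "http://localhost:8080"


def prediction_url(model_name: str, version: Optional[str] = None,
                   base: str = INFERENCE_ADDRESS) -> str:
    """Build the prediction URL, optionally pinned to one model version."""
    url = f"{base.rstrip('/')}/predictions/{model_name}"
    if version is not None:
        url += f"/{version}"
    return url


def predict(model_name: str, payload: bytes,
            version: Optional[str] = None, timeout: float = 10.0):
    """POST raw input bytes (for example an image file) and decode the reply.

    Assumes the model's handler answers with a JSON body.
    """
    request = urllib.request.Request(
        prediction_url(model_name, version), data=payload, method="POST"
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

With a server running, a call such as `predict("densenet161", open("kitten.jpg", "rb").read())` would send a local image (a hypothetical file) for classification. Passing `version="2.0"` targets one registered version, which is the hook an A/B test would use to split traffic between versions.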

You can install TorchServe using the pip package manager:

pip install torch torchtext torchvision sentencepiece

pip install torchserve torch-model-archiver

Follow the link to get started with TorchServe.
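Once a server is installed and running, models are added and inspected through a separate management endpoint, which is what makes multi-model serving possible. The sketch below is a rough illustration under stated assumptions: the management API is on its default local port (8081), a model archive named `densenet161.mar` (hypothetical) already sits in the server's model store, and the helper names are invented for this example.

```python
import json
import urllib.parse
import urllib.request

# Assumption: TorchServe's management API is on its default local address.
MANAGEMENT_ADDRESS = "http://localhost:8081"


def register_model_url(archive: str, initial_workers: int = 1,
                       base: str = MANAGEMENT_ADDRESS) -> str:
    """Build the URL that registers a model archive and starts its workers."""
    query = urllib.parse.urlencode(
        {"url": archive, "initial_workers": initial_workers}
    )
    return f"{base.rstrip('/')}/models?{query}"


def register_model(archive: str, initial_workers: int = 1,
                   timeout: float = 60.0):
    """POST the registration request and return the server's JSON reply."""
    request = urllib.request.Request(
        register_model_url(archive, initial_workers), method="POST"
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


def list_models(base: str = MANAGEMENT_ADDRESS, timeout: float = 10.0):
    """GET the list of models currently registered on the server."""
    with urllib.request.urlopen(f"{base.rstrip('/')}/models",
                                timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Calling `register_model("densenet161.mar")` followed by `list_models()` would register the archive and confirm it is being served. Keeping management on its own port lets operators restrict who can change the set of deployed models without touching the prediction endpoint.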

Increasing Adoption Of PyTorch Within Companies

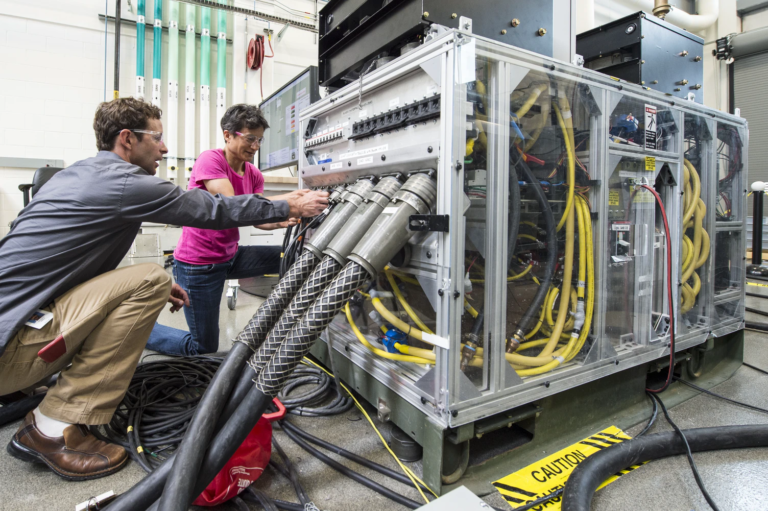

Many blue-chip companies use PyTorch for their machine learning products. One notable user is Toyota Research Institute Advanced Development (TRI-AD), which can now simplify model deployment and management in production using TorchServe.

“Due to the absence of PyTorch’s model server, we were spending significant engineering effort in creating and maintaining software for deploying PyTorch models to our fleet of vehicles and cloud servers. However, with TorchServe, now we have a lightweight model server that is helping us continuously optimize our computer vision model effortlessly,” said Yusuke Yachide, Lead of ML Tools at TRI-AD.

The framework by Facebook saw widespread adoption even without a model server, thanks to advantages such as ease of use, readability, and distributed training. With the new PyTorch 1.5, which further focused on speed for applications such as gaming and 3D graphics design, it is poised to gain momentum among developers.

“Our team and the community has pushed PyTorch forward in recent years. A large number of researchers and enterprise users are leveraging AWS. Therefore, we collaborated with AWS and pushed PyTorch together,” said Bill Jia to TechCrunch.

Facebook has been enhancing PyTorch and making it more reliable for enterprise use. More recently, it also announced TorchElastic’s integration with Kubernetes. This will enable developers to train models on a cluster of compute nodes that can change dynamically without disrupting the training workflow. In addition, TorchElastic’s built-in fault tolerance keeps training running even if nodes go down.

Outlook

Although the service is still an experimental release, TorchServe has opened up a clear path to deploying PyTorch models for scalable production. However, the service will need further enhancement to match TensorFlow Serving, which is a flexible and high-performance end-to-end serving system that can extend to a wide range of frameworks.

TensorFlow has a considerable user base and an exceptional community, with more than 80.9k forks and 2,474 contributors on GitHub, whereas PyTorch has only 9.7k forks and 1,374 contributors on the platform. However, continuous advancement in the framework is allowing PyTorch to cover new ground and become the go-to framework for all machine learning initiatives.