As technologies like machine learning and AI power a new generation of smart industries, including smart homes, smart retail, and smart cars, among others, they have made lives easier through intelligent interaction with these devices. Intel has been a pioneer in developing solutions around emerging technologies, as two of its recent announcements show.

AWS announces “DeepLens”, a deep learning enabled wireless video camera powered by Intel

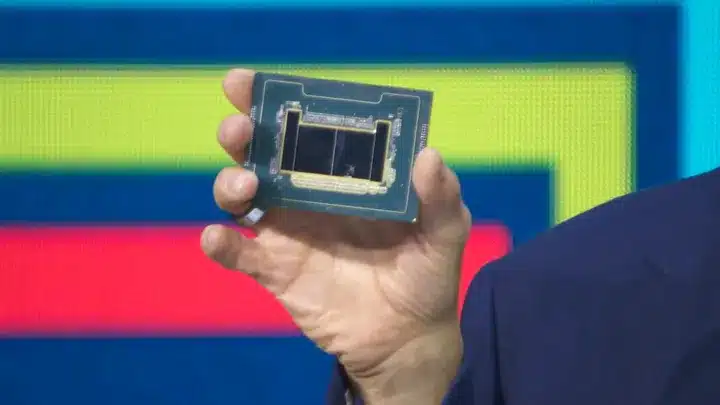

At the recently held AWS re:Invent, Amazon Web Services announced DeepLens, a fully programmable, deep learning enabled wireless video camera designed for developers. Revealed during AWS CEO Andy Jassy’s keynote at the company’s annual conference in Las Vegas, DeepLens gives builders tools to design and create artificial intelligence and machine learning products. Produced in collaboration with Intel, it is designed to suit programmers of all skill levels.

With this development, Intel is reinforcing its commitment to provide developers with tools to create AI and machine learning products. It recently introduced the Intel Speech Enabling Developer Kit, which provides a complete audio front-end solution for far-field voice control and makes it easier for third-party developers to accelerate the design of consumer products integrating Alexa Voice Service.

DeepLens combines substantial processing power with an easy-to-learn user interface to support the training and deployment of models in the cloud. It is powered by an Intel® Atom® X5 processor with embedded graphics that support object detection and recognition. Developers can start designing and creating AI and machine learning products in a matter of minutes using the preconfigured frameworks already on the device.

It uses Intel-optimized deep learning software tools and libraries to run real-time computer vision models directly on the device for reduced cost and real-time responsiveness.

“We are seeing a new wave of innovation throughout the smart home, triggered by advancements in artificial intelligence and machine learning,” said Miles Kingston, general manager of the Smart Home Group at Intel. “DeepLens brings together the full range of Intel’s hardware and software expertise to give developers a powerful tool to create new experiences, providing limitless potential for smart home integrations.”

Intel, in partnership with Warner Bros, announces development around autonomous cars of the future

As driverless cars inch towards reality, they have become a new consumer space to tap for opportunities. Brian Krzanich, chief executive officer of Intel Corporation, wrote on the company blog that autonomous driving is today’s biggest game changer, offering a new platform for innovation from in-cabin design and entertainment to life-saving safety systems.

To that end, Intel and Warner Bros will develop in-cabin, immersive experiences in autonomous vehicle (AV) settings. The partnership was announced at the recently held Los Angeles Auto Show.

“Called the AV Entertainment Experience, we are creating a first-of-its-kind proof-of-concept car to demonstrate what entertainment in the vehicle could look like in the future. As a member of the Intel 100-car test fleet, the vehicle will showcase the potential for entertainment in an autonomous driving world,” he said. The company believes that the AV industry is going to create one of the greatest expansions of consumer time available for entertainment, including video-viewing time.

However, the technology Intel brings is not simply about enjoying the ride but about saving lives too. Intel is also collaborating with the industry and policymakers on how safety performance is measured and interpreted for autonomous cars. It believes that setting rules for fault in advance will bolster public confidence and clarify liability risks for consumers and the automotive and insurance industries.

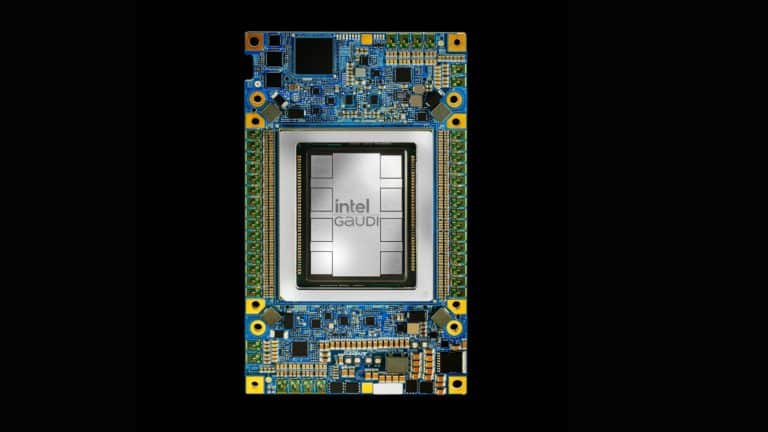

Already, Intel and Mobileye, a developer of advanced driver assistance systems (ADAS), have proposed a formal mathematical model called Responsibility-Sensitive Safety (RSS) to ensure that, from a planning and decision-making perspective, the autonomous vehicle system will not issue a command leading to an accident. Safety systems of the future will rely on highly efficient technologies to handle the enormous amount of data processing required for artificial intelligence.

“Earlier this year, we closed our deal with Mobileye, the world’s leader in ADAS and creator of algorithms that can reach better-than-human-eye perception through a camera. Now, with the combination of the Mobileye ‘eyes’ and the Intel microprocessor ‘brain,’ we can deliver more than twice the deep learning performance efficiency than the competition,” he said.

“From entertainment to safety systems, we view the autonomous vehicle as one of the most exciting platforms today and just the beginning of a renaissance for the automotive industry.”