Hugging Face is one of the most promising companies in the world. It has set out to achieve a unique feat: to become the GitHub for machine learning. Over the last few years, the company has open-sourced a number of libraries and tools, especially in the NLP space. Now, the company has integrated Decision Transformer, an offline reinforcement learning method, into the transformers library and the Hugging Face Hub.

What are Decision Transformers

Decision Transformers were first introduced by Chen L. and co-authors in the paper ‘Decision Transformer: Reinforcement Learning via Sequence Modeling’. The paper introduced a framework that casts reinforcement learning as a sequence modelling problem. Unlike previous approaches, which fit value functions or compute policy gradients, Decision Transformers simply output the optimal actions by leveraging a causally masked Transformer. By conditioning an autoregressive model on the desired return, past states, and actions, a Decision Transformer can generate future actions that achieve that return. The authors concluded that, despite its simple design, the model matches or exceeds the performance of state-of-the-art model-free offline reinforcement learning baselines on Atari, OpenAI Gym, and Key-to-Door tasks.
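To make the idea of conditioning on a desired return more concrete, here is a minimal sketch of how the target could be tracked while an agent acts: the remaining return is reduced by each reward received, and the updated value is what the model would be conditioned on at the next step. The function names and the example rewards are illustrative assumptions, not part of the Hugging Face API, and no model is involved.

```python
def update_return_to_go(return_to_go, reward):
    """Subtract the reward just received from the return still to be achieved."""
    return return_to_go - reward


def rollout_targets(target_return, rewards):
    """List the return-to-go value used as conditioning at each timestep.

    `rewards` stands in for what the environment would hand back after
    each action (hypothetical values in this sketch).
    """
    targets = []
    remaining = target_return
    for reward in rewards:
        targets.append(remaining)            # value the model is conditioned on now
        remaining = update_return_to_go(remaining, reward)
    return targets


# Hypothetical episode: ask for a total return of 10 and observe these rewards.
observed_rewards = [1.0, 0.0, 4.0, 5.0]
conditioning = rollout_targets(10.0, observed_rewards)
print(conditioning)  # [10.0, 9.0, 9.0, 5.0]
```

In a real rollout, each reward would only become known after the model's predicted action has been executed, so the list would be built one step at a time.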

Decision Transformer architecture

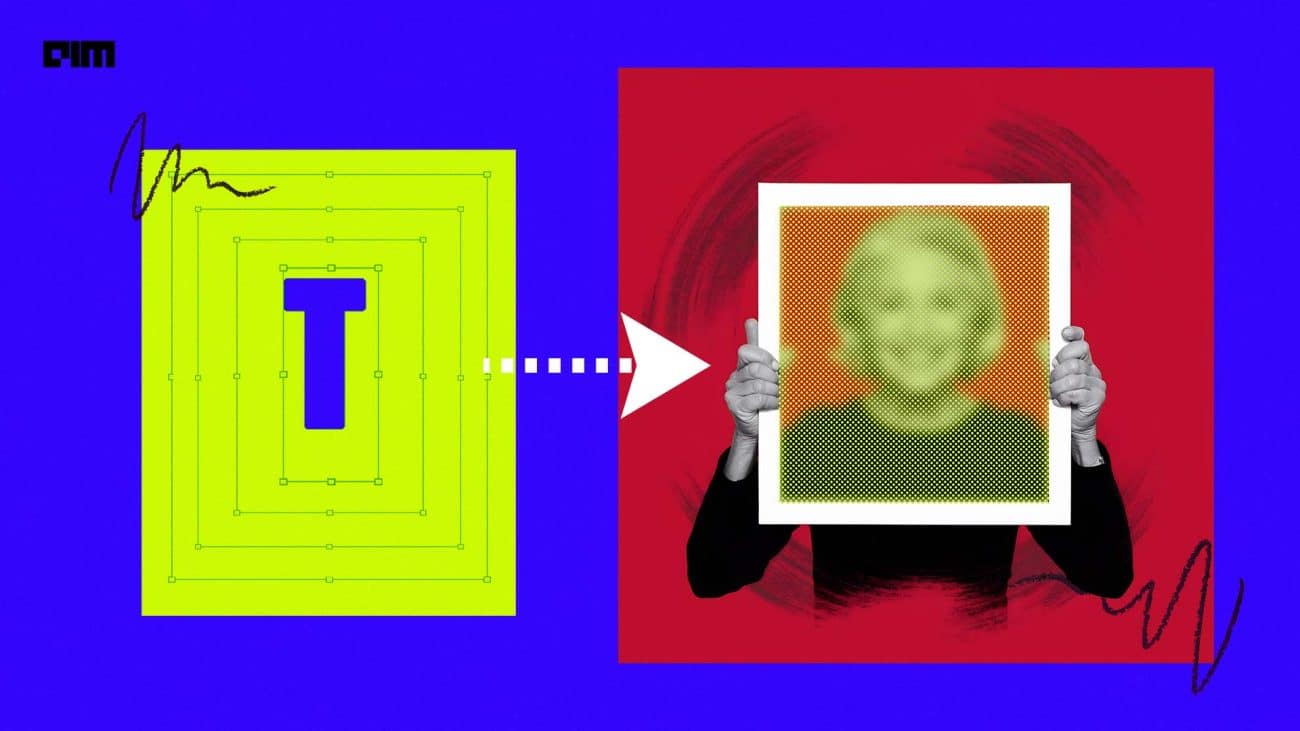

The idea behind using a sequence modelling algorithm is that, instead of training a policy with reinforcement learning methods to suggest actions that maximise the return, Decision Transformers generate future actions based on a set of desired parameters. This is a shift in the reinforcement learning paradigm, since generative trajectory modelling replaces conventional reinforcement learning algorithms. The main steps are: feeding the last K timesteps into the Decision Transformer as three inputs (return-to-go, state, action); embedding the tokens with a linear layer if the state is a vector, or with a CNN encoder if it is a frame; and processing the inputs with a GPT-2 model that predicts future actions through autoregressive modelling.
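The input layout described above can be sketched in a few lines of plain Python. This is only an illustration under stated assumptions: the function names are hypothetical, states and actions are placeholder strings, and the embedding layers and the GPT-2 model are left out entirely. It shows how the last K timesteps are interleaved as (return-to-go, state, action) tokens and what a causal mask over those tokens looks like.

```python
def build_token_sequence(returns_to_go, states, actions, context_length):
    """Interleave (return-to-go, state, action) for the last K timesteps."""
    steps = list(zip(returns_to_go, states, actions))
    window = steps[-context_length:]          # keep only the last K timesteps
    tokens = []
    for rtg, state, action in window:
        tokens.append(("return_to_go", rtg))
        tokens.append(("state", state))
        tokens.append(("action", action))
    return tokens


def causal_mask(num_tokens):
    """Lower-triangular mask: token i may attend to tokens 0..i only."""
    return [
        [1 if col <= row else 0 for col in range(num_tokens)]
        for row in range(num_tokens)
    ]


# Hypothetical four-step trajectory, with a context length of K = 2.
tokens = build_token_sequence(
    returns_to_go=[10.0, 9.0, 9.0, 5.0],
    states=["s0", "s1", "s2", "s3"],
    actions=["a0", "a1", "a2", "a3"],
    context_length=2,
)
mask = causal_mask(len(tokens))

print(len(tokens))  # 6 tokens: three per timestep
print(tokens[0])    # ('return_to_go', 9.0)
print(mask[1])      # [1, 1, 0, 0, 0, 0]
```

Because of the mask, the position that predicts an action can see the current return-to-go and state but nothing that comes later in the sequence.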

Offline reinforcement learning

Reinforcement learning is a framework for building decision-making agents that learn optimal behaviour by interacting with the environment through trial and error. The ultimate goal of an agent is to maximise the cumulative reward, called the return. Reinforcement learning rests on the reward hypothesis: every goal can be described as the maximisation of the expected cumulative reward. Most reinforcement learning techniques are geared towards the online learning setting, where agents interact with the environment and gather information using the current policy and exploration schemes to find higher-reward areas. The drawback of this method is that the agent has to be trained either directly in the real world or in a simulator. If a simulator is not available, one has to be built, which is a very complex process. Simulators may also have flaws that agents can exploit to gain a competitive advantage.

This problem does not arise in offline reinforcement learning. Here, the agent only uses data collected from other agents or human demonstrations, without interacting with the environment. Offline reinforcement learning thus provides a way to utilise previously collected datasets from sources like human demonstrations, prior experiments, and domain-specific solutions.
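As a rough illustration of learning from logged data alone, the sketch below turns one previously recorded trajectory into training windows without ever calling an environment. The trajectory contents, the dictionary layout, and the function names are assumptions made for this example; real datasets will be structured differently.

```python
def returns_to_go(rewards):
    """For each timestep, sum the rewards from that step to the end of the episode."""
    total = 0.0
    suffix_sums = []
    for reward in reversed(rewards):
        total += reward
        suffix_sums.append(total)
    suffix_sums.reverse()
    return suffix_sums


def training_windows(trajectory, context_length):
    """Slice a logged trajectory into consecutive windows of K timesteps."""
    rtg = returns_to_go(trajectory["rewards"])
    steps = list(zip(rtg, trajectory["states"], trajectory["actions"]))
    last_start = len(steps) - context_length
    return [steps[i:i + context_length] for i in range(last_start + 1)]


# Hypothetical logged episode, e.g. from a human demonstration.
logged_trajectory = {
    "states": ["s0", "s1", "s2", "s3"],
    "actions": ["a0", "a1", "a2", "a3"],
    "rewards": [1.0, 0.0, 4.0, 5.0],
}

windows = training_windows(logged_trajectory, context_length=2)
print(returns_to_go(logged_trajectory["rewards"]))  # [10.0, 9.0, 9.0, 5.0]
print(len(windows))                                 # 3
print(windows[0])  # [(10.0, 's0', 'a0'), (9.0, 's1', 'a1')]
```

Everything here is computed from the stored states, actions, and rewards, which is what distinguishes the offline setting from the online one.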

GitHub for machine learning

Hugging Face’s startup journey has been nothing short of phenomenal. The company, which started as a chatbot, has gained massive attention from the industry in a very short period; big companies like Apple, Monzo, and Bing use its libraries in production. Hugging Face’s Transformers library is backed by PyTorch and TensorFlow, and it offers thousands of pretrained models for tasks like text classification, summarisation, and information retrieval.

In September last year, the company released Datasets, a community library for contemporary NLP, which contains 650 unique datasets and has more than 250 contributors. With Datasets, the company aims to standardise the end-user interface, versioning, and documentation. This sits well with the company’s larger vision of democratising AI, which would extend the benefits of emerging technologies, otherwise concentrated in a few powerful hands, to smaller players.