OpenAI has unveiled Sora, a text-to-video generation model. It can generate videos up to a minute long while maintaining visual quality and adherence to the user’s prompt.

> Introducing Sora, our text-to-video model.
>
> Sora can create videos of up to 60 seconds featuring highly detailed scenes, complex camera motion, and multiple characters with vibrant emotions. https://t.co/7j2JN27M3W
>
> Prompt: “Beautiful, snowy… pic.twitter.com/ruTEWn87vf
>
> OpenAI (@OpenAI), February 15, 2024

OpenAI’s Sora is designed to understand and simulate complex scenes, featuring multiple characters, specific motions, and intricate details of the subject and background. The model not only interprets user prompts accurately but also ensures the persistence of characters and visual style throughout the generated video.

One of Sora’s standout features is its ability to take existing still images and breathe life into them, animating the content with precision and attention to detail. Additionally, it can extend or fill in missing frames in an existing video, showcasing its versatility in manipulating visual data.

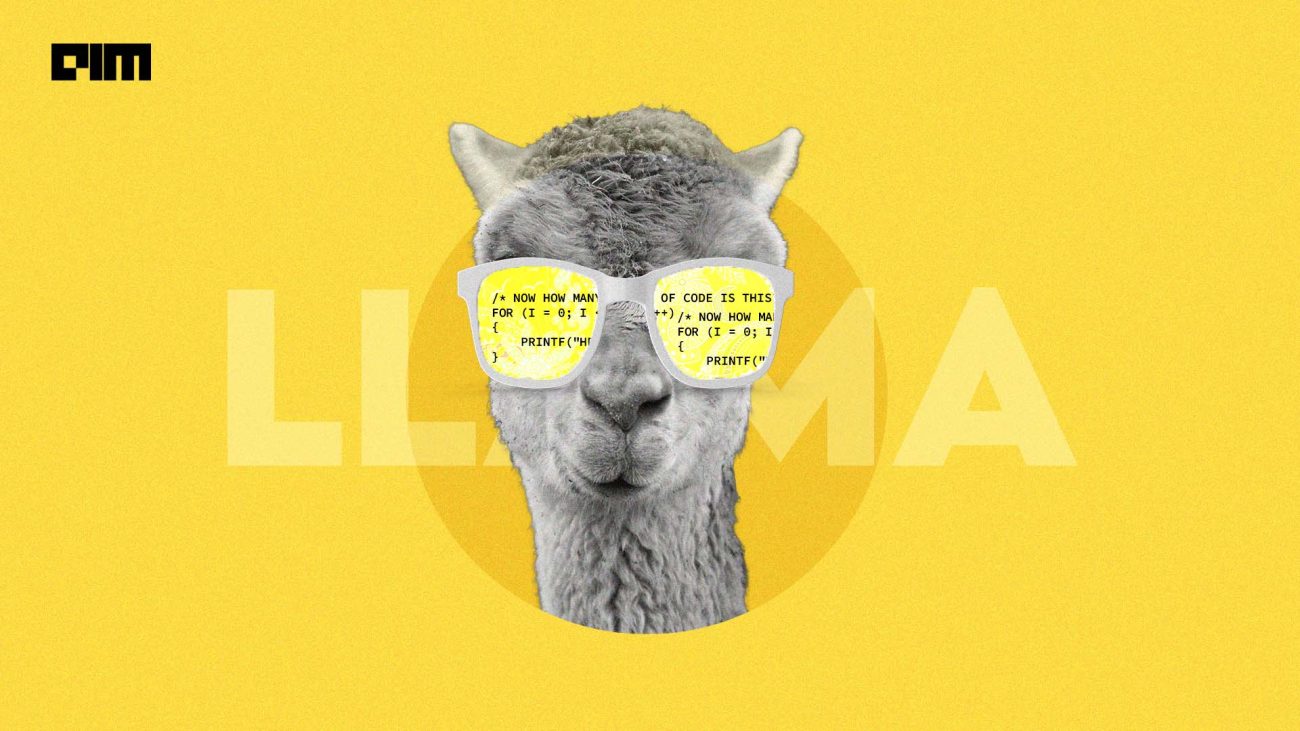

Sora builds on past research in DALL·E and GPT models. It uses the recaptioning technique from DALL·E 3, which involves generating highly descriptive captions for the visual training data.

While Sora’s capabilities are impressive, OpenAI acknowledges certain weaknesses, such as challenges in accurately simulating the physics of complex scenes and occasional confusion regarding spatial details in prompts.

OpenAI is taking proactive safety measures, engaging with red teamers to assess potential harms and risks. The company is also developing tools to detect misleading content generated by Sora and plans to include metadata for better transparency.

For now, Sora will be available to red teamers and select creative professionals. The company aims to gather feedback from diverse users to refine and enhance Sora, ensuring its responsible integration into various applications.

The team behind Sora is led by Tim Brooks and Bill Peebles, both research scientists at OpenAI, and Aditya Ramesh, the creator of DALL·E and the head of videogen.

The unveiling of Sora follows Google’s recent release of Lumiere, a text-to-video diffusion model designed to synthesise videos with realistic, diverse, and coherent motion. Unlike existing models, Lumiere generates the entire video in a single, consistent pass, using its Space-Time U-Net architecture.

Google today also released Gemini 1.5. The new model surpasses ChatGPT and Claude in context length, with a context window of up to 1 million tokens, the largest yet seen in natural language processing models. By comparison, GPT-4 Turbo has a 128K-token context window and Claude 2.1 has a 200K-token context window.

Gemini 1.5 can process vast amounts of information in one go, including 1 hour of video, 11 hours of audio, codebases with over 30,000 lines of code, or over 700,000 words.
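To put those figures side by side, here is a minimal Python sketch of the arithmetic. It uses only the numbers quoted above and assumes that "128K" and "200K" mean 128,000 and 200,000 tokens. The function names are illustrative, not part of any vendor API.

```python
# Context window sizes in tokens, as quoted in the article.
# Assumption: "K" is read as 1,000 (so 128K = 128,000 tokens).
CONTEXT_WINDOWS = {
    "Gemini 1.5": 1_000_000,
    "Claude 2.1": 200_000,
    "GPT-4 Turbo": 128_000,
}


def ratio_to(model: str, baseline: str) -> float:
    """Return how many times larger `model`'s window is than `baseline`'s."""
    return CONTEXT_WINDOWS[model] / CONTEXT_WINDOWS[baseline]


def implied_words_per_token(words: int, tokens: int) -> float:
    """Return the average words per token implied by a words/tokens pair."""
    return words / tokens


if __name__ == "__main__":
    for baseline in ("GPT-4 Turbo", "Claude 2.1"):
        factor = ratio_to("Gemini 1.5", baseline)
        print(f"Gemini 1.5 vs {baseline}: {factor:.1f}x the context window")

    # "Over 700,000 words" in a 1 million token window.
    rate = implied_words_per_token(700_000, CONTEXT_WINDOWS["Gemini 1.5"])
    print(f"Implied words per token: {rate:.2f}")
```

On these figures, the Gemini 1.5 window is 5 times the size of Claude 2.1's and roughly 7.8 times the size of GPT-4 Turbo's. The 700,000-word figure implies about 0.7 words per token, though the real ratio depends on the tokenizer and the text.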