
Rocket Capital Investment (RCI), in association with MachineHack, successfully completed the longest blockchain tournament on Sep 5, 2022. The goal was to incentivise the best in machine learning applications for finance.

Headquartered in Singapore, licensed financial institution RCI combines its financial expertise with external machine learning forecasts through a blockchain tournament on financial markets. Through this competition, RCI aimed to use a decentralised platform to source and incentivise the best machine learning applications for the finance industry.

From the many entries received, only the best of the lot made it to the top. Analytics India Magazine spoke to some of the best performers to understand their data science journey, winning approach, and overall experience at MachineHack.

Let’s look at the ones that impressed the judges with their data skills.

Manish Pathak – Senior data scientist

Pathak is a BITS Pilani graduate who started exploring data science in his pre-final year. With the advanced mathematics he learned in college and his experience handling data at scale with big-data tools, he was naturally inclined to contribute to the data science community.

Winning Approach

Each week, the training dataset was structured numeric data with 2,000+ features and about one lakh (100,000) observations. The target was continuous. Throughout the competition, Pathak trained different regressors on the dataset with Spearman correlation as the metric. These were mainly tree-based boosting regressors such as XGBoost, CatBoost and LightGBM; in a few of the weekly challenges he also trained Random Forest and neural network models.

Because the dataset was large, training time mattered: LightGBM and XGBoost trained faster than CatBoost. He tuned the hyper-parameters using Bayesian optimisation methods, skipping k-fold CV because time was a constraint.

Since the dataset was time-based, he used the most recent data (around 10%) as his validation set. Pathak then used a weighted average of the predictions from the different regressors to optimise Spearman’s correlation and checked the ranking of predictions by sorting.
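The validation scheme described above can be sketched in plain Python. This is only an illustration under stated assumptions: the helper names (`time_holdout`, `spearman`, `blend`), the toy numbers and the example weights are hypothetical, and the article does not say how Pathak actually searched for his blend weights.

```python
from math import sqrt


def time_holdout(rows, holdout_frac=0.10):
    """Split rows sorted oldest-to-newest; the newest slice becomes the validation set."""
    n_val = max(1, int(round(len(rows) * holdout_frac)))
    return rows[:-n_val], rows[-n_val:]


def average_ranks(values):
    """Return 1-based ranks; tied values share the mean of the positions they occupy."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def spearman(a, b):
    """Spearman correlation, computed as the Pearson correlation of the rank vectors."""
    ra, rb = average_ranks(a), average_ranks(b)
    n = len(ra)
    mean_a, mean_b = sum(ra) / n, sum(rb) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(ra, rb))
    var_a = sum((x - mean_a) ** 2 for x in ra)
    var_b = sum((y - mean_b) ** 2 for y in rb)
    if var_a == 0 or var_b == 0:
        return float("nan")  # undefined when one input is constant
    return cov / sqrt(var_a * var_b)


def blend(predictions, weights):
    """Weighted average of several models' prediction lists."""
    total = sum(weights)
    return [
        sum(w * p[i] for w, p in zip(weights, predictions)) / total
        for i in range(len(predictions[0]))
    ]


# Toy validation targets and two models' predictions (made-up numbers).
y_val = [0.1, 0.4, 0.2, 0.9, 0.5]
pred_a = [0.2, 0.3, 0.1, 0.8, 0.6]
pred_b = [0.0, 0.5, 0.4, 0.7, 0.3]
score = spearman(y_val, blend([pred_a, pred_b], [0.6, 0.4]))
```

Because Spearman correlation depends only on the ordering of the predictions, any rescaling of the blended output that preserves order leaves the score unchanged.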

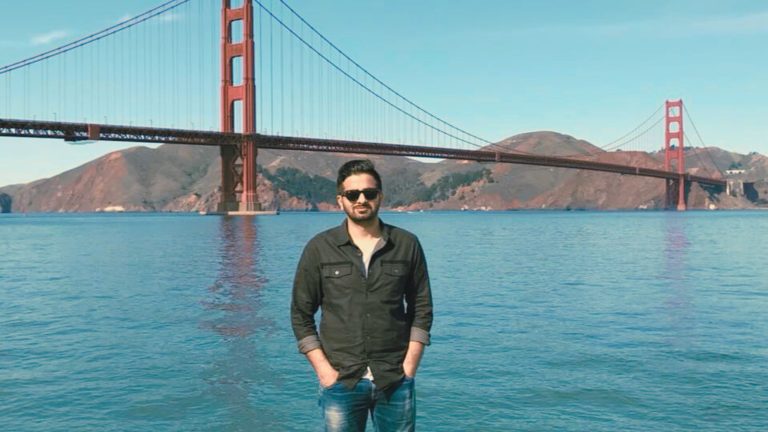

Saurabh Sawhney – Data science consultant

Data science fascinated Sawhney even before he heard the term. After many years of practice as an eye surgeon, he decided to wield the keyboard. His current area of interest is Computer Vision applications. Apart from trying his hand at various hackathons, he mentors AI/ML students on the weekends.

Winning Approach

Sawhney started by evaluating feature importance and testing different models with different numbers of features. He found that the top 160-200 features were enough to capture the information in the data.
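As a rough illustration of that selection step, the sketch below keeps the k highest-scoring features from a table of importance scores. The function name, feature names and scores are all hypothetical; the article does not say which importance measure Sawhney used.

```python
def top_k_features(importances, k):
    """Return the k feature names with the largest importance scores."""
    ranked = sorted(importances, key=importances.get, reverse=True)
    return ranked[:k]


# Made-up importance scores for four features.
importances = {"f1": 0.02, "f2": 0.30, "f3": 0.11, "f4": 0.07}
selected = top_k_features(importances, k=2)
```

In practice one would repeat this for several values of k (for example 160, 180 and 200) and compare the validation score of a model retrained on each subset.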

He evaluated various models before finally deciding on an ensemble of Random Forest and XGBoost. Since the target variable is essentially sequential, he also experimented with various time-series models, but the results of those experiments were not satisfactory.

To account for some influence of each coin’s position in the previous week, he calculated this value for all coins where possible. He then combined it with the ensemble prediction using a weighted mean, assigning 4% weight to the coin’s previous value and 96% to the ensemble prediction.
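The arithmetic of that final blend is simple enough to write down. In the sketch below, the function name is hypothetical, and falling back to the pure ensemble prediction when a coin has no previous-week value is an assumption; the article only says the value was calculated "where possible".

```python
def blend_with_last_value(ensemble_pred, last_value=None, last_weight=0.04):
    """Mix the ensemble prediction with last week's value using a weighted mean."""
    if last_value is None:
        # Assumption: coins without a previous value keep the ensemble prediction.
        return ensemble_pred
    return last_weight * last_value + (1.0 - last_weight) * ensemble_pred


# Example: ensemble predicts 0.50 and last week's value was 1.00.
final = blend_with_last_value(0.50, last_value=1.00)  # 0.04 * 1.00 + 0.96 * 0.50
```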

Andrey Bessalov – Data scientist

After completing his studies in mathematics a decade ago, Bessalov started working as a data scientist. He has participated in ML hackathons on competition platforms for two years and has learned much from them. His most memorable competitions are Renew Power, Dare in Reality Hackathon 2021 and Rocket Capital Crypto Forecasting.

Winning Approach

1) Dataset preparation:

● Bessalov took the most recent three months as the validation set;

● For the training set, he took all earlier periods, leaving a one-month gap (holdout) before the validation set. For example, one can choose 2022-06-01 to 2022-09-01 for the validation set and the first available month to 2022-05-01 for the training set, and so on.
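The split with a gap can be sketched using the example dates above. The function name and the toy rows are hypothetical, and treating the end dates as exclusive bounds is an assumption, since the article does not say which side of each boundary is included.

```python
from datetime import date


def split_with_gap(rows, train_end, val_start, val_end):
    """Split (day, record) pairs into training and validation sets.

    Rows dated from train_end up to (not including) val_start fall in the
    gap and are used by neither set.
    """
    train = [r for r in rows if r[0] < train_end]
    val = [r for r in rows if val_start <= r[0] < val_end]
    return train, val


# One made-up observation in the middle of each month, January to August 2022.
rows = [(date(2022, m, 15), f"obs-{m}") for m in range(1, 9)]
train, val = split_with_gap(
    rows,
    train_end=date(2022, 5, 1),
    val_start=date(2022, 6, 1),
    val_end=date(2022, 9, 1),
)
```

With these dates the May observation is dropped, January to April go to training, and June to August form the validation set. A gap of this kind is commonly used to reduce leakage between adjacent time periods.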

2) Features:

When Bessalov trained the final model, he used all numerical features – 2010 in total.

3) Model:

He trained an XGBoost model with early stopping on the validation set and the following parameters:

'objective': 'reg:squarederror',

'eta': 0.05,

'max_depth': 6, # Maximum depth of each tree.

'subsample': 0.7, # Subsample ratio of the training instances.

'colsample_bytree': 0.7, # Subsample ratio of columns when constructing each tree.

'reg_alpha': 0, # L1 regularization term on weights

'reg_lambda': 0, # L2 regularization term on weights
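For readers who want to try these settings, here is a hedged sketch of how they might be passed to XGBoost's native training API. The parameter values come from the list above; the function name, `num_boost_round=2000` and `early_stopping_rounds=50` are assumptions, since the article does not give Bessalov's values for them.

```python
# Parameter values as listed in the article.
PARAMS = {
    "objective": "reg:squarederror",
    "eta": 0.05,               # learning rate
    "max_depth": 6,            # maximum depth of each tree
    "subsample": 0.7,          # row subsample ratio per tree
    "colsample_bytree": 0.7,   # column subsample ratio per tree
    "reg_alpha": 0,            # L1 regularization term on weights
    "reg_lambda": 0,           # L2 regularization term on weights
}


def train_with_early_stopping(X_train, y_train, X_val, y_val,
                              num_boost_round=2000, early_stopping_rounds=50):
    """Train a booster, stopping once the validation metric stops improving."""
    # Imported lazily so PARAMS can be inspected without xgboost installed.
    import xgboost as xgb

    dtrain = xgb.DMatrix(X_train, label=y_train)
    dval = xgb.DMatrix(X_val, label=y_val)
    return xgb.train(
        PARAMS,
        dtrain,
        num_boost_round=num_boost_round,          # assumed upper bound on rounds
        evals=[(dval, "validation")],
        early_stopping_rounds=early_stopping_rounds,  # assumed patience
        verbose_eval=False,
    )
```

Note that with this objective, early stopping monitors XGBoost's default regression metric rather than Spearman correlation, so the competition metric would still need to be checked separately on the validation set.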

Approaches tested:

● He fixed the validation set and searched for the training window (the number of months preceding the validation set) that gives the best Spearman correlation score.

● He explored features by calculating a stability index and then tried removing features that were unstable under different criteria.

● He tried training different models and then stacking them linearly (taking all possible linear combinations with a 0.01 step):

○ XGBoost

○ Random Forest

○ Linear models
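The linear stacking search in the last bullet can be sketched as an exhaustive grid over blend weights. This sketch assumes the weights are non-negative and sum to 1, which the article does not state; the function names and toy numbers are hypothetical, and the Spearman helper uses the classic shortcut formula, which is exact only when there are no tied values.

```python
from itertools import product


def simplex_weights(n_models, step=0.01):
    """Yield every weight tuple on the grid whose entries sum to 1."""
    units = round(1 / step)
    for head in product(range(units + 1), repeat=n_models - 1):
        rest = units - sum(head)
        if rest >= 0:
            yield tuple(u / units for u in head + (rest,))


def spearman_no_ties(a, b):
    """Spearman via 1 - 6 * sum(d^2) / (n * (n^2 - 1)); assumes no tied values."""
    def ranks(v):
        order = sorted(range(len(v)), key=lambda i: v[i])
        r = [0] * len(v)
        for pos, idx in enumerate(order):
            r[idx] = pos
        return r

    ra, rb = ranks(a), ranks(b)
    n = len(a)
    d2 = sum((x - y) ** 2 for x, y in zip(ra, rb))
    return 1 - 6 * d2 / (n * (n * n - 1))


def best_linear_stack(predictions, y_true, metric, step=0.01):
    """Try every grid weighting and keep the one with the highest metric."""
    best_score, best_weights = float("-inf"), None
    for weights in simplex_weights(len(predictions), step):
        blended = [
            sum(w * p[i] for w, p in zip(weights, predictions))
            for i in range(len(y_true))
        ]
        score = metric(y_true, blended)
        if score > best_score:
            best_score, best_weights = score, weights
    return best_weights, best_score


# Made-up validation targets and predictions from three models.
y_val = [0.1, 0.4, 0.2, 0.9, 0.5]
preds = [
    [0.2, 0.3, 0.1, 0.8, 0.6],   # e.g. XGBoost
    [0.0, 0.5, 0.4, 0.7, 0.3],   # e.g. Random Forest
    [0.3, 0.2, 0.6, 0.5, 0.4],   # e.g. a linear model
]
weights, score = best_linear_stack(preds, y_val, spearman_no_ties)
```

For three models and a 0.01 step the grid has 5,151 weightings, so the search stays cheap; the cost grows quickly as more models are added.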

Out-of-the-box solutions and a high degree of skill

The CryptoPrediction Challenge saw participants bring out-of-the-box solutions to the innovative problem they were presented with. The high level of skill on display made the challenge a huge success.