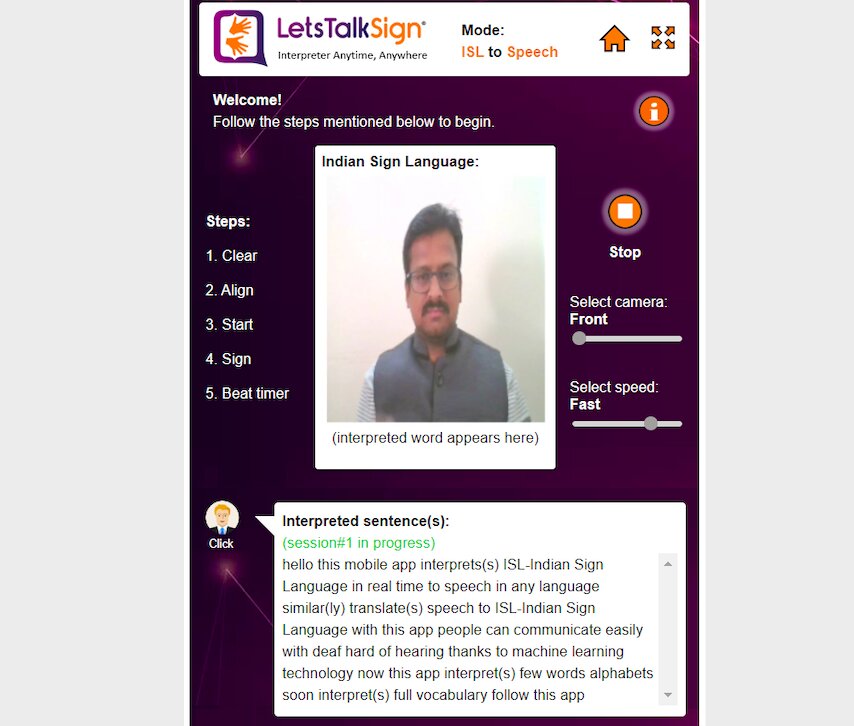

LetsTalkSign, developed by an Indian startup called DeepVisionTech.AI, is an AI-powered, device-agnostic solution that enables easy two-way communication for people with hearing/speech impairment.

Hearing loss may be on the rise, according to some WHO estimates, and more people are using sign language to communicate. In 2018, more than 466 million people (6.1% of the world’s population) had some form of hearing impairment. This could rise to 900 million by 2050, with as many as one in every 10 people affected. Closer to home, close to 10% of people in India (~100m) have some level of functional hearing loss.

Affected people face numerous challenges in their day-to-day lives. In some cases, these include denial of their right to education because of the lack of schools teaching in sign languages. Research says that more than 90% of hearing-impaired people are either uneducated or school drop-outs. They may also have to deal with a massive lack of job opportunities, as well as social and workplace isolation.

How Can Technology Help?

According to experts, the speech solutions available today for hearing/speech-impaired communities are incomplete, non-scalable, and dependent on special hardware/software, which makes them inconvenient for day-to-day use. Also, the existing systems do not provide customization features such as creating a person’s digital voice for reading interpreted text aloud, or creating an animated avatar from a voice description for displaying sign gestures.

Even the few systems that do not require additional hardware for sign language recognition fall short on detection accuracy, or use techniques that cannot scale to multiple sign languages (Indian Sign Language, American Sign Language, etc.) or to variations such as finger-spelling, word gestures, and phrase gestures. Moreover, these systems require an internet connection or backend services for interpretation.

This is where the LetsTalkSign platform can add tremendous value, by giving hearing/speech-impaired persons a comprehensive tool to communicate and to break the stigma and language barrier. LetsTalkSign has been developed by DeepVisionTech.AI, a budding social-enterprise tech startup that specializes in AI/ML technologies.

LetsTalkSign converts sign language gestures to text and speech in any language, and vice versa, in real time. It does not require an internet connection and works on any device with minimal processing power.

“LetsTalkSign is the proof that ‘AI for Accessibility’ isn’t just a slogan but a reality…we are here to leverage the power of technology to empower hearing/speech impaired communities and transform their lives,” said Jayasudan Munsamy, Founder of DeepVisionTech.AI and creator of LetsTalkSign.

DeepVisionTech.AI was conceptualized in late 2018 and established in mid-2019 with a handful of solutions for a variety of verticals: disability (hearing/speech impairment), agriculture (remote sensing for crop health monitoring), entertainment (gestures as a new user interface for apps and games) and healthcare (identifying diabetic retinopathy from OCT scans). Its patented computer vision-based solutions and techniques are targeted at giving businesses a competitive advantage.

LetsTalkSign: The Solution

To address the issues described above, Jayasudan Munsamy, the founder of DeepVisionTech.AI, described the technology behind LetsTalkSign. The solution is an artificial intelligence-powered, device-agnostic platform that enables easy two-way communication between people with hearing/speech impairment who use sign language and others who do not know sign language.

Some of the techniques used in LetsTalkSign are: Computer Vision (CV) for video/image recognition to identify sign language signs/gestures; Generative Adversarial Networks (GANs) for speech/text to sign language translation and synthetic voice creation; and Natural Language Processing (NLP) to form sentences after the signs have been interpreted.
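To make the sign-to-speech direction concrete, here is a minimal Python sketch of how such stages might be chained. It is not DeepVisionTech.AI's implementation: the article does not describe the real interfaces, so `SignToSpeechPipeline`, `classify_frame`, `compose`, `synthesize`, and the step that collapses repeated per-frame labels are all hypothetical, and the stages are stubs rather than trained models.

```python
from dataclasses import dataclass
from itertools import groupby
from typing import Callable, List, Sequence, Tuple

Frame = bytes  # placeholder type for one camera frame (assumption)


def collapse_repeats(labels: Sequence[str]) -> List[str]:
    """Merge consecutive identical per-frame labels into a single gloss."""
    return [label for label, _ in groupby(labels)]


def naive_compose(glosses: Sequence[str]) -> str:
    """Toy stand-in for the NLP stage: join glosses into one sentence."""
    if not glosses:
        return ""
    words = " ".join(g.lower() for g in glosses)
    return words[0].upper() + words[1:] + "."


@dataclass
class SignToSpeechPipeline:
    # All three stage interfaces are hypothetical, for illustration only.
    classify_frame: Callable[[Frame], str]   # CV stage: frame -> sign label
    compose: Callable[[Sequence[str]], str]  # NLP stage: glosses -> sentence
    synthesize: Callable[[str], bytes]       # speech stage: sentence -> audio

    def run(self, frames: Sequence[Frame]) -> Tuple[str, bytes]:
        labels = [self.classify_frame(f) for f in frames]
        sentence = self.compose(collapse_repeats(labels))
        return sentence, self.synthesize(sentence)


# Stub stages so the sketch runs without any model or audio engine.
fake_labels = {b"f1": "HELLO", b"f2": "HELLO", b"f3": "FRIEND"}
pipeline = SignToSpeechPipeline(
    classify_frame=lambda frame: fake_labels[frame],
    compose=naive_compose,
    synthesize=lambda text: text.encode("utf-8"),  # bytes stand in for audio
)
sentence, audio = pipeline.run([b"f1", b"f2", b"f3"])
print(sentence)
```

Keeping each stage behind a plain callable means a recognizer for another sign language, or a different voice, could be swapped in without touching the rest of the chain.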

Key functionalities of LetsTalkSign include:

- Supports location-based customization of sign language interpretation: depending on the user’s location, interpretation of that location’s sign language ‘variation’ is enabled automatically in the system.

- Performs offline interpretation of sign language gestures, video to speech/text and vice versa.

- Supports multi-user conferencing over the internet: users can communicate using sign language, speech or text, and the corresponding translations happen on the users’ devices.

- Can be easily customized to recognize context-specific or domain-specific words, phrases or sentences. For example, interpretation of banking or finance-related terms can be enabled so that the system can be used in banks or financial institutions.
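The location-based and domain-specific customization in the list above can be pictured as a small configuration step. The Python sketch below is an illustration under stated assumptions, not the product's actual design: the lookup tables, the `InterpreterConfig` class, the `build_config` function, and the choice of a fallback language are all hypothetical.

```python
from dataclasses import dataclass
from typing import FrozenSet, Iterable

# Hypothetical lookup tables; a real system would ship its own data.
REGION_TO_SIGN_LANGUAGE = {"IN": "ISL", "US": "ASL", "GB": "BSL"}

DOMAIN_VOCABULARY = {
    "general": frozenset({"hello", "thank you", "help"}),
    "banking": frozenset({"account", "loan", "deposit"}),
}


@dataclass(frozen=True)
class InterpreterConfig:
    sign_language: str          # which sign language variation to interpret
    vocabulary: FrozenSet[str]  # words/phrases the recognizer should cover


def build_config(
    country_code: str,
    domains: Iterable[str] = (),
    fallback_language: str = "ISL",  # assumed default, not from the article
) -> InterpreterConfig:
    """Pick a sign language from the location and merge domain vocabularies."""
    language = REGION_TO_SIGN_LANGUAGE.get(country_code.upper(), fallback_language)
    vocabulary = set(DOMAIN_VOCABULARY["general"])  # always include general terms
    for domain in domains:
        if domain not in DOMAIN_VOCABULARY:
            raise ValueError(f"unknown domain: {domain!r}")
        vocabulary |= DOMAIN_VOCABULARY[domain]
    return InterpreterConfig(language, frozenset(vocabulary))


# Example: a bank branch in India enables the banking vocabulary.
config = build_config("in", domains=["banking"])
print(config.sign_language, sorted(config.vocabulary))
```

Treating vocabulary as data rather than code is one way a deployment could add a new domain, such as finance, without changing the interpreter itself.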

The solution is device-agnostic, meaning it can be used across smartphones, tablets, laptops, PCs, TVs, Raspberry Pi boards, etc. The coolest thing is that inference happens on the device in real time, and the system is easily extendable to interpret other sign languages. According to Jayasudan, it also supports a customizable vocabulary to cover multiple domains and industry verticals, and no additional hardware is required: just a camera and minimal processing power are needed to make it work. No internet connection is required, as all the capabilities are built into the app.

“Solutions available today for hearing/speech impaired communities are incomplete, inaccurate, non-scalable, dependent on special hardware/software and inconvenient for day-to-day use with ease. Also, the existing systems do not provide any customization features like creating a person’s digital voice for reading out aloud the interpreted text and creating animated avatar from voice description for displaying sign gestures,” explained Jayasudan.

Here is a short demo of LetsTalkSign.

So, can it really help hearing/speech-impaired persons? We interacted with a hearing/speech-impaired person who had used the app. We could easily understand what that person said without any knowledge of his sign language, which suggests the solution could be revolutionary for millions of people with hearing/speech problems.

“I have not seen an app like this. Very easy to use on a mobile phone wherever I need,” said the hearing/speech-impaired user.

DeepVisionTech.AI’s Roadmap For LetsTalkSign

For 2020, most of the focus will be on gesture-enabling solutions, mainly the LetsTalkSign platform. According to the founder, the startup plans to finish building all the key functionalities, run pilots, and start acquiring customers in select domains over the next two quarters.

“By the end of 2020, we should start covering direct customers, as well as small and medium business customers. Also, our plan is to kick-off GesturizeMe solutions for the entertainment and gaming industry by the end of 2020. For 2021, we plan to extend LetsTalkSign’s vocabulary to multiple domains and approach large B2B customers. Solutions for different sectors – like agriculture and healthcare – will also be completed and rolled out soon,” said Jayasudan Munsamy.

DeepVisionTech.AI believes that ‘collaboration rather than competition’ is the way forward for any ecosystem, and hence welcomes collaboration with other social enterprises in providing this service to the hearing/speech-impaired community.