At its annual I/O developer conference this year, Google took us on a tour of the future of artificial intelligence and what it holds for us. Unlike last year’s hardware-centric announcements, I/O 2018 focused more on AI and on how to get the most out of Android devices. Prior to the keynote, the California-based company rebranded the whole of its Google Research division as Google AI, to focus more on research and development in computer vision, natural language processing and neural networks.

At Shoreline Amphitheatre in Mountain View, California, Google CEO Sundar Pichai kicked off the company’s annual I/O developer conference by saying, “For someone like me who grew up without a phone, I understand how gaining access to technology can make a difference in your lives. There are very real and important questions being raised about the impact of these advances and the role they’ll play in our lives… We feel a deep sense of responsibility to get this right. That’s the spirit with which we are approaching our core mission to make information more useful, accessible and beneficial to society.”

“AI is enabling us to solve problems in new ways for our users around the world. With Google AI, we want to bring the AI benefits to everyone and we are opening AI centres around the world. AI is going to impact many fields,” Pichai added.

Let’s take a look at the big announcements that Google made in the Day 1 keynote. The tech giant will unveil more announcements for developers, covering APIs, SDKs, frameworks and more, over the next couple of days.

1. Predicting Cardiovascular Risk Using AI

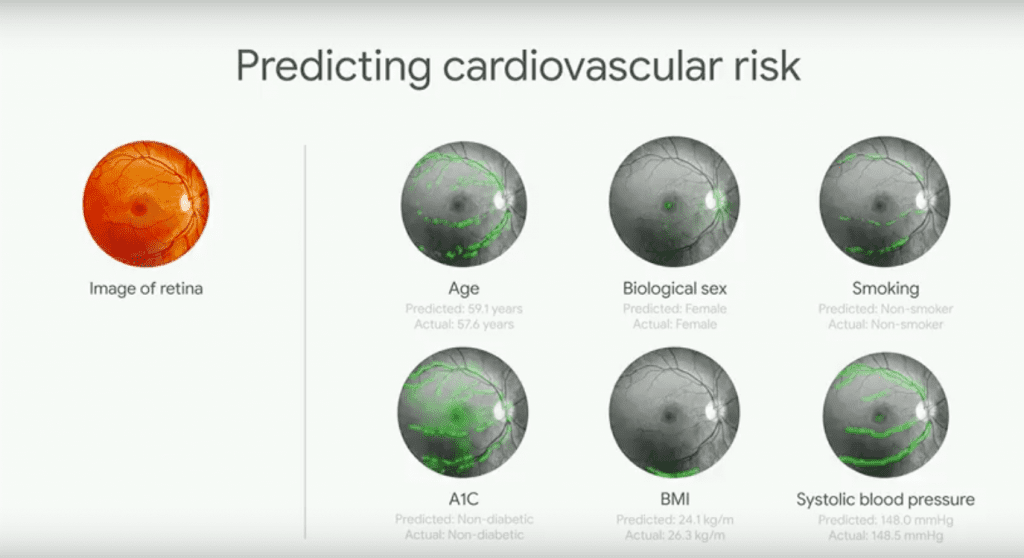

Google AI is making huge improvements in healthcare. Last year, Google announced its work on using deep learning to help doctors diagnose diabetic retinopathy, a leading cause of blindness, at an earlier stage. The company has been running field trials since then at the Aravind and Sankara hospitals in India.

The same retinal scans can also play an important role in predicting cardiovascular risk. Google’s AI can predict your five-year risk of an adverse cardiovascular event such as a heart attack or stroke. The technology is also bringing expert diagnosis to places where doctors are scarce. Google’s machine learning system can pick up on things that humans don’t. The company has published a paper on how AI is changing medicine.

2. AI For Accessibility

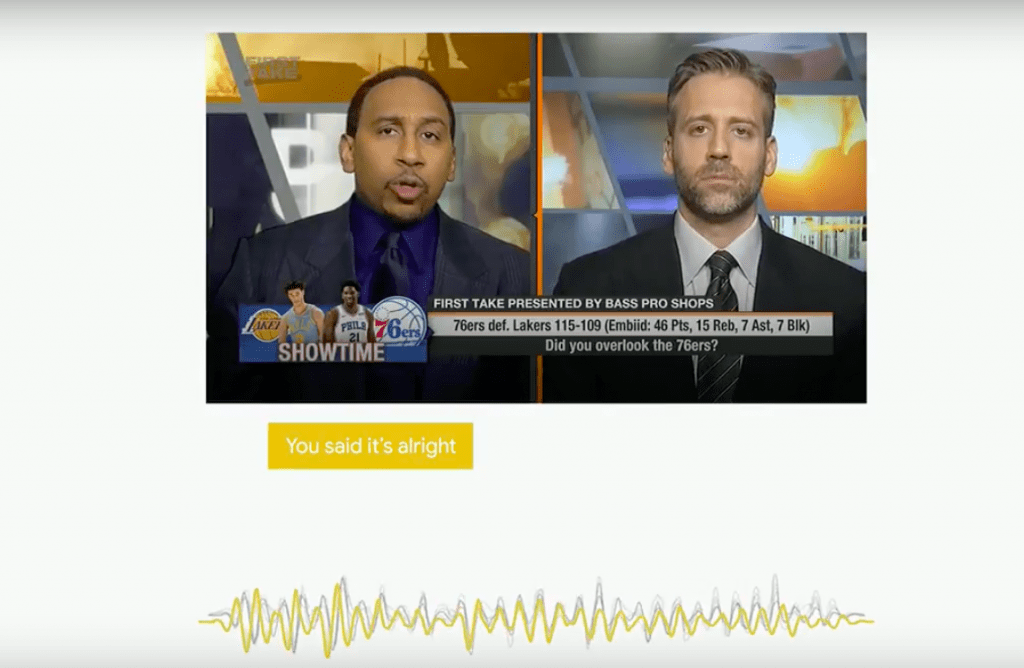

Looking To Listen

Pichai also showcased how AI can be used to write subtitles during debates. Google’s Looking To Listen is an audio-visual speech separation tool that uses ML to isolate one person’s audio in a video in which two people are debating or talking at the same time.

3. Morse Code Meets Machine Learning

Google is adding Morse code support to its Gboard keyboard. Morse code for Gboard comes with settings that allow users to customise the keyboard to fit their particular needs. It can be used alongside Switch Access, which allows users to interact with Google’s keyboard through external devices. It will also include Google’s AI-driven text suggestions. The company released a trainer that will help users learn how to communicate with Morse code. Google also released a Morse poster so that users can learn Morse code more easily, as well as a text-to-speech app that incorporates Morse code.
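To make the idea of typing in Morse code concrete, here is a minimal Python sketch of how text maps to dots and dashes and back. It is not Gboard’s implementation; the `encode` and `decode` helpers, the single-space letter separator and the ` / ` word separator are assumptions chosen for illustration. Only the International Morse code table for letters and digits is standard.

```python
# Illustrative sketch only: not how Gboard works internally.
# International Morse code for the letters A-Z and the digits 0-9.
MORSE = {
    "A": ".-", "B": "-...", "C": "-.-.", "D": "-..", "E": ".",
    "F": "..-.", "G": "--.", "H": "....", "I": "..", "J": ".---",
    "K": "-.-", "L": ".-..", "M": "--", "N": "-.", "O": "---",
    "P": ".--.", "Q": "--.-", "R": ".-.", "S": "...", "T": "-",
    "U": "..-", "V": "...-", "W": ".--", "X": "-..-", "Y": "-.--",
    "Z": "--..",
    "0": "-----", "1": ".----", "2": "..---", "3": "...--", "4": "....-",
    "5": ".....", "6": "-....", "7": "--...", "8": "---..", "9": "----.",
}
# Reverse lookup: Morse symbol -> character.
REVERSE = {code: char for char, code in MORSE.items()}


def encode(text: str) -> str:
    """Encode text as Morse: letters separated by spaces, words by ' / '.

    Characters without an entry in the table (punctuation, for example)
    are silently skipped in this sketch.
    """
    words = text.upper().split()
    return " / ".join(
        " ".join(MORSE[char] for char in word if char in MORSE)
        for word in words
    )


def decode(signal: str) -> str:
    """Decode Morse produced by encode() back into upper-case text."""
    words = signal.strip().split(" / ")
    return " ".join(
        "".join(REVERSE[symbol] for symbol in word.split())
        for word in words
    )


if __name__ == "__main__":
    message = encode("Hi Google")
    print(message)
    print(decode(message))
```

A keyboard that accepts only two inputs, dot and dash, can reach every letter this way, which is why Morse code pairs well with the external switch devices mentioned above.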

Watch Tania Finlayson’s Morse code story

4. Gmail Can Now Write Emails For You

Google announced a new feature for Gmail called Smart Compose. Powered by AI, it helps you draft emails from scratch. From your greeting to your closing sentence, Smart Compose suggests complete sentences so that you can draft emails with ease. It operates in the background and offers phrase suggestions as you type. Just hit Tab to accept a suggestion and the text will auto-populate. Google will roll out Smart Compose to consumers over the next few weeks and bring it to G Suite customers within the next few months. When it becomes available for consumers, make sure you have enabled the new Gmail in order to use it.

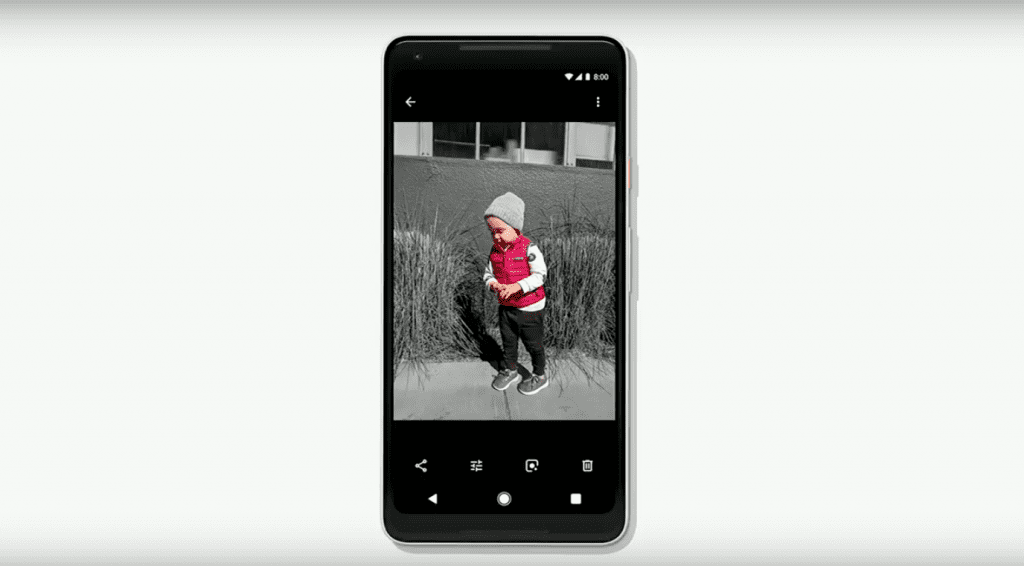
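The suggest-as-you-type, accept-with-Tab interaction described above can be sketched in a few lines of Python. This is a toy under stated assumptions: the article does not describe the AI behind Smart Compose, so the sketch swaps in a plain prefix lookup over a hypothetical phrase list. The names `PHRASES`, `suggest` and `accept` are invented for illustration.

```python
# Toy sketch of the interaction only. The real Smart Compose is powered by AI;
# this stand-in just matches what was typed against a fixed phrase list.
# PHRASES is a hypothetical list invented for this example.
PHRASES = [
    "Hope you are doing well.",
    "Looking forward to hearing from you.",
    "Thanks for your help.",
    "Let me know if you have any questions.",
]


def suggest(typed: str, phrases: list[str] = PHRASES) -> str:
    """Return the text that would complete `typed`, or '' if nothing matches.

    The match is a case-insensitive prefix comparison against each phrase.
    """
    if not typed.strip():
        return ""
    for phrase in phrases:
        if phrase.lower().startswith(typed.lower()) and len(phrase) > len(typed):
            return phrase[len(typed):]
    return ""


def accept(typed: str, phrases: list[str] = PHRASES) -> str:
    """Simulate pressing Tab: append the current suggestion to the typed text."""
    return typed + suggest(typed, phrases)


if __name__ == "__main__":
    print(repr(suggest("Looking for")))
    print(accept("Thanks f"))
```

A production system would rank many candidate completions by context rather than return the first prefix match, but the user-facing loop of typing, seeing a greyed-out suggestion and pressing Tab has the same shape.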

5. AI-Powered Google Photos

Pichai introduced a new feature in Google Photos called Suggested Actions, which suggests actions you might want to carry out on a photo. For example, the Google Photos app can recognise when a friend is in a picture and suggest that you share it with them. If the same photo is underexposed, Google’s AI systems offer to fix the brightness with one tap. The app can also use AI to convert a photo of a document to PDF format and to colourise old black-and-white photos.

6. Tensor Processing Unit 3.0

Pichai said a pod of the third-generation tensor processing units (TPUs) is eight times more powerful than last year’s pod, handling more than 100 petaflops of machine learning computation. The new chips are so powerful that Google had to add liquid cooling to its data centres to cope with the additional heat. The TPUs will be consumed primarily through Google Cloud.

7. Natural Conversations With AI-Based Google Assistant

Google Assistant will now come in six new voices. The Assistant also got a ‘Continued Conversation’ update. Now, instead of having to say ‘Hey Google’ or ‘Ok Google’ every time you want to give a command, you will only have to do so the first time. You can also ask multiple questions within the same request.

8. Google Duplex Powered By AI

Google Assistant will soon make phone calls on your behalf. The new technology, called Google Duplex, can carry out real-world tasks over the phone. According to a statement by Google, the technology is directed towards completing specific tasks, such as scheduling certain types of appointments. For such tasks, the system makes the conversational experience as natural as possible, allowing people to speak normally, as they would to another person, without having to adapt to a machine. Duplex is still under development, however.

9. Google Maps With Computer Vision

Google Assistant is also coming to Google Maps, where it will give you better recommendations for local places. Google also introduced a Visual Positioning System in Google Maps to help with navigation beyond GPS. It combines the camera, computer vision technology and Google Maps with Street View.

10. Google News Tailored By AI

Google News is getting revamped with AI. You will get a briefing of the top five news stories along with the latest local stories. News will use ML to understand your news preferences and show stories accordingly. Google also introduced a new feature called Full Coverage, which will show all the relevant coverage of a particular story from different sources. News will also feature ‘Subscribe with Google’, which will allow users to access their paid content everywhere on Google’s platform.

11. ML Teaches The ‘Joy Of Missing Out’

Google will introduce new features on Android devices for your digital wellbeing. They will understand your habits, help you focus on what matters, and suggest when to switch off and wind down so that you can find more time for your family.

Android Dashboard

It will slice and dice data on how you use your phone and tell you if you are overindulging.

YouTube Notification Digest And Break Reminders

With these two features, you will be able to cut down on notifications and better monitor the time you spend watching videos on YouTube.

12. Android P

The latest version of Android will focus more on battery life. Its new Adaptive Battery feature uses AI to control which apps run in the background in order to extend battery life. Google is also changing the user interface in this version. The traditional three-button bar will be replaced by a single home button. When you swipe up on it, you will get a multitasking view of all recently used apps. Android P also introduces a few new features such as Shush Mode, which silences your phone whenever you place it face down on a surface. The Wind Down mode will automatically turn your screen to greyscale every night at a bedtime you decide, to help you sleep better. The Android P beta is now available.

13. Machine Learning Kit For Developers

Google announced a machine learning kit for app developers who are not very proficient in machine learning. The new software development kit (SDK) supports text recognition, image labelling, barcode scanning, face detection and landmark recognition. The SDK is available for app developers on both iOS and Android, and it can work online or offline, depending on developers’ preference and network availability.