Robust real-time hand perception is one of the more challenging problems in computer vision today. Human hands are flexible and highly articulated, and they lack high-contrast patterns, which makes them hard to detect and track. The effort is worthwhile because reliable hand gesture recognition enables more natural interaction between humans and machines.

This week, Google announced the release of a new approach to hand perception: an ML pipeline for hand tracking and gesture recognition. Google previewed the technique in June this year at the Conference on Computer Vision and Pattern Recognition (CVPR) 2019.

Behind the Architecture

The hand perception functionality is implemented in MediaPipe, an open-source, cross-platform framework for building pipelines that process perceptual data of different modalities, such as video and audio. It provides high-fidelity hand and finger tracking by using ML to infer 21 3D keypoints of a hand from a single frame.

The hand-tracking solution uses a machine learning pipeline made up of three models:

- BlazePalm: A single-shot detector model that operates on the full image and returns an oriented hand bounding box. It relies on three strategies: training a palm detector instead of a hand detector, using an encoder-decoder feature extractor for greater scene-context awareness even for small objects, and minimising the focal loss during training. The detector is designed to find hands even when they are occluded or self-occluded. The reported average precision in palm detection is 95.7%.

- Hand Landmark Model: This model operates on the cropped image region defined by the palm detector and uses regression to localise 21 3D hand-knuckle coordinates inside the detected hand region. To obtain robust hand poses, the researchers manually annotated approximately 30,000 real-world images with 21 3D coordinates. They also rendered a high-quality synthetic hand model over various backgrounds and mapped it to the corresponding 3D coordinates.

- Gesture Recogniser: This model classifies the previously computed keypoint configuration into a discrete set of gestures. The researchers used the accumulated angles of the joints to determine the state of each finger, and then mapped the set of finger states to a set of pre-defined gestures. This technique allowed them to estimate basic static gestures with reasonable quality.
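The gesture recogniser's idea of accumulating joint angles, deciding a state per finger and looking the result up in a gesture table can be illustrated with a minimal Python sketch. It is not Google's implementation. The keypoint layout (index 0 for the wrist, then four points per finger from base to tip), the 60-degree threshold, the gesture names and the function names `accumulated_bend` and `classify` are all assumptions made for illustration.

```python
import math

# Assumed keypoint layout (not stated in the article): index 0 is the wrist,
# followed by four points per finger, ordered from base to tip.
FINGERS = {
    "thumb": (1, 2, 3, 4),
    "index": (5, 6, 7, 8),
    "middle": (9, 10, 11, 12),
    "ring": (13, 14, 15, 16),
    "pinky": (17, 18, 19, 20),
}

# Hypothetical gesture table keyed by the "finger is open" flags,
# in the order (thumb, index, middle, ring, pinky).
GESTURES = {
    (True, True, True, True, True): "open_palm",
    (False, False, False, False, False): "fist",
    (False, True, True, False, False): "victory",
    (False, True, False, False, False): "pointing",
}


def _angle(u, v):
    """Return the angle in degrees between two 3D vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    if norm == 0.0:
        return 0.0
    # Clamp to guard against rounding slightly outside [-1, 1].
    return math.degrees(math.acos(max(-1.0, min(1.0, dot / norm))))


def accumulated_bend(keypoints, chain):
    """Sum the bending angles at every joint along one finger."""
    points = [keypoints[0]] + [keypoints[i] for i in chain]
    bones = [tuple(b - a for a, b in zip(p, q)) for p, q in zip(points, points[1:])]
    return sum(_angle(u, v) for u, v in zip(bones, bones[1:]))


def classify(keypoints, open_threshold_deg=60.0):
    """Map 21 (x, y, z) keypoints to a gesture name, or "unknown"."""
    state = tuple(
        accumulated_bend(keypoints, chain) < open_threshold_deg
        for chain in FINGERS.values()
    )
    return GESTURES.get(state, "unknown")
```

A straight finger accumulates almost no bend, while a curled one accumulates well over the threshold, so a single number per finger is enough to separate the two states for simple static gestures.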

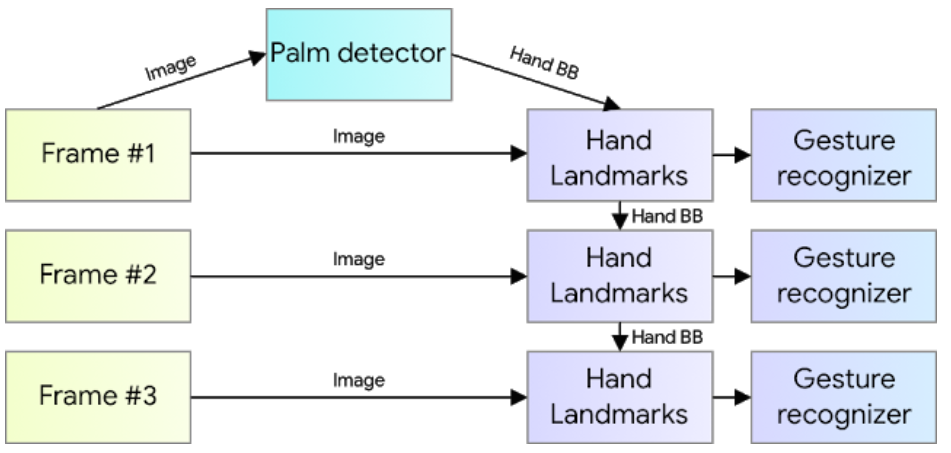
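The focal loss that BlazePalm minimises during training is a variant of cross-entropy that down-weights examples the model already classifies well. The sketch below shows the standard binary form for a single prediction. The article does not give the hyperparameters used for BlazePalm, so the `gamma` and `alpha` defaults here are the commonly cited ones and should be read as assumptions.

```python
import math


def focal_loss(p, y, gamma=2.0, alpha=0.25):
    """Binary focal loss for one predicted probability p and a label y in {0, 1}.

    gamma and alpha are assumed defaults, not values reported for BlazePalm.
    """
    eps = 1e-7
    p = min(max(p, eps), 1.0 - eps)             # avoid log(0)
    p_t = p if y == 1 else 1.0 - p              # probability given to the true class
    alpha_t = alpha if y == 1 else 1.0 - alpha  # class-balancing weight
    return -alpha_t * (1.0 - p_t) ** gamma * math.log(p_t)
```

With `gamma` set to 0 the expression reduces to weighted cross-entropy. Larger values shrink the contribution of confident, correct predictions, so training concentrates on the hard cases.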

As mentioned earlier, this approach is implemented in the MediaPipe framework. The reason is that MediaPipe allows the hand perception pipeline to be built as a directed graph of modular components, known as Calculators. One important optimisation the framework makes possible is that the palm detector runs only when necessary, which saves a significant amount of computation time.
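The effect of running the palm detector only when necessary can be sketched in plain Python. This is not MediaPipe's API or graph format. The class `HandTracker`, the `hand_present` flag and the rule used here (run the detector only when no hand region is carried over from the previous frame) are assumptions made for illustration. The two models are passed in as ordinary callables.

```python
from dataclasses import dataclass
from typing import Callable, List, Optional, Tuple

Box = Tuple[float, float, float, float]   # (x_min, y_min, x_max, y_max)
Keypoint = Tuple[float, float, float]     # (x, y, z)


@dataclass
class LandmarkResult:
    keypoints: List[Keypoint]
    hand_present: bool   # hypothetical flag: did the landmark model still see a hand?


def bounding_box(keypoints: List[Keypoint]) -> Box:
    """Axis-aligned box around the keypoints, ignoring depth."""
    xs = [k[0] for k in keypoints]
    ys = [k[1] for k in keypoints]
    return (min(xs), min(ys), max(xs), max(ys))


class HandTracker:
    """Runs the palm detector only when there is no region from the previous frame."""

    def __init__(
        self,
        detect_palm: Callable[[object], Optional[Box]],
        predict_landmarks: Callable[[object, Box], LandmarkResult],
    ) -> None:
        self.detect_palm = detect_palm
        self.predict_landmarks = predict_landmarks
        self.region: Optional[Box] = None
        self.detector_runs = 0

    def process(self, frame: object) -> Optional[LandmarkResult]:
        if self.region is None:
            # No hand is being tracked, so pay for a full-image detection.
            self.detector_runs += 1
            self.region = self.detect_palm(frame)
            if self.region is None:
                return None
        result = self.predict_landmarks(frame, self.region)
        if not result.hand_present:
            # Tracking was lost; the detector runs again on the next frame.
            self.region = None
            return None
        # Reuse the landmarks to define the crop for the next frame.
        self.region = bounding_box(result.keypoints)
        return result
```

As long as the landmark model keeps reporting a hand, each frame costs one landmark inference and no detection, which is where the saving in computation would come from under these assumptions.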

How It Is Different

This approach differs from existing state-of-the-art approaches, which rely primarily on powerful desktop environments for inference. The new approach achieves real-time performance on a mobile phone and also scales to multiple hands.

Application of This New Approach

The hand perception approach can be used in various cases, a few of which are listed below:

- It can enable the overlay of digital content and information on top of the physical world in augmented reality.

- It can help the differently-abled by supporting sign language understanding.

- It can be applied in VR systems to manipulate virtual objects.

- It can also be utilised to control intelligent robots.