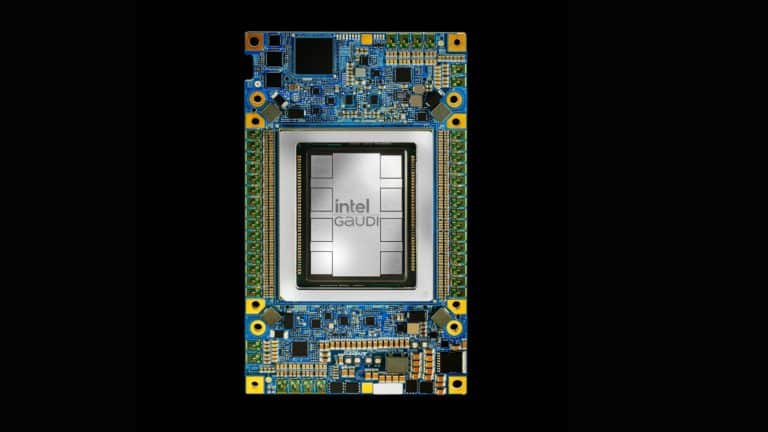

Intel has updated its OpenVINO toolkit with expanded natural language processing (NLP) support, greater device portability across hardware, and higher inference performance. The toolkit is used to develop applications and solutions for a range of tasks, including emulation of human vision, automatic speech recognition, natural language processing and recommendation systems.

The new release will be available from March.

According to Intel, hundreds of thousands of developers have used OpenVINO to deploy AI workloads at the edge, including customers such as BMW, Audi, Samsung and John Deere. Besides broader model support, the new version of OpenVINO offers greater device portability through a simplified API, and it shortens the time needed to bring a model to market with an easy-to-use library of computer vision (CV) functions and pre-optimised kernels.

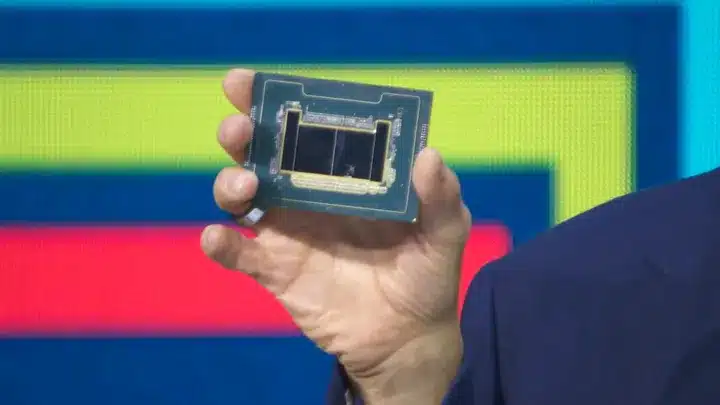

Intel has also announced a new system-on-chip (SoC) designed for the software-defined network and edge. The company’s new Xeon D processors are made for security appliances, enterprise routers and switches, cloud storage and wireless networks, cases where processing needs to happen closer to where the data is generated. The chips deliver integrated AI and crypto acceleration, built-in Ethernet and faster performance.