A collaborative team from Université de Montréal, the CERC-AAI lab, ServiceNow, and Morgan Stanley has introduced Lag-Llama, an open-source foundation model for time series forecasting.

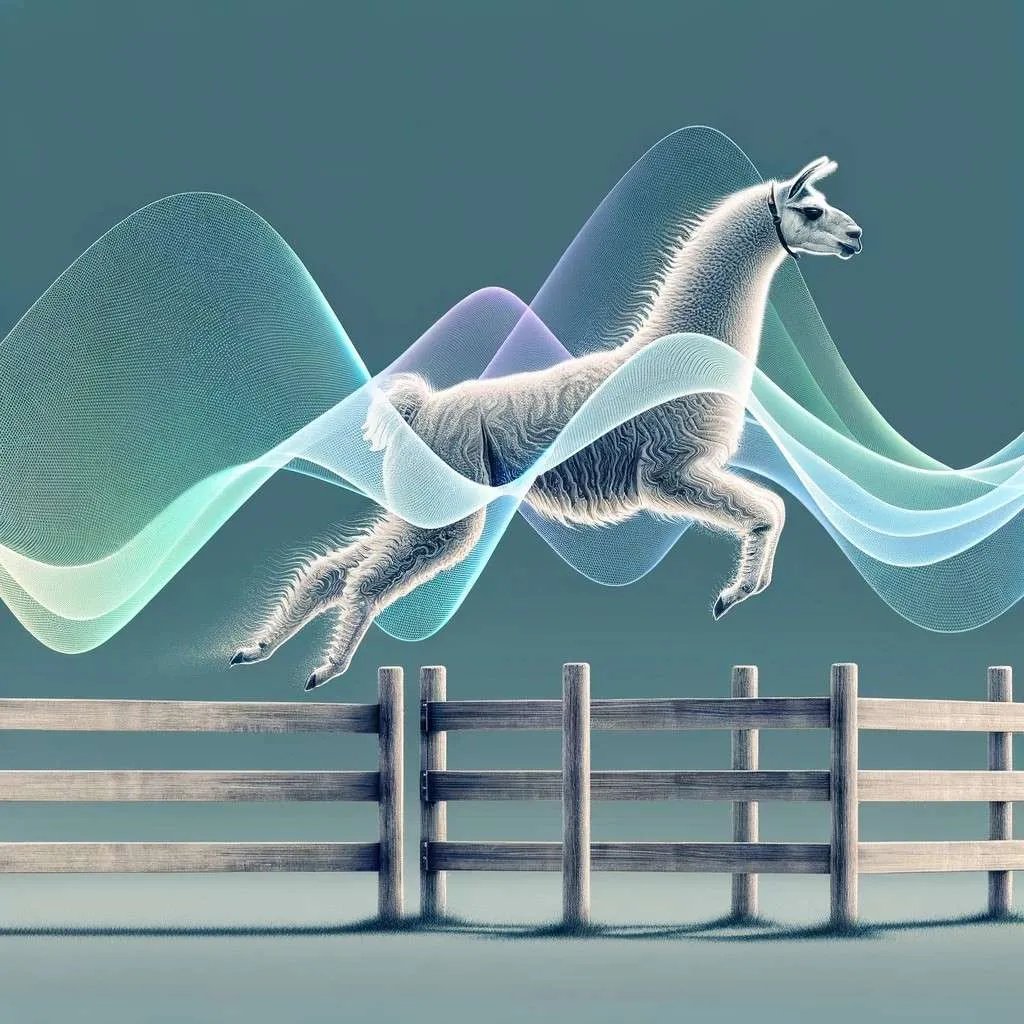

While foundation models have revolutionised natural language processing and computer vision in recent years, they have seen comparatively little use in time series forecasting. Lag-Llama aims to bridge this gap with a decoder-only transformer architecture that uses lagged values as covariates for univariate probabilistic time series forecasting.

Pretraining on a diverse corpus of time series data gives Lag-Llama strong zero-shot generalisation capabilities. The pretraining corpus consists of 27 datasets across six domains (energy, transportation, economics, nature, air quality, and cloud operations), with close to 8K univariate time series and 352M tokens.

Its tokenisation method builds each token from lag features (values at specific points in the series' history) and date-time features (derived from the timestamp). The team has also developed stratified batch sampling and normalisation strategies, which prove effective during pretraining.
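To make the tokenisation idea concrete, here is a minimal sketch of how one token per time step could be assembled from lagged values and date-time features. It is an illustration only, not Lag-Llama's actual implementation: the function name `build_tokens`, the lag set `[1, 2, 7]`, and the choice of hour-of-day and day-of-week as date-time features are all assumptions made for this example.

```python
from datetime import datetime, timedelta


def build_tokens(values, timestamps, lags):
    """Build one feature vector ("token") per time step.

    Hypothetical helper for illustration: each token concatenates the
    series values at the given lags with two scaled date-time features.
    """
    max_lag = max(lags)
    tokens = []
    # Steps earlier than max_lag are skipped: they lack a full lag history.
    for t in range(max_lag, len(values)):
        # Lag features: the value observed `lag` steps before step t.
        lag_feats = [values[t - lag] for lag in lags]
        # Date-time features, scaled to [0, 1] (assumed feature choice).
        ts = timestamps[t]
        time_feats = [ts.hour / 23.0, ts.weekday() / 6.0]
        tokens.append(lag_feats + time_feats)
    return tokens


# Toy hourly series of 10 points with values 0.0 .. 9.0.
start = datetime(2024, 2, 7, 0)
timestamps = [start + timedelta(hours=i) for i in range(10)]
values = [float(i) for i in range(10)]

tokens = build_tokens(values, timestamps, lags=[1, 2, 7])
print(len(tokens))  # 3 tokens: steps 7, 8 and 9 have a full lag history
print(tokens[0])    # lag values 6.0, 5.0, 0.0 followed by two time features
```

In this sketch the number of usable tokens shrinks by the largest lag, which is why a model built on lag features needs a history window at least as long as its longest lag.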

When fine-tuned on previously unseen datasets, Lag-Llama reaches state-of-the-art performance, surpassing prior deep learning approaches and emerging as the best general-purpose model on average.

Beyond its impact on machine learning, Lag-Llama holds promise for diverse applications in computational medicine, natural sciences, finance, climate, retail, ecology, energy, and more. Because it adapts well to scenarios with limited data, it is well suited to applications that rely on transfer from a general-purpose pretrained model.

"Forecasts on the unseen datasets closely match the ground truth," Arjun Ashok (@arjunashok37) wrote on Twitter on February 7, 2024 (pic.twitter.com/vV4rnEN5yd).