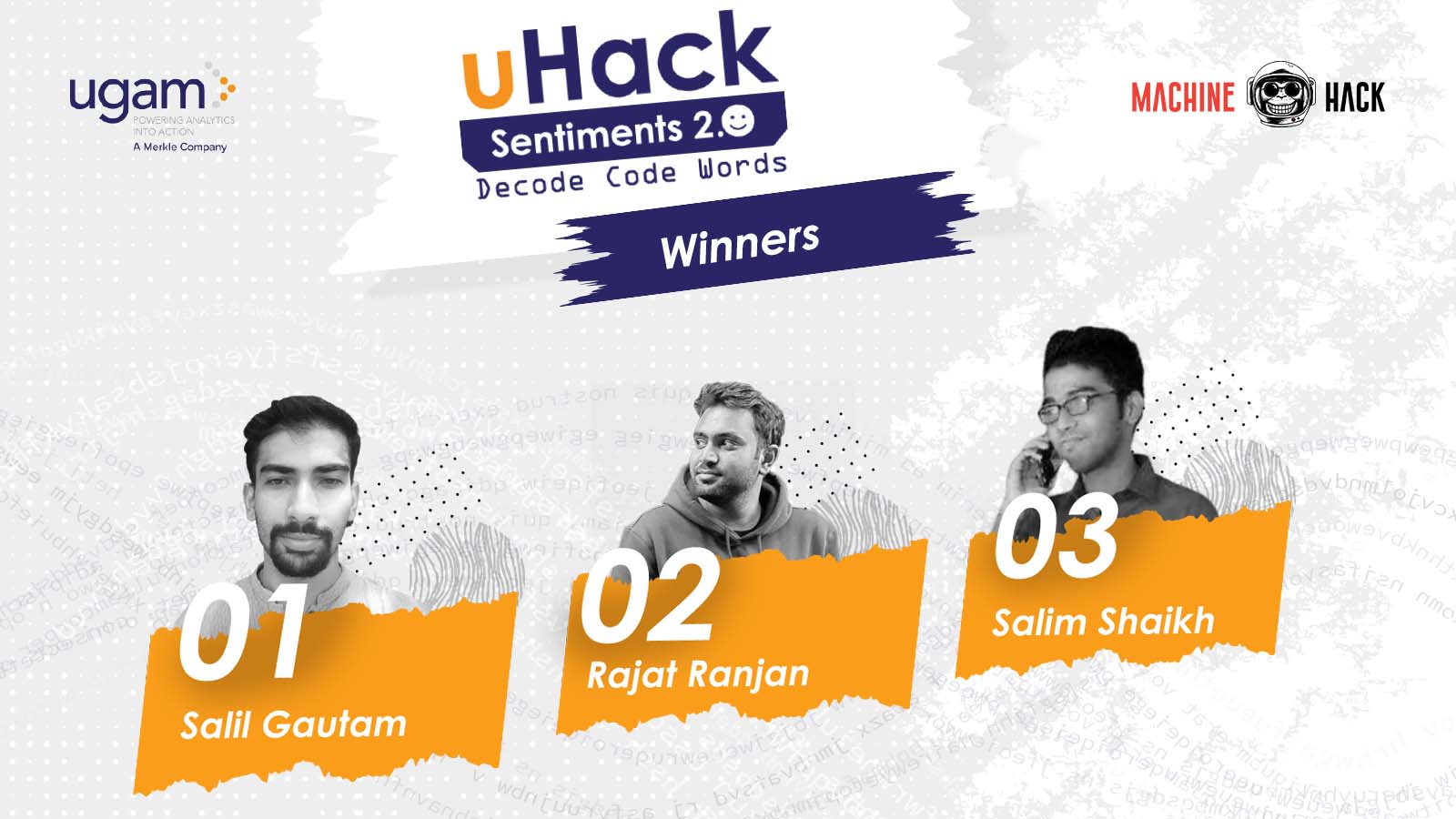

uHack Sentiments 2.0: Decode Code Words organised by Ugam, a Merkle company, in collaboration with MachineHack, concluded on January 13, 2022.

The data science hiring hackathon on sentiment analysis drew 1,016 participants and more than 250 solution submissions. The top three winners bagged cash prizes worth INR 70,000 and stand a chance to get hired by Ugam.

The winners also got an opportunity to present their learnings and solution approaches on Thursday, January 20, 2022, at the Machine Learning Developers Summit (MLDS) 2022, India’s leading conference for machine learning practitioners.

Check out their presentation here:

Let’s take a quick look at the winners’ journeys, solution approaches, and overall experience at MachineHack.

Rank 01: Salil Gautam

Salil Gautam is a data scientist at Société Générale Global Solution Centre. He started his data science journey during his second year in college and has been actively participating in hackathons ever since. He has won more than 45 product-based and data science hackathons in the last five years. “This has given me exposure to various complex industry problems across different domains,” said Gautam.

Approach

Gautam said he initially broke the challenge down into 12 individual binary classification problems, but later found that a multi-label approach worked better. He then experimented with different architectures and found transformer models to be the best fit.

“My final submission was a blend of 8 models involving different transformers along with variation in batch size, sequence length, folds, epochs, etc.,” he added. Gautam said his best single model score on the public leaderboard was 2.75688, which could have secured him a good rank, but he tried ‘ensembling’ to make the final submission more robust.
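A blend of this kind can be sketched as a weighted average of each model's predicted probabilities. The snippet below is a minimal illustration, not Gautam's actual code: the `blend` helper, the equal weights, and the toy probability values are all assumptions made for the example.

```python
from typing import List

Matrix = List[List[float]]  # rows = reviews, columns = labels


def blend(predictions: List[Matrix], weights: List[float]) -> Matrix:
    """Weighted average of per-model probability matrices (hypothetical helper)."""
    if len(predictions) != len(weights):
        raise ValueError("one weight per model is required")
    total = sum(weights)
    n_rows, n_cols = len(predictions[0]), len(predictions[0][0])
    blended = [[0.0] * n_cols for _ in range(n_rows)]
    for matrix, weight in zip(predictions, weights):
        for i in range(n_rows):
            for j in range(n_cols):
                # Normalise by the weight total so the result stays a probability.
                blended[i][j] += matrix[i][j] * weight / total
    return blended


# Toy data (assumed): two models, two reviews, three labels.
model_a = [[0.9, 0.2, 0.1], [0.3, 0.8, 0.4]]
model_b = [[0.7, 0.4, 0.3], [0.1, 0.6, 0.2]]
blended = blend([model_a, model_b], weights=[1.0, 1.0])
```

In practice the inputs would be the predictions of the eight fine-tuned transformer variants, and the weights could be tuned on validation folds rather than set equal.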

Experience

Gautam has been a member of MachineHack since December 2018. He said the platform’s user interface and prize pool make it really interesting and competitive.

Check out the solution here.

Rank 02: Rajat Ranjan

Rajat Ranjan is a data scientist at TheMathCompany and has previously worked with Infosys. He has more than five years of experience in data science and analytics across the IT services and product industries.

Ranjan is skilled in machine learning, modeling, and visualization using Python. He is also experienced in onsite client interaction and in analysing stakeholders’ needs. “I love interacting with data and creating models which best suit the business needs, along with participating in different ML hackathons to learn new technology and grow professionally,” he added.

Approach

Ranjan’s approach combined several elements: text preprocessing with different pipelines; feature engineering on text-based features; transfer learning using simple transformers with different models (BERT, RoBERTa); multi-label stratified cross-validation; and an ensemble that optimised log loss while reducing variance.
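To see why averaging models helps with log loss, consider the rough sketch below. It assumes the loss is the binary log loss averaged over every review-label cell; the article does not state the hackathon's exact metric, and the function name and toy values are illustrative only.

```python
import math
from typing import List


def multilabel_log_loss(
    y_true: List[List[int]], y_prob: List[List[float]], eps: float = 1e-15
) -> float:
    """Binary log loss averaged over every (review, label) cell (assumed metric)."""
    total, count = 0.0, 0
    for true_row, prob_row in zip(y_true, y_prob):
        for t, p in zip(true_row, prob_row):
            p = min(max(p, eps), 1 - eps)  # clip to avoid log(0)
            total += -(t * math.log(p) + (1 - t) * math.log(1 - p))
            count += 1
    return total / count


# Toy data (assumed): two reviews, three labels, two hypothetical models.
y_true = [[1, 0, 0], [0, 1, 1]]
model_a = [[0.9, 0.2, 0.1], [0.3, 0.8, 0.4]]
model_b = [[0.6, 0.1, 0.4], [0.1, 0.5, 0.7]]
average = [[(a + b) / 2 for a, b in zip(ra, rb)] for ra, rb in zip(model_a, model_b)]

loss_a = multilabel_log_loss(y_true, model_a)
loss_b = multilabel_log_loss(y_true, model_b)
loss_avg = multilabel_log_loss(y_true, average)
```

Because the log loss is convex in the predicted probability, `loss_avg` can never exceed the mean of `loss_a` and `loss_b`, which is one reason a simple average of models is a common variance-reduction step.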

Experience

“I have been part of MachineHack from its inception,” said Ranjan. He said hackathons like these boost the confidence of aspiring data scientists and help them become more technically proficient in the ML/DS field, since consistency is the key to learning and growth. “I had a great time participating in the hackathon and would like to participate in any hackathon hosted in the future as well,” he added.

Check out the solution here.

Rank 03: Salim Shaikh

Salim Shaikh is a data scientist at HDFC Bank. Shaikh has a Masters in Statistics. “Earlier, I was not aware of data science, but after my placement at Vodafone Idea, I found my future roadmap,” said Shaikh.

He has participated in various hackathons organised by Kaggle, Analytics Vidhya, Zindi, HackerEarth, and MachineHack. “This led to collaborative learning by reading solutions of other participants, teaming up with them and exchanging ideas, etc.,” he added.

Approach

Shaikh first built a language model to capture the context of the reviews. He used the activebus/BERT-XD_Review model from Hugging Face because it is a cross-domain language model (covering more than just laptops and restaurants), post-trained (fine-tuned) on a combination of 5-core Amazon reviews and all Yelp data, where each training example comes from a single product or restaurant with the same rating. It is based on the paper BERT Post-Training for Review Reading Comprehension and Aspect-based Sentiment Analysis.

After training the language model, he used its weights to initialise the classifiers that assign each review to the 12 classes. He also tried treating the task as a multi-label problem, but it did not give him better results. “I may be wrong in concluding this,” he added. “Finally, I trained 12 models, one for each class, using five-fold cross-validation and different hyperparameters for each model.”
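The one-model-per-class setup with five-fold cross-validation can be outlined as follows. This is only a structural sketch: `fit_prior` is a hypothetical stand-in for fine-tuning a transformer (it merely learns the positive rate), the labels are random toy data, and the per-class hyperparameter tuning Shaikh mentions is not modelled.

```python
import random
from typing import List, Sequence


def kfold_indices(n_samples: int, n_folds: int = 5, seed: int = 0) -> List[List[int]]:
    """Shuffle sample indices and deal them into n_folds disjoint folds."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    return [indices[i::n_folds] for i in range(n_folds)]


def fit_prior(labels: Sequence[int]) -> float:
    """Hypothetical stand-in for a real classifier: returns the positive rate."""
    return sum(labels) / len(labels)


def out_of_fold_predictions(y: List[List[int]], n_folds: int = 5) -> List[List[float]]:
    """Train one binary model per label and fold; predict only on held-out rows."""
    n_samples, n_labels = len(y), len(y[0])
    folds = kfold_indices(n_samples, n_folds)
    oof = [[0.0] * n_labels for _ in range(n_samples)]
    for label in range(n_labels):  # one independent binary problem per class
        for held_out in folds:
            held = set(held_out)
            train_labels = [y[i][label] for i in range(n_samples) if i not in held]
            model = fit_prior(train_labels)
            for i in held_out:
                oof[i][label] = model
    return oof


# Toy data (assumed): 50 reviews, 12 binary labels.
rng = random.Random(42)
y = [[rng.randint(0, 1) for _ in range(12)] for _ in range(50)]
oof = out_of_fold_predictions(y)
```

Each review's prediction comes from a model that never saw that review during training, so the out-of-fold matrix gives an honest estimate of how each of the 12 models generalises.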

Experience

“Every weekend, we get some new problems, covering all the aspects like text, tabular, vision, etc., to learn. I look forward to more hackathons in future,” he added.

Check out the solution here.