
Accelerating its push into generative AI, Mark Zuckerberg’s Meta has introduced new text-to-video and image-editing models called Emu Video and Emu Edit, respectively.

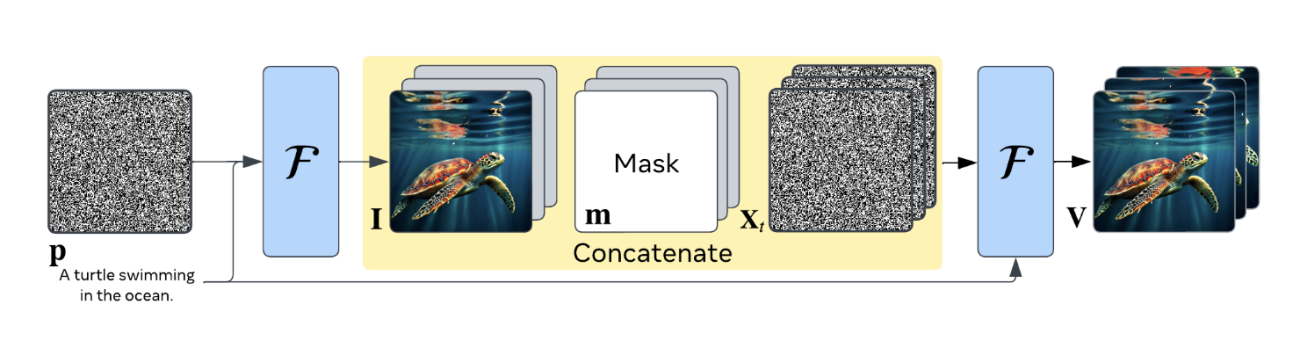

Emu Video is a new text-to-video generation model involving two steps: generating an image based on the text and then creating a video using both the text and the generated image. The model achieves high-quality, high-resolution videos through optimised noise schedules for diffusion and multi-stage training.
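The two-step flow described above can be pictured with a small toy sketch. It is not Meta's code: `generate_image`, `generate_video`, and `text_to_video` are hypothetical stand-ins that use random numbers in place of diffusion models, and they only illustrate how the second stage is conditioned on both the text and the image from the first stage.

```python
import random
from typing import List

Image = List[List[float]]  # toy greyscale image: rows of pixel values in [0, 1]
Video = List[Image]        # toy video: a list of frames


def generate_image(prompt: str, size: int = 4) -> Image:
    """Stage 1 stand-in (hypothetical): text -> image."""
    rng = random.Random(prompt)  # seeding with the prompt keeps the toy deterministic
    return [[rng.random() for _ in range(size)] for _ in range(size)]


def generate_video(prompt: str, first_frame: Image, num_frames: int = 8) -> Video:
    """Stage 2 stand-in (hypothetical): (text, image) -> video."""
    rng = random.Random(prompt + "|video")
    frames = [first_frame]
    for _ in range(num_frames - 1):
        previous = frames[-1]
        # Nudge each pixel slightly so successive frames drift from the first image.
        frames.append([
            [min(1.0, max(0.0, pixel + rng.uniform(-0.05, 0.05))) for pixel in row]
            for row in previous
        ])
    return frames


def text_to_video(prompt: str, num_frames: int = 8) -> Video:
    """Factorised generation: produce an image first, then a video from text and image."""
    image = generate_image(prompt)
    return generate_video(prompt, image, num_frames)


video = text_to_video("a dog running on a beach", num_frames=5)
print(len(video), "frames of", len(video[0]), "x", len(video[0][0]), "pixels")
```

Because the second function accepts any image, the same structure would also cover animating a user-supplied picture from a text prompt.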

In human evaluations, Emu Video’s output was preferred for quality over existing work: 81% of the time against Google’s Imagen Video, 90% against NVIDIA’s PYOCO, and 96% against Meta’s own Make-A-Video. The model also outperforms commercial solutions like RunwayML’s Gen2 and Pika Labs. Notably, its factorised approach is well-suited to animating images based on user text prompts, where its generations were preferred 96% of the time over prior work.
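A figure such as 81% here is a pairwise preference rate: the share of side-by-side comparisons in which evaluators picked one model's video over the other's. A minimal sketch of that arithmetic follows, using a hypothetical `preference_rate` helper and made-up votes rather than any data from the study.

```python
from typing import List


def preference_rate(votes: List[str], model: str) -> float:
    """Return the fraction of pairwise votes won by `model`."""
    if not votes:
        raise ValueError("need at least one vote")
    return sum(1 for vote in votes if vote == model) / len(votes)


# Made-up votes for illustration only; each entry names the model a rater preferred.
toy_votes = ["A", "A", "B", "A", "A", "A", "B", "A", "A", "A"]

rate = preference_rate(toy_votes, "A")
print(f"Model A preferred in {rate:.0%} of comparisons")
```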

Emu Edit, meanwhile, is a multi-task image editing model built for instruction-based image editing. It outperforms existing models by training across a range of tasks, including region-based editing, free-form editing, and computer vision tasks.

Emu Edit’s success is attributed to its multi-task learning, which uses learned task embeddings to guide the generation process accurately. The model proves its versatility by generalising to new tasks from only a few labeled examples, which helps in scenarios where high-quality samples are scarce. The researchers have also introduced a benchmark covering seven diverse image editing tasks for a thorough evaluation of instructable image editing models.
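To make the idea of a task embedding concrete, here is a toy sketch. The task names, the three-number vectors, and the choice to concatenate the two embeddings are all assumptions for illustration; the article does not describe how Emu Edit actually combines them, and in a real model the vectors would be learned during training.

```python
from typing import Dict, List

Vector = List[float]

# Hypothetical task names and embedding values; a real model would learn these.
task_embeddings: Dict[str, Vector] = {
    "region_edit": [0.9, 0.1, 0.0],
    "free_form_edit": [0.1, 0.8, 0.1],
    "detection": [0.0, 0.2, 0.9],
}


def build_conditioning(instruction_embedding: Vector, task: str) -> Vector:
    """Join the instruction embedding with the embedding of the requested task.

    Concatenation is an assumption made for this sketch.
    """
    return instruction_embedding + task_embeddings[task]


conditioning = build_conditioning([0.5, 0.5], "detection")
print(conditioning)
```

In this picture, supporting a new task amounts to adding one more entry to the table, which is consistent with needing only a small number of examples.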

The model addresses the limitations of existing generative AI models in image editing. It focuses on precise control and enhanced capabilities by incorporating computer vision tasks as instructions. The model handles free-form editing, including tasks like background manipulation, color transformations, and object detection.

Unlike many existing models, it follows instructions precisely, ensuring that only pixels relevant to the edit request are altered. The model is trained on a large dataset of 10 million synthesized samples, presenting unprecedented results in terms of instruction faithfulness and image quality. Emu Edit surpasses current methods in both qualitative and quantitative evaluations for various image editing tasks, establishing new state-of-the-art performance.