
If ChatGPT can be used to come up with a compound for a chemical weapon, or if the chatbot can spew out misinformation and extreme biases that target various interest groups, the moral grounding of the LLM is pretty much compromised. With big tech companies fighting to bring down these anomalies, the need for a dedicated team that can bring in safety, security and morality is probably more crucial than ever, and OpenAI is on it.

To fix the morality problem of its LLM, OpenAI is inviting people to enhance its AI safety features and democratise AI use. Positioning itself as a responsible AI company, OpenAI recently announced plans to build a Red Teaming Network.

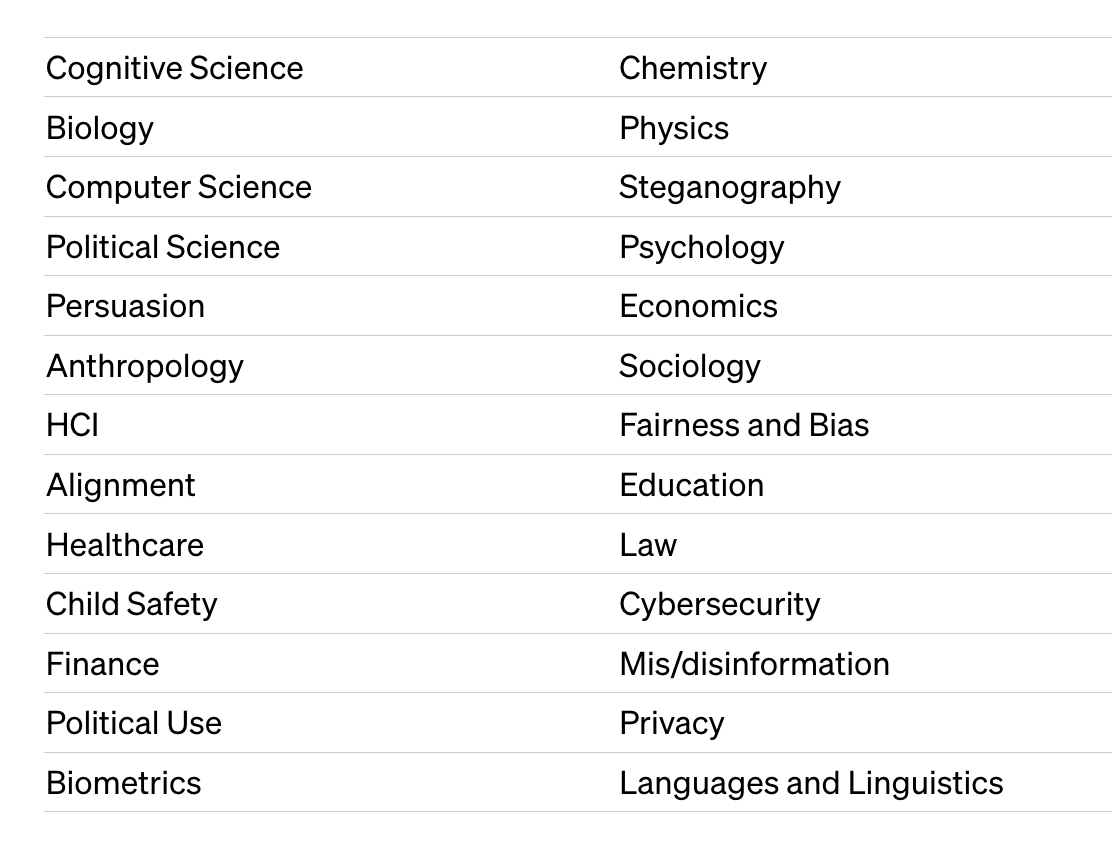

The company is inviting domain experts from various fields to help improve the safety of OpenAI’s models. Interestingly, the announcement comes less than a week after the AI Senate meeting that convened the tech titans of the industry, including OpenAI CEO Sam Altman, to discuss AI regulations.

Members of the ‘Red Teaming Network’ will be selected based on specific skills, so that they can assist at different points in the model and product development process. The program is flexible: members will not be engaged in every project, and the time commitment for each of them can vary, going as low as 5-10 hours in a year.

Domain experts required for the Red Teaming Network. Source: OpenAI

Big Tech Fancies Red Teaming

Sam Altman has often spoken about how OpenAI spent six months testing the safety of the GPT-4 model before releasing it to the public. Those six months involved red teams of domain experts testing the product. Paul Röttger, a postdoctoral researcher at MilaNLP who was part of the red team that tested GPT-4 for six months, mentioned that model safety is a challenging task.

Red teamers were also involved in OpenAI’s latest image-generation model, DALL·E 3, which was launched yesterday. Domain experts were consulted to improve its safety features and to mitigate the biases and misinformation that the model can generate, the company confirmed on its blog.

Red teaming is not a new concept for other big tech companies either. Interestingly, in July, Microsoft released an article on red teaming for large language models, listing the nuances of the process and explaining why it is an essential practice for the ‘responsible development of systems’ using LLMs. The company also confirmed that it used red teaming exercises, which included content filters and other mitigation strategies, for its Azure OpenAI Service models.

Google is not far behind. The company also released a report in July emphasising the importance of red teaming in every organisation. Google believes that red teaming is essential for preparing against attacks on AI systems, and has built an AI Red Team of ethical hackers who can simulate various potential adversaries.

AI Attacks that Google’s Red Team tests. Source: GoogleBlog

Lure With Money

While there is no clarity on how previous red team members at OpenAI were remunerated, participants in the red teaming network that OpenAI is now trying to build will be compensated. However, the project will require contributors to sign a Non-Disclosure Agreement (NDA) or maintain confidentiality for an ‘indefinite period’.

This is not the first time that OpenAI has splurged on sourcing experts to improve the security and safety of its AI models. A few months ago, the company announced a program to democratise AI rules, under which it will fund experiments on deciding the rules AI systems should follow. Grants totalling $1 million were offered.

Furthermore, OpenAI launched a $1 million cybersecurity grant program aimed at enabling the creation and advancement of AI-powered cybersecurity tools and technologies. Interestingly, these announcements also came shortly after the first AI Senate meeting that Altman attended, where he emphasised the need to regulate AI.

Given that OpenAI has already been using red teams to make its models safe, the latest effort broadens that work to include a more diverse group of people. It is probably an attempt to get varied perspectives on fixing its models and, at the same time, to continue branding itself as a company that works for the people. Still, the opportunity seems to be an exciting one.

Nothing New Here

Last year, Andrew White, a chemical engineering professor at the University of Rochester, was part of a team of 50 academics and experts who were hired to test OpenAI’s GPT-4 well before the model was publicly released in March. The ‘red team’ tested the model for over six months with the goal of finding vulnerabilities that could break it. White found that he could use GPT-4 to suggest a compound that could act as a chemical weapon, underscoring the risks the model poses, an unsolved problem that still persists.

While this was months ago, OpenAI’s latest red team efforts scale up its safety checks. The company calls the network a more formal effort to collaborate with outside experts, research institutions, and civil society organisations.