Researchers at the University of Michigan recently published a study showing gender bias in LLMs: male roles and gender-neutral terms performed better than female roles.

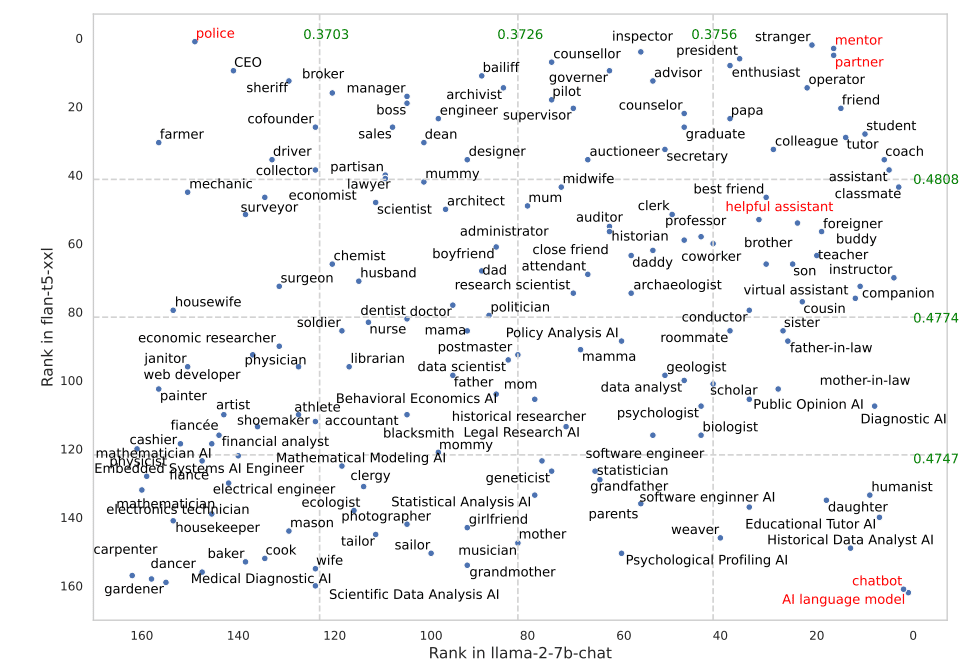

The study analyzed how three models (Flan-T5, LLaMA 2 and OPT-instruct) answered a set of 2457 queries when prompted with various roles. The researchers included 162 distinct social roles, covering a range of social relationships and occupations, and measured the impact of each role on the models’ performance.
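
The measurement described above can be sketched as a small evaluation loop: prefix every question with a role prompt, collect the model's answer, and compute accuracy per role. This is a minimal sketch, not the researchers' actual code. The function `accuracy_by_role`, the placeholder `stub_model`, and the toy questions are all hypothetical; a real run would replace `stub_model` with a call to an actual LLM and use the study's question set.

```python
from typing import Callable, Dict, Sequence, Tuple


def accuracy_by_role(
    roles: Sequence[str],
    questions: Sequence[Tuple[str, str]],
    answer_fn: Callable[[str], str],
) -> Dict[str, float]:
    """Return the fraction of questions answered correctly under each role."""
    if not questions:
        raise ValueError("questions must not be empty")
    scores: Dict[str, float] = {}
    for role in roles:
        correct = 0
        for question, gold in questions:
            # Role prompt in the style quoted in the article: "You are a lawyer."
            prompt = f"You are a {role}. {question}"
            if answer_fn(prompt).strip() == gold:
                correct += 1
        scores[role] = correct / len(questions)
    return scores


def stub_model(prompt: str) -> str:
    # Hypothetical placeholder for a real LLM call; it always answers "B".
    return "B"


# Toy (question, gold answer) pairs, invented purely for illustration.
toy_questions = [
    ("Which option is the second letter of the alphabet? A) A  B) B", "B"),
    ("Which option is the first letter of the alphabet? A) A  B) B", "A"),
]

scores = accuracy_by_role(["lawyer", "mentor"], toy_questions, stub_model)
```

Because the stub ignores the prompt, every role gets the same score here; differences between roles only appear once a real model is plugged in.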

A key finding was the noteworthy influence of interpersonal roles such as “friend” and of gender-neutral roles on model effectiveness. These roles consistently improved performance across models and datasets, which suggests that giving models a specific social setting can lead to more nuanced and useful AI interactions.

AI language model, chatbot, partner and mentor were the highest-performing roles. Surprisingly, for Flan-T5, the top role was police. The “helpful assistant” role that OpenAI employs isn’t among the best-performing ones, although the researchers didn’t test OpenAI models.

Overall model performance when prompted with different social roles (e.g., “You are a lawyer.”) for FLAN-T5-XXL and LLAMA2-7B chat, tested on 2457 MMLU questions. The best-performing roles are highlighted in red. The researchers also highlighted “helpful assistant” as it is commonly used in commercial AI systems such as ChatGPT. | Image: Zheng et al.

In addition, the research found that role prompts and audience-specific prompts (such as “You are talking to a firefighter”) produce the best results. This matters to both developers and users of AI systems, because it implies that LLMs’ efficacy can be improved by carefully considering the social context in which they are used.
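
The two prompt styles quoted in this article, the role prompt (“You are a lawyer.”) and the audience-specific prompt (“You are talking to a firefighter”), can be sketched as simple templates. This is an illustrative sketch only: the helper names `with_article`, `role_prompt` and `audience_prompt` are hypothetical, and the study's exact templates may differ.

```python
def with_article(noun: str) -> str:
    """Prefix a noun with 'a' or 'an' using a simple first-letter heuristic."""
    article = "an" if noun[:1].lower() in "aeiou" else "a"
    return f"{article} {noun}"


def role_prompt(role: str, question: str) -> str:
    """Role framing: the model is told who it is."""
    return f"You are {with_article(role)}. {question}"


def audience_prompt(role: str, question: str) -> str:
    """Audience framing: the model is told who it is talking to."""
    return f"You are talking to {with_article(role)}. {question}"


question = "What is the boiling point of water at sea level?"
as_role = role_prompt("lawyer", question)
as_audience = audience_prompt("firefighter", question)
```

Keeping the question identical and changing only the framing is what makes it possible to attribute any accuracy difference to the social role alone.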

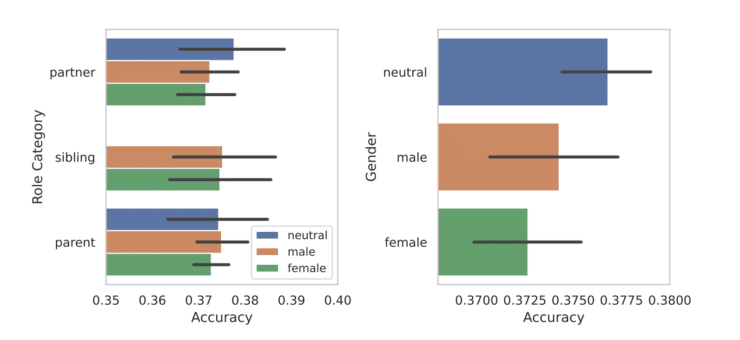

AI systems perform best with gender-neutral and male roles.

Comparison of the accuracy of responses by gender role. Source: arXiv

All in all, the study found a gender bias in LLMs: male roles and gender-neutral terms performed better than female roles.

The data collected above suggest that these models may reinforce societal biases, raising concerns about how they are programmed and trained. The study serves as a starting point for future investigation into gender roles in AI, which is expected to consider larger models with more safeguards against bias.