
Looks like Google unwittingly did a huge favour for India. The government recently put the shackles on India’s AI growth by asking companies working on generative AI, and other AI models, to seek explicit permission from the government before deploying their products.

Coincidentally, the AI advisory comes within weeks of the Google Gemini fiasco, in which Gemini was found spewing inappropriate content, including a biased response about the country’s Prime Minister Narendra Modi. “These are direct violations of Rule 3(1)(b) of the Intermediary Rules (IT Rules) of the IT Act and violations of several provisions of the Criminal Code,” said IT minister Rajeev Chandrasekhar.

The outcome: The government announcement drew sharp criticism from AI startup founders and developer communities across countries, pointing to this form of regulation as a reason for other countries to speed ahead in AI development.

“Why is that for such a transformative tech like AI, there are no guardrails between what is in the lab and what goes out to the public,” said Chandrasekhar, on the new advisory. “If you do not comply with it, at some point, there will be a law and legislation that (will) make it difficult for you not to do it.”

Pratik Desai, CEO and founder of Kissan AI, which is working to transform agriculture using AI, expressed his disapproval of the new rule. “I was such a fool thinking I will work on bringing GenAI to Indian Agriculture from SF. We were training a multimodal low cost, pest and disease model, and were so excited about it. This is terrible and demotivating after working 4 years full-time bringing AI to this domain in India,” Desai posted on X.

Source: X

“Bad move by India,” chimed in IIT Madras alumnus and Perplexity AI founder Aravind Srinivas.

“India just kissed its future goodbye!” wrote Abacus AI chief Bindu Reddy, saying this is how monopolies thrive, countries decay and consumers suffer. “Sadly, India is already dominated by monopolies, nepotism and bureaucracy and this new rule just made it far worse.”

As the outcry spread, Chandrasekhar quickly tweeted details of the latest advisory to prevent further uproar. “The advisory is aimed at significant platforms, and permission seeking from MeitY is only for large platforms, and will not apply to startups,” he said.

The advisory targets untested AI platforms, and he believes that disclosing a platform as untested can protect it from being sued by consumers. Interestingly, just before this development, Google apologised to the Indian government for Gemini’s results on PM Modi, stating, “Sorry, we are unreliable.”

Lacks clarity

While the AI advisory safeguards AI startups, the definition of ‘large platforms’ remains unclear. Whether parameters such as funding size, revenue or employee count qualify a company as a significant platform is unknown. It is also unclear what will happen to big-tech conglomerates working with Indian AI startups.

Of late, Indian AI startups building Indic language models have attracted promising investments from big-tech companies. Sarvam AI, an Indian generative AI startup building Indic LLMs that raised $41M from prominent VC players, recently announced a partnership with Microsoft to bring its Indic voice LLM to Azure.

Even Perplexity, the latest AI-answer engine, has confirmed its partnership with Sarvam.

Founder of AI startup Vaayushop, Atul Mehra, has called the move a “huge opportunity in disguise”, pointing at the need for local AI stacks, datasets and GPUs.

Source: X

There is No Perfect LLM Yet

The advisory also does not clarify what limitations will apply to companies labelling their models as ‘under testing’. Since no LLM is ever free of hallucinations, a trait OpenAI CEO Sam Altman refers to as a ‘feature’ rather than a ‘bug’, the advisory would in effect never permit a large company to shake off the testing label. This further complicates the operation of large AI models.

Source: X

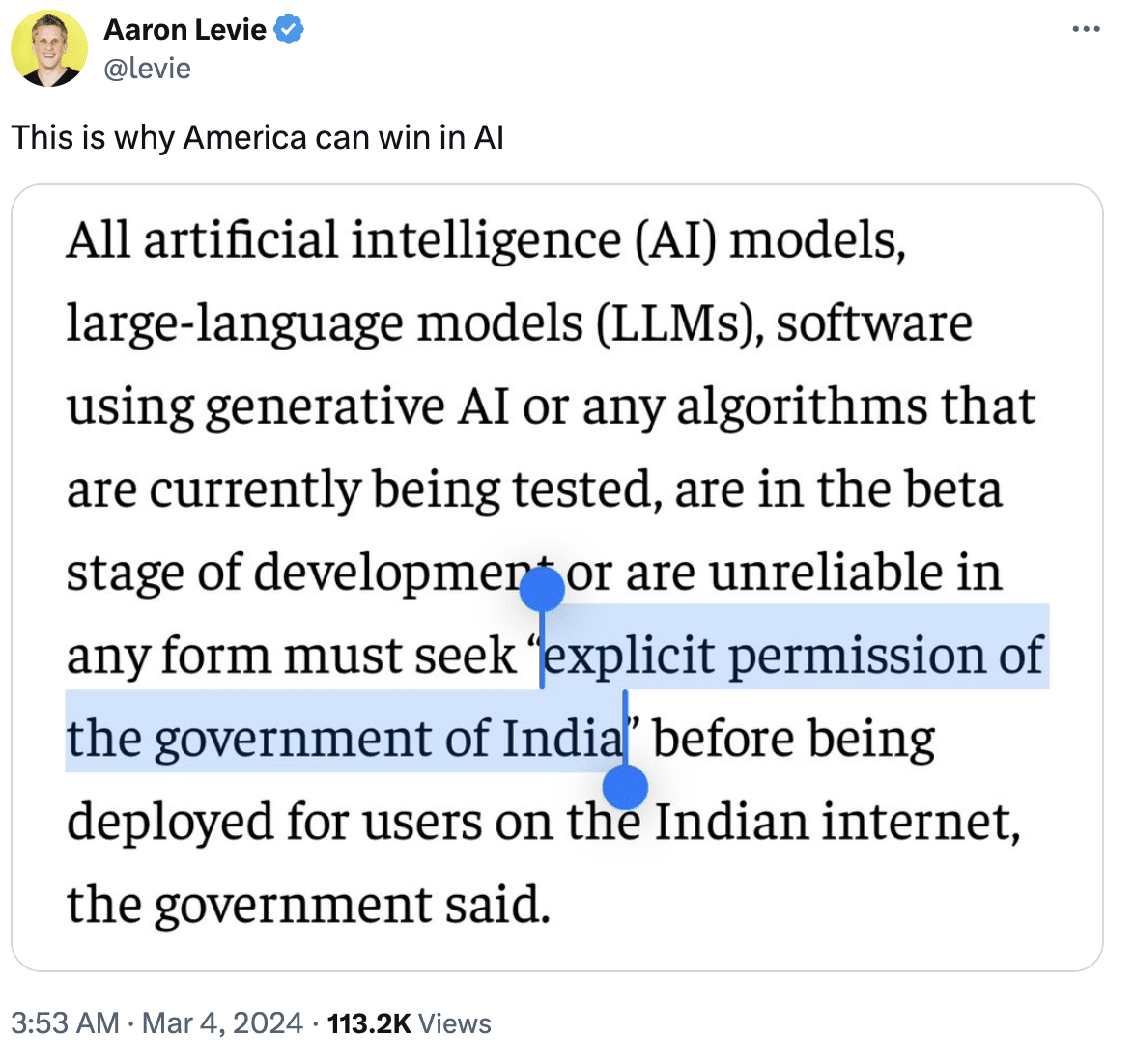

Countries Push Unhinged

Source: X

The ongoing debate on AI regulation is nothing new, with the US Senate having already grilled big-tech leaders such as Altman. Interestingly, when talking about regulating companies, Altman made the same point that Chandrasekhar tweeted.

He believes that regulation should apply only to big tech companies and not startups. However, he also said that regulation should be designed so that it does not slow innovation or impede the positive economic benefits that could flow from AI.

While the US has been the OG of AI development, it is also somewhat marred by regulatory controversies, the race for AGI and big tech leaders fighting each other. Other countries, however, are moving quickly to promote AI skill development. Recently, Tan Wu Meng, MP for Jurong, Singapore, gave a speech on AI proposals for supporting skill development among Singaporeans.

“We can’t hide from these changes. No country or economy can hide from these changes in the world with AI. Even if an entire country tries to prevent AI from entering its borders, other economies will do so; your competitors will do so. So, we have to accept this world, as it is the way the world is going to be, and look after, support, empower, and uplift our people,” said Tan.

Irrespective of the furore over the advisory, India’s lead in the AI and semiconductor space remains unparalleled. In the past two years, the country received investment proposals of over ₹2.50 lakh crore in the semiconductor space, with Tata Group and Taiwan’s PSMC collaborating to build India’s first semiconductor fab in Dholera, Gujarat for ₹91,000 crore.

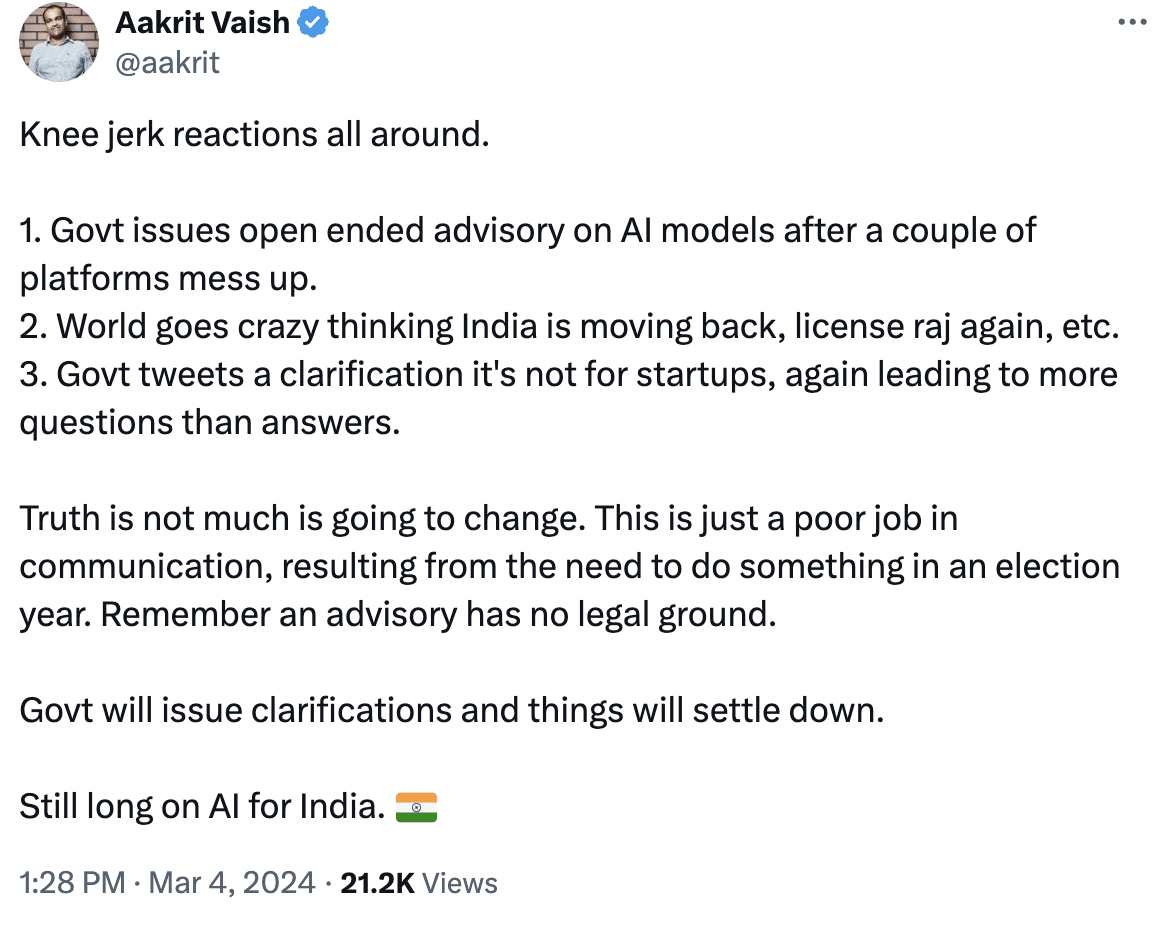

Aakrit Vaish, co-founder and CEO of conversational AI startup Haptik, summed up the episode in a recent post, noting that an advisory has no legal standing and predicting that the government will issue clarifications and things will settle down.

Source: X