Toronto-based Cohere, a leading provider of enterprise-grade AI solutions, recently introduced Command R+, a scalable LLM designed to excel at real-world business applications.

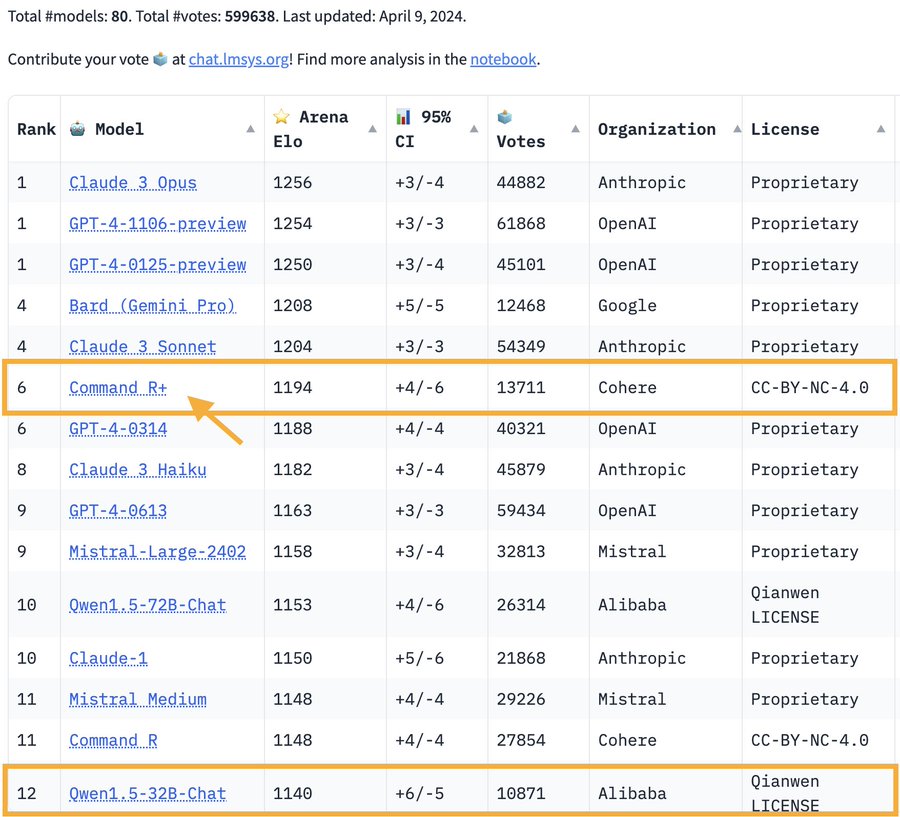

According to the latest Chatbot Arena results, Cohere’s Command R+ has climbed to sixth place, matching GPT-4-0314 on the strength of more than 13,000 human votes. “It’s undoubtedly the best open model on the leaderboard now.”

Source: Twitter

Building upon the strengths of its predecessor, Command R, the new model offers advanced retrieval augmented generation (RAG) capabilities, improved performance, and multilingual support.

RAG combines the strengths of retrieval-based and generative models. The retrieval component finds and extracts information from a large corpus of sources such as databases, articles, or websites, while the generative component produces coherent, context-aware text. By combining the two, RAG generates responses that are more informative and better grounded in the source material.
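The retrieve-then-generate pattern can be sketched in a few lines of Python. This is a toy illustration only, not Cohere's implementation: the `DOCUMENTS` corpus, the word-overlap scoring, and the `retrieve` and `build_prompt` helpers are all assumptions made for the example, and the final generation step is left to whichever LLM is being used.

```python
import re

# Hypothetical mini-corpus; a real system would query a database or search index.
DOCUMENTS = {
    "doc1": "Command R+ features a 128k-token context window.",
    "doc2": "Command R+ supports 10 languages including French and Japanese.",
    "doc3": "Retrieval augmented generation grounds answers in retrieved sources.",
}


def tokenize(text):
    """Lowercase the text and split it into simple word tokens."""
    return re.findall(r"[a-z0-9+]+", text.lower())


def retrieve(query, documents, top_k=2):
    """Retrieval step: rank documents by how many tokens they share with the query.

    Word overlap is a stand-in for real retrieval (e.g. embeddings or BM25).
    """
    query_tokens = set(tokenize(query))
    scores = {
        doc_id: len(query_tokens & set(tokenize(text)))
        for doc_id, text in documents.items()
    }
    ranked = sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))
    return [doc_id for doc_id in ranked[:top_k] if scores[doc_id] > 0]


def build_prompt(query, doc_ids, documents):
    """Generation step input: put the retrieved passages, tagged by id, before the question."""
    context = "\n".join(f"[{doc_id}] {documents[doc_id]}" for doc_id in doc_ids)
    return (
        "Answer using only the sources below and cite them by id.\n"
        f"{context}\n"
        f"Question: {query}"
    )


question = "How large is the context window?"
sources = retrieve(question, DOCUMENTS)
prompt = build_prompt(question, sources, DOCUMENTS)
# `prompt` would now be sent to a generative model of your choice.
```

Tagging each passage with an id is what lets a model point back to its sources in the answer.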

Command R+, which features a 128k-token context window, is optimised for advanced RAG to provide business-ready solutions. The new model improves response accuracy and provides in-line source citations that mitigate hallucinations, helping enterprises scale AI across business functions such as finance, HR, sales, marketing, and customer support in a range of sectors.

It also offers support for 10 key languages of global business: English, French, Spanish, Italian, German, Portuguese, Japanese, Korean, Arabic, and Chinese.

Tool Use Capabilities

Command R+ comes with tool use capabilities that are accessible through Cohere’s API and LangChain. These can help automate complex business workflows, such as updating CRM tasks, activities, and records.

Multi-step tool use, a new feature in Command R+, enables the model to combine multiple tools over multiple steps to accomplish complex tasks. Command R+ can also self-correct when a tool call fails, for example because of a bug or malfunction in the tool. The model can then make repeated attempts to complete the task, which raises the likelihood of success.
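The loop behind multi-step tool use with self-correction can be sketched as follows. Everything here is hypothetical: `search_crm` and `update_record` are made-up CRM tools, and `stub_model` is a scripted stand-in for the LLM that decides the next call. It does not use the Cohere or LangChain APIs; it only shows the control flow of calling a tool, feeding the result or error back, and trying again.

```python
def search_crm(name):
    """Hypothetical tool: look up a contact id by exact name."""
    contacts = {"Ada Lovelace": 42}
    if name not in contacts:
        raise KeyError(f"no contact named {name!r}")
    return contacts[name]


def update_record(contact_id, status):
    """Hypothetical tool: pretend to update a CRM record."""
    return f"contact {contact_id} set to {status}"


TOOLS = {"search_crm": search_crm, "update_record": update_record}


def stub_model(history):
    """Scripted stand-in for the LLM: picks the next tool call from the history so far."""
    if not history:
        # First attempt uses the wrong capitalisation, so the tool will fail.
        return {"tool": "search_crm", "args": {"name": "ada lovelace"}}
    last = history[-1]
    if not last["ok"]:
        # Self-correction: the error was fed back, so retry with a fixed argument.
        return {"tool": "search_crm", "args": {"name": "Ada Lovelace"}}
    if last["call"]["tool"] == "search_crm":
        # Second step: use the output of the first tool as input to the next one.
        return {
            "tool": "update_record",
            "args": {"contact_id": last["output"], "status": "contacted"},
        }
    return None  # Task complete.


def run_agent(model, tools, max_steps=5):
    """Run tool calls until the model stops or the step budget is spent."""
    history = []
    for _ in range(max_steps):
        call = model(history)
        if call is None:
            break
        try:
            output = tools[call["tool"]](**call["args"])
            history.append({"call": call, "ok": True, "output": output})
        except Exception as exc:  # Record the failure so the model can react to it.
            history.append({"call": call, "ok": False, "output": str(exc)})
    return history


history = run_agent(stub_model, TOOLS)
```

The `max_steps` cap is a design choice for the sketch: it keeps a model that never recovers from retrying forever.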

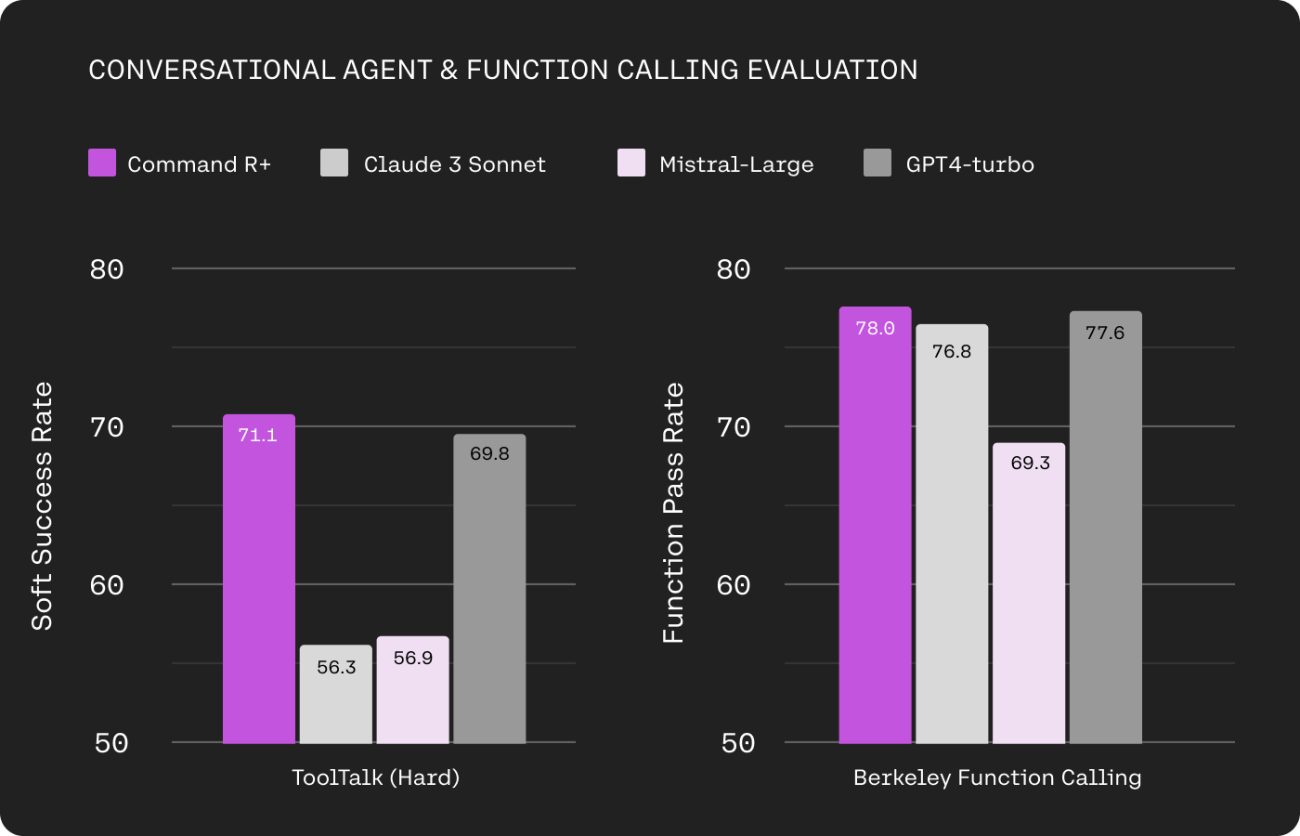

Image: Cohere

When evaluated on conversational tool-use and single-turn function-calling capabilities, Command R+ outperforms OpenAI’s GPT-4 Turbo, Anthropic’s Claude 3 Sonnet, and Mistral Large on key enterprise AI benchmarks such as Microsoft’s ToolTalk (Hard) and Berkeley’s Function Calling Leaderboard.

Rising Competition in the Enterprise LLM Space

We’re living in the era of GenAI, and LLMs are the dynamite behind the generative AI boom. All big tech companies are either incubating their own AI foundation models or looking to back the popular ones.

Various open source models like DBRX, Llama 2, Falcon 180B, and DeciLM 6B help level the playing field by giving any enterprise access to powerful GenAI tools that they can build applications upon or modify for their specific uses.

However, even then, those deploying open source AI models still need cloud servers for storing data and running inference. Any enterprise signing up for Microsoft Azure or AWS would rather use one of the AI models offered by the cloud provider than try to build or run its own. That is why cloud hyperscalers are the key players in the generative AI race.

This also explains the escalating competition among the three cloud computing leaders, Amazon, Microsoft, and Google, to collaborate with, benefit from, and provide their customers with the latest cutting-edge generative AI technologies.

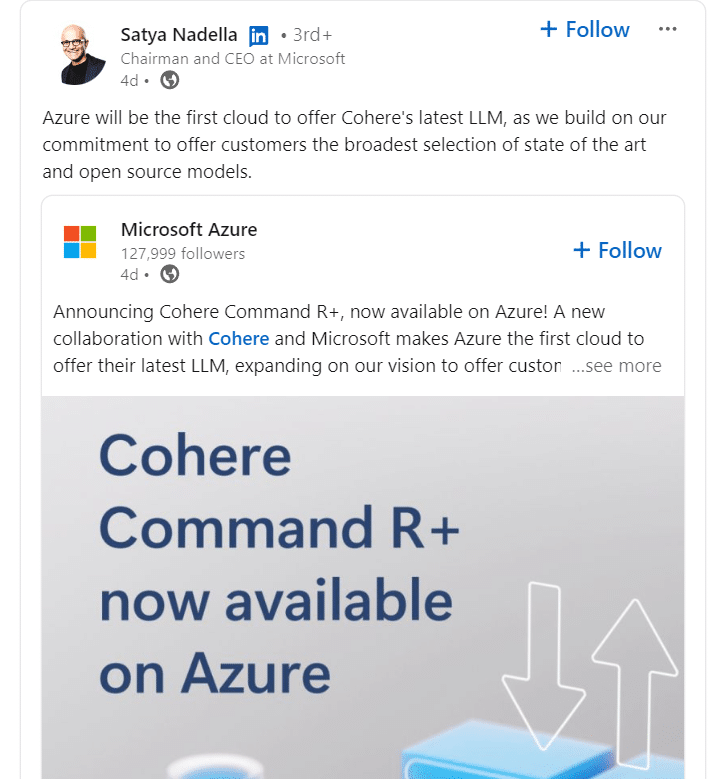

Source: LinkedIn

Microsoft CEO Satya Nadella announced on LinkedIn that Azure would be the first cloud to offer Cohere’s latest Command R+ LLM, which is also available on Amazon SageMaker. The new model will soon be accessible on Oracle Cloud Infrastructure (OCI) and other cloud platforms. However, no collaboration with Google Cloud has been announced yet.

OpenAI is still dominating, but others are fast catching up

Source: Reddit

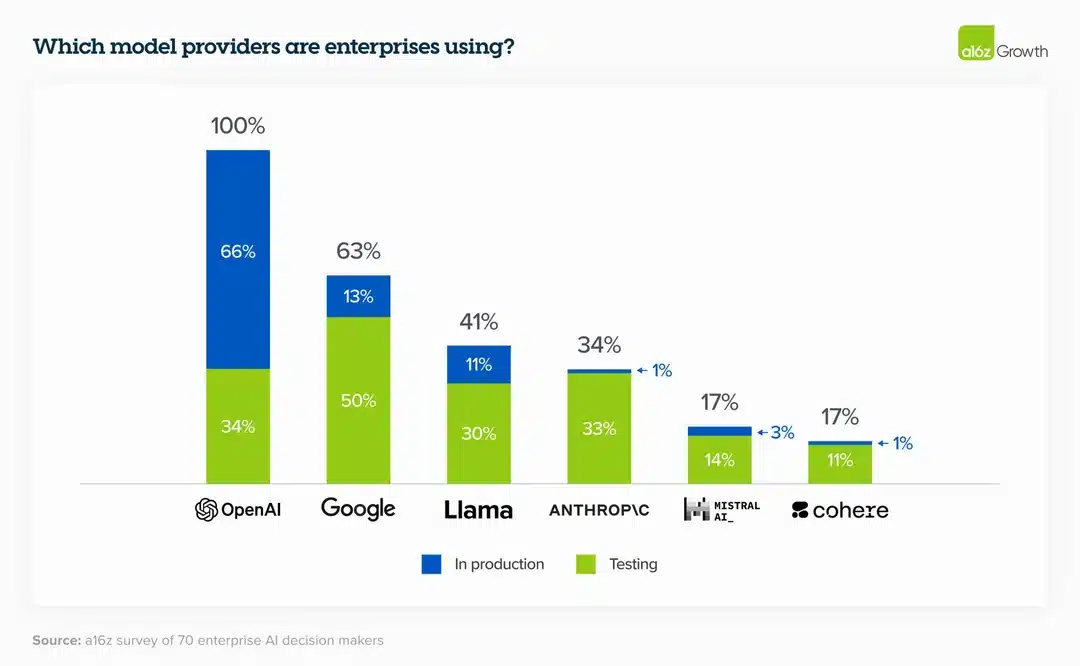

Although OpenAI still dominates the LLM space, alternatives from Google, Meta, Anthropic, Mistral AI, and Cohere are booming and increasingly being adopted by cloud providers and enterprises alike.

With advanced capabilities and competitive pricing, Cohere has the potential to emerge as a leader in the enterprise AI market. Command R+ costs $3 per million input tokens and $15 per million output tokens. That is on par with Claude 3 Sonnet, whereas the latest OpenAI GPT-4 Turbo model costs $10 per million input tokens and $30 per million output tokens.
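To see what those per-million-token rates mean in practice, here is a small cost calculation. The prices are the ones quoted above; the workload of 2 million input tokens and 500,000 output tokens is a made-up example, and the `PRICES_PER_MILLION` table and `workload_cost` helper are names invented for this sketch.

```python
# USD per one million tokens, as quoted in this article: (input, output).
PRICES_PER_MILLION = {
    "Command R+": (3.0, 15.0),
    "GPT-4 Turbo": (10.0, 30.0),
}


def workload_cost(model, input_tokens, output_tokens):
    """Return the cost in USD of a workload at the model's per-million-token rates."""
    input_price, output_price = PRICES_PER_MILLION[model]
    return (
        input_tokens / 1_000_000 * input_price
        + output_tokens / 1_000_000 * output_price
    )


# Hypothetical workload: 2M input tokens and 0.5M output tokens.
for model in PRICES_PER_MILLION:
    cost = workload_cost(model, 2_000_000, 500_000)
    print(f"{model}: ${cost:.2f}")
```

For this workload, Command R+ comes to $13.50 (2 × $3 + 0.5 × $15) and GPT-4 Turbo to $35.00 (2 × $10 + 0.5 × $30).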

With the entrance of new competitors in the current steady march of AI innovation, it’s time for OpenAI to move quickly and release GPT-5 if it wants to keep its lead in the AI field.