
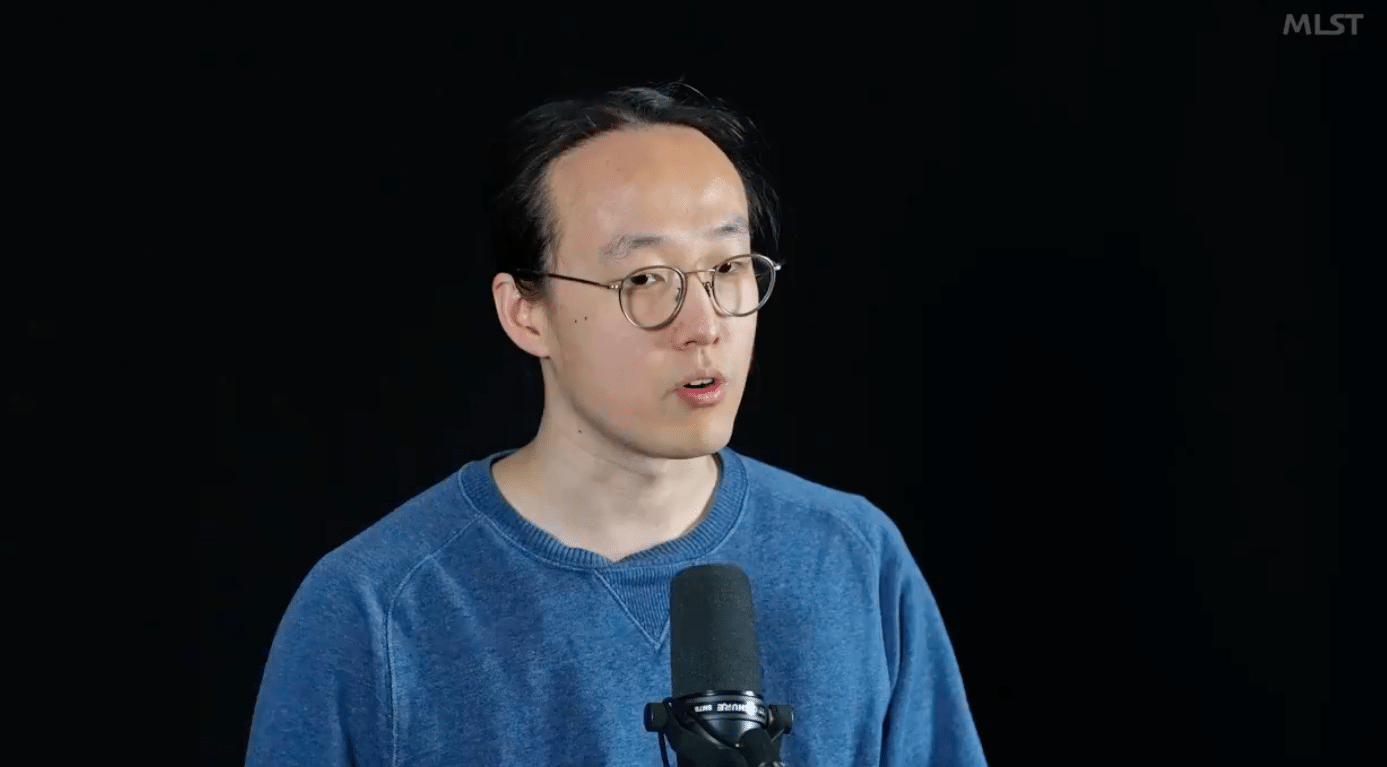

Google DeepMind AI researcher Minqi Jiang said the next frontier of AI is the move from systems that answer questions to systems that ask them. “The next frontier of AI is really how we design systems that don’t just answer questions, but they actually are the ones that start to ask the questions,” he said in a recent podcast.

“I think once we can have AI systems that start to ask interesting questions, um, that’s when we start to get closer to, I think, traditional notions of what a strong AGI might be,” he added.

Speaking about current AI models, from ChatGPT to Stable Diffusion, he said that they are very impressive but, in the end, they only answer questions. “Ultimately, what they do is they’re in the Q&A business. So, I basically ask these systems a question or give them a command, and they give me an answer.”

Jiang recently co-authored a paper, ‘Reward-Free Curricula for Training Robust World Models’, which addresses the problem of generating curricula for training robust world models in a reward-free setting.

There is growing interest in developing generally capable agents that can adapt to new tasks without further training in the environment. Learning world models from reward-free exploration is a promising route to this: the agent explores different environments or scenarios without any reward signal, and the world model it learns gradually builds a robust understanding of the world’s underlying dynamics.
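To make “reward-free exploration” concrete, here is a minimal toy sketch, not the paper’s method. It assumes a hypothetical five-state chain world in which each environment instance differs only in a `slip` probability, and it uses a simple count-based transition table in place of the neural world models used in practice. The agent takes random actions, never sees a reward, and still ends up with an estimate of the dynamics P(s′ | s, a). All names here (`step`, `learn_world_model`, `slip`) are illustrative.

```python
import random
from collections import defaultdict


def step(state, action, slip, n_states, rng):
    """Hypothetical dynamics: move left (0) or right (1); with prob `slip`, stay put."""
    if rng.random() < slip:
        return state
    nxt = state + (1 if action == 1 else -1)
    return min(max(nxt, 0), n_states - 1)  # walls at both ends of the chain


def learn_world_model(slip, n_states=5, n_steps=5000, seed=0):
    """Estimate P(s' | s, a) from transition counts gathered without any reward."""
    rng = random.Random(seed)
    counts = defaultdict(lambda: defaultdict(int))
    state = 0
    for _ in range(n_steps):
        action = rng.randrange(2)  # reward-free: explore with uniformly random actions
        nxt = step(state, action, slip, n_states, rng)
        counts[(state, action)][nxt] += 1
        state = nxt
    # Normalise counts into a transition distribution for each (state, action) pair.
    model = {}
    for sa, nxt_counts in counts.items():
        total = sum(nxt_counts.values())
        model[sa] = {s: c / total for s, c in nxt_counts.items()}
    return model


model = learn_world_model(slip=0.2)
print(model[(2, 1)])  # probabilities close to 0.8 for state 3 and 0.2 for state 2
```

Running `learn_world_model` with different `slip` values gives a model per environment instance; a robust world model, in the article’s sense, would instead need to be accurate across all of them at once.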

The authors define robustness in terms of minimax regret over all instantiations of the environment. They show that minimax regret can be linked to minimising the world model’s maximum error across those environment instances.
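As a rough illustration of the two ideas in the paragraph above, the sketch below uses made-up numbers, not results from the paper. The regret of a policy in an environment instance is the gap between the best achievable return in that instance and the policy’s return; the minimax-regret policy is the one whose worst-case regret across instances is smallest. The second function shows a simple greedy curriculum rule, assumed here for illustration only: explore next in whichever instance the world model currently has the largest estimated error. The paper’s actual algorithm and how it estimates error are not described in this article, and every name and value below is hypothetical.

```python
# Hypothetical expected returns of each candidate policy (outer keys)
# in each environment instance (inner keys). Illustrative values only.
values = {
    "policy_a": {"env_1": 9.0, "env_2": 2.0, "env_3": 6.0},
    "policy_b": {"env_1": 7.0, "env_2": 5.0, "env_3": 5.0},
    "policy_c": {"env_1": 4.0, "env_2": 6.0, "env_3": 3.0},
}


def regrets(values):
    """Regret = best return achievable in an instance minus the policy's return there."""
    envs = list(next(iter(values.values())).keys())
    best = {e: max(v[e] for v in values.values()) for e in envs}
    return {p: {e: best[e] - v[e] for e in envs} for p, v in values.items()}


def minimax_regret_policy(values):
    """Pick the policy whose worst-case regret over all instances is smallest."""
    r = regrets(values)
    return min(r, key=lambda p: max(r[p].values()))


def next_env_to_explore(model_error):
    """Greedy curriculum (an assumption): explore where the world model is worst."""
    return max(model_error, key=model_error.get)


# Hypothetical per-instance world-model error estimates.
errors = {"env_1": 0.05, "env_2": 0.31, "env_3": 0.12}

print(minimax_regret_policy(values))  # policy_b (worst-case regret 2.0)
print(next_env_to_explore(errors))    # env_2
```

Here `policy_a` is best on average but suffers a regret of 4.0 in `env_2`, while `policy_b` never falls more than 2.0 behind the best policy in any instance, which is the sense of robustness that minimax regret captures.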