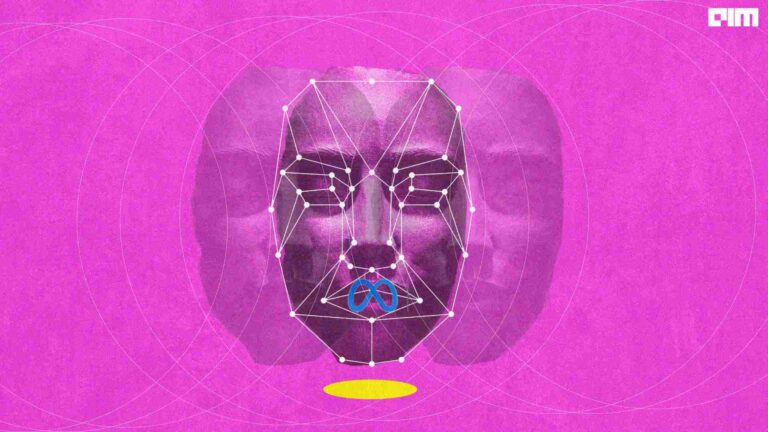

Do AI programs require oversight? A fresh debate has begun following the release of a report by the US Government Accountability Office. It reveals a total lack of oversight by federal agencies over their use of facial recognition software purchased from third-party vendors.

The report analyses in detail the software that federal agencies use and the datasets it is trained on. Twenty agencies admitted to using facial recognition software in some form without oversight, relying primarily on image databases maintained by the Department of Defense and the Department of Homeland Security.

These technologies have recently been used to identify people on a massive scale, such as protesters from the Black Lives Matter movement last year and the rioters from the Capitol Hill attack this year. The report mentions that the agencies either had no idea which software they were using or were not familiar with it. This magnifies the privacy and security risks inherent in the use of such technologies.

Need for accountability and oversight

Facial recognition systems are not reliably accurate in the real world. They can be accurate in controlled conditions, but identifying people from street-camera footage is far harder. The software is known to make mistakes and identify the wrong people, and it has proved more unreliable and racially biased than feared. For instance, Amazon’s Rekognition once identified Oprah Winfrey as a man. Another facial recognition software wrongly matched the images of 26 US Congressmen with criminal mugshots.

A report by the National Institute of Standards and Technology found empirical evidence that most facial recognition algorithms exhibit demographic differentials, meaning that their accuracy varies with race, gender, and age. An analysis of three commercial facial recognition programs showed an error rate of 34 percent for dark-skinned women, 49 times higher than that of middle-aged white men.

Yet another study revealed that Asian and African people are about a hundred times more likely to be misidentified than their white counterparts. Native Americans had the highest rate of false positives among all ethnicities.
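Comparisons like "a hundred times more likely to be misidentified" rest on a per-group false match rate: of all comparisons between two different people, how many did the system wrongly call a match? The following is a minimal sketch of that calculation. The function name, the group labels and the trial outcomes are made up for illustration and are not taken from any of the studies cited.

```python
from collections import defaultdict

def false_match_rate_by_group(trials):
    """trials: iterable of (group, is_same_person, predicted_match) tuples."""
    impostor_trials = defaultdict(int)  # comparisons between two different people
    false_matches = defaultdict(int)    # ...that were wrongly reported as a match
    for group, is_same_person, predicted_match in trials:
        if not is_same_person:
            impostor_trials[group] += 1
            if predicted_match:
                false_matches[group] += 1
    return {g: false_matches[g] / n for g, n in impostor_trials.items()}

# Made-up outcomes: 1,000 different-person comparisons per group.
trials = (
    [("group_a", False, True)] * 1 + [("group_a", False, False)] * 999
    + [("group_b", False, True)] * 10 + [("group_b", False, False)] * 990
)

rates = false_match_rate_by_group(trials)
ratio = rates["group_b"] / rates["group_a"]
print(rates, round(ratio, 2))
```

In this toy data the second group is falsely matched about ten times as often as the first, even though both error rates look small in absolute terms.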

Misidentifications by such software could lead to wrongful arrests, false suspicion and threats. This underlines the need for human verification before any action is taken.

Why are algorithms misidentifying?

Facial recognition involves four steps: facial detection, facial analysis, conversion of the image to data, and identification of a match. The slightest mistake at any step can lead to significant errors.
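The last two steps, turning a face into data and looking for a match, can be pictured as comparing lists of numbers. The sketch below assumes each face has already been reduced to a short numeric encoding (real systems use far longer ones) and that a match is decided by cosine similarity against a fixed threshold. The gallery names, the encodings and the 0.8 threshold are hypothetical, and commercial products may work differently.

```python
import math

def cosine_similarity(a, b):
    """Similarity of two encodings: 1.0 means they point in the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def identify(probe, gallery, threshold=0.8):
    """Return (name, score) for the best match, or (None, score) if it is too weak."""
    best_name, best_score = None, -1.0
    for name, encoding in gallery.items():
        score = cosine_similarity(probe, encoding)
        if score > best_score:
            best_name, best_score = name, score
    if best_score < threshold:
        return None, best_score
    return best_name, best_score

# Hypothetical three-number encodings of two enrolled faces.
gallery = {
    "person_a": [0.9, 0.1, 0.3],
    "person_b": [0.1, 0.8, 0.5],
}
probe = [0.85, 0.15, 0.35]  # encoding of the face to be identified

name, score = identify(probe, gallery)
print(name, round(score, 3))
```

The threshold is where the trade-off sits: set it too low and strangers are reported as matches, set it too high and genuine matches are missed. Scores close to the threshold are the cases where human verification matters most.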

Facial recognition software runs on Deep Convolutional Neural Networks (DCNNs). The training process of any deep learning algorithm is key to its performance and to the biases it develops. Training follows the usual supervised learning pattern: the faces in the dataset are labelled, and the network's predictions are scored against those labels with a loss function. The network is trained like a standard classification network and then fine-tuned to output encodings.
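To make the role of the loss function concrete, here is a minimal sketch of how one labelled face could be scored during classification training. It assumes the common softmax cross-entropy loss, which the article does not name, and the raw network scores (`logits`) are invented for illustration.

```python
import math

def softmax(logits):
    """Turn raw network scores into probabilities that sum to 1."""
    peak = max(logits)  # subtracting the max keeps exp() numerically stable
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(logits, true_label):
    """Loss for one labelled example: small when the true identity gets high probability."""
    probs = softmax(logits)
    return -math.log(probs[true_label])

# Invented scores for one training image over three known identities.
logits = [2.0, 0.5, -1.0]

loss_correct = cross_entropy(logits, true_label=0)  # label agrees with the top score
loss_wrong = cross_entropy(logits, true_label=2)    # label is the lowest-scored identity
print(round(loss_correct, 3), round(loss_wrong, 3))
```

Training adjusts the network's weights to push this number down across the whole dataset, so whatever the dataset over- or under-represents is reflected directly in what the network learns.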

The training process is also the DCNN's biggest challenge and drawback. Such deep learning requires large datasets with enough variation for the network to generalise to unseen samples. In practice, most datasets are biased and include more images of Caucasian people.
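A simple first check for this kind of imbalance is to count how much of the training set each demographic group makes up. The sketch below assumes every image carries a group label. The labels, the counts and the 20 percent cut-off are hypothetical.

```python
from collections import Counter

def group_shares(labels):
    """Fraction of the dataset belonging to each group."""
    counts = Counter(labels)
    total = sum(counts.values())
    return {group: count / total for group, count in counts.items()}

def underrepresented(labels, min_share=0.2):
    """Groups whose share of the dataset falls below min_share."""
    return sorted(g for g, share in group_shares(labels).items() if share < min_share)

# Hypothetical group label for each of 100 training images.
labels = ["group_a"] * 65 + ["group_b"] * 25 + ["group_c"] * 10

shares = group_shares(labels)
flagged = underrepresented(labels)
print(shares, flagged)
```

A balanced count does not by itself guarantee equal accuracy, but a heavily skewed one is an early warning that error rates may differ between groups.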

Another challenge arises when a person's appearance changes. Several factors can cause this:

- Illumination: The light falling on a person affects the intensity of colour in each pixel, and therefore the software’s results. Two images of the same person in different light can differ more from each other than images of two different people.

- Expressions and facial blockers: A person's expression, facial hair, or anything else that hides or changes the face can render the algorithm ineffective.

- Pose variation: The algorithm struggles to identify a person whose image is captured in a different pose or from a completely different angle.
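The illumination point above can be reproduced with a toy example. The sketch below treats an image as a flat list of grey-level pixel values, dims one image, and compares raw pixel distances. It then applies per-image standardisation (subtract the mean, divide by the standard deviation), a common preprocessing step that is used here as an assumption rather than as something the article prescribes. All pixel values are invented.

```python
import math

def distance(a, b):
    """Euclidean distance between two images given as flat pixel lists."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def standardise(pixels):
    """Rescale to zero mean and unit variance (assumes the image is not flat)."""
    mean = sum(pixels) / len(pixels)
    std = math.sqrt(sum((p - mean) ** 2 for p in pixels) / len(pixels))
    return [(p - mean) / std for p in pixels]

# Invented six-pixel "images".
person_a = [200, 180, 90, 60, 150, 170]
person_a_dim = [0.4 * p for p in person_a]   # same face in much weaker light
person_b = [190, 170, 110, 80, 140, 180]     # a different face in similar light

raw_same = distance(person_a, person_a_dim)
raw_other = distance(person_a, person_b)
norm_same = distance(standardise(person_a), standardise(person_a_dim))
norm_other = distance(standardise(person_a), standardise(person_b))
print(round(raw_same, 1), round(raw_other, 1))
print(round(norm_same, 6), round(norm_other, 3))
```

On raw pixels the dimmed copy of the same face is further away than the different face. After standardisation the order flips. Real lighting changes are rarely a uniform dimming, so this only removes the simplest part of the problem.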

Creating a programme that generates templates of images and training the network on them can mitigate these issues, although including every pose variation of a face is a significant task.
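One way to read "creating templates" is data augmentation: generating altered copies of each training image so the network sees more lighting and orientation variety. The sketch below is that interpretation, not a description of any particular product. It represents a greyscale image as a list of pixel rows, and the function names and brightness factors are hypothetical.

```python
def flip_horizontal(image):
    """Mirror the image left to right."""
    return [list(reversed(row)) for row in image]

def scale_brightness(image, factor):
    """Multiply every pixel by factor, clipping to the valid 0-255 range."""
    return [[min(255, max(0, round(p * factor))) for p in row] for row in image]

def augment(image, brightness_factors=(0.6, 1.0, 1.4)):
    """Return every combination of orientation (original, mirrored) and brightness."""
    variants = []
    for base in (image, flip_horizontal(image)):
        for factor in brightness_factors:
            variants.append(scale_brightness(base, factor))
    return variants

# A tiny 2x3 greyscale "image" with invented pixel values.
image = [
    [10, 50, 200],
    [20, 60, 220],
]

variants = augment(image)
print(len(variants))
```

A mirror flip is only a crude stand-in for pose variation. Covering real changes in head angle would need images taken from different viewpoints, which is why including every pose remains a significant task.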

Recent developments

Recent developments in affordable, powerful Graphics Processing Units (GPUs) have shifted the primary research focus towards increasingly deep neural networks and the need to balance accuracy against time and resource constraints. Despite these developments, achieving state-of-the-art accuracy is still held back by the dependence on large databases.

To accelerate development of the technology, the focus should be on reducing the high computational cost of DCNNs and their dependence on large databases. Minimising training time also requires attention, as does network architecture that would benefit from the selectiveness of the programme. The US Government Accountability Office suggests that human oversight can reduce misidentification until perfection in facial recognition is achieved.