Every day, a large number of pictures are captured and shared on social networks, and a large percentage of them feature people-centric content. Facebook wants to make sure that you don’t have to make your friends take endless photos, posing and reposing at important occasions. The company is also helping you cut down the time you spend on Photoshop or similar image-correction apps by making its own photo-uploading platform more imaginative.

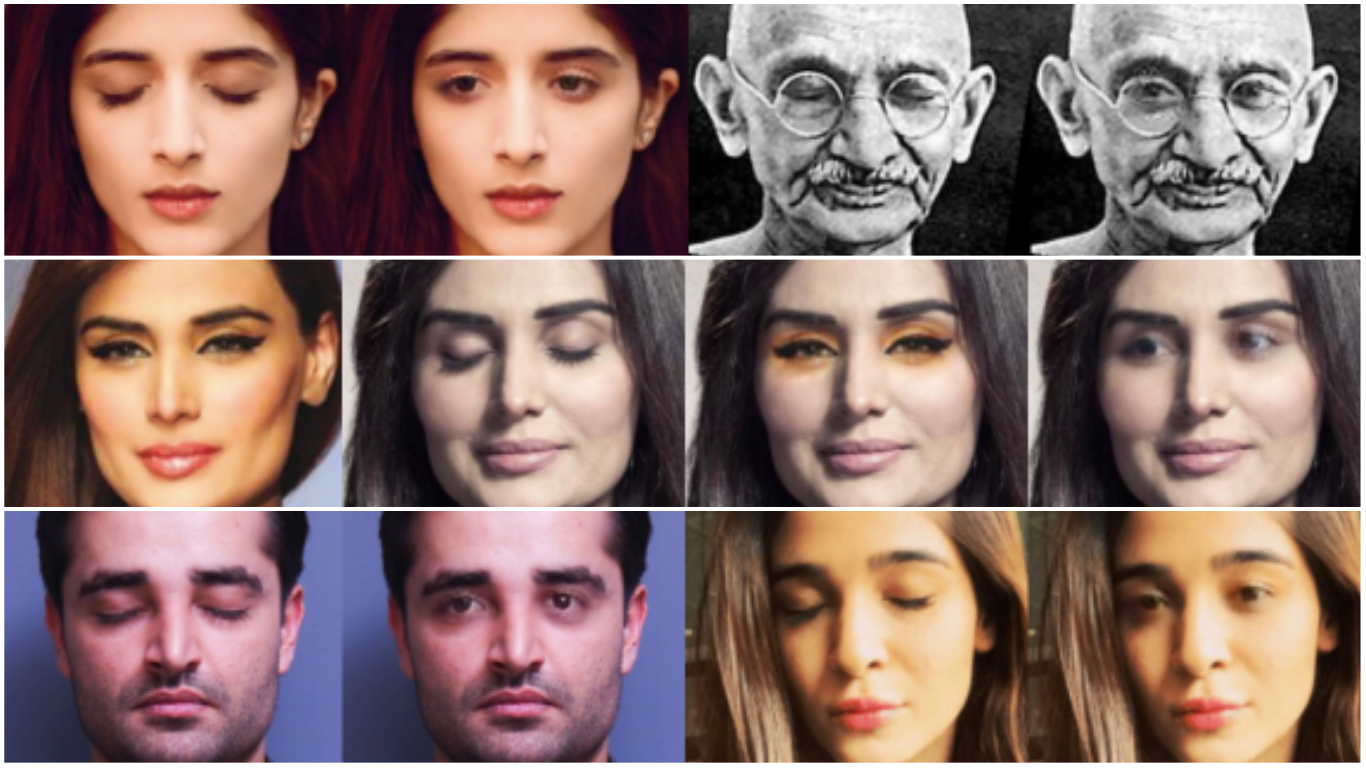

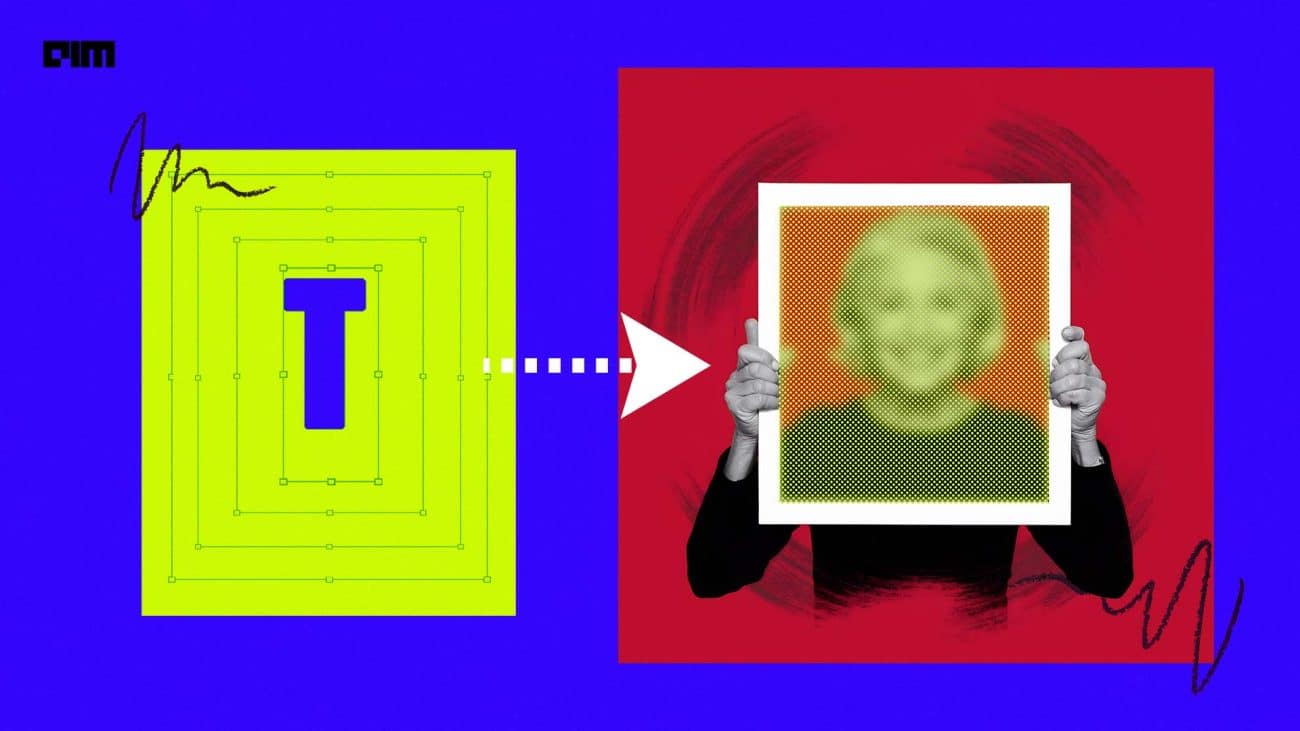

According to a recent paper published by Facebook, the Menlo Park-based social media giant is working on an “in-painting technology” that replaces closed eyes with open ones in a remarkably convincing manner. It does this using Exemplar Generative Adversarial Networks (ExGANs), a type of conditional GAN that utilises exemplar information to produce high-quality, personalised in-painting results.

The paper says, “We propose using exemplar information in the form of a reference image of the region to in-paint, or a perceptual code describing that object. Unlike previous conditional GAN formulations, this extra information can be inserted at multiple points within the adversarial network, thus increasing its descriptive power. We show that ExGANs can produce photo-realistic personalised in-painting results that are both perceptually and semantically plausible by applying them to the task of closed-to-open eye in-painting in natural pictures. A new benchmark dataset is also introduced for the task of eye in-painting for future comparisons.”

Basically, given photos of the target person taken with their eyes open, the GAN learns which eyes to paint onto that person, based on the shape, colour and other features of that particular person’s eyes.
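To make the idea of reference-based in-painting concrete, here is a minimal sketch in Python with NumPy. It is not the paper's architecture: the function names (`build_generator_input`, `toy_generator`, `composite`) are hypothetical, the "generator" is an untrained stand-in that simply copies the reference pixels, and the exemplar is fed in only at the input, whereas the paper says it can be inserted at multiple points in the network. The sketch only shows how a masked photo and a reference image of the same person might be combined, and how the in-painted region is blended back into the original.

```python
import numpy as np

def build_generator_input(image, mask, reference):
    """Stack the masked image, the mask and the reference exemplar channel-wise.

    image, reference: H x W x 3 arrays; mask: H x W x 1 array (1 = region to in-paint).
    """
    masked = image * (1.0 - mask)  # blank out the closed-eye region
    return np.concatenate([masked, mask, reference], axis=-1)

def toy_generator(gen_input):
    """Hypothetical stand-in for a trained generator: returns the reference channels."""
    return gen_input[..., -3:]

def composite(image, mask, generated):
    """Keep original pixels outside the mask and generated pixels inside it."""
    return image * (1.0 - mask) + generated * mask

# Toy data: a black 4x4 "photo", a white reference, and a 2x2 eye region to fill.
image = np.zeros((4, 4, 3))
reference = np.ones((4, 4, 3))
mask = np.zeros((4, 4, 1))
mask[1:3, 1:3, 0] = 1.0

gen_input = build_generator_input(image, mask, reference)
result = composite(image, mask, toy_generator(gen_input))
```

In a real system the stand-in generator would be a neural network trained adversarially against a discriminator, so the filled region would be synthesised to match the person's identity rather than copied from the reference.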

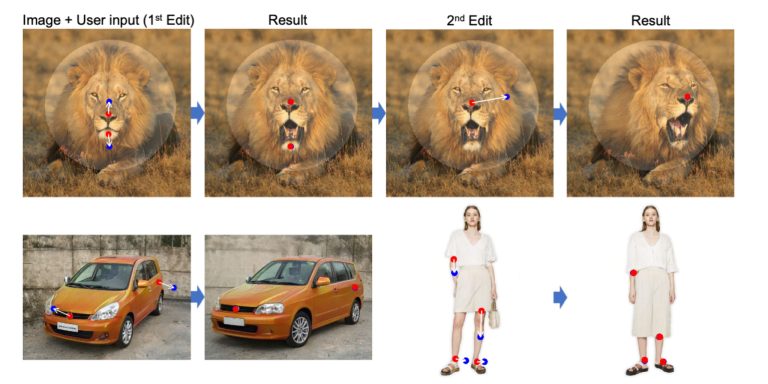

Brian Dolhansky and Cristian Canton Ferrer of Facebook, who created the technique, added in the paper, “…Because Exemplar GANs are a general framework, they can be extended to other tasks within computer vision, and even to other domains. In the future, we wish to try more combinations of reference-based and code-based exemplars, such as using a reference in the generator but a code in the discriminator.”

Last year, researchers at Facebook’s AI lab developed an expressive bot whose animations were controlled by an artificially intelligent algorithm. The algorithm was trained by having it watch hundreds of videos of Skype conversations, and it can now mimic the way humans adjust their expressions while talking.