This post continues our previous article about Alchemy, a benchmark environment for meta-reinforcement learning (meta-RL). DeepMind, together with University College London, has released it as open source alongside the paper Alchemy: A structured task distribution for meta-reinforcement learning by Jane X. Wang, Michael King, Nicolas Porcel, Zeb Kurth-Nelson, Tina Zhu, Charlie Deck, Peter Choy, Mary Cassin, Malcolm Reynolds, Francis Song, Gavin Buttimore, David P. Reichert, Neil Rabinowitz, Loic Matthey, Demis Hassabis, Alex Lerchner, and Matthew Botvinick.

Meta-learning, or learning to learn, requires a distribution of tasks that is both accessible (the structure of the tasks is fully known) and interesting (the tasks share structure of the kind found in the real world). Existing meta-RL benchmarks offered either interestingness or accessibility, but not both; Alchemy is designed to provide both. Alchemy is a single-player 3D video game whose environment is implemented in Unity. Here is a demonstration video of how Alchemy works.

Requirements

- Docker

- Python >= 3.6.1

- x86-64 CPU with SSE4.2 support

- Alchemy is fully supported on Linux but can be run on Windows via WSL.
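The requirements above can be checked from Python before installing anything. The sketch below is one possible way to do it: the function name `check_requirements` is hypothetical, and the SSE4.2 check assumes a Linux-style `/proc/cpuinfo` file (it reports `None` when that file cannot be read).

```python
import platform
import shutil
import sys


def check_requirements(cpuinfo_path="/proc/cpuinfo"):
    """Return a dict mapping each requirement to True, False, or None (unknown)."""
    results = {
        "python>=3.6.1": sys.version_info >= (3, 6, 1),
        "docker on PATH": shutil.which("docker") is not None,
        "x86-64 CPU": platform.machine().lower() in ("x86_64", "amd64"),
    }
    try:
        with open(cpuinfo_path) as f:
            # Linux lists CPU feature flags in this file; SSE4.2 appears as "sse4_2".
            results["SSE4.2"] = "sse4_2" in f.read()
    except OSError:
        # Assumption: no readable cpuinfo file means we cannot tell either way.
        results["SSE4.2"] = None
    return results


# Example usage:
# for name, ok in check_requirements().items():
#     print(f"{name}: {ok}")
```

This only checks that a `docker` executable is on the PATH; it does not confirm that the Docker daemon is running.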

Installation

The following installation procedure is for Ubuntu 20.04.

- Install Docker from here. To check that Docker is installed correctly and that the Alchemy image can be pulled and started, run:

docker run hello-world
docker run -d gcr.io/deepmind-environments/alchemy:v1.0.0

- Install Alchemy by cloning the git repository

!git clone https://github.com/deepmind/dm_alchemy.git
!pip install wheel
!pip install --upgrade setuptools
!pip install ./dm_alchemy

Getting Started with Alchemy

The first step is to create a 3D environment and observe it.

- Import all the required packages and modules.

import os
import matplotlib.pyplot as plt
import numpy as np
import seaborn as sns
import dm_alchemy
from dm_alchemy import io
from dm_alchemy import symbolic_alchemy
from dm_alchemy import symbolic_alchemy_bots
from dm_alchemy import symbolic_alchemy_trackers
from dm_alchemy import symbolic_alchemy_wrapper
from dm_alchemy.encode import chemistries_proto_conversion
from dm_alchemy.encode import symbolic_actions_proto_conversion
from dm_alchemy.encode import symbolic_actions_pb2
from dm_alchemy.types import stones_and_potions
from dm_alchemy.types import unity_python_conversion
from dm_alchemy.types import utils

- Create an environment.

# an image of size 240 x 200
width, height = 240, 200
# define the task, description can be found here:
# https://github.com/deepmind/dm_alchemy/blob/master/docs/index.md#tasks
level_name = 'alchemy/perceptual_mapping_randomized_with_rotation_and_random_bottleneck'
seed = 1023
# https://github.com/deepmind/dm_alchemy/blob/master/docs/index.md#configurable-environment-settings
settings = dm_alchemy.EnvironmentSettings(
    seed=seed, level_name=level_name, width=width, height=height)
env = dm_alchemy.load_from_docker(settings)

- Check the observations via env.observation_spec() whose description can be found here.

- Alchemy provides the agent with many actions, such as moving left, right, up and down. The full list can be found here or printed with env.action_spec().

- Start a new episode and plot it.

# plot the new episode
timestep = env.reset()
plt.figure()
plt.imshow(timestep.observation['RGB_INTERLEAVED'])
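The observation and action specs mentioned in the steps above can be long when printed raw. The helper below is a minimal sketch for summarising them; `describe_specs` is a hypothetical name, and it assumes that `env.observation_spec()` and `env.action_spec()` return a dictionary mapping names to spec objects with `shape` and `dtype` attributes, as is conventional for dm_env-style environments.

```python
def describe_specs(specs):
    """Return one 'name: shape=..., dtype=...' line per entry of a spec dict."""
    lines = []
    for name in sorted(specs):
        spec = specs[name]
        # getattr with a default keeps this working for specs without these fields.
        shape = getattr(spec, "shape", None)
        dtype = getattr(spec, "dtype", None)
        lines.append(f"{name}: shape={shape}, dtype={dtype}")
    return lines


# Example usage (needs a running environment):
# print("\n".join(describe_specs(env.observation_spec())))
# print("\n".join(describe_specs(env.action_spec())))
```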

- In the image above, the agent is far from the table, so let’s take some actions to get closer to it.

for _ in range(38):
    timestep = env.step({'MOVE_BACK_FORWARD': 1.0})
for _ in range(6):
    timestep = env.step({'LOOK_LEFT_RIGHT': -1.0})
for _ in range(3):
    timestep = env.step({'LOOK_DOWN_UP': 1.0})
plt.figure()
plt.imshow(timestep.observation['RGB_INTERLEAVED'])

Now we can see the potions of different colours, which can be used to transform stones and increase their value before placing them in the big white cauldron to collect a reward.

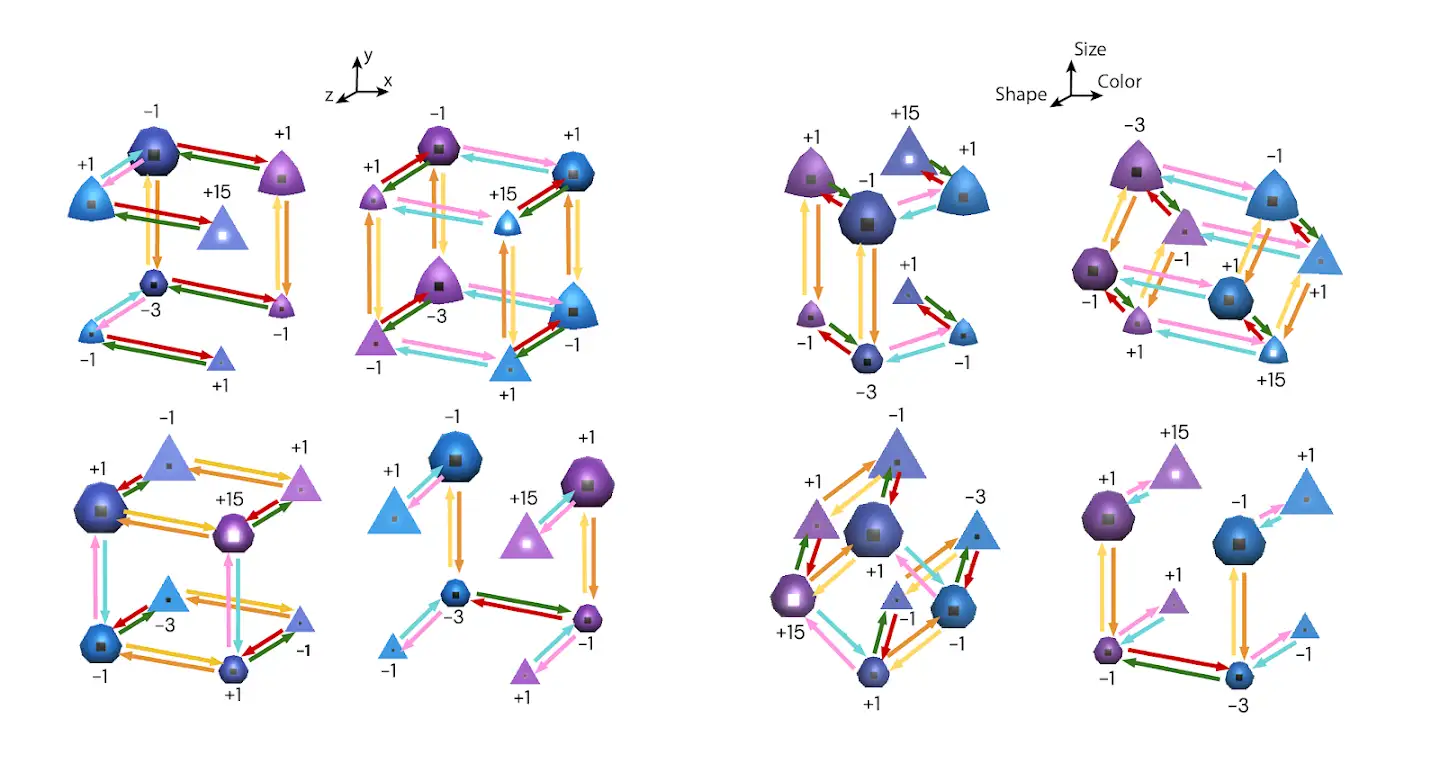
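The movement loops used to walk up to the table all repeat one action a fixed number of times, so they can be wrapped in a small helper. This is only a sketch: `repeat_action` is a hypothetical name, and the early exit assumes the timestep object exposes a dm_env-style `last()` method.

```python
def repeat_action(env, action, num_steps):
    """Apply the same action up to num_steps times and return the last timestep."""
    if num_steps < 1:
        raise ValueError("num_steps must be at least 1")
    timestep = None
    for _ in range(num_steps):
        timestep = env.step(action)
        # Assumption: timestep.last() is True on the final step of an episode.
        if timestep.last():
            break
    return timestep


# Example usage (needs a running environment):
# timestep = repeat_action(env, {'MOVE_BACK_FORWARD': 1.0}, 38)
# timestep = repeat_action(env, {'LOOK_LEFT_RIGHT': -1.0}, 6)
# timestep = repeat_action(env, {'LOOK_DOWN_UP': 1.0}, 3)
```

Stopping at the end of an episode avoids stepping an environment that has already finished, which would otherwise silently start a new episode in many dm_env-style implementations.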

Create a Symbolic Environment: The symbolic environment represents the challenge above in symbolic form, which removes the complex perceptual challenges, the more difficult action space and the longer timescales of the 3D environment. Full details of the symbolic environment can be found in the appendix of the research paper.

- Create an environment.

env = symbolic_alchemy.get_symbolic_alchemy_level(level_name, seed=314)

- Check the observations via env.observation_spec(). Here the symbolic observation is concatenated into a single array of length 39: 5 features for each of the 3 stones, followed by 2 features for each of the 12 potions. Details can be found here.

- The symbolic environment provides 40 actions (0 to 39), where 0 means doing nothing and every other integer means putting a stone into a potion or into the cauldron.

env.action_spec()
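To make the integer actions easier to read, the sketch below encodes and decodes them. The layout is an assumption that should be checked against the dm_alchemy documentation: with 3 stones and 12 potion slots, stone s going into the cauldron is s * 13 + 1 and stone s going into potion p is s * 13 + 2 + p. This gives 40 actions in total and makes action 2 mean "stone 0 into potion 0", which agrees with how this post uses it. The names `encode_action` and `decode_action` are hypothetical.

```python
NUM_STONES = 3          # assumed number of stones per trial
NUM_POTION_SLOTS = 12   # assumed number of potion slots per trial
NO_OP = 0               # action 0 means doing nothing


def encode_action(stone, potion=None):
    """Integer action for putting `stone` into `potion`, or the cauldron if None."""
    if not 0 <= stone < NUM_STONES:
        raise ValueError("stone index out of range")
    base = stone * (NUM_POTION_SLOTS + 1)
    if potion is None:
        return base + 1
    if not 0 <= potion < NUM_POTION_SLOTS:
        raise ValueError("potion index out of range")
    return base + 2 + potion


def decode_action(action):
    """Inverse of encode_action; returns None for the no-op, else (stone, potion)."""
    if action == NO_OP:
        return None
    stone, rest = divmod(action - 1, NUM_POTION_SLOTS + 1)
    # rest == 0 is the cauldron, represented here as potion=None.
    return stone, (None if rest == 0 else rest - 1)


# Example usage:
# encode_action(0, 0)  # stone 0 into potion 0
# decode_action(39)    # last action in the 0 to 39 range
```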

- Reset the environment and look at the first observation. Stone 0 starts out purple, small and halfway between round and pointy, and its reward indicator shows -1.

timestep = env.reset()
timestep.observation['symbolic_obs']
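The flat array is easier to inspect once it is split into one row per stone and one row per potion. The sketch below mirrors the slicing used later in this post (steps of 5 over the first 15 entries, then steps of 2 up to 39); `split_symbolic_obs` is a hypothetical helper name and its default sizes are taken from that slicing.

```python
def split_symbolic_obs(obs, num_stones=3, stone_features=5, potion_features=2):
    """Split a flat symbolic observation into per-stone and per-potion rows."""
    values = list(obs)
    stones_end = num_stones * stone_features
    stones = [values[i:i + stone_features]
              for i in range(0, stones_end, stone_features)]
    potions = [values[i:i + potion_features]
               for i in range(stones_end, len(values), potion_features)]
    return stones, potions


# Example usage (needs the symbolic environment):
# stones, potions = split_symbolic_obs(timestep.observation['symbolic_obs'])
# for row in stones:
#     print(row)
```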

- Take action 2, which puts stone 0 into potion 0, and look at the new symbolic observation:

timestep = env.step(2)
timestep.observation['symbolic_obs']

After putting stone 0 into potion 0, the resulting observation shows that stone 0 has become purple, large and halfway between round and pointy, and that its reward indicator now shows -3. From this single transition, an agent which understands the task and the generative process can start to deduce how the potions act on stones.
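For comparison with an agent that reasons about such transitions, a uniformly random policy gives a simple baseline. The loop below is a minimal sketch, not part of dm_alchemy: `run_random_episode` is a hypothetical name, the default of 40 actions comes from the action range described in this post, and the loop assumes dm_env-style timesteps with `last()` and `reward`.

```python
import random


def run_random_episode(env, num_actions=40, max_steps=1000, seed=0):
    """Step env with uniformly random integer actions until the episode ends."""
    rng = random.Random(seed)
    timestep = env.reset()
    total_reward = 0.0
    steps = 0
    # max_steps is a safety cap in case the episode never signals its end.
    while not timestep.last() and steps < max_steps:
        timestep = env.step(rng.randrange(num_actions))
        # The reward can be None on some timesteps; count it as zero.
        total_reward += timestep.reward or 0.0
        steps += 1
    return total_reward, steps


# Example usage (needs the symbolic environment):
# env = symbolic_alchemy.get_symbolic_alchemy_level(level_name, seed=314)
# print(run_random_episode(env))
```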

Combined Environment

This environment combines the 3D environment and the symbolic environment through a wrapper around the 3D environment. The wrapper listens to the events output by the 3D environment and uses them to initialize and take actions in a symbolic environment, which keeps the symbolic environment in sync with the 3D one.

seed = 1023
settings = dm_alchemy.EnvironmentSettings(
    seed=seed, level_name=level_name, width=width, height=height)
env3d = dm_alchemy.load_from_docker(settings)
env = symbolic_alchemy_wrapper.SymbolicAlchemyWrapper(
    env3d, level_name=level_name, see_symbolic_observation=True)

Here, the agent takes actions in the 3D environment and receives observations from it. In addition, it can receive observations generated by the symbolic environment.

- Create a new episode using the same seed

timestep = env.reset()
plt.figure()
plt.imshow(timestep.observation['RGB_INTERLEAVED'])

- Take some actions in a 3D environment.

for _ in range(37):
    timestep = env.step({'MOVE_BACK_FORWARD': 1.0})
for _ in range(4):
    timestep = env.step({'LOOK_LEFT_RIGHT': -1.0})
for _ in range(3):
    timestep = env.step({'LOOK_DOWN_UP': 1.0})
plt.figure()
plt.imshow(timestep.observation['RGB_INTERLEAVED'])

- Now, with the symbolic observation, we see that the output matches the symbolic environment; even the colours of the potions are the same.

print('Stones:')
for i in range(0, 15, 5):
    print(timestep.observation['symbolic_obs'][i:i + 5])
print('Potions:')
for i in range(15, 39, 2):
    potion_index = int(round(timestep.observation['symbolic_obs'][i] * 3 + 3))
    potion = stones_and_potions.perceived_potion_from_index(potion_index)
    print(unity_python_conversion.POTION_COLOUR_AT_PERCEIVED_POTION[potion])
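The potion loop above turns a colour feature into an integer index with `round(value * 3 + 3)`, so features spaced 1/3 apart starting at -1 map to 0, 1, 2 and so on. The sketch below isolates that arithmetic. The helper name is hypothetical, and treating only 0 to 5 as valid colour indices (six colours) is an assumption, not something this post states.

```python
def potion_index_from_obs(value, num_colours=6):
    """Map a potion colour feature to an integer index using round(value * 3 + 3)."""
    # Rounding guards against small floating-point errors in the observation.
    index = int(round(value * 3 + 3))
    # Assumption: indices outside 0..num_colours-1 do not name a potion colour.
    if not 0 <= index < num_colours:
        return None
    return index


# Example usage (needs the wrapped environment):
# obs = timestep.observation['symbolic_obs']
# indices = [potion_index_from_obs(obs[i]) for i in range(15, 39, 2)]
```

Returning `None` for out-of-range values lets the caller skip such slots before looking up a colour.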

Additional information about the combined environment can be found here.

EndNotes

In this post, we have covered the basic usage of Alchemy. Reference material can be found here:

Note: The above implementation was verified on Ubuntu 20.04 LTS.