
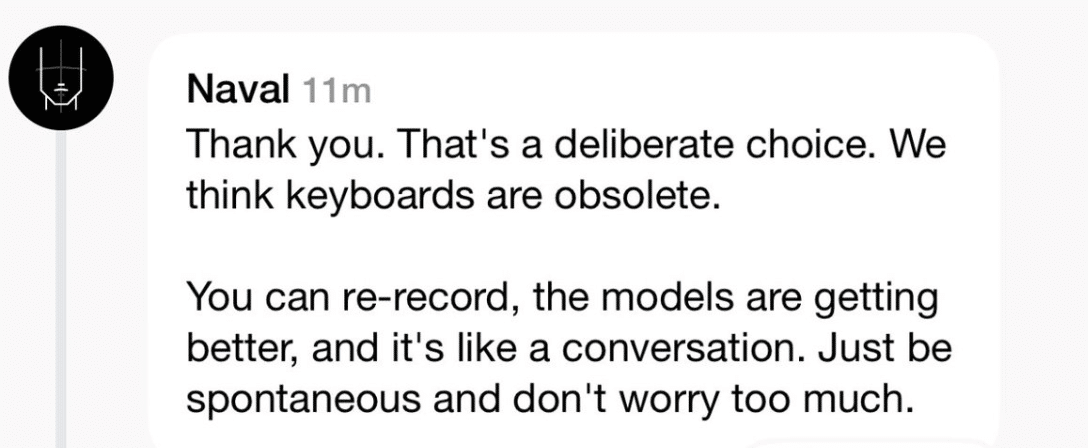

Entrepreneur and investor Naval Ravikant recently relaunched his social media app, Airchat, at a time when there is no dearth of such platforms. Its USP, however, is that it is completely voice-centric: all interaction happens through voice.

Source: X

Airchat might just be the latest entrant highlighting the power of voice, but a number of recent AI platforms and devices have already made voice their predominant user interface.

Dawn of the Voice Era

Multimodal AI was identified as one of Microsoft’s AI trends for the year, and going by the AI developments this year, voice modality is emerging as a key feature.

The latest Humane Ai Pin, a small wearable device that acts as a personal assistant and is even seen as a probable replacement for the smartphone, works essentially on voice. Interactions with the device, such as making calls, reading messages, and taking pictures, can all be carried out through voice commands.

Bethany Bongiorno, co-founder of Humane, believes that voice will be an integral part of an AI future. “Voice-first in an AI future,” she said. Similarly, AI devices such as Rabbit R1, a pocket-size gadget that integrates a language action model, also operate on voice commands.

Brett Adcock, CEO and founder of robotics company Figure AI, said, “We believe the default user interface for the robot is speech. You’re going to want to talk to the robot. Even in an industrial setting, when you’re unboxing the robot for the first time, we think the initialisation process is speech.”

How Do We Assess Them?

With voice models comes a different set of evaluation parameters. Interestingly, the shift has begun after a year-long emphasis on text-based AI generation.

Benchmarks and leaderboards for evaluating text-based models have always been a topic of LLM discussions. The need has also given rise to an Indic LLM Leaderboard. However, leaderboards for voice-based generative models are not that prominent.

Evaluation parameters for voice-based models include latency, word error rate (WER), short-time objective intelligibility (STOI), miss rate, and the ROC curve. Between them, these cover how quickly a system responds, how accurately it recognises or detects speech, and the quality and intelligibility of the speech itself.
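To make one of these metrics concrete: WER is commonly computed as the word-level edit distance between a reference transcript and the system's output, divided by the number of words in the reference. The sketch below is only an illustration under simple assumptions (lowercasing and whitespace tokenisation, no punctuation handling); the function name and the sample sentences are made up for this example and do not come from any particular toolkit.

```python
def word_error_rate(reference: str, hypothesis: str) -> float:
    """WER = (substitutions + deletions + insertions) / reference word count."""
    # Assumption: lowercase + whitespace split is an adequate tokeniser here.
    ref = reference.lower().split()
    hyp = hypothesis.lower().split()
    if not ref:
        raise ValueError("reference transcript must contain at least one word")

    # prev[j] = edit distance between the reference words processed so far
    # and the first j hypothesis words (classic Levenshtein recurrence).
    prev = list(range(len(hyp) + 1))
    for i, ref_word in enumerate(ref, start=1):
        curr = [i] + [0] * len(hyp)
        for j, hyp_word in enumerate(hyp, start=1):
            cost = 0 if ref_word == hyp_word else 1
            curr[j] = min(
                prev[j] + 1,         # deletion: reference word missing from output
                curr[j - 1] + 1,     # insertion: extra word in output
                prev[j - 1] + cost,  # match or substitution
            )
        prev = curr
    return prev[-1] / len(ref)


if __name__ == "__main__":
    reference = "call my mother and read the last message"
    hypothesis = "call my brother and read last message"
    print(f"WER: {word_error_rate(reference, hypothesis):.2f}")
```

In this made-up example the output has one substituted word and one dropped word against an eight-word reference, so the script prints a WER of 0.25. Lower is better, and because insertions count as errors, WER can exceed 1.0.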

Shift from Text to Voice

Chatbots that assist with functions such as HR operations or finding love are essentially text-based. However, a shift is now under way.

Last month, Hume AI released EVI, an empathetic voice interface AI model. Users can converse with the model naturally, and it analyses and interprets the user’s emotion based on tone of voice and other features. It almost serves as a therapist.

Hume comes as a huge shift from other similar platforms such as Inflection’s Pi, which acts as an emotionally intelligent AI that helps with one’s emotional needs.

Not All Big Tech are Gung-ho

Big tech companies are integrating voice in one form or another: models such as OpenAI’s ChatGPT and Google’s Gemini are multimodal and offer a voice interface as a standard mode. Interestingly, one major player, Apple, is not too keen on this modality yet.

For a company making strides in bringing generative AI features to its phones, and even releasing AI models such as ReALM that could possibly beat GPT-4, Apple is yet to catch up in the voice game.

However, voice is not completely alien to Apple. Its spatial computing device, the Apple Vision Pro, can be controlled using voice features.

Further, the company’s famed voice assistant, Siri, is expected to get advanced AI features which will probably be announced at the Apple WWDC 2024 event in June. The feature might be a major boost to Apple’s voice modality function.

While voice is being increasingly adopted, companies are still relying on text-based chatbots. IT company Happiest Minds recently announced ‘hAPPI’, a generative AI-powered chatbot that will converse with users on health and wellness-related queries.

Still, to get as close as possible to human-like interaction, voice becomes indispensable. After all, “Humans are all meant to get along with other humans, it just requires the natural voice,” said Ravikant.

PS: The story was written using a keyboard.