LLMs are just like toothpaste: you have to apply slight pressure (prompt correctly) to get the desired formula (answer) out. But what if, by the time you take out your toothbrush and begin brushing, you realise that your teeth have already succumbed to decay?

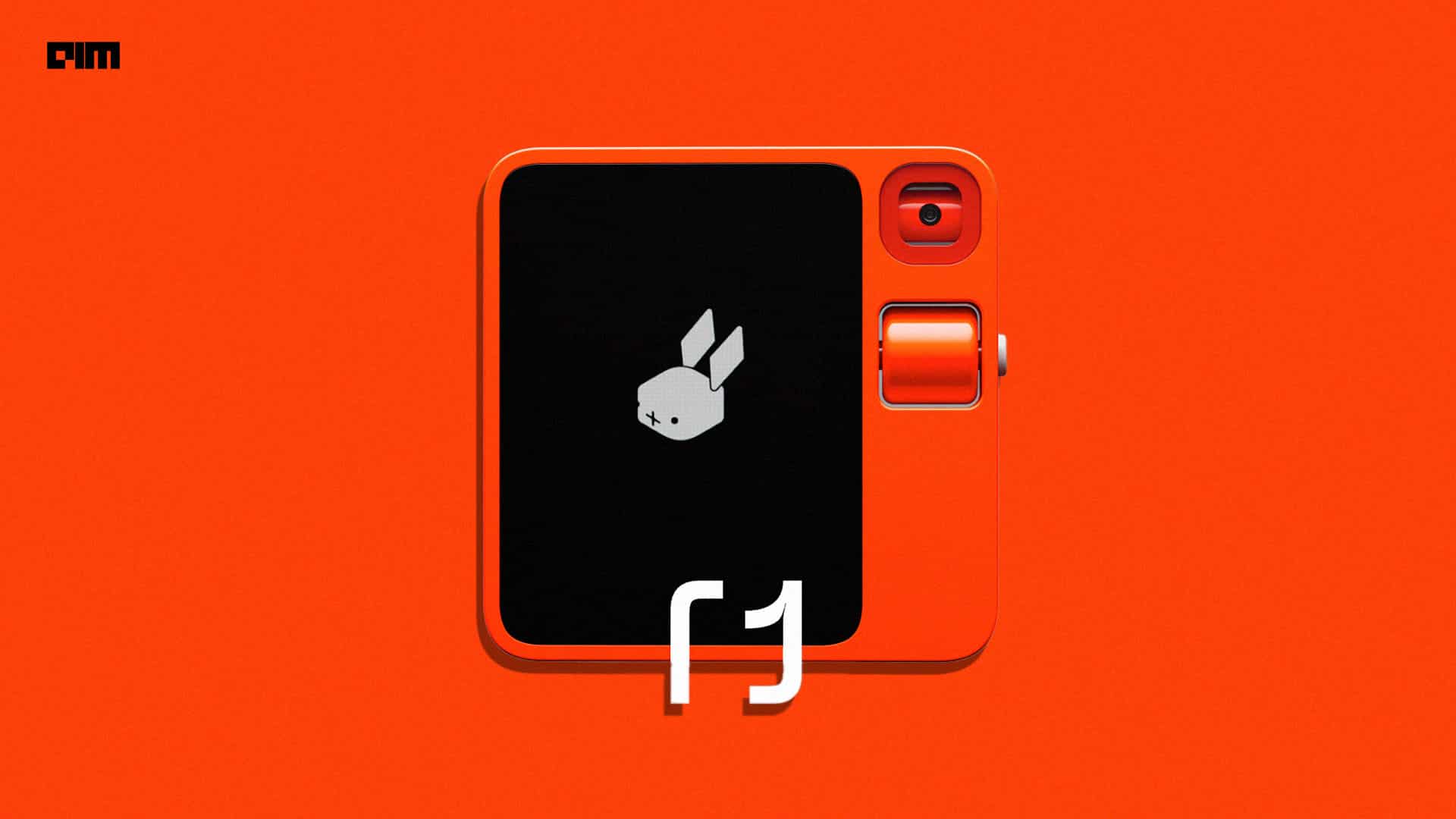

Highly unlikely, but this is where the large action model, or LAM, comes into play. It lets you accomplish a task swiftly with just a squeeze (a text or voice prompt), almost at the speed of a rabbit: 10x faster than most existing voice AI projects, as shared by Jesse Lyu, the founder of AI startup Rabbit, at CES 2024. There, Lyu unveiled the R1, a new pocket-sized gadget that sold 10,000 units within 24 hours of its official launch.

Lyu says the company is on a mission to create the simplest computer, something so intuitive that you won’t need to learn how to use it. “The best way to achieve this is to break away from the app-based operating system currently used by smartphones. Instead, we envision a natural language-centered approach,” he added.

It is still a little early to say, but the Rabbit R1’s ability to complete tasks without users directly interacting with interfaces or apps might pose a threat to UI/UX designers and developers in the coming years, since an AI agent can simply focus on the task it needs to complete. Interestingly, Rabbit has also introduced an upgraded ‘teach mode’, which lets users demonstrate workflows so that the R1 gradually acquires new skills across different interfaces.

The Rabbit R1 features a minimalist design with a touchscreen, a rotating camera, and a scroll wheel, prioritising intuitive gestures and voice commands over keyboards and menus. It has a 2.88-inch touchscreen and is powered by a 2.3GHz MediaTek processor, with 4GB of RAM and 128GB of storage.

A user on X wrote, “Rabbit R1 offers a preview of the future. One where apps are gone and operating systems are abstracted away by LLMs.”

Rabbit R1 not only looks funky and cool on the outside with its eye-catching Luminous Orange colorway but also comes with an operating system that navigates all your apps quickly and efficiently, so you don’t have to.

LAM vs LLM

Instead of relying on OpenAI’s models, Rabbit has created its own foundational model, which it calls LAM (large action model). “LAM, as we call it, is a new foundational model that understands and executes human intentions on computers. Driven by our research in neuro-symbolic systems, with a large action model, we fundamentally find a solution to the challenges that apps, APIs, or agents face,” said Lyu.

LAM is designed to make AI systems see and act on apps in the same way humans do. It learns by demonstration: it observes a human using an interface and reliably replicates the process, even if the interface changes.
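Rabbit has not published how LAM works internally, so what follows is only a toy Python illustration of the general record-and-replay idea described above, not Rabbit’s method. It assumes a hypothetical UI model in which every on-screen element carries a stable semantic label; the `Element`, `Step`, `record`, and `replay` names are invented for this sketch. Because each demonstrated step is stored against the element’s label rather than its screen coordinates, the replay still works after the interface is rearranged.

```python
from dataclasses import dataclass

# Hypothetical toy UI model (an assumption, not Rabbit's design): each element
# has a semantic label and a screen position. Positions may change between app
# versions; labels are assumed to stay stable.
@dataclass(frozen=True)
class Element:
    label: str
    x: int
    y: int

@dataclass(frozen=True)
class Step:
    action: str   # e.g. "tap" or "type"
    target: str   # semantic label of the element that was acted on
    text: str = ""

def record(demo: list[tuple[str, Element, str]]) -> list[Step]:
    """Convert a demonstration (action, element, typed text) into label-keyed steps."""
    return [Step(action, el.label, text) for action, el, text in demo]

def replay(steps: list[Step], screen: list[Element]) -> list[str]:
    """Re-run recorded steps on a possibly changed screen by matching labels."""
    by_label = {el.label: el for el in screen}
    log = []
    for step in steps:
        el = by_label.get(step.target)
        if el is None:
            raise LookupError(f"no element labelled {step.target!r} on this screen")
        suffix = f" {step.text!r}" if step.text else ""
        log.append(f"{step.action} {step.target} at ({el.x}, {el.y}){suffix}")
    return log

# Demonstrate once on the old layout...
old_ui = [Element("search box", 10, 20), Element("play button", 50, 300)]
steps = record([("type", old_ui[0], "lo-fi beats"), ("tap", old_ui[1], "")])

# ...then replay on a redesigned layout where the same elements have moved.
new_ui = [Element("play button", 200, 40), Element("search box", 10, 400)]
for line in replay(steps, new_ui):
    print(line)
# type search box at (10, 400) 'lo-fi beats'
# tap play button at (200, 40)
```

Matching on labels is the simplest possible stand-in for “understanding” an interface; a real system would also have to recognise elements whose labels or structure change, which this sketch does not attempt.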

Instead of relying on APIs, it learns how people use applications and services day to day. According to Rabbit, LAM has seen most consumer application interfaces on the internet and becomes more capable as it is fed more data on the actions taken on them.

“LAM can learn any interface from any software, regardless of the platform they’re running on. In short, the large language model understands what you say, but the large action model gets things done. We use LAM to bring AI from words to action,” said Lyu.

What sets LAMs apart from LLMs is that they go beyond language processing and aim to perform actions in the real world based on natural-language instructions. A LAM takes an instruction and uses its language comprehension to navigate digital environments and complete tasks such as booking flights, ordering food, or controlling smart home devices.

“Large language models, like ChatGPT, showed the possibility of understanding natural language with AI; our large action model takes it a step further – it doesn’t just generate text in response to human input – it generates actions on behalf of users to help us get things done,” mentioned Lyu.

LAM works with Rabbit OS, which runs apps in a secure cloud. Users manage Rabbit OS and their companion devices through the Rabbit Hole, an all-in-one web portal. For instance, someone who wants to listen to music can visit the Rabbit Hole web portal and log into third-party applications such as Spotify.

Similarly, Humane’s Ai Pin, which is expected to ship in March this year, runs on the company’s own OS, Cosmos.

Is it Perfect?

The Rabbit R1 relies on the cloud and has no on-device (edge) capabilities. Meanwhile, most tech giants, including Apple, Google, and Samsung, are trying to bring LLMs to the edge.

Lyu claimed that Rabbit OS responds 10 times faster than most voice AI projects: “Rabbit answers my questions within 500 milliseconds.” However, a user on Hacker News questioned this claim, “Where is the inference running? I don’t believe it’s on the device. And if it’s in the cloud, then why make the claim it is under 500ms?”

One way to look at the Rabbit R1 is to place less emphasis on its hardware, which may not rank among the best available, and more on its attempt to make the most of an AI agent that can take actions effectively. Another user on Hacker News wrote, “I don’t think the hardware is the main product. I believe the AI is, but they didn’t want to be ‘just an app’; they aim to be the first OS for the new way of computing. So, they designed a new device.”