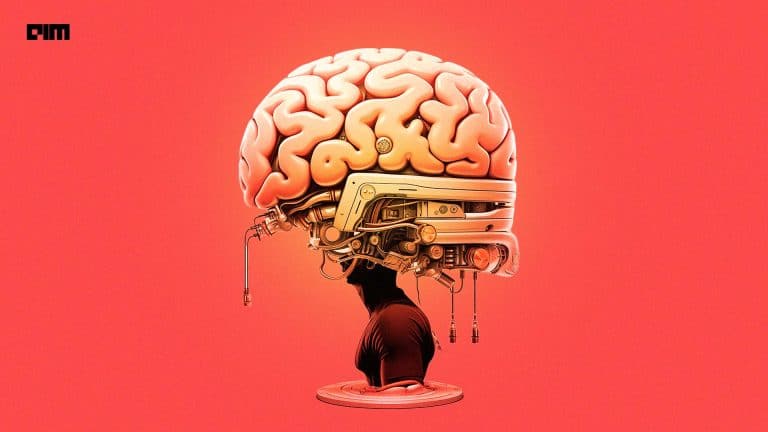

Meta AI announced a long-term research initiative to understand how the human brain processes language. In collaboration with the neuroimaging centre NeuroSpin (CEA) and INRIA, Meta AI is comparing how AI language models and the brain respond to the same spoken or written sentences.

Over the past two years, Meta AI has applied deep learning techniques to public neuroimaging data sets to analyse how the brain processes words and sentences. The data sets were collected and shared by several academic institutions, including the Max Planck Institute for Psycholinguistics and Princeton University.

The comparison between brains and language models has already led to valuable insights that include:

- The language models whose activations most closely resemble brain activity are those that best predict the next word from context. Prediction based on partially observable inputs is at the core of self-supervised learning (SSL) in AI and may be key to how people learn language.

- Specific regions in the brain anticipate words and ideas far ahead in time, while most language models today are typically trained to predict the very next word. Unlocking this long-range forecasting capability could help improve modern AI and language models.

“Of course, we’re only scratching the surface — there’s still a lot we don’t understand about how the brain functions, and our research is ongoing.”

Meta AI

Meta AI’s collaborators at NeuroSpin are now creating an original neuroimaging data set to expand this research. They will be open-sourcing the data set, deep learning models, code, and research papers resulting from this effort to help spur discoveries in both the AI and neuroscience communities. This work is part of Meta AI’s broader investments toward human-level AI that learns from limited to no supervision.