
NVIDIA, together with Run:ai, will provide a consistent, full-stack solution that lets developers build and test their AI applications on GPU-powered instances, either on premises or in the cloud.

Once an AI application has been developed and validated on a GPU-powered NVIDIA platform, it can be deployed to any other GPU-powered platform without extensive code changes. This portability lets organizations deploy AI applications across hybrid and multi-cloud environments, saving time and effort while maintaining consistent performance.

NVIDIA recognizes that, as technology stacks change, MLOps teams and developers struggle to adapt AI applications to run reliably across different target platforms. By removing the need for extensive code modifications, NVIDIA aims to help organizations harness the full potential of AI.

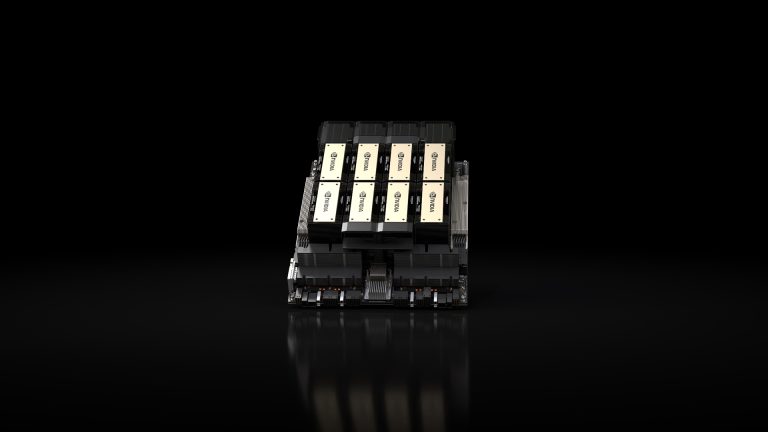

Run:ai, an industry leader in compute orchestration for AI workloads, has certified NVIDIA AI Enterprise, an end-to-end, secure, cloud-native suite of AI software, on its Atlas platform.

Run:ai Atlas includes GPU orchestration capabilities that help researchers use GPUs more efficiently. It does this by automating the orchestration of AI workloads, as well as the management and virtualization of hardware resources across teams and clusters.

Run:ai can be installed on any Kubernetes cluster to provide efficient scheduling and monitoring for your AI infrastructure. With the NVIDIA Cloud Native Stack VMI, you can add cloud instances to a Kubernetes cluster so that they join it as GPU-powered worker nodes.
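To make "GPU-powered worker nodes" concrete in Kubernetes terms, here is a minimal sketch that lists the nodes in a cluster that expose GPUs. It assumes GPU nodes advertise the `nvidia.com/gpu` extended resource in `status.allocatable` (as the NVIDIA device plugin deployed by the GPU Operator does), and it parses JSON in the shape returned by `kubectl get nodes -o json`. The function name `gpu_worker_nodes` and the sample node names are hypothetical; this is an illustration, not part of Run:ai or the Cloud Native Stack VMI.

```python
import json

# Extended resource name that GPU nodes are assumed to advertise
# (the NVIDIA device plugin registers GPUs under this name).
GPU_RESOURCE = "nvidia.com/gpu"


def gpu_worker_nodes(node_list_json: str) -> dict[str, int]:
    """Return a mapping of node name -> allocatable GPU count.

    `node_list_json` is expected to look like the output of
    `kubectl get nodes -o json`. Nodes that expose no GPUs are skipped.
    """
    data = json.loads(node_list_json)
    result: dict[str, int] = {}
    for node in data.get("items", []):
        name = node["metadata"]["name"]
        allocatable = node.get("status", {}).get("allocatable", {})
        # Kubernetes reports quantities as strings; GPU counts are whole numbers.
        count = int(allocatable.get(GPU_RESOURCE, "0"))
        if count > 0:
            result[name] = count
    return result


if __name__ == "__main__":
    # Hypothetical cluster: one CPU-only node and one cloud instance
    # that joined the cluster as a GPU-powered worker node.
    sample = json.dumps({
        "items": [
            {"metadata": {"name": "cpu-node-1"},
             "status": {"allocatable": {"cpu": "8", "memory": "32Gi"}}},
            {"metadata": {"name": "vmi-gpu-node-1"},
             "status": {"allocatable": {"cpu": "16", "nvidia.com/gpu": "4"}}},
        ]
    })
    print(gpu_worker_nodes(sample))  # {'vmi-gpu-node-1': 4}
```

Against a real cluster, the same function could be fed the output of `kubectl get nodes -o json`; a scheduler such as Run:ai would then place GPU workloads only on the nodes this check reports.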

Customers can purchase NVIDIA AI Enterprise through an NVIDIA partner to obtain enterprise support for the NVIDIA VMI and the NVIDIA GPU Operator.