Artificial neural networks (ANNs) are computational models loosely inspired by the way the human nervous system works. There are several kinds of artificial neural networks, distinguished by the mathematical operations and the set of parameters they use to determine the output. Let’s look at some of them:

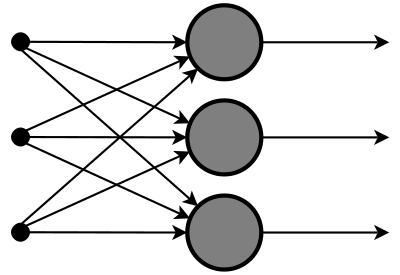

1. Feedforward Neural Network – Artificial Neuron:

This neural network is one of the simplest forms of ANN, where the data or the input travels in one direction only. The data passes through the input nodes and exits at the output nodes. The network may or may not have hidden layers. In simple words, it has a forward-propagated wave and no backpropagation, and it usually uses a classifying activation function.

Below is a single-layer feedforward network. Here, the sum of the products of the inputs and weights is calculated and fed to the output. If this sum is above a certain value, i.e. the threshold (usually 0), the neuron fires and emits the activated output (usually 1); if it does not fire, the deactivated value is emitted (usually -1).
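The thresholding rule described above can be sketched in a few lines of Python. This is a minimal illustration, not a full implementation: the function name `perceptron_output` and the example inputs and weights are made up for the example.

```python
def perceptron_output(inputs, weights, threshold=0.0):
    """Return +1 if the weighted sum exceeds the threshold, otherwise -1."""
    # Sum of the products of inputs and weights.
    weighted_sum = sum(x * w for x, w in zip(inputs, weights))
    # The neuron "fires" (+1) only above the threshold; otherwise it emits -1.
    return 1 if weighted_sum > threshold else -1


# Hypothetical inputs and weights, chosen only for illustration.
fires = perceptron_output([1.0, 0.5], [0.6, 0.4])    # weighted sum is 0.8
silent = perceptron_output([1.0, 0.5], [-0.6, 0.4])  # weighted sum is -0.4
```

With the default threshold of 0, the first call fires and the second does not.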

Applications of feedforward neural networks are found in computer vision and speech recognition, where classifying the target classes is complicated. These kinds of neural networks are responsive to noisy data and easy to maintain. This paper explains the usage of a feedforward neural network. X-ray image fusion is a process of overlaying two or more images based on their edges. Here is a visual description.

2. Radial Basis Function Neural Network:

Radial basis functions consider the distance of a point with respect to a center. RBF networks have two layers: in the inner layer, the features are combined with the radial basis function; the outputs of that layer are then taken into consideration when computing the final output in the next layer.

Below is a diagram that represents the distance from the center to a point in the plane, similar to the radius of a circle. Here, the distance measure used is Euclidean, although other distance measures can also be used. The model depends on the maximum reach, or the radius of the circle, in classifying the points into different categories. If a new point lies within or near the radius, the likelihood of it being classified into that class is high. There can be a transition when moving from one region to another, and this can be controlled by the beta parameter.
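As a rough sketch of the idea, the snippet below computes a radial basis activation from the Euclidean distance to a center and picks the class with the strongest activation. The Gaussian form `exp(-beta * distance^2)` is an assumption (the text does not name the exact function), and the centers and labels are hypothetical.

```python
import math


def rbf_activation(point, center, beta=1.0):
    """Gaussian radial basis function of the squared Euclidean distance.

    Assumption: the Gaussian form is used here; a larger beta makes the
    activation fall off faster, giving a sharper transition between regions.
    """
    squared_distance = sum((p - c) ** 2 for p, c in zip(point, center))
    return math.exp(-beta * squared_distance)


def classify(point, centers, beta=1.0):
    """Return the label whose center gives the strongest activation."""
    return max(centers, key=lambda label: rbf_activation(point, centers[label], beta))


# Hypothetical class centers in the plane.
centers = {"a": (0.0, 0.0), "b": (4.0, 4.0)}
at_center = rbf_activation((0.0, 0.0), centers["a"])  # distance 0 gives activation 1.0
label = classify((1.0, 0.5), centers)                 # closer to center "a"
```

A point sitting exactly on a center gets the maximum activation, and the activation decays as the point moves away.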

This neural network has been applied in power restoration systems. Power systems have increased in size and complexity, and both factors increase the risk of major power outages. After a blackout, power needs to be restored as quickly and reliably as possible. This paper shows how RBFnn has been implemented in this domain.

Power restoration usually proceeds in the following order:

- The first priority is to restore power to essential customers in the communities. These customers provide health care and safety services to all and restoring power to them first enables them to help many others. Essential customers include health care facilities, school boards, critical municipal infrastructure, and police and fire services.

- Then focus on major power lines and substations that serve larger numbers of customers.

- Give higher priority to repairs that will get the largest number of customers back in service as quickly as possible.

- Then restore power to smaller neighborhoods and individual homes and businesses.

The diagram below shows the typical order of the power restoration system.

Referring to the diagram, the first priority is fixing the problem at point A, on the transmission line. With this line out, none of the houses can have power restored. Next comes the problem at B, on the main distribution line running out of the substation; houses 2, 3, 4 and 5 are affected by this problem. Then comes the line at C, which affects houses 4 and 5. Finally, we would fix the service line at D to house 1.
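The ordering in this example can be reproduced by ranking the faults by how many houses each one cuts off. The sketch below is only an illustration of that one rule: it assumes the diagram contains exactly five houses, and it ignores the essential-customer priority and the network topology that a real restoration plan would also consider.

```python
# Houses cut off by each fault, taken from the description above.
# Assumption: the diagram contains exactly five houses, numbered 1 to 5.
faults = {
    "D": {1},
    "C": {4, 5},
    "B": {2, 3, 4, 5},
    "A": {1, 2, 3, 4, 5},
}


def repair_order(faults):
    """Order faults so that repairs restoring the most houses come first."""
    return sorted(faults, key=lambda name: len(faults[name]), reverse=True)


order = repair_order(faults)
```

Here `order` lists the faults from the transmission line down to the single service line.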

3. Kohonen Self Organizing Neural Network:

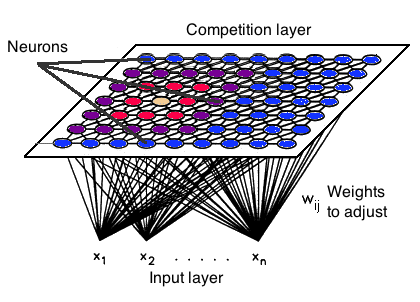

The objective of a Kohonen map is to map input vectors of arbitrary dimension onto a discrete map composed of neurons. The map needs to be trained to create its own organization of the training data. It has either one or two dimensions. When training the map, the location of each neuron remains constant but its weights change depending on the input values. This self-organization process has different phases. In the first phase, every neuron is initialized with small weights and an input vector is presented.

In the second phase, the neuron closest to the point is the ‘winning neuron’, and the neurons connected to the winning neuron also move towards the point, as in the graphic below. The distance between the point and each neuron is calculated as the Euclidean distance; the neuron with the least distance wins. Through the iterations, all the points are clustered and each neuron represents one kind of cluster. This is the gist of how a Kohonen neural network organizes itself.

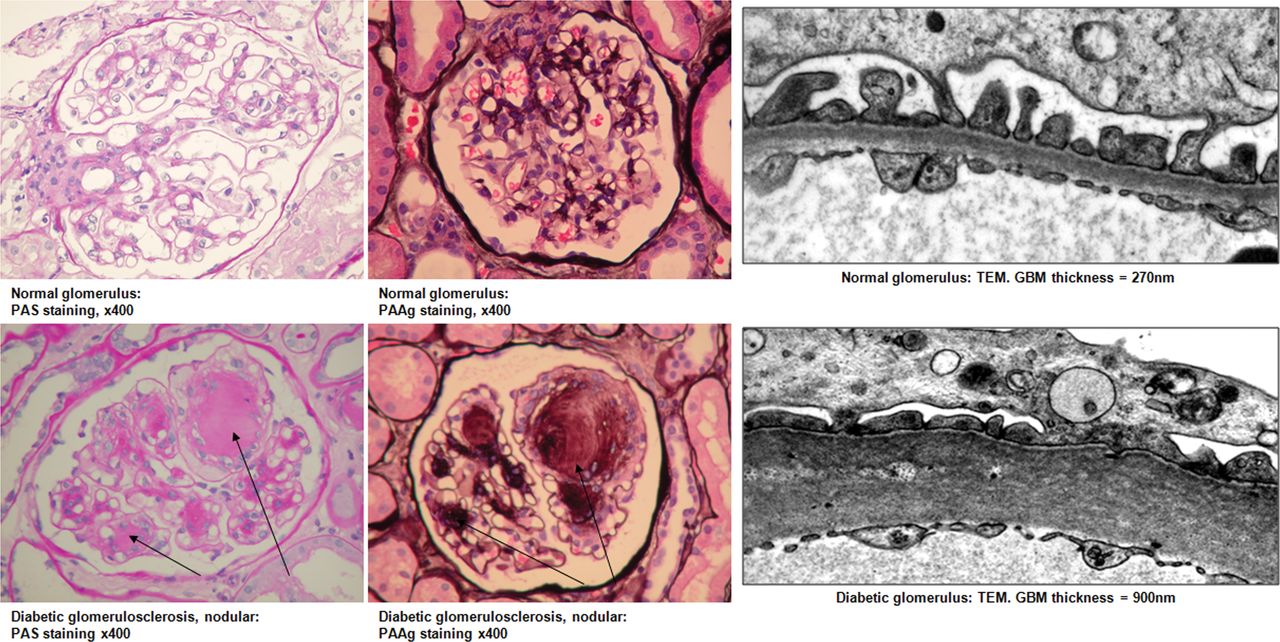
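One training step of the second phase might look like the sketch below, for a one-dimensional map. It is a simplification under stated assumptions: the neighbours are the neurons within a fixed grid `radius` of the winner, and they all move with the same `learning_rate`, whereas practical implementations usually shrink both over time. The function names and sample weights are hypothetical.

```python
import math


def euclidean(a, b):
    """Euclidean distance between two vectors of equal length."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def som_step(weights, point, learning_rate=0.5, radius=1):
    """One update on a 1-D map: move the winner and its grid neighbours toward the point."""
    # The winning neuron is the one whose weight vector is closest to the point.
    winner = min(range(len(weights)), key=lambda i: euclidean(weights[i], point))
    updated = []
    for i, w in enumerate(weights):
        if abs(i - winner) <= radius:
            # Neuron positions on the map stay fixed; only the weights move.
            w = [wi + learning_rate * (pi - wi) for wi, pi in zip(w, point)]
        updated.append(list(w))
    return winner, updated


# Hypothetical map of four neurons with two-dimensional weights.
weights = [[0.0, 0.0], [1.0, 1.0], [2.0, 2.0], [5.0, 5.0]]
point = [1.2, 0.8]
winner, new_weights = som_step(weights, point)
```

In this run the second neuron wins, it and its two neighbours move closer to the point, and the last neuron, which lies outside the neighbourhood, keeps its weights.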

A Kohonen neural network is used to recognize patterns in data. Its application can be found in medical analysis, to cluster data into different categories. A Kohonen map was able to classify patients as glomerular or tubular with high accuracy. Here is a detailed explanation of how it is categorized mathematically using the Euclidean distance algorithm. Below is an image displaying a comparison between a healthy and a diseased glomerulus.

4. Recurrent Neural Network (RNN) – Long Short Term Memory:

The Recurrent Neural Network works on the principle of saving the output of a layer and feeding this back to the input to help in predicting the outcome of the layer.

Here, the first layer is formed like a feedforward neural network, with the sum of the products of the weights and the features. The recurrent process starts once this is computed: from one time step to the next, each neuron remembers some of the information it had in the previous time step.

This makes each neuron act like a memory cell when performing computations. In this process, we let the neural network work on the forward propagation and remember what information it needs for later use. If the prediction is wrong, we use the learning rate or error correction to make small changes during backpropagation, so that the network gradually works towards making the right prediction. This is what a basic recurrent neural network looks like.
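The "memory" idea can be sketched as a single recurrent step in which the new state depends on both the current input and the previous state. This is a plain recurrent cell, not an LSTM, and it shows only the forward pass, not training. The `tanh` activation and all weight values are assumptions made for illustration.

```python
import math


def rnn_step(x, h_prev, w_in, w_rec, bias):
    """Compute the new hidden state from the input and the previous state.

    Each neuron j computes tanh(w_in[j] . x + w_rec[j] . h_prev + bias[j]).
    """
    h_new = []
    for j in range(len(bias)):
        total = bias[j]
        total += sum(w_in[j][i] * x[i] for i in range(len(x)))
        total += sum(w_rec[j][k] * h_prev[k] for k in range(len(h_prev)))
        h_new.append(math.tanh(total))
    return h_new


# Hypothetical weights for 2 inputs and 2 hidden neurons.
w_in = [[0.5, -0.2], [0.1, 0.3]]
w_rec = [[0.4, 0.0], [0.0, 0.4]]
bias = [0.0, 0.0]

h = [0.0, 0.0]
states = []
# The second input is all zeros, so any activity at that step comes from memory.
for x in [[1.0, 0.0], [0.0, 0.0]]:
    h = rnn_step(x, h, w_in, w_rec, bias)
    states.append(h)
```

Even though the second input is zero, the second state is non-zero because it is fed by the state from the previous time step.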

Applications of recurrent neural networks can be found in text-to-speech (TTS) conversion models. This paper describes Deep Voice, which was developed at Baidu Artificial Intelligence Lab in California. It was inspired by the traditional text-to-speech structure, replacing all the components with neural networks. First, the text is converted to phonemes, and then an audio synthesis model converts them into speech. RNNs are also used in Tacotron 2: Human-like speech from text conversion. An insight into it can be seen below.

5. Convolutional Neural Network:

Convolutional neural networks are similar to feedforward neural networks, in that the neurons have learnable weights and biases. They have been applied in signal and image processing, where they have taken over from classical OpenCV techniques in the field of computer vision.

Below is a representation of a ConvNet. In this neural network, the input features are taken in batch-wise, like a filter. This helps the network to remember the images in parts and compute the operations on them. These computations involve the conversion of the image from the RGB or HSI scale to grayscale. Once we have this, the changes in pixel value help to detect the edges, and images can be classified into different categories.

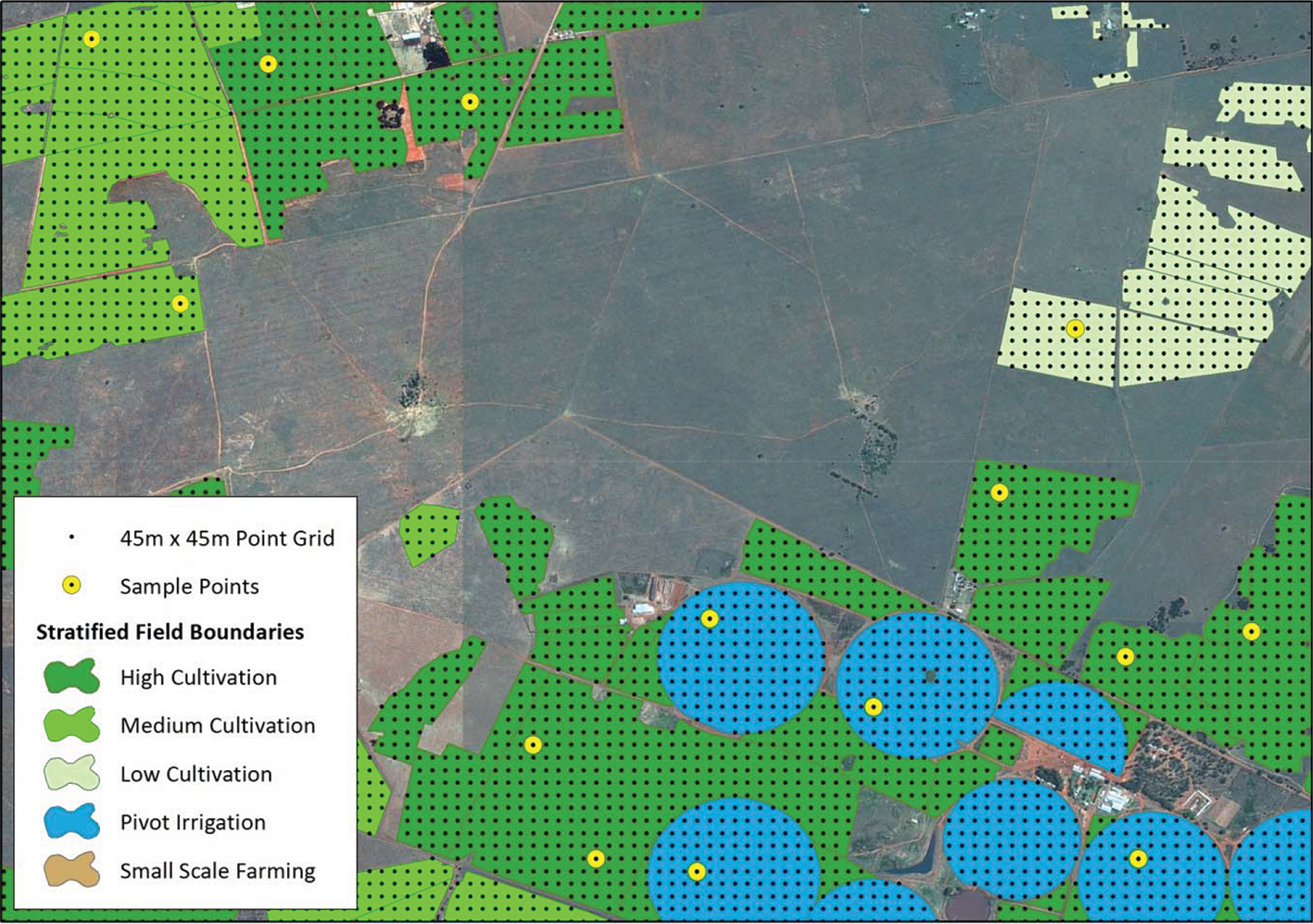
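The two computations mentioned above, grayscale conversion and edge detection from changes in pixel value, can be sketched without any library. The luminance weights are the common ITU-R BT.601 coefficients, and the edge kernel is hand-written for illustration; a trained ConvNet would learn its own kernels rather than use a fixed one. The tiny test image is hypothetical.

```python
def to_grayscale(rgb_image):
    """Convert rows of (r, g, b) pixels to luminance using the BT.601 weights."""
    return [[0.299 * r + 0.587 * g + 0.114 * b for r, g, b in row] for row in rgb_image]


def convolve2d(image, kernel):
    """Slide the kernel over the image without padding.

    As in most CNN libraries, the kernel is not flipped (cross-correlation).
    """
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[r + i][c + j] * kernel[i][j] for i in range(kh) for j in range(kw))
            for c in range(out_w)
        ]
        for r in range(out_h)
    ]


# Hypothetical 4x4 image: left half black, right half white.
black, white = (0, 0, 0), (255, 255, 255)
rgb = [[black, black, white, white] for _ in range(4)]
gray = to_grayscale(rgb)

# Hand-written kernel that responds to a left-to-right jump in brightness.
edge_kernel = [[-1, 1]]
edges = convolve2d(gray, edge_kernel)
```

Each output row is zero in the flat regions and large only in the middle column, where the image changes from black to white.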

ConvNets are applied in signal processing and image classification. Computer vision techniques are dominated by convolutional neural networks because of their accuracy in image classification. Image analysis and recognition techniques are also being implemented in which agriculture and weather features are extracted from open-source satellites like LSAT to predict the future growth and yield of a particular piece of land.

6. Modular Neural Network:

Modular neural networks have a collection of different networks working independently and contributing towards the output. Each neural network has a set of inputs that is unique compared to the other networks, which construct and perform sub-tasks. These networks do not interact with or signal each other in accomplishing the tasks.

The advantage of a modular neural network is that it breaks down a large computational process into smaller components, decreasing the complexity. This breakdown helps to decrease the number of connections and removes the interaction of these networks with each other, which in turn increases the computation speed. However, the processing time will depend on the number of neurons and their involvement in computing the results.
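A minimal sketch of this structure is shown below: each module sees only its own inputs, never calls another module, and a separate combining step merges the independent outputs. The modules here are stand-in weighted sums rather than real networks, and averaging as the combining rule is an assumption chosen for simplicity.

```python
def make_linear_module(weights):
    """Return a tiny stand-in 'network': a weighted sum of its own inputs."""
    def module(inputs):
        return sum(w * x for w, x in zip(weights, inputs))
    return module


def modular_predict(modules, inputs_per_module, combine):
    """Run every module on its own inputs, then merge the independent outputs."""
    # No module reads another module's inputs or outputs.
    outputs = [module(inputs) for module, inputs in zip(modules, inputs_per_module)]
    return combine(outputs)


# Two hypothetical modules, each with its own input set.
modules = [make_linear_module([1.0, 2.0]), make_linear_module([0.5])]
inputs_per_module = [[1.0, 1.0], [4.0]]

# Assumption: the final output is the average of the module outputs.
result = modular_predict(modules, inputs_per_module, combine=lambda outs: sum(outs) / len(outs))
```

Because the modules are independent, they could be evaluated in parallel, which is where the speed advantage described above comes from.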

Below is a visual representation.

Modular neural networks (MNNs) are a rapidly growing field in artificial neural network research. This paper surveys the different motivations for creating MNNs: biological, psychological, hardware, and computational. Then, the general stages of MNN design are outlined and surveyed as well, viz., task decomposition techniques, learning schemes and multi-module decision-making strategies.