Deep networks need a substantial amount of training data to perform well. To get a satisfactory result from a model, the input data must also be preprocessed, that is, cleaned and put into the form the model expects. Data augmentation is a common image preprocessing technique. It creates training images artificially by applying processing methods such as random rotation, shifts, shear, and flips, alone or in combination, which lets us expand a dataset using only the data we already have. This article shows how to preprocess image data with the Keras ImageDataGenerator class. The following topics are covered.

Table of contents

- Brief about data augmentation

- Preprocessing image data with Tensorflow

Brief about data augmentation

Data augmentation (DA) is a collection of techniques that artificially increase the amount of data by generating new data points from existing data. Examples include making minor adjustments to existing samples or using deep learning models to produce additional data points. DA is recommended to reduce overfitting when the original dataset is too small to train on, and more generally to get better performance out of a DL model.
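As a rough illustration of the "minor adjustments" mentioned above, the NumPy sketch below derives three variants from one image: a horizontal flip, a vertical shift, and a brightness change. The function names and the random test image are assumptions made for this example; they are not part of any library.

```python
import numpy as np

def horizontal_flip(img):
    """Mirror an (H, W, C) image left to right."""
    return img[:, ::-1, :]

def shift_down(img, pixels):
    """Move the image down by `pixels` rows (pixels >= 0), padding the top with zeros."""
    out = np.zeros_like(img)
    if pixels < img.shape[0]:
        out[pixels:] = img[: img.shape[0] - pixels]
    return out

def adjust_brightness(img, factor):
    """Scale pixel values by `factor` and keep them in the 0-255 range."""
    return np.clip(img.astype(np.float32) * factor, 0, 255).astype(np.uint8)

# Hypothetical 4x4 RGB image standing in for a real photo.
rng = np.random.default_rng(0)
image = rng.integers(0, 256, size=(4, 4, 3), dtype=np.uint8)

# Three new training samples derived from a single original.
augmented = [
    horizontal_flip(image),
    shift_down(image, 1),
    adjust_brightness(image, 0.5),
]
print(len(augmented), "new samples from one original")
```

Each variant keeps the shape and label of the original image, which is why such transforms can enlarge a dataset without any new labelling effort.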

Data augmentation is useful for more than preventing overfitting. A large dataset is critical to the performance of both machine learning (ML) and deep learning (DL) models, and when collecting more data is not an option, supplementing the data we already have can still improve the model's performance.

Data collection and labelling can be time-consuming and expensive for machine learning projects. Companies can cut these costs by transforming existing datasets with data augmentation techniques.

Data cleaning is one of the steps required to build high-accuracy models. However, if cleaning makes the data less representative, the model cannot make good predictions for real-world inputs. Data augmentation makes machine learning models more robust by introducing variations that the model may encounter in the real world.


Preprocessing image data with Tensorflow

This article demonstrates preprocessing with two examples. The first uses the generator's preprocessing function to prepare data for a specific DNN model. The second uses general data augmentation techniques such as height shift, flip, and brightness changes.

The data used for the first example is the well-known flowers dataset, which has five classes. The preprocessing is performed with the Keras image preprocessing module.

Importing necessary dependencies for preprocessing

import tensorflow as tf

import matplotlib.pyplot as plt

import numpy as np

import warnings

warnings.filterwarnings('ignore')

The dataset download step is skipped here; refer to the notebook attached in the references section.

The Keras deep learning library can apply data augmentation automatically while a model is being trained. The ImageDataGenerator class performs this task.

The class is instantiated first, and the configuration for the different kinds of data augmentation is passed as arguments to the class constructor.

img_preprocesser = tf.keras.preprocessing.image.ImageDataGenerator(preprocessing_function=tf.keras.applications.vgg16.preprocess_input)

This generator is given a preprocessing function, here the preprocess_input function of the VGG16 model. The generator therefore preprocesses each image in the way that model requires.
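To make the model-specific preprocessing more concrete: as far as the Keras documentation describes it, the VGG16 preprocess_input function in its default mode reorders channels from RGB to BGR and subtracts the ImageNet per-channel means, without scaling. The NumPy sketch below mimics that behaviour. `vgg16_style_preprocess` is a hypothetical name, and the mean values are the commonly cited ImageNet means, stated here as an assumption rather than read from the library.

```python
import numpy as np

# Commonly cited ImageNet channel means in BGR order (assumed values).
IMAGENET_MEAN_BGR = np.array([103.939, 116.779, 123.68], dtype=np.float32)

def vgg16_style_preprocess(batch_rgb):
    """Convert an (N, H, W, 3) RGB batch with values in 0-255 to zero-centred BGR."""
    batch = batch_rgb.astype(np.float32)
    batch_bgr = batch[..., ::-1]           # reorder channels: RGB -> BGR
    return batch_bgr - IMAGENET_MEAN_BGR   # subtract per-channel mean, no scaling

# One white pixel: every channel ends up as 255 minus that channel's mean.
white = np.full((1, 1, 1, 3), 255, dtype=np.uint8)
print(vgg16_style_preprocess(white))
```

The output contains negative and non-integer values, which is worth keeping in mind when the preprocessed images are displayed later.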

Once the generator is built, an image dataset iterator can be created from it. On each iteration, the iterator returns one batch of processed images. The flow() method builds an iterator from an image dataset that has been loaded into memory. An iterator can also be created with flow_from_directory() for an image dataset stored on disk in a directory where images are sorted into subdirectories by class.
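The iterator behaviour described above can be approximated in plain NumPy. The generator below is only a conceptual sketch, not how Keras implements flow(): `batch_iterator` and its random-flip augmentation are assumptions chosen to keep the example short.

```python
import numpy as np

def batch_iterator(images, batch_size, seed=0):
    """Yield endless batches of randomly flipped images from an in-memory array."""
    rng = np.random.default_rng(seed)
    n = len(images)
    while True:
        order = rng.permutation(n)                 # reshuffle at the start of every epoch
        for start in range(0, n, batch_size):
            batch = images[order[start:start + batch_size]].copy()
            flip = rng.random(len(batch)) < 0.5    # pick roughly half of the images
            batch[flip] = batch[flip][:, :, ::-1, :]  # mirror them along the width axis
            yield batch

# Hypothetical dataset: 25 RGB images of 8x8 pixels.
data = np.zeros((25, 8, 8, 3), dtype=np.float32)
iterator = batch_iterator(data, batch_size=10)
first = next(iterator)   # each call to next() returns one augmented batch
print(first.shape)
```

Because the transforms are applied when a batch is requested, the augmented images never need to be stored; the last batch of an epoch is simply smaller when the dataset size is not a multiple of the batch size.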

images, labels = next(img_preprocesser.flow(data,batch_size=10))

A batch size of 10 is used here because it is easy to visualize; the same setting also works for training.

A data generator can also be used for the validation and test datasets. A second ImageDataGenerator instance is typically used for this; it should apply the same pixel scaling as the instance used for the training dataset but does not need data augmentation. This is because data augmentation exists only to enlarge the training dataset artificially, so that the model performs better on unaugmented data.
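A possible setup for the two generators is sketched below. The augmentation settings and the rescale factor are illustrative assumptions, not values taken from the referenced notebook; the point is only that the validation generator repeats the pixel scaling and leaves out every random transform.

```python
import tensorflow as tf

ImageDataGenerator = tf.keras.preprocessing.image.ImageDataGenerator

# Training generator: pixel scaling plus random augmentation (assumed settings).
train_gen = ImageDataGenerator(
    rescale=1.0 / 255,
    horizontal_flip=True,
    rotation_range=20,
)

# Validation/test generator: the same pixel scaling, no augmentation.
val_gen = ImageDataGenerator(rescale=1.0 / 255)
```

Keeping the scaling identical matters because the model must see validation pixels in the same value range it was trained on.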

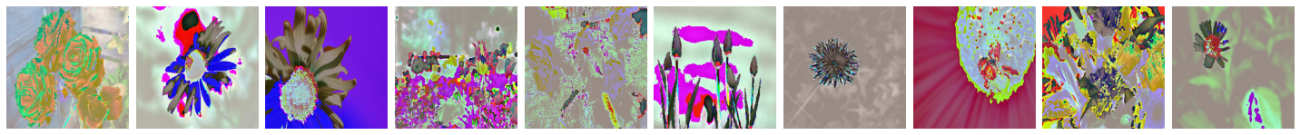

Now let's visualize the preprocessed data.

visualizer(images.astype('uint8'))

Casting the images to unsigned 8-bit integers is only for display. The cast can be skipped, but the plotting call will then emit a warning.
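One caveat worth sketching: after model-specific preprocessing, pixel values may fall outside the range 0 to 255, and a bare cast to uint8 does not handle such values predictably. The hypothetical helper below (not part of the original notebook) clips first and casts second, assuming the batch is a float NumPy array.

```python
import numpy as np

def to_displayable(batch):
    """Clip a float image batch to 0-255 and cast it to uint8 for plotting."""
    return np.clip(batch, 0, 255).astype(np.uint8)

# Values below 0 and above 255 are pinned to the ends of the valid range.
values = np.array([-10.5, 0.0, 100.7, 300.0], dtype=np.float32)
print(to_displayable(values))
```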

The second example defines no preprocessing function. Instead, it augments the data with random vertical shifts, rotations, brightness changes, and horizontal flips.

img_gen = tf.keras.preprocessing.image.ImageDataGenerator(horizontal_flip=True,

height_shift_range=0.5,

rotation_range=45,

brightness_range=[0.2,0.85])

Once the generator is defined, use flow() to generate batches. Only a single image is used here, so the batch size is one.

sample_iterator = img_gen.flow(sample_img, batch_size=1)

batch = next(sample_iterator)
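Note that flow() expects a 4-D array of shape (samples, height, width, channels), so a single image needs a leading batch axis before it is passed in. A minimal NumPy sketch follows; the random array stands in for the image loaded in the notebook, which is an assumption because the loading step is not shown here.

```python
import numpy as np

# Hypothetical single RGB image of 224x224 pixels.
single_image = np.random.default_rng(0).random((224, 224, 3)).astype(np.float32)

# Add a leading batch axis: (224, 224, 3) -> (1, 224, 224, 3).
sample_img = np.expand_dims(single_image, axis=0)
print(sample_img.shape)
```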

Conclusion

Preprocessing the raw data is a necessary step before model training. Augmentation helps prevent the model from overfitting, and when data is scarce it can be used to generate synthetic samples. In this article, we have seen how to preprocess image data with the Keras preprocessing module.