Bosch spins off AIShield as an autonomous corporate-startup

AIShield is an industry-first, ready-to-deploy solution with more than 20 patents in the AI security space.

Now, after a year, where do we stand with AlphaFold and structural biology on the whole?

Chandramauli Chaudhuri leads the Data Science initiatives across Fractal’s Tech Media & Telecom vertical in the UK & Europe.

Over the years, Amazon and AWS have contributed massively to the open-source community by releasing their comprehensive datasets to the public.

“All of Google was built because we started understanding text and web pages. So the fact that computers can understand images and videos has profound implications for our core mission”.

Besides Ampere and SambaNova Systems, other chip startups are attracting lucrative investments and slowly emerging as tough rivals to industry behemoths like Intel and AMD.

It is important to understand that technology is just an enabler for data science and data analytics professionals: it helps them draw meaningful conclusions from complex and varied data sources, which is now possible thanks to advances in parallel processing and cheap computational power.

Feature stores are a management layer for machine learning; they allow sharing and discovery of features, which helps users create better machine learning pipelines.

As outlined by Damien Benveniste, a machine learning tech lead at Meta, in a detailed LinkedIn post, Jupyter fails to provide a ‘robust framework for reproducibility and maintainable codebase for ML systems’.

The last few months have seen a slowdown in the otherwise bustling AI landscape in the country.

DeepMind researchers have introduced a novel method in which agents are endowed with prior knowledge in the form of abstractions derived from large vision-language models pretrained on image-captioning data.

It was not till November 2021 that GPT-3 had a public release. In fact, DALL.E 2’s predecessor DALL.E is yet to be publicly released.

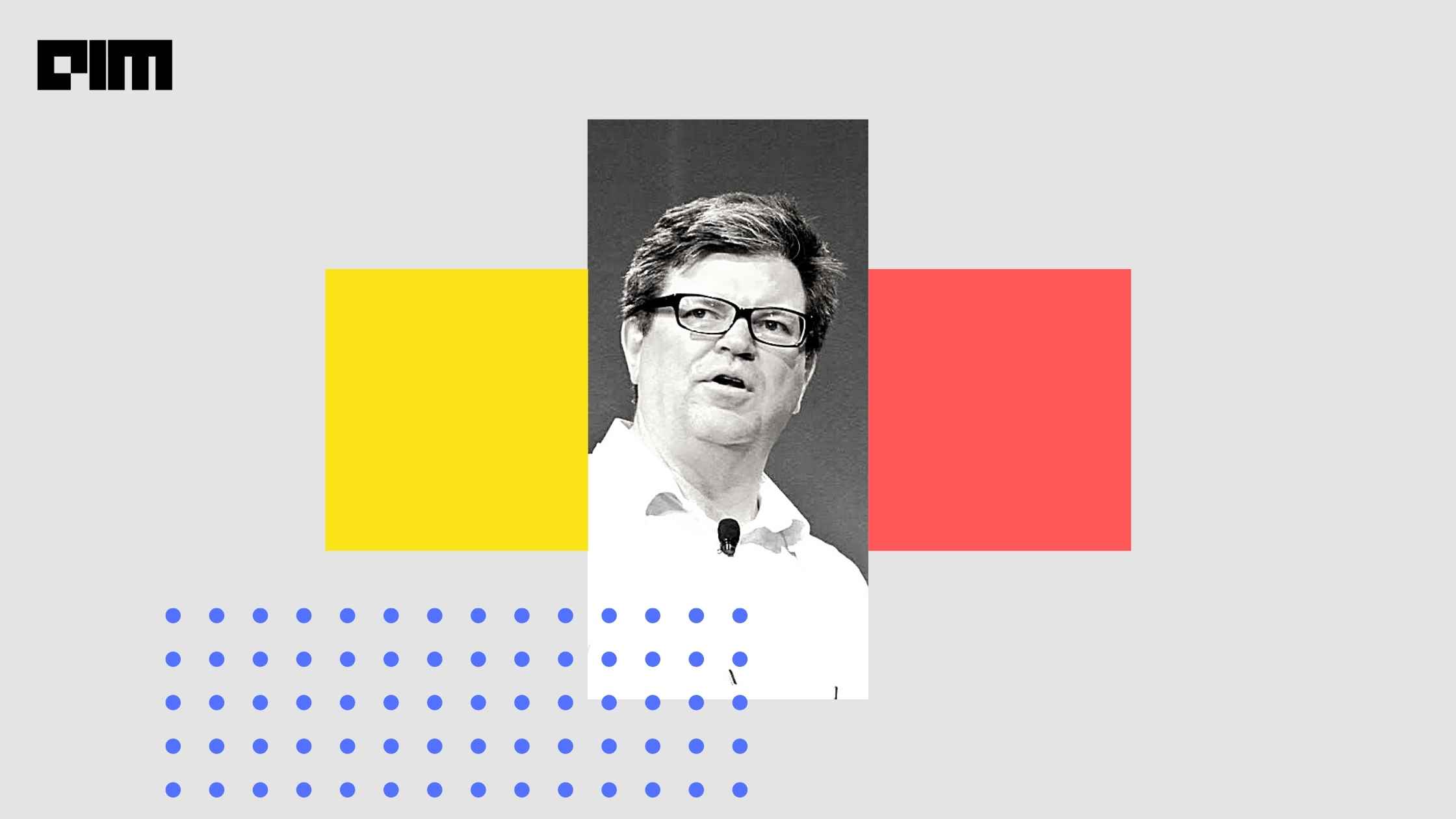

AI pioneer Andrew Ng, founder of DeepLearning.AI and co-founder of Coursera, has announced that he will teach a new and updated Machine Learning Specialization course.

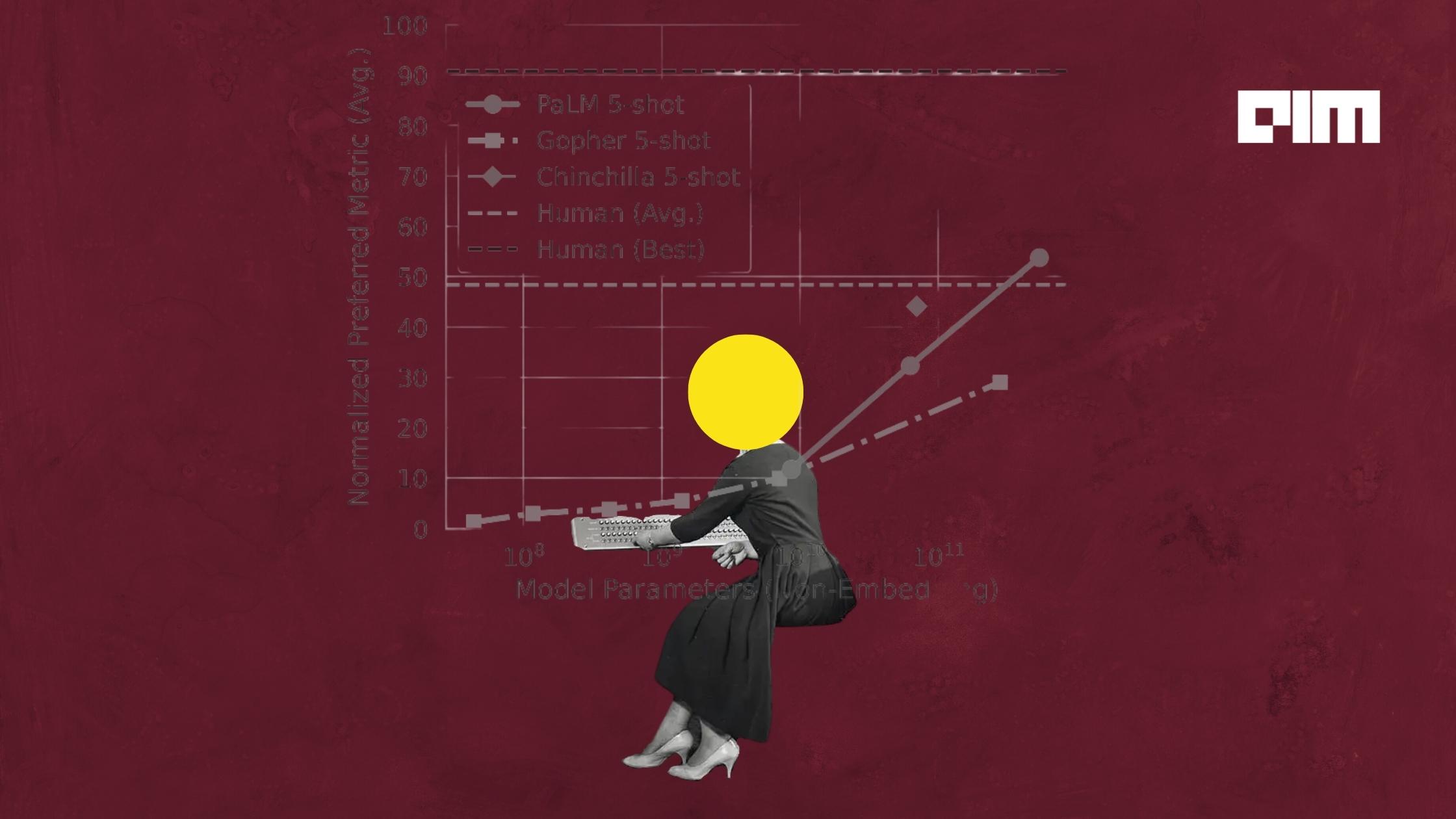

Among the many interesting features of this model, one that stands out is its decoder-only architecture. In fact, besides PaLM, several of the most popular and widely used language models are decoder-only.
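As a rough illustration of what "decoder-only" implies in practice, the sketch below builds a causal attention mask, so that each position can attend only to itself and to earlier positions. The helper names (`causal_mask`, `masked_softmax`) and the uniform toy scores are assumptions made for this example and are not taken from PaLM or any particular library.

```python
import math


def causal_mask(n):
    """Return an n x n mask where position i may see positions 0..i only."""
    return [[j <= i for j in range(n)] for i in range(n)]


def masked_softmax(scores, mask):
    """Row-wise softmax that gives zero weight to masked-out positions."""
    rows = []
    for score_row, mask_row in zip(scores, mask):
        visible = [s for s, keep in zip(score_row, mask_row) if keep]
        top = max(visible)  # subtract the max for numerical stability
        exps = [
            math.exp(s - top) if keep else 0.0
            for s, keep in zip(score_row, mask_row)
        ]
        total = sum(exps)
        rows.append([e / total for e in exps])
    return rows


# Toy attention scores (all equal), so the effect of the mask is easy to read.
SCORES = [[0.0] * 4 for _ in range(4)]
WEIGHTS = masked_softmax(SCORES, causal_mask(4))
```

With equal scores, row `i` of `WEIGHTS` spreads its attention evenly over the first `i + 1` positions and assigns nothing to later ones, which is the property that lets such a model be trained to predict the next token.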

Global IT company Wipro has announced the appointment of Satya Easwaran as the Country Head for India.

PaLM is not only trained with Google's much-publicised Pathways system (introduced last year), but it also avoids pipeline parallelism, a strategy traditionally used for large language models.

When you look at intelligence, it is evolving mainly because there is life and consciousness. There is the will to survive. My feeling is that unless you can teach AI about death, you will not make it intelligent.

Sait came up with an ingenious solution to this challenge – he framed playing Chess as a text generation problem.
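To make the framing concrete, here is a minimal sketch of treating a chess game as text: each game is a space-separated string of moves, and a "model" predicts the next move token from the previous one. This is only an illustration under stated assumptions. A simple bigram counter stands in for a real language model, the tiny `GAMES` corpus consists of a few well-known opening lines chosen for the example, and nothing here checks move legality or reflects the actual implementation described in the article.

```python
from collections import Counter, defaultdict

# Hypothetical toy corpus: standard opening lines written as plain text.
GAMES = [
    "e4 e5 Nf3 Nc6 Bb5 a6",
    "e4 e5 Nf3 Nc6 Bc4 Bc5",
    "e4 c5 Nf3 d6 d4 cxd4",
    "d4 d5 c4 e6 Nc3 Nf6",
]


def train_bigrams(games):
    """Count which move token follows which across all game strings."""
    table = defaultdict(Counter)
    for game in games:
        moves = game.split()
        for prev, nxt in zip(moves, moves[1:]):
            table[prev][nxt] += 1
    return table


def generate(table, prompt, num_moves):
    """Greedily extend the prompt with the most frequent follower token."""
    moves = prompt.split()
    for _ in range(num_moves):
        followers = table.get(moves[-1])
        if not followers:
            break  # unseen token: the toy model has nothing to propose
        moves.append(followers.most_common(1)[0][0])
    return " ".join(moves)


TABLE = train_bigrams(GAMES)
CONTINUATION = generate(TABLE, "e4", 3)
```

The point of the sketch is the interface: the game state goes in as a text prompt and the next move comes out as generated text, so any text-generation model could in principle take the place of the bigram table.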

Business experimentation brings in the rigour of making sure that we establish a counterfactual in the technical sense.

Python is often used as a glue layer: it strings together compiled, optimised packages that perform the target computations.

If you’re on the lookout for an organization that values its people, look no further than eClerx, which ranked 3rd in “Learning & Support” in AIM’s 50 Best Firms in India for Data Scientists to Work For report.

Innovation in the field of AI is very resource-intensive and often needs strong financial backing.

Analytics India Magazine caught up with Bhaskar Roy, Client Partner, Fractal, to understand the relationship between innovation and sustainability in business enterprises.

New research from DeepMind attempts to investigate the optimal model size and the number of tokens for training a transformer language model under a given compute budget.

Researchers from MIT and Facebook AI have introduced projUNN, an efficient method for training deep networks with unitary matrices.

Our goal behind making the toolkit open-source was for research to progress faster in the domain of generative modelling.

The performance of GNNs does not improve as the number of layers increases. This effect is called oversmoothing.
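The intuition behind oversmoothing can be sketched with a toy example: if each layer replaces a node's feature with the mean over itself and its neighbours, stacking many layers pulls all node features towards the same value, so the nodes become hard to tell apart. The code below is a simplified illustration under assumptions of our own (scalar features, plain mean aggregation, no learned weights or non-linearities, a hypothetical five-node path graph) and is not a full GNN.

```python
def propagate(features, neighbors):
    """One round of mean aggregation over each node and its neighbours."""
    out = []
    for node, nbrs in enumerate(neighbors):
        group = [node] + nbrs  # include the node itself (self-loop)
        out.append(sum(features[j] for j in group) / len(group))
    return out


def spread(features):
    """Gap between the largest and smallest node feature."""
    return max(features) - min(features)


def spread_by_depth(features, neighbors, num_layers):
    """Record the feature spread before and after each propagation layer."""
    spreads = [spread(features)]
    for _ in range(num_layers):
        features = propagate(features, neighbors)
        spreads.append(spread(features))
    return spreads


# Hypothetical path graph 0-1-2-3-4 with distinct starting features.
NEIGHBORS = [[1], [0, 2], [1, 3], [2, 4], [3]]
FEATURES = [0.0, 1.0, 4.0, 9.0, 16.0]
SPREADS = spread_by_depth(FEATURES, NEIGHBORS, 32)
```

In this sketch the spread never grows from one layer to the next, and after 32 rounds it is a small fraction of its starting value, which mirrors why simply adding layers does not help.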

There have been a few mild yet significant signs that data science as a job might be slowly losing its sheen.

Over the last few years, the company has open-sourced a number of libraries and tools, especially in the NLP space.

© Analytics India Magazine Pvt Ltd & AIM Media House LLC 2024