
A few days ago, Spain was rattled when nude images of over 20 teenagers began circulating in a town called Almendralejo. The faces of schoolchildren had been morphed onto naked bodies using an app, and at the forefront of this appalling incident was AI deepfake technology. AI-generated deepfake crimes have persisted for years, with no solid regulations to protect people from them. Yet the latest push to formulate AI regulations, fuelled by LLMs, is all about future use cases where machines can go rogue.

However, the present laws that are supposed to deal with deepfakes are too weak. So, why isn’t anyone acting on it?

Forget the Present, Future is Here

The countless AI Senate hearings and summits held this year to shape future AI regulations have created immense buzz. The last one called upon tech honchos to discuss, behind closed doors, a possible Bill on AI regulation. The irony is that the government and tech leaders are taking aggressive precautionary measures against a situation that may or may not occur, while grave issues such as deepfake crimes continue to be ignored.

Deepfakes have gone from surfacing in 2017 to being ranked as one of the most serious AI crime threats, yet the laws around them are still not solid. In the US, crimes related to deepfakes jumped from 0.2% to 2.6% in Q1 2023, with more than 90% being deepfake porn. To date, there is no federal law that criminalises the creation or sharing of non-consensual deepfake porn in the country.

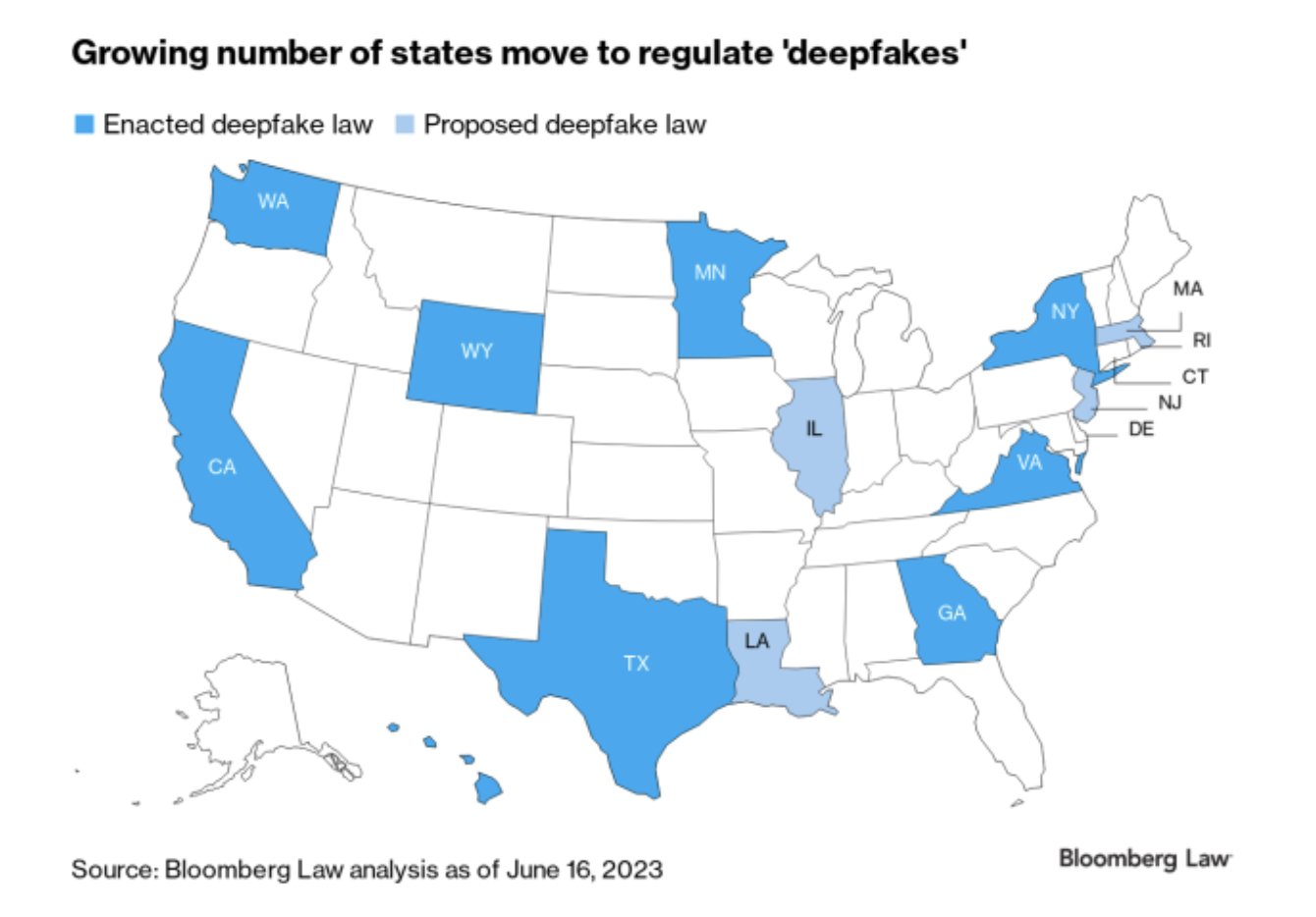

Currently, only a few US states (Hawaii, Texas, Virginia, and Wyoming) have made non-consensual deepfakes a criminal violation, whereas states such as New York and California allow victims to file civil lawsuits. A couple of other states are in the process of proposing laws as well.

Source: Bloomberg

Furthermore, if the creator of deepfake content operates beyond the jurisdiction of the relevant state, the legislation cannot be enforced. With only a handful of states adopting such laws, uniform enforcement across the US seems unlikely.

Considering how deepfake tech is evolving, proving the legitimacy of such cases becomes crucial if any form of law is implemented. Rebecca Delfino, a law professor at Loyola Law School, said that with technology getting better, it becomes difficult to identify if something is fake or not unless one is a digital forensic expert.

If you think that enforcing regulations governing deepfakes is a problem with only the US, think again.

Not So Stringent, After All

The EU, which treats privacy with utmost importance, has surprisingly not sorted out the issue of deepfakes. The EU AI Act focuses on categorising AI systems by level of risk and steering the development of AI systems among member states. However, it handles the issue of deepfakes differently.

The Act does not outright ban the use of deepfakes, but aims to regulate them by imposing transparency requirements on creators. It also states that creators will need to disclose whether content is artificially generated, something the US government has also been discussing with big tech companies, with watermarking as the proposed means. It remains an ambitious project still in progress. Even in the recent Spain incident, the obscurity and lack of laws criminalising such acts lean in favour of the perpetrators.

Furthermore, as in the US, creators of malicious content operating outside the EU's jurisdiction remain an issue. However, in the Far East, things are quite different.

Asian Countries Speed Ahead

Earlier this year, the Cyberspace Administration of China (CAC) released comprehensive legislation to govern deepfake content. It prohibits deepfakes made without user consent and mandates specific identification for content generated using AI. In Singapore, POFMA (Protection from Online Falsehoods and Manipulation Act) prevents the use of deepfake videos.

In India, though no specific laws or regulations ban the use of deepfake technology, Sections 67 (punishment for publishing or transmitting obscene material in electronic form) and 67A of the IT Act (2000) have elements that can be applied in similar scenarios. Offences such as defamation and publishing explicit content, where they fall under the Act, can be invoked in favour of deepfake victims.

Though Asian countries are speeding up legislation on cybercrimes, which also extends to AI-related ones, cross-border challenges can hinder its full implementation. For instance, in spite of stringent laws within China, deepfake apps from China-based developers have been downloaded over a million times by users in the US. Until the EU and the US, which are considered the forerunners in formulating AI laws, implement stringent rules around deepfakes, the situation will never be fully addressed.

While the US will actively debate and discuss whether AI will bring about the doom of humanity in the future, present AI-related crimes such as deepfakes will continue to have grave consequences.