Innovators from the Indian Institute of Technology (IIT) Jodhpur and the All India Institute of Medical Sciences (AIIMS) Jodhpur have jointly developed low-cost ‘Talking Gloves’ for people with a speech disability. The device costs less than Rs 5000 and uses Artificial Intelligence (AI) and Machine Learning (ML) to automatically generate language-independent speech, facilitating communication between people who cannot speak and those around them. It converts hand gestures into text or pre-recorded voices, helping a differently-abled person communicate their message effectively and independently.

Prof Sumit Kalra, Assistant Professor, Department of Computer Science and Engineering, IIT Jodhpur, and his team of innovators, including Dr Arpit Khandelwal from IIT Jodhpur and Dr Nithin Prakash Nair (SR, ENT), Dr Amit Goyal (Prof & Head, ENT) and Dr Abhinav Dixit (Prof, Dept of Physiology) from AIIMS Jodhpur, have recently acquired a patent for this innovation.

“The language-independent speech generation device will bring the people back to the mainstream in today’s global era without any language barrier. Users of the device only need to learn once, and they would be able to verbally communicate in any language with their knowledge. Additionally, the device can be customised to produce a voice similar to the original voice of the patients, which makes it appear more natural while using the device,” said Prof Sumit Kalra, Assistant Professor, Department of Computer Science and Engineering, IIT Jodhpur.

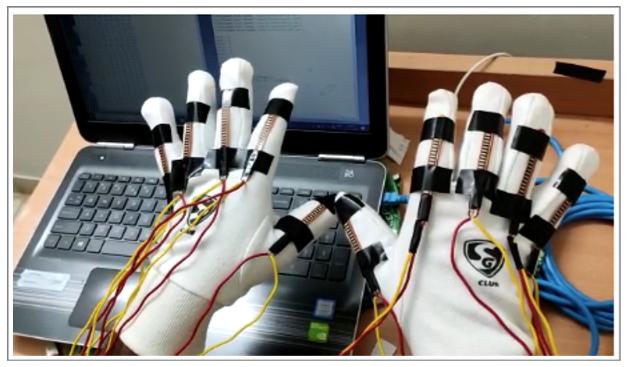

In the device, a first set of sensors, worn on a combination of the thumb, finger(s), and/or wrist of one hand, generates electrical signals in response to finger, thumb, hand and wrist movements. A second set of sensors on the other hand generates electrical signals in the same way.

These electrical signals are received at a signal processing unit.

The signal processing unit compares the magnitude of the received electrical signals with a plurality of predefined combinations of magnitudes stored in a memory. Using AI and ML algorithms, it translates these combinations of signals into phonetics, each corresponding to at least one consonant and a vowel, and assigns a phonetic to the received signals based on the comparison. In an example implementation, the consonant and the vowel can be from Hindi language phonetics.
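The comparison step can be illustrated with a minimal sketch. It assumes each reading is a tuple of per-sensor magnitudes and uses a plain nearest-template lookup in place of the AI and ML algorithms, which the article does not detail. The template values, the phonetic labels, the `assign_phonetic` function and the `max_distance` threshold are all hypothetical.

```python
import math

# Hypothetical stored combinations: one magnitude per sensor -> phonetic label.
# The values and labels are invented purely for illustration.
TEMPLATES = {
    "ka": (0.9, 0.1, 0.1, 0.2, 0.0),
    "ki": (0.9, 0.8, 0.1, 0.2, 0.0),
    "ma": (0.1, 0.1, 0.9, 0.7, 0.3),
}


def assign_phonetic(reading, templates=TEMPLATES, max_distance=0.5):
    """Return the label of the closest stored combination, or None if none is close."""
    best_label, best_dist = None, float("inf")
    for label, template in templates.items():
        # Euclidean distance between the reading and the stored magnitudes.
        dist = math.dist(reading, template)
        if dist < best_dist:
            best_label, best_dist = label, dist
    # Reject readings that do not resemble any stored combination.
    return best_label if best_dist <= max_distance else None


print(assign_phonetic((0.85, 0.15, 0.1, 0.25, 0.05)))
print(assign_phonetic((0.5, 0.5, 0.5, 0.5, 0.5)))
```

The rejection threshold is a design choice of this sketch: without it, every hand position, including a resting one, would be forced onto some phonetic.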

An audio transmitter generates an audio signal corresponding to the assigned phonetic, based on trained data associated with vocal characteristics stored in a machine learning unit. Generating audio signals for phonetics that combine vowels and consonants produces speech and enables people with speech impairments to communicate audibly with others. Because the speech synthesis technique works at the level of phonetics, the speech generation is independent of any language.
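As a rough illustration of how a sequence of assigned phonetics could become an utterance, the sketch below concatenates one stored audio clip per phonetic. This is a simple concatenative approach chosen for clarity, not the patented method, and it does not model the trained vocal characteristics. The `VOICE_BANK` contents, the sine-tone placeholder clips, the sample rate and the function names are assumptions.

```python
import math

SAMPLE_RATE = 8000  # samples per second; an assumed value


def placeholder_clip(freq_hz, n_samples=1600):
    """Stand-in for a recorded phonetic unit: a short sine tone."""
    return [math.sin(2 * math.pi * freq_hz * i / SAMPLE_RATE) for i in range(n_samples)]


# Hypothetical per-user voice bank: phonetic label -> audio samples.
VOICE_BANK = {
    "ka": placeholder_clip(220.0),
    "ma": placeholder_clip(330.0),
}


def synthesize(phonetics, voice_bank=VOICE_BANK):
    """Concatenate the stored clip for each phonetic, skipping unknown labels."""
    samples = []
    for label in phonetics:
        samples.extend(voice_bank.get(label, []))
    return samples


utterance = synthesize(["ka", "ma"])
print(len(utterance) / SAMPLE_RATE, "seconds of audio")
```

In a real device the placeholder tones would be replaced by clips or model output matching the user's voice.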

In recent years, technological advancements in electro-medical devices for life support, implantable biomedical devices, and wearable medical devices have successfully provided artificial abilities to people with many kinds of disability or impairment. The patented innovation from IIT Jodhpur and AIIMS Jodhpur is a big step forward in this field.

The team is working to further improve the device's durability, weight, responsiveness, and ease of use. The product will be commercialised through a startup incubated by IIT Jodhpur.