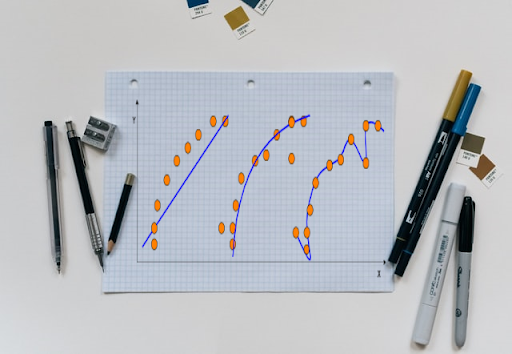

Building a machine learning model always involves a trade-off between underfitting and overfitting. Finding the sweet spot between the two requires diligent hyperparameter tuning.

Researchers from top organisations and academia have done a lot of work to automate the hyperparameter tuning process. Commercial offerings such as Google AutoML, Amazon SageMaker and SigOpt, among others, productise Hyperparameter Optimisation algorithms.

However, these offerings typically provide neither the extensibility for users to integrate their own Hyperparameter Optimisation (HPO) algorithms nor the scalability to utilise a large pool of on-premise computing resources.

Now, researchers from the LG AI team have introduced a framework called Auptimizer (automated optimizer), which automates the entire HPO workflow:

- Initialise search space and configuration

- Propose values for hyperparameters

- Train model and update result

- Repeat the second and third steps
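The four steps above can be sketched as a plain loop. This is a minimal illustration rather than Auptimizer's own code: it assumes random search as the proposal strategy, and the names `propose`, `train_and_score` and `run_hpo`, along with the toy objective, are hypothetical.

```python
import random


def propose(search_space, rng):
    """Step 2: draw one candidate value per hyperparameter (plain random search)."""
    return {name: rng.uniform(low, high) for name, (low, high) in search_space.items()}


def train_and_score(params):
    """Step 3 stand-in: a toy objective replaces real model training (lower is better)."""
    return (params["learning_rate"] - 0.1) ** 2 + (params["dropout"] - 0.5) ** 2


def run_hpo(search_space, n_trials, seed=0):
    rng = random.Random(seed)
    history = []
    for _ in range(n_trials):                  # Step 4: repeat steps 2 and 3
        params = propose(search_space, rng)    # Step 2: propose values
        score = train_and_score(params)        # Step 3: train the model
        history.append((score, params))        # Step 3: record the result
    best = min(history, key=lambda item: item[0])
    return best, history


# Step 1: initialise the search space and configuration (hypothetical ranges).
search_space = {"learning_rate": (0.001, 1.0), "dropout": (0.0, 0.9)}
(best_score, best_params), history = run_hpo(search_space, n_trials=50)
print(best_score, best_params)
```

In a real experiment, `train_and_score` would launch the training script and return a validation metric.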

How It Works

The two key components of Auptimizer are the Resource Manager and the Proposer, supported by Tracking and Visualisation components. The Proposer controls how Auptimizer interacts with HPO algorithms to recommend new hyperparameter values. The Proposer interface reduces the effort needed to implement an HPO algorithm by defining two functions:

- get_param() to return the new hyperparameter values

- update() to update the history
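A two-function interface of this kind might look like the sketch below. Only the method names `get_param()` and `update()` come from the description above. The class names, constructor arguments and the exact `update()` signature are assumptions made for illustration and may differ from Auptimizer's real classes.

```python
import random
from abc import ABC, abstractmethod


class Proposer(ABC):
    """Hypothetical base class that every HPO algorithm would subclass."""

    def __init__(self):
        self.history = []

    @abstractmethod
    def get_param(self):
        """Return the next set of hyperparameter values to try."""

    def update(self, params, score):
        """Record the outcome of a finished trial in the history."""
        self.history.append((params, score))


class RandomProposer(Proposer):
    """Random search expressed through the two-function interface."""

    def __init__(self, search_space, seed=0):
        super().__init__()
        self.search_space = search_space
        self.rng = random.Random(seed)

    def get_param(self):
        return {
            name: self.rng.uniform(low, high)
            for name, (low, high) in self.search_space.items()
        }


proposer = RandomProposer({"learning_rate": (0.001, 0.1)})
params = proposer.get_param()
proposer.update(params, score=0.42)  # 0.42 is a made-up validation score
```

An algorithm that learns from earlier trials would override `update()` as well, using the history to steer what `get_param()` returns next.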

The other component, the Resource Manager, automatically connects computing resources to model training, so that code runs on resources as they become available. It also sets up a callback mechanism that triggers the update() function when a job finishes.
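The callback pattern can be illustrated with Python's standard `concurrent.futures` module. In this sketch a local thread pool stands in for real computing resources, and the `ResourceManager` class, its methods and the toy `train` function are hypothetical rather than Auptimizer's actual implementation.

```python
from concurrent.futures import ThreadPoolExecutor


class ResourceManager:
    """Hypothetical manager: a thread pool stands in for CPUs, GPUs or remote nodes."""

    def __init__(self, n_workers):
        self.pool = ThreadPoolExecutor(max_workers=n_workers)

    def run(self, job, params, callback):
        # The job starts as soon as a worker is free.
        future = self.pool.submit(job, params)
        # When the job finishes, its score is passed on to the callback.
        future.add_done_callback(lambda done: callback(params, done.result()))
        return future

    def shutdown(self):
        self.pool.shutdown(wait=True)


def train(params):
    """Toy training job that returns a score (lower is better)."""
    return (params["x"] - 3.0) ** 2


results = []


def update(params, score):
    """Stand-in for the Proposer's update(): record the finished trial."""
    results.append((params["x"], score))


manager = ResourceManager(n_workers=2)
for x in [1.0, 2.0, 3.0, 4.0]:
    manager.run(train, {"x": x}, update)
manager.shutdown()  # wait for all jobs and their callbacks to complete
print(sorted(results))
```

Because results arrive in completion order rather than submission order, the callback receives the parameters together with the score.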

Why Use It

Auptimizer is designed primarily as a tool for data scientists. It is also meant to help researchers and developers easily extend the framework to other HPO algorithms and computing resources. Its design goals centre on a user-friendly interface that benefits both practitioners and researchers by simplifying the integration and development of HPO algorithms.

Auptimizer targets the most common factors that keep HPO algorithms from performing at their best on most problems: flexibility, usability, scalability and extensibility. To reach these goals, its design fulfils the following requirements:

- Flexibility: In Auptimizer, all implemented HPO algorithms share the same interface which enables users to switch between different algorithms without changes in the code.

- Usability: Integrating an existing ML project into an HPO package is time-consuming, and users most often need to rewrite their code for a specific HPO toolbox. Auptimizer eases this by reducing the friction of bringing existing code into the framework.

- Scalability: Auptimizer can deploy to a pool of computing resources to automatically scale out the experiment, and users only need to specify the resource. This helps the users to train their models efficiently with all computing resources available.

- Extensibility: New HPO algorithms can be easily integrated into the Auptimizer framework as long as they follow the specified interface.
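The flexibility and extensibility points can be made concrete with a small sketch in which the algorithm is chosen by name and the tuning loop never changes. Everything here is hypothetical (the `PROPOSERS` registry, both proposer classes, `tune` and the toy objective); it only shows how a shared interface lets algorithms be swapped or added without touching the calling code.

```python
import itertools
import random


class RandomProposer:
    """Picks each hyperparameter at random from its list of candidate values."""

    def __init__(self, space, seed=0):
        self.space = space
        self.rng = random.Random(seed)

    def get_param(self):
        return {name: self.rng.choice(values) for name, values in self.space.items()}

    def update(self, params, score):
        pass  # random search does not learn from past results


class GridProposer:
    """Walks through every combination of candidate values in order."""

    def __init__(self, space):
        names = list(space)
        self.grid = (
            dict(zip(names, combo)) for combo in itertools.product(*space.values())
        )

    def get_param(self):
        return next(self.grid)

    def update(self, params, score):
        pass  # grid search does not learn from past results


# Adding a new algorithm means adding one entry here; tune() stays the same.
PROPOSERS = {"random": RandomProposer, "grid": GridProposer}


def objective(params):
    """Toy objective standing in for model training (lower is better)."""
    return abs(params["lr"] - 0.01) + abs(params["layers"] - 2)


def tune(algorithm, space, n_trials):
    proposer = PROPOSERS[algorithm](space)
    best = None
    for _ in range(n_trials):
        params = proposer.get_param()
        score = objective(params)
        proposer.update(params, score)
        if best is None or score < best[0]:
            best = (score, params)
    return best


space = {"lr": [0.1, 0.01], "layers": [1, 2]}
grid_best = tune("grid", space, n_trials=4)      # 4 trials cover the full 2 x 2 grid
random_best = tune("random", space, n_trials=4)
```

Switching from grid search to random search is a one-word change in the call to `tune`.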

Key Points

- Auptimizer addresses a number of challenges by reducing the effort needed to use and switch between HPO algorithms.

- This framework provides scalability for cloud / on-premise resources.

- It simplifies the process of integrating new HPO algorithms and new resource schedulers.

- Auptimizer tracks the results for reproducibility.

Outlook

Tuning machine learning models at scale, and more specifically finding the right hyperparameter values to build a robust model, can be difficult and time-consuming. Auptimizer provides a universal platform for developing new algorithms efficiently. One crucial benefit for data scientists is that it requires only minimal changes to existing scripts or code; once modified, these can be reused directly for other projects and research.

Read the paper here.