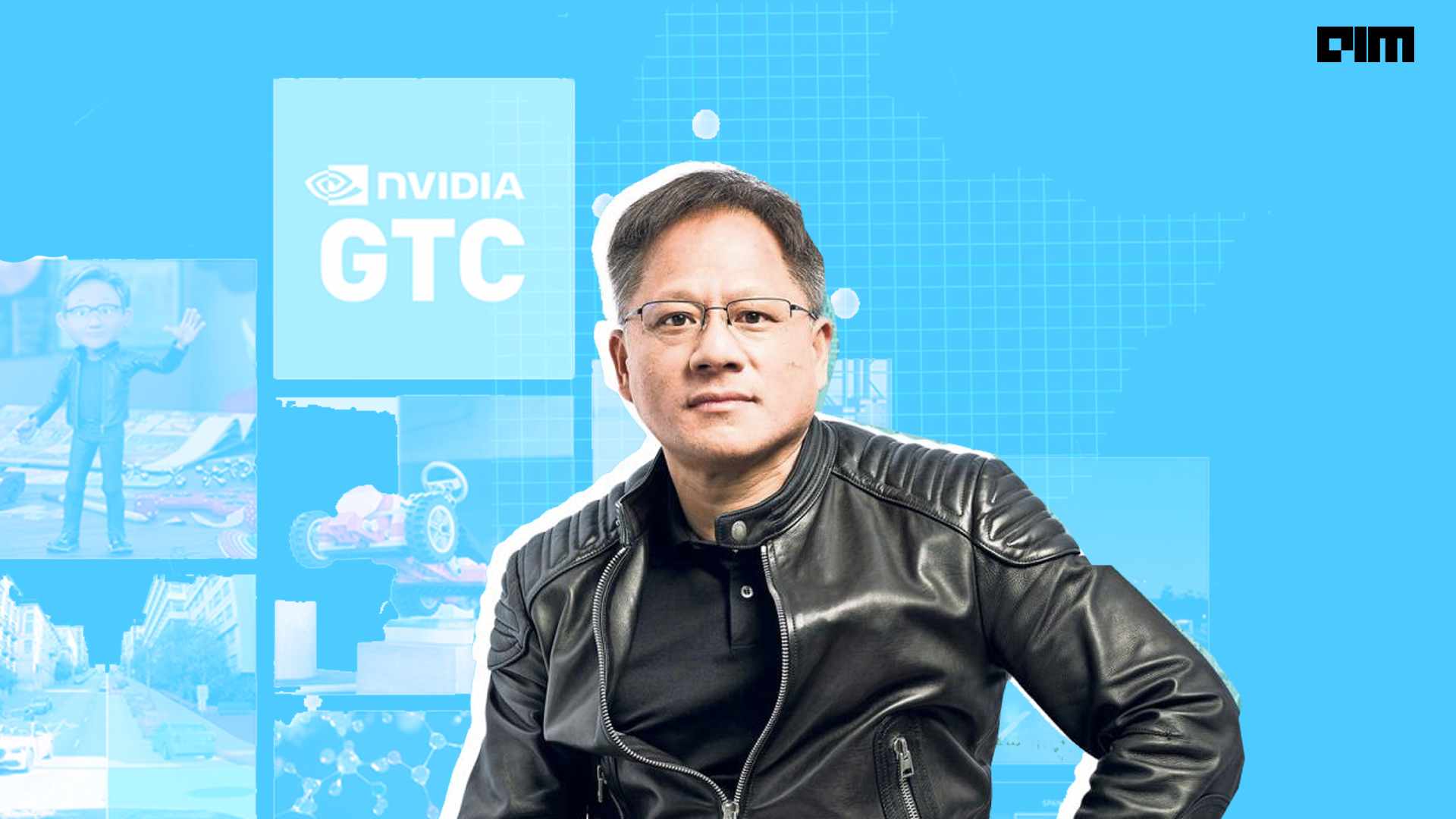

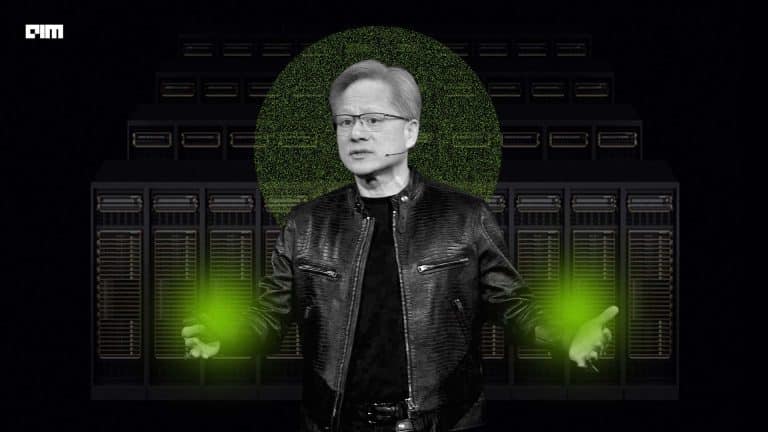

NVIDIA GTC 2022 began on March 21 and will continue until March 24. One of the highlights of the event was CEO Jensen Huang’s keynote address. The two-hour speech came with several announcements covering AI advancements, Omniverse, data centre GPUs, and more. Huang’s digital avatar, which was dressed like him and spoke just like him, made several appearances throughout the speech.

The major announcements were as follows:

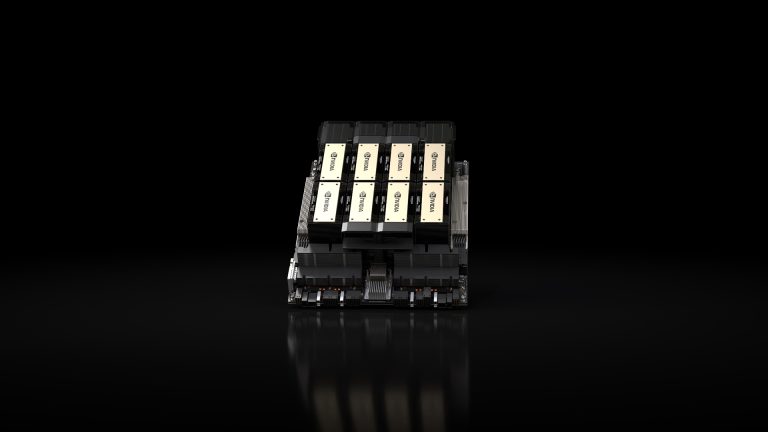

Hopper architecture

Huang introduced the new NVIDIA H100 Tensor Core GPU, which is based on the Hopper architecture. The H100 is NVIDIA’s ninth-generation data centre GPU, designed to deliver high performance for large-scale AI and HPC workloads. In terms of design, H100 builds on the previous A100 model with substantial improvements in architectural efficiency. According to the company, H100 is the first truly asynchronous GPU. It extends A100’s global-to-shared asynchronous transfers across address spaces and adds support for tensor memory access patterns.

Other important features include the new Transformer Engine, which NVIDIA says enables H100 to deliver nine times faster AI training and up to 30 times faster AI inference than the previous generation. Further, the new NVLink Network interconnect enables GPU-to-GPU communication among up to 256 GPUs across multiple compute nodes.

Accelerated digital twins platform

NVIDIA announced an accelerated digital twins platform for scientific computing. The platform combines the Modulus AI framework, used for developing physics-informed machine learning neural network models, with the NVIDIA Omniverse 3D virtual world simulation platform. According to NVIDIA, it can create interactive, real-time AI simulations that accurately reflect the real world, and it can run simulations such as computational fluid dynamics up to 10,000 times faster than traditional methods. The goal is to make it easier for researchers to model complex systems with higher accuracy and speed than previous models allowed.

NVIDIA Modulus uses both the data and the governing physical principles to train a neural network, producing an AI surrogate model for the digital twin. The twin then infers system behaviour in real time, which enables a dynamic and iterative workflow. The integration with Omniverse handles visualisation and interactive exploration.
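To illustrate the general idea of training on both data and governing principles, here is a minimal sketch of a physics-informed loss in plain Python. It does not use the Modulus API: the function `physics_informed_loss`, the toy equation du/dt = -k·u, and the candidate surrogates are all assumptions chosen for illustration, and a finite difference stands in for the automatic differentiation a real framework would use.

```python
import math

def physics_informed_loss(u, t_data, u_data, t_colloc, k=1.0, weight=1.0, h=1e-4):
    """Hypothetical loss: data misfit plus the residual of du/dt = -k*u."""
    # Data term: mean squared error against the observed samples.
    data_loss = sum((u(t) - y) ** 2 for t, y in zip(t_data, u_data)) / len(t_data)
    # Physics term: how badly u violates the governing equation at
    # collocation points (central finite difference approximates du/dt).
    residuals = [(u(t + h) - u(t - h)) / (2 * h) + k * u(t) for t in t_colloc]
    physics_loss = sum(r ** 2 for r in residuals) / len(residuals)
    return data_loss + weight * physics_loss

def make_candidate(a):
    """Toy surrogate family u(t) = exp(-a*t); a real surrogate would be a neural network."""
    return lambda t: math.exp(-a * t)

# Synthetic "measurements" generated from the true solution with k = 1.
t_data = [0.0, 0.5, 1.0]
u_data = [math.exp(-t) for t in t_data]
t_colloc = [i / 10 for i in range(11)]

loss_true = physics_informed_loss(make_candidate(1.0), t_data, u_data, t_colloc)
loss_wrong = physics_informed_loss(make_candidate(2.0), t_data, u_data, t_colloc)
print(loss_true < loss_wrong)
```

A candidate that agrees with both the samples and the equation scores a lower loss than one that does not, which is the signal a training loop would minimise when fitting the surrogate.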

NVIDIA Omniverse announcements

Since first announcing Omniverse, NVIDIA has regularly introduced enhancements and improvements to its 3D design and simulation platform. GTC 2022 was no different. Huang unveiled a set of tools and resources for Omniverse, including a framework for developing fully animated digital avatars.

NVIDIA also announced Omniverse Cloud, a suite of cloud services that provides instant access to the Omniverse platform. One of its services is Nucleus Cloud, a simple collaboration and sharing tool that lets artists access and edit large 3D scenes from anywhere without having to transfer massive datasets. Another is Omniverse Create, which technical designers, artists, and creators can use to build interactive 3D worlds in real time. For non-technical users, there is Omniverse View, which streams Omniverse scenes with full simulation and rendering capabilities.

NVIDIA also introduced Warp, a new Python framework for writing differentiable graphics and simulation code that runs on the GPU. It provides the building blocks for writing high-performance simulation code while keeping the productivity of working in an interpreted language.

AI models and libraries

NVIDIA introduced a range of software tools for building industry-leading AI applications.

These include:

- Triton: It is an open-source hyperscale model inference solution. With the latest update, Triton includes a Model Navigator for accelerated deployment of optimised models, a Management Service for efficient scaling in Kubernetes, and a Forest Inference Library that enables inference on tree-based models with explainability.

- Riva 2.0: NVIDIA Riva is a speech AI SDK that lets developers customise real-time speech AI applications. The latest version includes speech recognition in seven languages, custom tuning with the NVIDIA TAO toolkit, and deep learning-based text-to-speech with human-like voices.

- NeMo Megatron 0.9: NeMo Megatron is used by researchers for training large language models. The latest version adds new optimisations and tools that shorten end-to-end development and training time, along with support for training in the cloud.

- Merlin 1.0: It is an end-to-end AI framework for building high-performing recommenders at scale. The new version has two libraries, Merlin Models and Merlin Systems, which help users choose the best-fitting features and models for a specific use case.

- Maxine: It is an SDK that uses AI to enhance audio and video quality in real-time communications. In its latest form, Maxine includes acoustic echo cancellation and audio super-resolution.

NVIDIA DRIVE Hyperion 9

The newly introduced DRIVE Hyperion 9 is NVIDIA’s next-generation open platform for automated and autonomous vehicles. It is a programmable architecture built on multiple DRIVE Atlan computers to deliver intelligent driving and in-cabin functionality.

The platform is compatible across generations, so users can leverage their current investments in the DRIVE Orin platform and migrate to DRIVE Atlan and beyond.