The Paris-based AI startup that believes in open source, and raised a record seed round before it even had a product, has shown that it is here to make AI better and fun at the same time. Mistral AI has released a 7-billion-parameter model that outperforms Llama 2 13B on all benchmarks and Llama 1 34B on several benchmarks.

What’s interesting is that before releasing the open source model on GitHub, Mistral AI decided to post the torrent magnet link on X, promoting the true essence of open source.

7B model coming up?

— Mohit Pandey (@MohitingAround) September 27, 2023

Mistral 7B was released under the Apache 2.0 licence, which means that it is actually open source, unlike Meta’s Llama 2, which carries several restrictions on usage. The model can therefore be used for both research and commercial purposes. Mistral 7B also shows that a small model can perform better than much larger counterparts, which strengthens the case for open source.

The best open source model?

Mistral AI says it is proud that Mistral 7B is the most powerful model of its size to date. The release also includes a model fine-tuned for chat, which outperforms Llama 2 13B chat. Going by the benchmarks, it is indeed a proud moment for the team: the model outperforms Llama 2 13B on all of them.

The model comes very close to CodeLlama 7B on coding benchmarks. It can be downloaded from GitHub or via torrent and, at just 13.4 GB, run locally. It can also be deployed on AWS, Google Cloud, or Azure using the vLLM inference server and SkyPilot. Users on Hacker News say that they have already started running it on an M1 MacBook Air and are comparing its performance with GPT-3.5.
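As a rough sanity check on that download size, the footprint of a model's weights can be estimated as parameter count times bytes per parameter. The sketch below assumes the rounded figure of 7 billion parameters and ignores file metadata and runtime memory such as activations. The 8-bit and 4-bit rows are illustrative assumptions about quantised copies, not something the release describes.

```python
def weights_size_gb(n_params: float, bits_per_param: int) -> float:
    """Approximate size of the raw weights in decimal gigabytes (1 GB = 1e9 bytes)."""
    return n_params * bits_per_param / 8 / 1e9


# Assumption: the rounded "7 billion" figure, not an exact parameter count.
N_PARAMS = 7e9

for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label}: ~{weights_size_gb(N_PARAMS, bits):.1f} GB")
```

At 16 bits per parameter the estimate lands in the same range as the reported 13.4 GB, and the lower-precision rows suggest why a model of this size is plausible on a laptop.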

Furthermore, Mistral 7B Instruct, a model fine-tuned on instruction datasets available on Hugging Face, outperformed all 7B models on MT-Bench and came close to 13B chat models. The blog reads, “No tricks, no proprietary data.”

The next Mistral step is not open source

It is not as if Mistral AI does not understand the problems with open source. The team has opened a GitHub repository and a Discord channel to discuss problems and fix them with the community. It is also calling on universities and researchers to find flaws in the model and help improve it. “Our ambition is to become the leading supporter of the open generative AI community, and bring open models to state-of-the-art performance,” says the team.

Mistral, however, is not going down the open source route alone. The team has announced that it is developing, in parallel, a commercial product that will be optimised for proprietary data and private cloud deployment. “These models will be distributed as white-box solutions, making both weights and code sources available. We are actively working on hosted solutions and dedicated deployment for enterprises.”

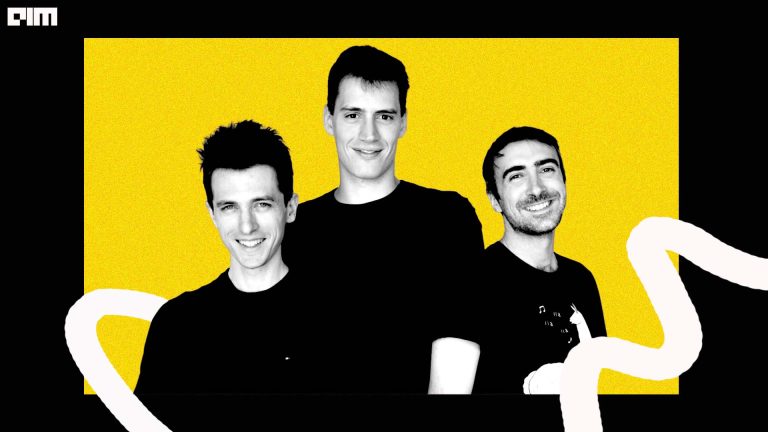

Mistral AI was founded by Arthur Mensch from DeepMind, and Guillaume Lample and Timothée Lacroix from Meta AI. Lample was one of the core members of the team behind Meta AI’s LLaMA model, which has been leading the way in open source. The company believes that open source is the way forward for AI. The trio said that the release of OpenAI’s GPT models felt like a shot in the arm for a lot of people in the AI world. “We could see the technology really start to accelerate last year,” said Mensch.

Now that the company has shown that its smaller model can outperform others, the larger models it aims to build are likely to be its money-maker. Let’s hope that it sticks to its word.

OpenAI also started out as an open source company, and we now know where it is headed. While OpenAI might have achieved AGI internally, Nathan Lambert joked on X: “The year is 2028, MistralAI releases their 38th torrent link, with the text only ‘AGI achieved externally’, Apache 2.0.”