
In a reverse trend of sorts, researchers are now looking for ways to reduce the huge computational cost and size of language models without hampering their accuracy.

Source: neuralmagic.com

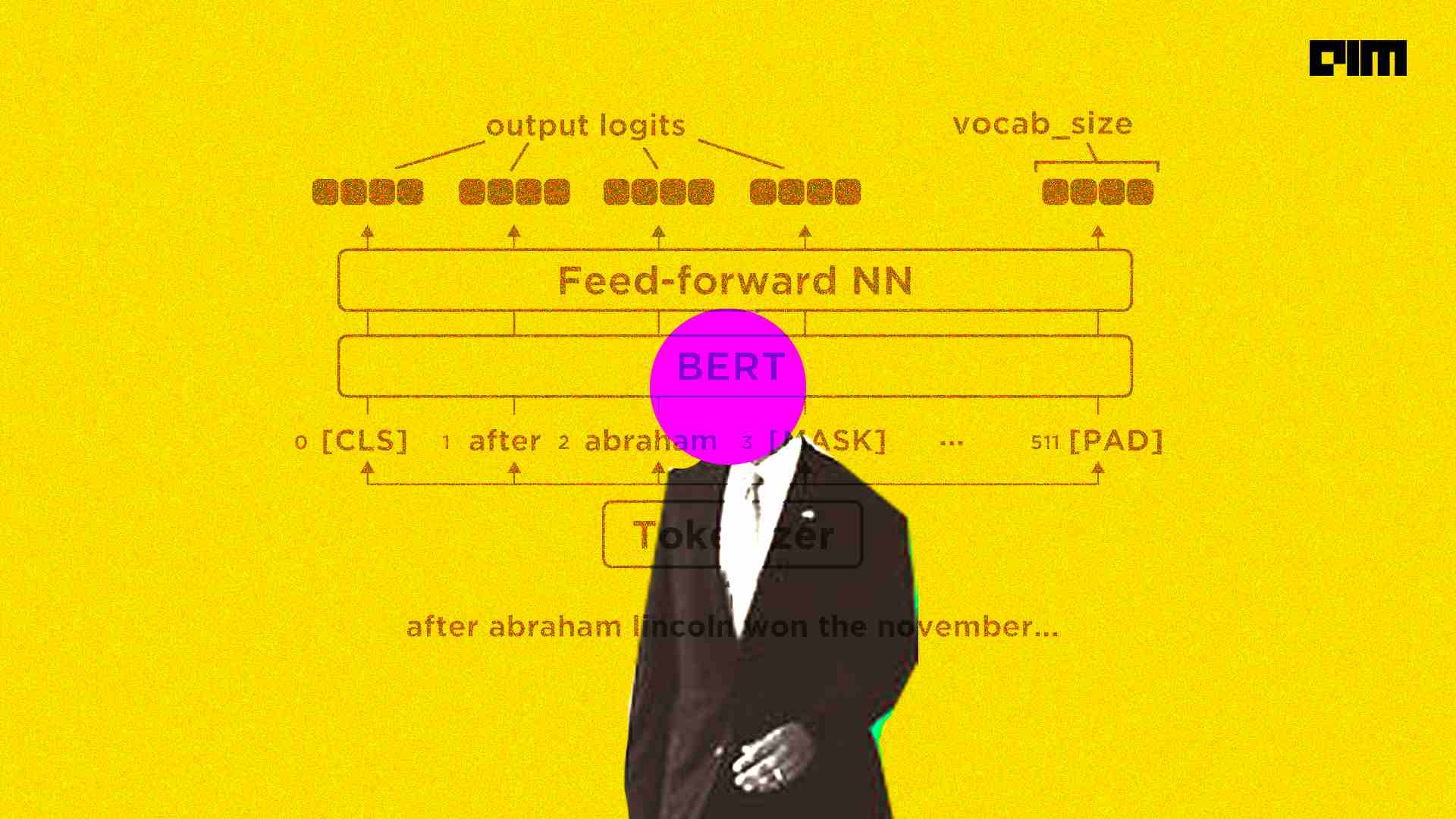

In this endeavour, US-based Neural Magic, in collaboration with Intel Corporation, has developed its own 'pruned' version of BERT-Large that is eight times faster and 12 times smaller in storage size. To achieve this, the researchers combined pruning and sparsification in the pre-training stage to create general, sparse architectures that are then fine-tuned and quantised on datasets for standard tasks such as SQuAD for question answering. This method resulted in highly compressed networks with no considerable loss of accuracy relative to the unoptimised models. As part of the research, Intel has released the Prune Once for All (Prune OFA) models on Hugging Face.
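To make the two operations concrete, here is a minimal sketch of magnitude pruning followed by symmetric 8-bit quantisation on a toy weight list. It is an illustration only and not the Prune OFA recipe itself: the function names, the made-up weights and the 80% sparsity target are all assumptions chosen for the example.

```python
def magnitude_prune(weights, sparsity):
    """Zero out the fraction `sparsity` of weights with the smallest magnitude."""
    n_prune = int(len(weights) * sparsity)
    # Indices ordered by absolute value, smallest first.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    pruned = list(weights)
    for i in order[:n_prune]:
        pruned[i] = 0.0
    return pruned


def quantize_int8(weights):
    """Symmetric per-tensor quantisation to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(q, scale):
    """Map the integers back to approximate floating-point weights."""
    return [v * scale for v in q]


# Hypothetical weights; a real layer would hold millions of values.
weights = [0.8, -0.05, 0.3, 0.01, -0.6, 0.02, 0.0, -0.9, 0.1, 0.04]

pruned = magnitude_prune(weights, sparsity=0.8)  # assumed sparsity target
q, scale = quantize_int8(pruned)
restored = dequantize(q, scale)

print("pruned:   ", pruned)
print("quantised:", q)
```

Only the two largest weights survive pruning, and each is then stored as a single signed byte plus one shared scale factor. In practice the model is trained further after pruning so that the remaining weights can compensate for the removed ones, which this toy example does not attempt.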

Deployment with DeepSparse

The DeepSparse Engine is specifically engineered to accelerate sparse and sparse-quantized networks. It leverages sparsity to reduce the overall compute and takes advantage of the CPU's large caches to access memory faster. With this method, GPU-class performance can be achieved on commodity CPUs. Combining DeepSparse with the Prune Once for All sparse-quantized models yields 11x higher throughput and 8x better performance in latency-based applications, beating BERT-base and achieving DistilBERT-level performance without sacrificing accuracy.
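The intuition behind the reduced compute can be sketched in a few lines: if zero weights are never stored, they are never multiplied either. The snippet below is a toy illustration with hypothetical names and values, and it makes no claim about how the DeepSparse Engine is implemented internally.

```python
def to_sparse(row):
    """Keep only (index, value) pairs for the non-zero weights."""
    return [(i, w) for i, w in enumerate(row) if w != 0]


def dense_dot(row, x):
    """Dot product over every weight; returns (result, multiplications)."""
    return sum(w * xi for w, xi in zip(row, x)), len(row)


def sparse_dot(sparse_row, x):
    """Dot product over non-zero weights only; returns (result, multiplications)."""
    return sum(w * x[i] for i, w in sparse_row), len(sparse_row)


# Hypothetical quantised weight row with 80% zeros, and an input vector.
row = [0, 0, 113, 0, 0, 0, 0, -127, 0, 0]
x = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]

dense_result, dense_macs = dense_dot(row, x)
sparse_result, sparse_macs = sparse_dot(to_sparse(row), x)

print(dense_result, dense_macs)
print(sparse_result, sparse_macs)
```

Both versions return the same value, but the sparse one performs two multiplications instead of ten. The compact representation also occupies less memory, which is consistent with the article's point about making better use of CPU caches.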

Source: neuralmagic.com

The graph above contrasts two approaches: scaling a network's structured size and sparsifying it to remove redundancies. DistilBERT, the fastest of the models, has the fewest layers and channels and the lowest accuracy. With more layers and channels, BERT-base is slower but more accurate. Finally, BERT-Large is the largest and most accurate model but has the slowest inference. Despite its reduced number of parameters, the sparse-quantized BERT-Large is close in accuracy to the dense version and runs inference 8x faster. So, while the larger optimisation space helped during training, not all of these pathways were necessary to maintain accuracy. The redundancies in these larger networks become even more apparent when comparing the file sizes needed to store the models, as shown in the graph below.

Source: neuralmagic.com
