Last week, the internet went berserk over Sora, OpenAI’s first video-generation model. At the same time, AI experts and researchers from competing companies were quick to dissect and criticise Sora’s transformer model, igniting a physics debate of sorts.

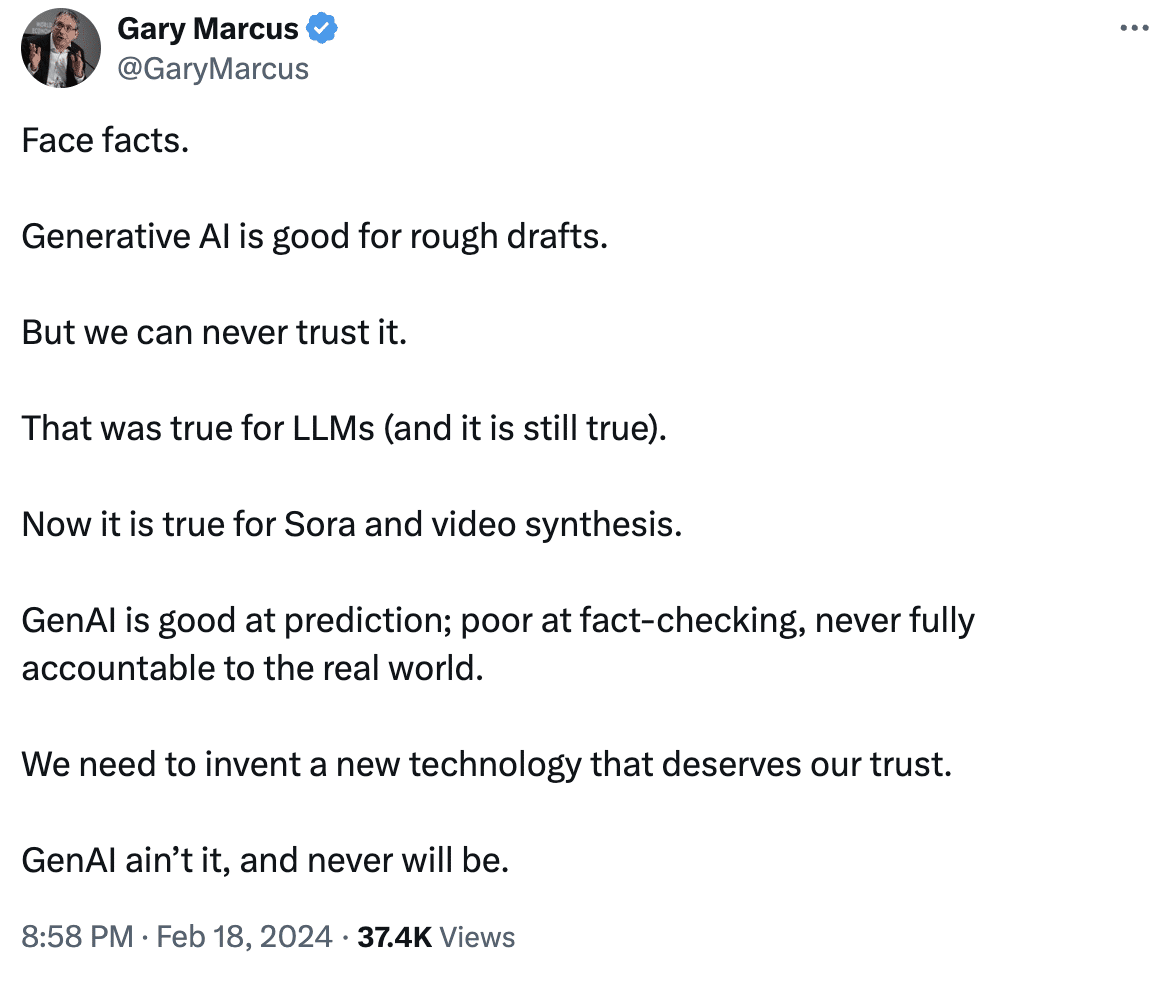

AI scientist Gary Marcus was among the many who criticised not just the accuracy of the videos generated by Sora, but also the generative AI model used for video synthesis.

Competitors Unite

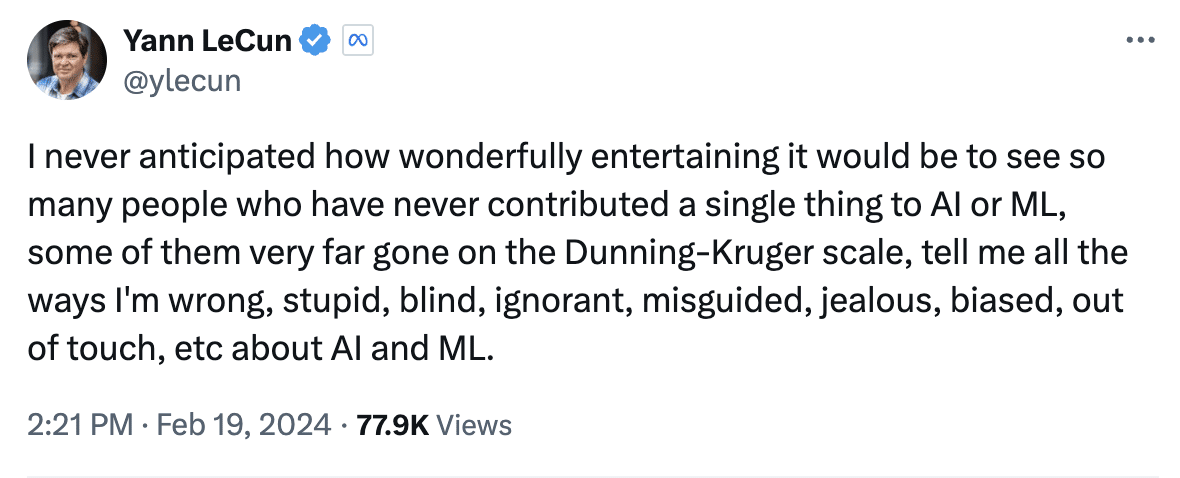

In a move that seemed to undermine Sora’s diffusion model structure, Meta and Google dissed the model’s understanding of the physical world.

Meta chief Yann LeCun said, “The generation of mostly realistic-looking videos from prompts does not indicate that a system understands the physical world. Generation is very different from causal prediction from a world model. The space of plausible videos is very large, and a video generation system merely needs to produce one sample to succeed.”

LeCun went on to differentiate Sora from Meta’s latest AI model, V-JEPA (Video Joint Embedding Predictive Architecture), which analyses interactions between objects in videos. He said, “That is the whole point behind the JEPA (Joint Embedding Predictive Architecture), which is not generative and makes predictions in representation space.” The remark read as a push to make V-JEPA’s self-supervised model seem superior to Sora’s diffusion transformer model.

Researcher and entrepreneur Eric Xing chimed in to support LeCun’s views. “An agent model that can reason based on understanding must go beyond LLMs or DMs,” he said.

The timing of Google’s Gemini 1.5 Pro announcement couldn’t have been better. Videos generated by Sora were fed to Gemini 1.5 Pro, which critiqued the inconsistencies in them and suggested that “it is not a real-life scene”.

Elon Musk was not far behind. He called Tesla’s video-generation capabilities superior to OpenAI’s with respect to predicting accurate physics.

Source: X

While the experts have been quick to dismiss the generative model’s capabilities, the understanding of the ‘physics’ behind the model has been overlooked.

The Physics of Things

Sora uses a transformer architecture similar to that of the GPT models, and OpenAI believes that this foundation will ‘understand and simulate the real world’, a step towards achieving AGI. Though Sora is not called a physics engine, data generated with Unreal Engine 5 may have been used to train its underlying model.

Jim Fan, senior research scientist at NVIDIA, described Sora as a data-driven physics engine. “Sora learns a physics engine implicitly in the neural parameters by gradient descent through massive amounts of videos,” he said, referring to Sora as a learnable simulator or world model.

Fan also pushed back on reductionist views of Sora. “I see some vocal objections: ‘Sora is not learning physics, it’s just manipulating pixels in 2D’. I respectfully disagree with this reductionist view. It’s similar to saying, ‘GPT-4 doesn’t learn coding, it’s just sampling strings’. Well, what transformers do is just manipulate a sequence of integers (token IDs). What neural networks do is just manipulating floating numbers. That’s not the right argument,” he said.

Sora is at the GPT-3 Moment

Perplexity founder Aravind Srinivas, who has been vocal on social media of late, also spoke in support of LeCun. “Reality is Sora, while being amazing, is still not ready yet to model physics accurately,” he said.

Interestingly, OpenAI itself called out the model’s limitations before anyone else could point them out. The company blog states that Sora may struggle to accurately simulate the physics of a complex scene and may not understand specific instances of cause and effect. It may also confuse the spatial details of a prompt and struggle with instructions such as following a specific camera trajectory.

Fan has also likened Sora to the ‘GPT-3 moment’ of 2020, when the model required ‘heavy prompting and babysitting’. GPT-3 was nonetheless the ‘first compelling demonstration of in-context learning as an emergent property’.

The current limitations do not take away from the quality of the output. When OpenAI acquired Global Illumination, a digital product company that created the open-source, Minecraft-like game Biomes, in August last year, there was speculation that the deal was aimed at video generation and at building simulation platforms with autonomous agents.

Now, with the release of Sora, the potential to disrupt the video game industry has only grown. If Sora is at its GPT-3 moment, its GPT-4 moment is hard to even imagine. Until then, sceptics will continue to debate and probably teach one another a thing or two.

Source: X