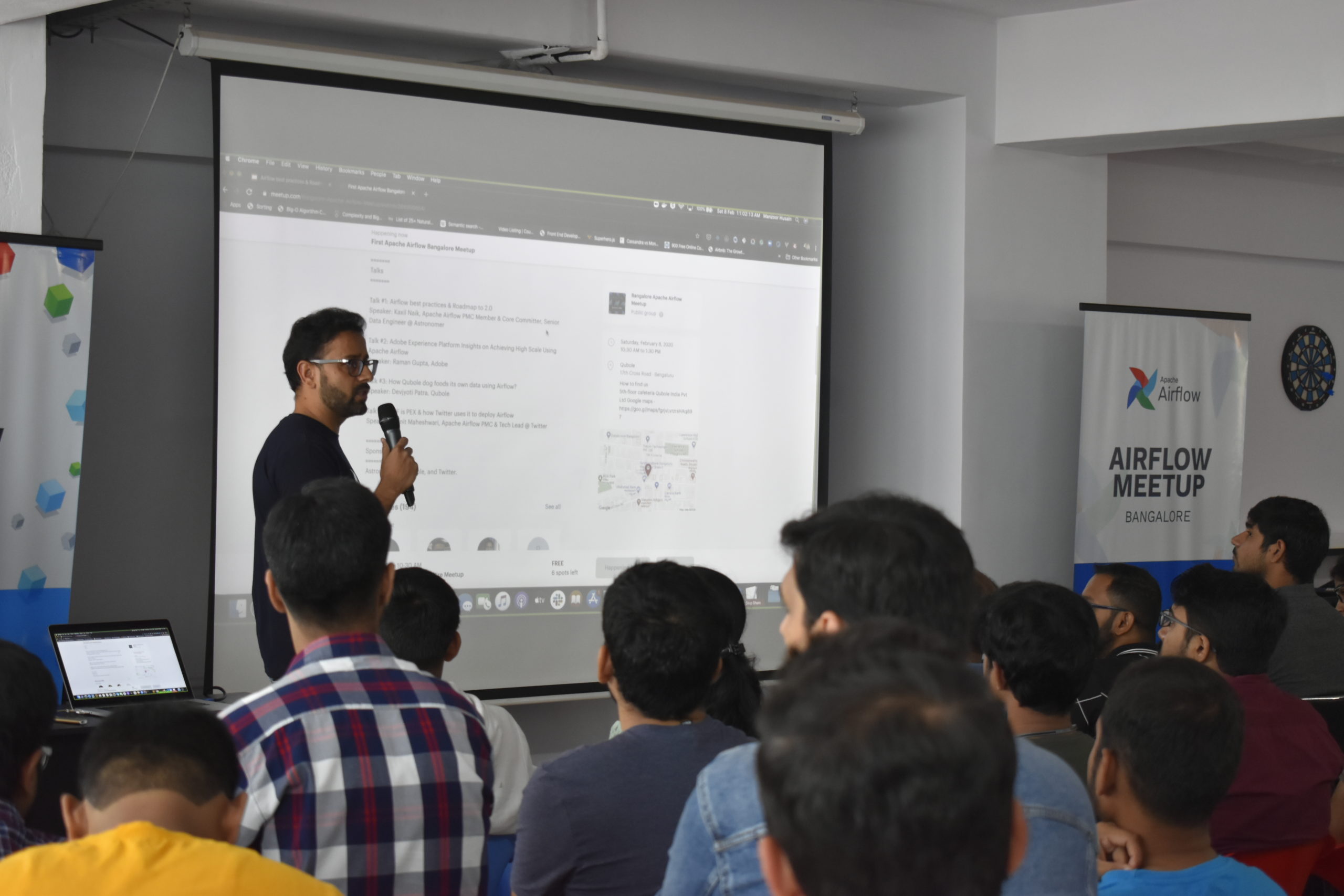

Apache Airflow is one of the most popular workflow management systems around. Organisations use it as a platform for managing large workloads with ease. A recent meetup hosted by Qubole, together with co-hosts Twitter and Astronomer, was held at their Bangalore office on Feb 8th, 2020. It was the first Apache Airflow meetup, and the speakers shed light on several important topics and updates concerning the software.

From platform insights on achieving high scale with Airflow to how Qubole uses Airflow on its own data, the meetup covered a lot of ground. It drew more than 100 attendees, including a considerable audience from the Airflow community.

Thanks to benefits such as its modular architecture, extensibility and dynamic nature, several well-known organisations, including Twitter and Netflix, have adopted the software in their platforms. Qubole has been working on Airflow for a few years now. According to reports, the enterprise cloud startup Astronomer raised $5.7 million in seed funding last year to deliver enterprise-grade Apache Airflow.

There were four talks in total, centred on the vital role Apache Airflow plays in organisations when it comes to monitoring workflows. The speakers were Kaxil Naik, an Apache Airflow PMC member and core committer and a Senior Data Engineer at Astronomer; Raman Gupta, a Senior Computer Scientist at Adobe; Devjyoti Patra of Qubole; and Sumit Maheshwari, an Apache Airflow PMC member and Tech Lead at Twitter.

Talk 1: Airflow Best Practices & Roadmap to 2.0

This talk was delivered by Kaxil Naik from Astronomer. Naik covered the new features and bug fixes in Airflow 1.10.8, shared tips for writing Directed Acyclic Graphs (DAGs), and previewed the upgrades planned for Airflow 2.0.

Some of the new features discussed are listed below:

- Tags can be added to DAGs and used for filtering.

- A conf option can be supplied in the Add DAG Run view.

- Externally triggered DAGs can run for future execution dates.

- Updated documentation.

- The DAG is centred when the graph view loads.

Next, Naik shared tips and tricks for writing DAGs that reduce redundancy and make DAG files easier to maintain. Some of them are listed below.

- DAGs as context managers: A DAG can be used as a context manager so that new operators are assigned to that DAG automatically.

- Default arguments: default_args can be used to avoid repeating arguments. For instance, if a dictionary of default_args is passed to a DAG, the DAG applies them to all of its operators. This makes it easy to apply a common parameter to many operators without typing it many times.

- Use lists to set task dependencies.

- Restrict the number of Airflow variables in the DAG.

- Avoid code outside of an operator in DAG files.

- Use the Flask-AppBuilder based UI.

- Set configs using environment variables.

- Instead of using initdb, apply migrations using “airflow upgradedb”.
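
The first three tips above (context managers, default_args and list-based dependencies) can be illustrated without an Airflow installation. The toy model below is only a sketch of the mechanism: `ToyDag` and `ToyTask` are hypothetical stand-ins invented for this example, not Airflow classes, and the owner and retry values are made up. In Airflow itself the corresponding pieces are the `DAG` class, its `default_args` argument and the `>>` operator.

```python
class ToyDag:
    """Toy stand-in for a DAG (hypothetical, not Airflow's implementation)."""

    _active = None  # the DAG currently open in a `with` block, if any

    def __init__(self, dag_id, default_args=None):
        self.dag_id = dag_id
        self.default_args = dict(default_args or {})
        self.tasks = {}

    def __enter__(self):
        ToyDag._active = self
        return self

    def __exit__(self, exc_type, exc, tb):
        ToyDag._active = None
        return False


class ToyTask:
    """Toy stand-in for an operator (hypothetical)."""

    def __init__(self, task_id, dag=None, **kwargs):
        # Context-manager tip: pick up the enclosing DAG automatically.
        dag = dag or ToyDag._active
        if dag is None:
            raise ValueError("create the task inside a DAG or pass dag=")
        self.task_id = task_id
        # default_args tip: DAG-level defaults apply to every task,
        # and explicitly passed keyword arguments override them.
        self.args = {**dag.default_args, **kwargs}
        self.downstream = []
        dag.tasks[task_id] = self

    def __rshift__(self, other):
        # List tip: `a >> [b, c]` marks both b and c as downstream of a.
        targets = other if isinstance(other, list) else [other]
        for target in targets:
            self.downstream.append(target.task_id)
        return other


with ToyDag("example", default_args={"owner": "data-team", "retries": 2}) as dag:
    extract = ToyTask("extract")
    load_a = ToyTask("load_a", retries=5)  # overrides the default retries
    load_b = ToyTask("load_b")
    extract >> [load_a, load_b]
```

Here `load_a` overrides `retries` while still inheriting `owner`, which mirrors how explicitly passed operator arguments take precedence over `default_args` in Airflow.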

Lastly, Naik discussed the roadmap to Airflow 2.0. Some of the planned enhancements are listed below.

- DAG Serialisation

- Revamped real-time UI.

- Production-grade modern API.

- Official Docker Image and Helm Chart.

- Scheduler Improvements

- Data Lineage.

Talk 2: Adobe Experience Platform Insights on Achieving High Scale Using Apache Airflow

This talk was delivered by Raman Gupta, a Senior Computer Scientist at Adobe. Gupta discussed how the Platform Orchestration Service at Adobe uses Airflow as its workflow management system. He also covered the APIs the service exposes to its users for managing workflows, the challenges the team faced and the steps taken to overcome them.

Some of the key challenges faced are mentioned below:

- To run 1000 tasks concurrently.

- To keep scheduling latency within some predefined threshold.

- To improve service usage or debugging experience.

Gupta then talked about some of the steps being taken to achieve high scale while keeping latency in check, as listed below:

- Support for multiple Airflow clusters behind the Orchestration service, which also limits the number of workflows per cluster.

- Scalability measures such as multi-cluster support in the Orchestration service, an Airflow setup on Kubernetes to scale worker tasks horizontally, and support for reschedule mode to achieve high concurrency.
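
Why reschedule mode helps concurrency can be shown with simple arithmetic. In Airflow, a sensor in the default `poke` mode holds its worker slot while it sleeps between checks, whereas a sensor in `reschedule` mode releases the slot between checks. The function below is a simplified model with made-up numbers, not a measurement from Adobe's setup; `slot_seconds` and its parameters are hypothetical names chosen for this sketch.

```python
def slot_seconds(checks, check_seconds, poke_interval, mode):
    """Worker-slot time one sensor occupies until its condition is met.

    checks        -- number of checks performed, including the successful one
    check_seconds -- time a single check takes
    poke_interval -- waiting time between two consecutive checks
    mode          -- "poke" or "reschedule"
    """
    busy = checks * check_seconds
    if mode == "poke":
        # The slot stays occupied while the sensor sleeps between checks.
        return busy + (checks - 1) * poke_interval
    if mode == "reschedule":
        # The slot is released between checks, so only the checks count.
        return busy
    raise ValueError(f"unknown mode: {mode!r}")


# Assumed example: 13 checks of 2 seconds each, 5 minutes apart.
poke_cost = slot_seconds(13, 2, 300, "poke")
reschedule_cost = slot_seconds(13, 2, 300, "reschedule")
```

Under these assumptions the sensor occupies a slot for 3626 seconds in poke mode but only 26 seconds in reschedule mode, so the same worker pool can serve far more long-waiting tasks.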

Talk 3: How Qubole Dog Food Its Own Data Using Airflow?

The speaker of this talk was Devjyoti Patra from Qubole. Patra gave an overview of the Qubole unified data platform and showed how to create an Airflow cluster from the console. He then discussed ETL workflow orchestration and some of the use cases at Qubole where Airflow is used. For instance, Qubole runs the BiFrost pipeline for sharing data with clients, multiple pipelines for billing, insights, alerts and reports, and jobs that validate the output data from upstream pipelines, among others.

Talk 4: WTF is PEX & How Twitter Uses it to Deploy Airflow

This was the last talk of the event, delivered by Sumit Maheshwari, an Apache Airflow PMC member and Tech Lead at Twitter. Sumit explained what PEX (Python executable) files are and how they resemble .exe files. He also discussed Python ANTS, or PANTS, an Ant-based Python build tool, and how to use it to build an airflow.pex file. Lastly, he discussed a few points on how to deploy Airflow on Kubernetes. Some of these points are as follows:

- Bundle the airflow.pex file into a Docker image built on top of a Python-based image.

- Supply Airflow configs via a Kubernetes ConfigMap.

- Write task logs to, and read them from, GCS.
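
The idea behind a PEX file, a single archive that the Python interpreter can run directly, can be tried out with the standard library alone. The sketch below uses `zipapp` rather than the PEX tool itself, so it shows only the underlying concept of an executable archive with a `__main__.py` entry point; PEX additionally bundles third-party dependencies. The helper name `build_and_run` and the file names are assumptions made for this example.

```python
import subprocess
import sys
import tempfile
import zipapp
from pathlib import Path


def build_and_run(message: str) -> str:
    """Package a tiny app into one executable archive, run it, return its output."""
    with tempfile.TemporaryDirectory() as tmp:
        src = Path(tmp) / "app"
        src.mkdir()
        # __main__.py is the entry point the interpreter runs from the archive.
        (src / "__main__.py").write_text(f"print({message!r})\n")

        target = Path(tmp) / "app.pyz"
        zipapp.create_archive(src, target)

        # The interpreter executes the archive as if it were a script.
        result = subprocess.run(
            [sys.executable, str(target)],
            capture_output=True,
            text=True,
            check=True,
        )
        return result.stdout.strip()


output = build_and_run("hello from a single-file app")
```

Shipping one self-contained file is what makes the first deployment point above simple: the Docker image only needs a Python interpreter plus the archive.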

Outlook

By the end of the meetup, the audience had learnt a lot about Apache Airflow, along with a few tips and tricks for using the software with ease. Some of the key takeaways are listed below.

- Airflow 2.0 will include the official Docker Image and Helm Chart.

- How Adobe is using Airflow for workflow management.

- Understanding Qubole’s unified data platform.

- The use of PEX and DAGs at Twitter.

- How to deploy Airflow on Kubernetes.