Many of the models and techniques that have drawn attention recently, such as BERT, GPT-3, Transformers, LSTMs and GANs, have deep learning at their core. Deep learning-based applications are transforming areas such as self-driving vehicles, language translation and fraud detection. Researchers in the field have contributed immensely to making these applications possible. In this article, we list ten deep learning researchers, in no particular order, who are redefining the application areas of deep learning.

Geoffrey Hinton

A pioneer of deep learning and machine learning research, Hinton aims to find complex structure in large, high-dimensional datasets and to understand how the brain learns to see. Along with David Rumelhart and Ronald J. Williams, he popularised the back-propagation algorithm for training multi-layer neural networks. He also contributed to Boltzmann machines, variational learning, time-delay neural nets, and more. He is often called the “Godfather of Deep Learning”, and many leading deep learning researchers of recent times have trained under his guidance. He received the 2018 Turing Award alongside Yoshua Bengio and Yann LeCun for their work on deep learning. He currently works at Google Brain and teaches at the University of Toronto.

Ian Goodfellow

Currently the director of machine learning in the Special Projects Group at Apple, Goodfellow invented generative adversarial networks (GANs), one of the most exciting developments in AI in recent times. GANs have sparked both huge excitement and controversy in the machine learning community. Before Apple, he worked as a research scientist at Google Brain. His other contributions to deep learning include demonstrating security vulnerabilities of machine learning systems. He is also a co-author of the textbook Deep Learning.

Ruslan Salakhutdinov

Director of AI Research at Apple, Salakhutdinov is also a computer science professor in the Machine Learning Department of the School of Computer Science at Carnegie Mellon University. With research interests in statistical machine learning, he has led several research projects in deep learning, probabilistic graphical models and large-scale optimisation. His work on Restricted Boltzmann Machines (RBMs), carried out with other researchers, remains well known today. An RBM is essentially a generative stochastic artificial neural network that can learn a probability distribution over its set of inputs. RBMs can be trained in either a supervised or an unsupervised manner and are used in areas such as dimensionality reduction, classification, feature learning and more.
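To make the idea of an RBM learning a distribution over its inputs more concrete, here is a minimal sketch in plain Python. It assumes binary visible and hidden units and a single step of contrastive divergence (CD-1) as the training rule. The class name `TinyRBM`, its methods, the learning rate and the toy patterns are all hypothetical choices made for illustration, not taken from any particular paper or library.

```python
import math
import random


def sigmoid(x):
    """Numerically stable logistic function."""
    if x >= 0:
        return 1.0 / (1.0 + math.exp(-x))
    e = math.exp(x)
    return e / (1.0 + e)


class TinyRBM:
    """Hypothetical minimal binary RBM, for illustration only."""

    def __init__(self, n_visible, n_hidden, seed=0):
        self.rng = random.Random(seed)
        # W[i][j] connects visible unit i to hidden unit j.
        self.W = [[self.rng.gauss(0.0, 0.1) for _ in range(n_hidden)]
                  for _ in range(n_visible)]
        self.b = [0.0] * n_visible  # visible biases
        self.c = [0.0] * n_hidden   # hidden biases

    def hidden_probs(self, v):
        """P(h_j = 1 | v) for every hidden unit."""
        return [sigmoid(self.c[j] + sum(v[i] * self.W[i][j] for i in range(len(v))))
                for j in range(len(self.c))]

    def visible_probs(self, h):
        """P(v_i = 1 | h) for every visible unit."""
        return [sigmoid(self.b[i] + sum(h[j] * self.W[i][j] for j in range(len(h))))
                for i in range(len(self.b))]

    def sample(self, probs):
        """Draw independent binary units from their probabilities."""
        return [1 if self.rng.random() < p else 0 for p in probs]

    def cd1_update(self, v0, lr=0.1):
        """One CD-1 step: data statistics minus one-step reconstruction statistics."""
        ph0 = self.hidden_probs(v0)
        h0 = self.sample(ph0)
        v1 = self.sample(self.visible_probs(h0))
        ph1 = self.hidden_probs(v1)
        for i in range(len(v0)):
            for j in range(len(ph0)):
                self.W[i][j] += lr * (v0[i] * ph0[j] - v1[i] * ph1[j])
            self.b[i] += lr * (v0[i] - v1[i])
        for j in range(len(ph0)):
            self.c[j] += lr * (ph0[j] - ph1[j])


# Toy usage: two made-up binary patterns, trained without labels.
patterns = [[1, 1, 0, 0], [0, 0, 1, 1]]
rbm = TinyRBM(n_visible=4, n_hidden=2)
for _ in range(200):
    for pattern in patterns:
        rbm.cd1_update(pattern)
```

After training, `rbm.hidden_probs(pattern)` gives a two-number feature vector for a pattern, which is the sense in which RBMs are used for feature learning and dimensionality reduction. Practical implementations typically use vectorised array libraries and mini-batches rather than nested Python loops.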

Yann LeCun

LeCun has been working on deep learning methods since the mid-1980s, notably the convolutional network model, which he invented during his early work in the field. It is now used for image, video and speech recognition across all major companies. He has published over 190 papers on it, along with extensive research on handwriting recognition, image compression, and more. Currently the chief AI scientist at Facebook, he is also the founding director of the NYU Center for Data Science, where his research has included unsupervised learning. He is the co-creator of the DjVu image compression technology and co-developed the Lush programming language. He is a co-recipient of the 2018 Turing Award.

Yoshua Bengio

Known for his work on artificial neural networks and deep learning, Bengio is a co-recipient of the 2018 Turing Award. He is a professor at the Université de Montréal and scientific director of the Montreal Institute for Learning Algorithms (MILA), and he helped popularise deep learning in the 1990s and 2000s. His contributions helped make deep neural networks a critical component of computing. He also advocates for attention to the social impacts of emerging technologies and actively contributed to the Montreal Declaration for Responsible Development of Artificial Intelligence.

Jürgen Schmidhuber

Sometimes called the father of modern deep learning, Schmidhuber, together with his students Sepp Hochreiter, Fred Cummins, Alex Graves and others, published the first papers on long short-term memory (LSTM), a sophisticated type of recurrent neural network. LSTM is now used in speech recognition products such as Google’s software for smartphones and its smart assistant Allo, Apple’s Siri, Amazon’s Alexa and more. Schmidhuber is a co-director of the Dalle Molle Institute for Artificial Intelligence Research in Manno, Switzerland.

Sepp Hochreiter

The co-creator of LSTM, Hochreiter has led the Institute for Machine Learning at the Johannes Kepler University of Linz since 2018. He developed LSTM, and the first results were reported in his diploma thesis in 1991. It has since come to be considered a significant milestone in machine learning and deep learning, and is used in areas such as language recognition, machine translation, robotics, drug design and more. He has also contributed to reinforcement learning via actor-critic approaches and has developed new activation functions for neural networks, such as exponential linear units (ELUs) and scaled ELUs (SELUs).
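For readers unfamiliar with the two activation functions mentioned above, the following is a small sketch of their usual scalar definitions. The function names `elu` and `selu` are chosen here for illustration; the two SELU constants are the values commonly quoted for self-normalising networks, and `alpha = 1.0` is assumed as a typical ELU default.

```python
import math

# Constants commonly quoted for SELU in self-normalising networks.
SELU_ALPHA = 1.6732632423543772
SELU_SCALE = 1.0507009873554805


def elu(x: float, alpha: float = 1.0) -> float:
    """Exponential linear unit: x for x > 0, alpha * (exp(x) - 1) otherwise."""
    return x if x > 0 else alpha * math.expm1(x)


def selu(x: float) -> float:
    """Scaled ELU: a fixed scale applied to ELU with a fixed alpha."""
    return SELU_SCALE * elu(x, SELU_ALPHA)
```

Unlike ReLU, both functions return small negative values for negative inputs instead of zero, and they saturate at `-alpha` (ELU) or `-SELU_SCALE * SELU_ALPHA` (SELU) as the input becomes very negative.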

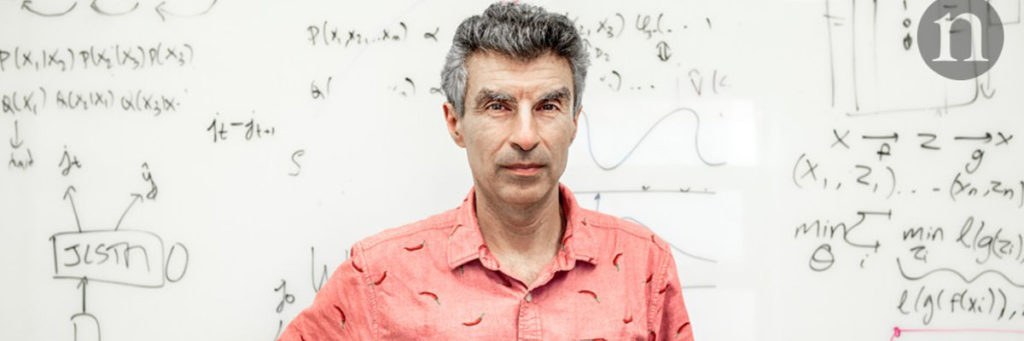

Michael Jordan

Jordan is known for his work on recurrent neural networks (RNNs), which he began developing as a cognitive model in the 1980s. He is largely responsible for popularising Bayesian networks in the machine learning community, which began to explore them as a link between machine learning and statistics. He has worked extensively on Bayesian nonparametric analysis, probabilistic graphical models, spectral methods, kernel machines and applications to problems in distributed computing. He also popularised the expectation-maximisation algorithm in machine learning. He is a distinguished professor in the Department of Electrical Engineering and Computer Science and the Department of Statistics at the University of California, Berkeley.

Ilya Sutskever

Co-founder and chief scientist of OpenAI, Sutskever has made significant contributions to deep learning. A student of Geoffrey Hinton, he has helped drive major advances in both computer vision and natural language processing. He was a postdoc at Stanford with Andrew Ng’s group. He also co-founded DNNresearch, which was acquired by Google in 2013, after which he worked as a research scientist at Google Brain. Sutskever is a co-author of the AlphaGo paper and a contributor to TensorFlow, both landmarks in the field of deep learning. Together with Alex Krizhevsky and Geoffrey Hinton, he also co-invented AlexNet, a convolutional neural network.

Andrej Karpathy

Currently the senior director of AI at Tesla, Karpathy leads the team responsible for all neural networks on Autopilot. As a research scientist at OpenAI, he worked on deep learning, computer vision, generative modelling and reinforcement learning. He has worked with Fei-Fei Li on CNN and RNN architectures and their applications in computer vision and NLP. He has also developed several deep learning libraries in JavaScript, such as ConvNetJS, RecurrentJS, REINFORCEjs and t-sneJS.