|

Listen to this story

|

Ever wondered how Google Lens scans and reads wifi password at your favourite Starbucks? The answer lies in real time scene text detection.

In a recent paper, Real Time Scene-Text Detection with Differentiable Binarization, researchers have proposed an adaptive segmentation technique for various use cases of scene text detection. Before proceeding further, let’s understand Image segmentation and Image Binarization.

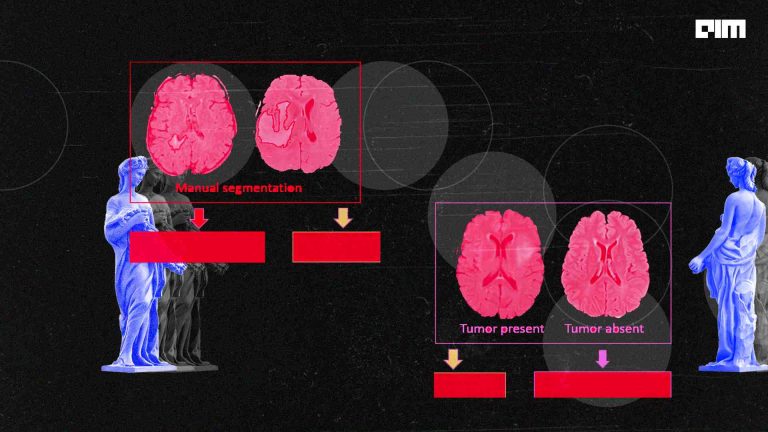

Image segmentation is a technique of grouping similar segments of an image together based on similarity in pixel values (Semantic Segmentation) or based on similarity in Instances (Instance Segmentation) of the same object in an image.

Fig 1: Example of Image Segmentation in autonomous driving systems

Fig 2: How Image Segmentation fits in a Machine Learning Architecture

Image Binarization is a technique to convert any image to a binary scaled image.

Fig 3: Example of Image Binarization

In most text detection systems, segmentation is followed by Binarization and pixel grouping techniques to detect texts in real scenarios. The proposed architecture in this paper uses binarization in the segmentation network using a Differentiable Binarization Function so that the binarized image can be trained end to end in a CNN.

Fig 4 : Model Architecture

Three key concepts in the model architecture are:

Differentiable Binarization: Any standard binarization function like one mentioned below (P is the probability map and t is the set threshold) would be non – differentiable and hence it cannot be optimised along with segmentation.

Hence, to make binarization optimizable, a function like mentioned below (P and T are the probability map and threshold map learned from the network) would be differentiable:-

Adaptive Thresholding: Adaptive Thresholding is optimized thresholding for effective binarization within the segmentation network. Refer Adaptive Threshold under section Methodology in the paper.

Deformable Convolution: This step helps in the convoluting over spatial images or images with larger aspect ratios. Refer here for more on Deformable Convolution Network.

To get an understanding of how the deformable convolution has been performed in the paper take a look here.

Fig 5:- Source code snippet on implementation of the Deformable Convolution layer

How does the model work?

- The input image is firstly fed into a feature-pyramid backbone (for e.g using RESNET). To know more about feature-pyramid backbone refer here.

- Then, the obtained pyramid features are up-sampled (using image augmentation )to the same scale of the input image and cascaded to produce a feature F, which will be used to predict probability and threshold.

- Obtained feature F from the previous step is used to predict both the probability map (P) and the threshold map (T). Threshold map generates threshold for effective binarization in the following steps.

- After that, the approximate binary map (Bˆ) is calculated by P and F as enlisted in formula (2) of the paper.

- Supervision is then applied during model training on probability map, threshold map and approximate binary map with probability and binary map sharing the same supervision.

- In the inference period, the bounding boxes can be obtained easily from the approximate binary map or the probability map by a box formulation module using the Vatti Clipping Algorithm as enlisted in formula (10) of the paper.

Fig 6:- Some visualisation results on text instances of various shapes, including curved text, multi-oriented text, vertical text, and long text lines. For each unit, the top right is the threshold map; the bottom right is the probability map.

As per review metrics, DB has worked well with RESNET-50 and RESNET-18 as backbones for real time inference and accurate text detections. However, the method can’t handle cases of “text inside text” or if an overlapping text region is in the centre region of another text instance.

Refer the section Implementation Details to know how the model was trained , sections Limitation and Conclusion for more details on the model performance.

For accessing the entire research paper with highlighted sections and added notes, click here.