Reinforcement learning and games have had a long, mutually-beneficial relationship. For decades, games have been considered as one of the important testbeds for reinforcement learning models. All this started with Samuel’s Checkers Player, one of the first world’s first successful self-learning programs. Since then, there are a number of researches on reinforcement learning which have been tested using games like poker, StarCraft, backgammon, checkers, Go, among others.

Games play a crucial role in testing reinforcement learning algorithms. In one of our articles, we discussed the reasons for how chess has become the testbed for machine learning researchers.

Recently, researchers from Facebook AI took the game of Hanabi as a new challenge domain with problems which is a combination of purely cooperative gameplay with two to five players and imperfect information. Hanabi is a cooperative card game in which players are aware of other players’ cards but not their own. To succeed, the players must coordinate to efficiently reveal information to their teammates, however, players can only communicate through hint actions that point out all of a player’s cards of a chosen rank or colour.

One main motive behind all the research is to create intelligent machines that will mimic human abilities and behave more human-like. This is why the researchers this time tried to solve the challenges using “Theory of Mind”. Theory of mind is the process of reasoning about others as agents with their own mental states such as perspectives, beliefs, and intentions to explain and predict their behaviour. In simple words, it is the human ability to imagine the world from another person’s point of view.

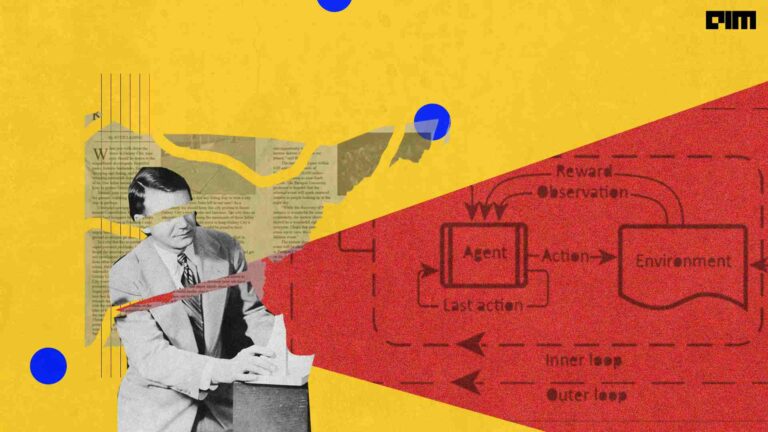

The researchers at Facebook AI Research (FAIR) proposed two different search techniques which can be applied to improve an arbitrary agreed-upon policy in a cooperative partially observable game.

The first one is the single-agent search which effectively converts the problem into a single agent setting by making all but one of the agents play according to the agreed-upon policy. The second one is the multi-agent search where all the agents carry out the same common-knowledge search procedure whenever doing so is computationally feasible, and fall back to playing according to the agreed-upon policy otherwise.

In the bench-marking challenge problem of Hanabi, the search technique showed an improved performance of every agent that has been tested and when applied to a policy trained using reinforcement learning, the AI system achieved a new state-of-the-art score of 24.61 / 25 in the game as compared to a previous best of 24.08 / 25.

Open Source Hanabi Environment By Google Brain

Previously, the researchers at Google Brain AI released an open-source Hanabi reinforcement learning environment called the Hanabi Learning Environment. The environment is written in Python and C++ and it includes an environment state class which can generate observations and rewards for an agent and can be advanced by one step given agent actions.

Why Hanabi

According to the researchers, Hanabi presents interesting multi-agent learning challenges for both, learning a good self-play policy and adapting to an ad-hoc team of players. The combination of cooperative gameplay and imperfect information make Hanabi a compelling research challenge for machine learning techniques in multi-agent settings. The practical advantage of the Hanabi benchmark is that the environment is extremely lightweight, both in terms of memory and compute requirements as well as fast. This environment can be easily used as a testbed for RL methods that require a large number of samples without causing excessive compute requirements.

Also, Hanabi is a different game from the adversarial two-player zero-sum games such as go, chess, checkers, among others. This game is different than the others for these two primary reasons:

- Unlike games like chess and go, Hanabi is neither two-player nor zero-sum, the value of an agent’s policy depends critically on the policies used by its teammates.

- Hanabi is a game with imperfect information which makes it a more challenging dimension of complexity for AI algorithms.

Wrapping Up

Games have been important testbeds for studying how good machines can do sophisticated decision making. One of the main reasons to choose games for reinforcement learning is that games are an interesting way to understand human intelligence. They are the challenging domain for reinforcement learning when it comes to solving using decision-making.

Last month, the master player of the Chinese strategy game Go, Lee Se-dol decided to retire as the player thinks AI cannot be defeated. In the present scenario, machines have been gaining superhuman powers and with the continuous research of machines using games will definitely guarantee the win over humans.