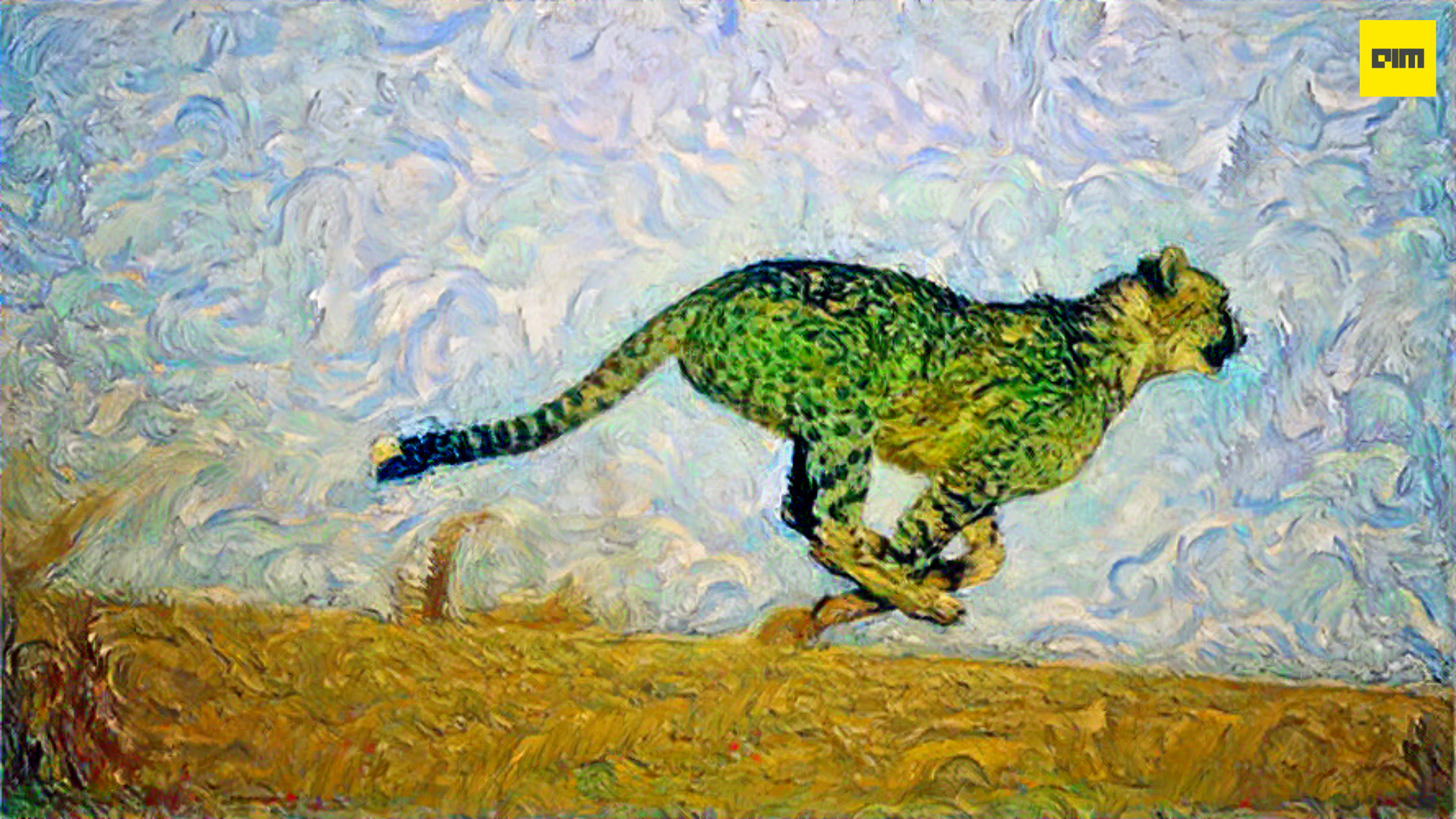

Since the 20th century, researchers have developed many algorithms for generating appealing artwork, including stroke-based rendering, region-based techniques, example-based rendering, and many more. The underlying idea has stayed the same: take two images, an arbitrary content image and a style image, and combine them into an artistic output.

In 2015, Leon A. Gatys, Alexander S. Ecker, and Matthias Bethge published “A Neural Algorithm of Artistic Style”. Since then, more than 240 implementations of the paper have appeared in frameworks such as Torch, MXNet, TensorFlow, and PyTorch. Neural Style Transfer (NST) is a technique that uses deep neural networks to render the content of one image in the visual style of another. NST typically relies on a Convolutional Neural Network (CNN), such as VGGNet or AlexNet, to render an input image in different artistic styles.

Taxonomy of Neural Style Transfer(NST)

Source: Neural Style Transfer: A Review

In this article, we will learn about Pystiche, a framework for NST built on and fully integrated with PyTorch. It addresses the problem of quickly testing and deploying an NST algorithm to create new stylized images. Pystiche was created by Philip Meier and Volker Lohweg and has recently been published in the Journal of Open Source Software.

NST can be summarized by the image below, which uses two symbols and three images: a content image and a style image are combined into an output image.

Source: https://pystiche.readthedocs.io/en/latest/gist/index.html

Installation:

pip install pystiche

You can also install it directly from GitHub:

pip install git+https://github.com/pmeier/pystiche@master

#setup

import pystiche

from pystiche.image import read_image

from pystiche import demo, enc, loss, ops, optim

from pystiche.image import show_image, write_image  # write_image is needed to save the result later

from pystiche.misc import get_device, get_input_image

print(f"I'm working with pystiche=={pystiche.__version__}")

device = get_device()

print(f"I'm working with {device}")

Model

We are going to load VGG19 and feed image tensors to the model to extract feature maps of the content, style, and output images.

Compared with architectures such as ResNet or Inception, VGG19 tends to produce better-looking results for neural style transfer.

#multi-layer encoder

multi_layer_encoder = enc.vgg19_multi_layer_encoder()

print(multi_layer_encoder)

Pystiche provides a MultiLayerEncoder, which makes it possible to obtain all the necessary encodings in a single forward pass.
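To illustrate the idea of collecting several encodings in one pass, here is a minimal sketch in plain Python. It is not how Pystiche implements MultiLayerEncoder; the helper `encode_layers` and the toy layers are hypothetical names invented for this example. The point is only that the input runs through the layers once, and the outputs of the requested layers are stored along the way.

```python
from typing import Callable, Dict, Iterable, List, Tuple

# A toy "layer" is a name plus a function on a list of floats (hypothetical type).
Layer = Tuple[str, Callable[[List[float]], List[float]]]


def encode_layers(
    layers: Iterable[Layer], x: List[float], wanted: Iterable[str]
) -> Dict[str, List[float]]:
    """Run x through the layers once and keep the outputs of the wanted layers."""
    wanted_names = set(wanted)
    encodings: Dict[str, List[float]] = {}
    for name, fn in layers:
        x = fn(x)  # each layer is evaluated exactly once
        if name in wanted_names:
            encodings[name] = x
    return encodings


# Toy stand-in for a network; a real encoder would use convolutional layers.
toy_layers = [
    ("scale", lambda v: [2.0 * a for a in v]),
    ("relu", lambda v: [max(0.0, a) for a in v]),
    ("shift", lambda v: [a + 1.0 for a in v]),
]

encodings = encode_layers(toy_layers, [-1.0, 0.5, 2.0], wanted=["relu", "shift"])
print(encodings)
```

Both the "relu" and "shift" encodings come out of the same pass, which is what keeps a loss with several layers cheap to evaluate.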

Content Loss

Content loss measures how well the content of the content image is preserved in the generated image. It is the distance between the encodings of the content image and those of the output image. In this example, content_layer names the layer whose encoding is used, content_encoder extracts that encoding from the multi-layer encoder, and content_weight scales the resulting content_loss.

#content loss

content_layer = "relu4_2"

content_encoder = multi_layer_encoder.extract_encoder(content_layer)

content_weight = 1e0

content_loss = ops.FeatureReconstructionOperator(
    content_encoder, score_weight=content_weight
)

print(content_loss)
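The "distance" behind a content loss can be made concrete with a small sketch. The example below assumes mean squared error between two encodings, which is a common choice for this kind of loss; the helper `mse` and the toy encodings are made up for illustration and are not taken from Pystiche.

```python
from typing import Sequence


def mse(a: Sequence[float], b: Sequence[float]) -> float:
    """Mean squared error between two equally long encodings."""
    if len(a) != len(b):
        raise ValueError("encodings must have the same length")
    return sum((x - y) ** 2 for x, y in zip(a, b)) / len(a)


# Toy encodings; in practice these would be feature maps from a layer such as relu4_2.
content_encoding = [0.0, 1.0, 2.0, 3.0]
output_encoding = [0.0, 1.0, 2.0, 5.0]

content_weight = 1e0  # same role as content_weight in the article
content_score = content_weight * mse(output_encoding, content_encoding)
print(content_score)
```

The score is zero when the output image produces exactly the same encoding as the content image and grows as the two drift apart.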

Style Loss

Style loss measures the distance in style between the output image and the style image.

#style loss

style_layers = ("relu1_1", "relu2_1", "relu3_1", "relu4_1", "relu5_1")

style_weight = 1e3

def get_style_op(encoder, layer_weight):
    return ops.GramOperator(encoder, score_weight=layer_weight)

style_loss = ops.MultiLayerEncodingOperator(
    multi_layer_encoder, style_layers, get_style_op, score_weight=style_weight,
)

print(style_loss)
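The name GramOperator refers to the Gram matrix, which captures how strongly feature channels co-occur regardless of where they appear in the image. The following plain-Python sketch shows the idea under simplifying assumptions: features are given as one row per channel and one column per spatial position, the Gram matrix is normalized by the number of positions, and the loss is the mean squared error between two Gram matrices. The helpers `gram_matrix` and `gram_loss` are illustrative names, not Pystiche functions.

```python
from typing import List

Matrix = List[List[float]]


def gram_matrix(features: Matrix) -> Matrix:
    """Channel-by-channel inner products, normalized by the number of positions."""
    num_positions = len(features[0])
    return [
        [sum(a * b for a, b in zip(row_i, row_j)) / num_positions for row_j in features]
        for row_i in features
    ]


def gram_loss(output_features: Matrix, style_features: Matrix) -> float:
    """Mean squared error between the Gram matrices of two feature maps."""
    g_out = gram_matrix(output_features)
    g_style = gram_matrix(style_features)
    diffs = [
        (a - b) ** 2
        for row_out, row_style in zip(g_out, g_style)
        for a, b in zip(row_out, row_style)
    ]
    return sum(diffs) / len(diffs)


style_features = [[1.0, 2.0], [3.0, 4.0]]  # 2 channels, 2 positions
shuffled = [[2.0, 1.0], [4.0, 3.0]]        # same channels, positions swapped

print(gram_matrix(style_features))
print(gram_loss(shuffled, style_features))
```

Swapping the spatial positions leaves the Gram matrix unchanged, so the loss between the two toy feature maps is zero. This is why a Gram-based loss describes texture and style rather than the layout of the image.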

Perceptual Loss

Perceptual loss compares two images based on high-level features extracted by a pretrained network rather than on raw pixel differences. Here, it captures the high-level differences between the output image and the content and style images. We combine the content_loss and style_loss into a joint PerceptualLoss, which will serve as the optimization criterion.

#computing perceptual loss

criterion = loss.PerceptualLoss(content_loss, style_loss).to(device)

print(criterion)
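As a rough mental model, the combined criterion can be thought of as a weighted sum of the content score and the per-layer style scores. The sketch below is a simplification with an invented helper, `perceptual_score`, and made-up score values; it assumes all style layers are weighted equally and does not reproduce how Pystiche aggregates layers internally.

```python
from typing import Sequence


def perceptual_score(
    content_score: float,
    style_scores: Sequence[float],
    content_weight: float = 1e0,
    style_weight: float = 1e3,
) -> float:
    """Weighted sum of one content score and several per-layer style scores."""
    return content_weight * content_score + style_weight * sum(style_scores)


# Made-up scores for illustration only.
total = perceptual_score(content_score=0.5, style_scores=[0.25, 0.125])
print(total)
```

With the weights used in this article (1e0 for content, 1e3 for style), the style term dominates unless the style scores are very small, which is why the ratio between the two weights is the main knob for balancing content against style.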

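#load an input image

size = 500  # assumed edge size in pixels; the original snippet did not define it, adjust as needed

content_image = read_image("cheetah.jpeg", size=size, device=device)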

show_image(content_image, title="Content image")

#load a style image

style_image = read_image("Van-Gogh-Wheat-Field.jpeg", size=size, device=device)

show_image(style_image, title="Style image")

#targets for the optimization criterion

criterion.set_content_image(content_image)

criterion.set_style_image(style_image)

#Initialise the input image for training

starting_point = "content"

input_image = get_input_image(starting_point, content_image=content_image)

show_image(input_image, title="Input image")

#start the style transfer training

output_image = optim.image_optimization(input_image, criterion, num_steps=1000)
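In this kind of style transfer, the "training" does not update network weights: the pixels of the input image are the parameters being optimized. The toy sketch below shows that idea with plain gradient descent and a hand-derived gradient. It is only an analogy. The function `optimize_image` is hypothetical, both loss terms are simple pixel-wise squared errors standing in for the real content and style losses, and Pystiche itself relies on PyTorch's optimizers and automatic differentiation.

```python
from typing import List, Sequence


def optimize_image(
    start: Sequence[float],
    content: Sequence[float],
    style: Sequence[float],
    content_weight: float = 1.0,
    style_weight: float = 3.0,
    lr: float = 0.1,
    num_steps: int = 200,
) -> List[float]:
    """Minimize content_weight * mse(img, content) + style_weight * mse(img, style)."""
    image = list(start)
    n = len(image)
    for _ in range(num_steps):
        # Gradient of the weighted sum of the two mean squared errors.
        grad = [
            2.0 * (content_weight * (p - c) + style_weight * (p - s)) / n
            for p, c, s in zip(image, content, style)
        ]
        # Update the pixels themselves; there are no model weights to train here.
        image = [p - lr * g for p, g in zip(image, grad)]
    return image


content = [0.0, 1.0]
style = [1.0, 0.0]
result = optimize_image(start=content, content=content, style=style)
print(result)
```

Starting from the content image, the pixels move toward a compromise between the two targets, and the weights decide where that compromise lands. The same trade-off applies to the real criterion, only with feature-based losses instead of pixel-wise ones.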

#write the image to current folder

write_image(output_image,'test.png', mode=None)

#show the output

show_image(output_image, title="Output image")

Conclusion

In this article, we have learned about Pystiche and walked through a code implementation. Pystiche is a handy tool for rendering images in a desired style. Please check the full code on GitHub and the documentation.