Recently, Microsoft announced limited access to its Neural Text-To-Speech (TTS) AI, which enables developers to create custom synthetic voices. So far, AT&T, Duolingo, Progressive, and Swisscom have tapped the Custom Neural Voice feature to develop unique speech solutions.

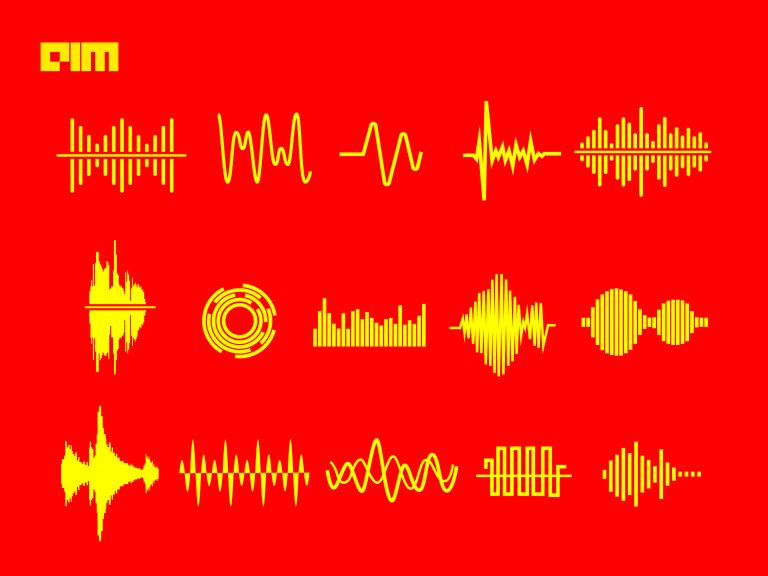

The technology consists of three main components: text analyser, neural acoustic model, and neural vocoder. The text analyser converts plain text to pronunciations, the acoustic model converts pronunciations to acoustic features and finally, the vocoder generates waveforms.
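The three-stage flow can be pictured as a chain of functions. The following is only a toy sketch: the lexicon, the function names, the 80-value frames, and the frame and sample counts are all hypothetical placeholders, not Microsoft's implementation.

```python
from typing import List

# Hypothetical toy lexicon; a real text analyser is a trained model.
TOY_LEXICON = {
    "hello": ["HH", "AH", "L", "OW"],
    "world": ["W", "ER", "L", "D"],
}


def text_analyser(text: str) -> List[str]:
    """Stage 1: plain text -> phoneme sequence (toy dictionary lookup)."""
    phonemes: List[str] = []
    for word in text.lower().split():
        phonemes.extend(TOY_LEXICON.get(word, ["<unk>"]))
    return phonemes


def acoustic_model(phonemes: List[str], frames_per_phoneme: int = 5) -> List[List[float]]:
    """Stage 2: phonemes -> acoustic feature frames (placeholder values)."""
    frames: List[List[float]] = []
    for index, _ in enumerate(phonemes):
        frames.extend([[float(index)] * 80 for _ in range(frames_per_phoneme)])
    return frames


def vocoder(frames: List[List[float]], samples_per_frame: int = 300) -> List[float]:
    """Stage 3: feature frames -> waveform samples (silence as a placeholder)."""
    return [0.0] * (len(frames) * samples_per_frame)


phonemes = text_analyser("hello world")
frames = acoustic_model(phonemes)
waveform = vocoder(frames)
print(len(phonemes), len(frames), len(waveform))
```

Each stage only depends on the output of the previous one, which is why the three components can be improved or swapped independently.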

Below, we explain the tech behind the Neural TTS system.

Text Analyser

The process starts with the Text Analyser, which has to overcome several challenges. Heteronyms are especially tricky: ‘read’, for example, is pronounced differently depending on the tense. Word segmentation is the other major challenge, since the rules differ from language to language.

Tagging words according to their part of speech (noun, verb, adjective, adverb) is another concern. Normalisation is also essential, for example expanding shorthand like ‘$300’ to ‘300 dollars’ or abbreviations like ‘Dr’ to ‘Doctor’. Morphological analysis, which classifies words in terms of number, gender, or case, is needed as well.
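To make the normalisation step concrete, here is a minimal rule-based sketch. It assumes a tiny hand-written abbreviation table and a single currency rule; the function name `normalise` is hypothetical, and a production system such as UniTA learns this behaviour rather than relying on regular expressions.

```python
import re

# Hypothetical abbreviation table, for illustration only.
ABBREVIATIONS = {"Dr": "Doctor", "Mr": "Mister"}


def normalise(text: str) -> str:
    """Expand a few written shortcuts into their spoken form."""
    # '$300' -> '300 dollars' (does not handle the singular '$1').
    text = re.sub(r"\$(\d+(?:\.\d+)?)", r"\1 dollars", text)

    # 'Dr' or 'Dr.' -> 'Doctor', matching whole words only.
    pattern = r"\b(" + "|".join(ABBREVIATIONS) + r")\b\.?"
    return re.sub(pattern, lambda match: ABBREVIATIONS[match.group(1)], text)


print(normalise("Dr Smith paid $300."))
```

Rules like these break down quickly (‘Dr’ can also mean ‘Drive’), which is one reason context-aware neural models are attractive for this task.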

Microsoft’s recent innovation, the Unified Neural Text Analyser (UniTA), simplifies the text analyser workflow while reducing pronunciation errors by over 50%.

First, UniTA converts the input text to word embedding vectors. Word embedding is an NLP technique that maps words or phrases to vectors of real numbers, moving from a space with many dimensions per word to a continuous vector space with a much lower dimension. Pre-trained models like XYZ-code are used for this step.
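As a rough illustration of what an embedding lookup does, the sketch below maps each token to a row of a small table. The vocabulary, the dimension of 4, and the random values are all assumptions made for the example; a pre-trained model would supply learned vectors instead.

```python
import random

# Hypothetical vocabulary; index 0 is reserved for unknown words.
vocab = {"<unk>": 0, "i": 1, "read": 2, "books": 3}
EMBEDDING_DIM = 4

# Random values stand in for the learned vectors of a pre-trained model.
rng = random.Random(0)
embedding_table = [
    [rng.gauss(0.0, 1.0) for _ in range(EMBEDDING_DIM)] for _ in vocab
]


def embed(text):
    """Map each whitespace-separated token to its embedding vector."""
    ids = [vocab.get(token, vocab["<unk>"]) for token in text.lower().split()]
    return [embedding_table[token_id] for token_id in ids]


vectors = embed("I read books")
print(len(vectors), len(vectors[0]))
```

Every word becomes a dense vector of the same small size, regardless of how large the vocabulary grows.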

Then comes the sequence-tagging fine-tuning stage, which covers the typical word classification tasks: segmentation of words, normalisation of shortcuts, morphology for detecting plurality or gender, and polyphone disambiguation for heteronyms. Unlike models that handle these tasks separately, UniTA adopts a multi-task approach and trains all of them together.
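The idea behind multi-task training can be sketched as one shared encoder feeding several task-specific classifiers, with the per-task losses summed into a single objective. Everything below is an assumption for illustration (the task names, label counts, layer sizes, and random weights); it computes the combined loss only and is not UniTA's architecture.

```python
import math
import random

rng = random.Random(0)
HIDDEN = 4
# Hypothetical tasks and label counts, chosen only for illustration.
TASKS = {"segmentation": 2, "part_of_speech": 4, "normalisation": 2}


def make_weights(n_out, n_in):
    return [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]


def matvec(weights, vector):
    return [sum(w * v for w, v in zip(row, vector)) for row in weights]


def softmax(logits):
    peak = max(logits)
    exps = [math.exp(value - peak) for value in logits]
    total = sum(exps)
    return [value / total for value in exps]


# One encoder shared by all tasks, plus one small output head per task.
shared_encoder = make_weights(HIDDEN, HIDDEN)
task_heads = {task: make_weights(n, HIDDEN) for task, n in TASKS.items()}


def multitask_loss(token_vectors, labels):
    """Sum the cross-entropy of every task head, averaged over tokens."""
    total = 0.0
    for position, vector in enumerate(token_vectors):
        hidden = [math.tanh(v) for v in matvec(shared_encoder, vector)]
        for task, head in task_heads.items():
            probabilities = softmax(matvec(head, hidden))
            total += -math.log(probabilities[labels[task][position]])
    return total / len(token_vectors)


tokens = [[rng.gauss(0.0, 1.0) for _ in range(HIDDEN)] for _ in range(3)]
labels = {
    "segmentation": [0, 1, 0],
    "part_of_speech": [2, 0, 3],
    "normalisation": [0, 0, 1],
}
loss = multitask_loss(tokens, labels)
print(round(loss, 3))
```

Because every head reads the same hidden representation, minimising the summed loss pushes the shared encoder to learn features that are useful for all tasks at once.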

The Text Analyser outputs a sequence of phonemes (the basic units of sound).

Neural Acoustic Model

The phoneme sequence, which defines the pronunciation of the words in the text, is then fed into the Neural Acoustic Model, also known as the Neural Speech Synthesis Model. This model characterises attributes such as speaking style, intonation, speed, and stress patterns.

To develop the Neural Acoustic Model, multi-lingual and multi-speaker TTS recordings were used to train a base model. The model was then trained to produce TTS for different languages and voices using a highly agile development model called the ‘transformer teacher model’. Microsoft trained this model on more than 3,000 hours of speech data covering more than 50 languages and more than 50 voices.

Near-human parity was achieved using a recurrent neural network (RNN) based sequence-to-sequence model. This model’s architecture is based on an auto-regressive Transformer structure, which produced speech output close to an actual human voice. This was followed by fine-tuning and synthesis training, in which two hours of target speaker data are used to adapt the multi-lingual, multi-speaker ‘transformer teacher model’.

The multi-lingual, multi-speaker model can use data from one speaker in one language to train a voice for another speaker in another language. This fine-tuning is done using FastSpeech, a TTS model.

The Neural Acoustic Model generates a new high-quality model for the speaker that sounds very similar to the original recording.

Neural Vocoder

Finally, Microsoft uses a vocoder to turn the acoustic features into a waveform. Microsoft recently upgraded to a newer vocoder, HiFiNet, which achieves higher fidelity while significantly improving synthesis speed, making it particularly beneficial for hi-fi audio and long interactions.

Microsoft’s TTS system was trained using deep learning algorithms, with human voice recordings as training data. Microsoft created a training pipeline for HiFiNet, drawing on state-of-the-art vocoder research, to build a Neural TTS vocoder. This vocoder runs fast enough to produce 24,000 samples per second and performs well on both GPUs and CPUs.

The HiFiNet pipeline consists of two main components: Generator and Discriminator.

The Generator produces audio, whereas the Discriminator identifies the gap between the generated audio and the original training audio. The aim is to close this gap as much as possible.
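The tug-of-war between the two components can be expressed as a pair of loss functions. The article does not state which losses HiFiNet uses, so the sketch below substitutes a generic least-squares GAN objective as an assumption; the function names and example scores are hypothetical.

```python
def discriminator_loss(real_scores, fake_scores):
    """Push scores for real audio towards 1 and for generated audio towards 0."""
    real_term = sum((score - 1.0) ** 2 for score in real_scores) / len(real_scores)
    fake_term = sum(score ** 2 for score in fake_scores) / len(fake_scores)
    return real_term + fake_term


def generator_loss(fake_scores):
    """Reward the generator when the discriminator scores its audio close to 1."""
    return sum((score - 1.0) ** 2 for score in fake_scores) / len(fake_scores)


# Hypothetical discriminator outputs for two real and two generated clips.
real_scores = [0.9, 0.8]
fake_scores = [0.2, 0.1]

d_loss = discriminator_loss(real_scores, fake_scores)
g_loss = generator_loss(fake_scores)
print(round(d_loss, 3), round(g_loss, 3))
```

In this example the discriminator separates real from generated audio easily, so its loss is low and the generator's loss is high. As training closes the gap, the generated scores move towards those of real audio and the generator's loss falls.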

The final product was evaluated using the Comparative Mean Opinion Score (CMOS), a measure for comparing the voice quality of two TTS systems. A CMOS gap greater than 0.1 indicates that one system is better than the other, and a gap greater than 0.2 indicates that it is significantly better.

Microsoft found that the HiFiNet vocoder achieved a CMOS gain greater than 0.2 across different languages and neural voices. It also scored -0.05 when compared with human recordings, indicating audio fidelity close to human parity.
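A CMOS value is simply the average of listeners' side-by-side preference ratings, read against the 0.1 and 0.2 thresholds described above. The sketch below assumes a rating scale where positive numbers favour system A and negative numbers favour system B; the ratings, the function names, and the symmetric treatment of negative scores are illustrative assumptions.

```python
from statistics import mean


def cmos(ratings):
    """Average the per-listener comparison ratings (positive favours system A)."""
    return mean(ratings)


def interpret(score):
    """Apply the 0.1 and 0.2 thresholds to a CMOS gap."""
    if abs(score) > 0.2:
        verdict = "significantly better"
    elif abs(score) > 0.1:
        verdict = "better"
    else:
        return "roughly on par"
    winner = "A" if score > 0 else "B"
    return f"system {winner} is {verdict}"


# Hypothetical ratings from ten listeners comparing system A with system B.
ratings = [1, 0, 1, 0, 0, 1, -1, 0, 0, 1]
score = cmos(ratings)
print(score, interpret(score))
print(interpret(-0.05))
```

Under these thresholds, a score of -0.05 against human recordings falls inside the band where listeners show no clear preference.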

Wrapping Up

The Custom Neural Voice can be used for various applications, including chatbots, voice assistants, online learning, audiobooks, public service announcements, and real-time translations. It also supports multiple languages, including English, Russian, Japanese, German, Polish, Korean, and Finnish, and can adapt to different grammar forms.