How to improve time series forecasting accuracy with cross-validation?

Time series analysis is one of the major parts of data science, and techniques like clustering, splitting and cross-validation require a different approach when applied to time-ordered data.
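
As a rough illustration of why splitting differs for time series, the sketch below builds expanding-window splits in which every training index precedes every test index, so the model is never trained on the future. The function name `expanding_window_splits` and its parameters are hypothetical and chosen only for this example; it is one common scheme, not necessarily the one used in the article.

```python
def expanding_window_splits(n_samples, n_splits, test_size):
    """Yield (train_indices, test_indices) pairs that respect time order.

    Hypothetical helper for illustration: the training window grows with
    each split, and the test window always comes right after it.
    """
    first_test_start = n_samples - n_splits * test_size
    if first_test_start <= 0:
        raise ValueError("not enough samples for the requested splits")
    for k in range(n_splits):
        test_start = first_test_start + k * test_size
        train_idx = list(range(0, test_start))
        test_idx = list(range(test_start, test_start + test_size))
        yield train_idx, test_idx


# Ten time-ordered observations, three folds of two test points each.
splits = list(expanding_window_splits(n_samples=10, n_splits=3, test_size=2))
for train_idx, test_idx in splits:
    print("train:", train_idx, "test:", test_idx)
```

Unlike shuffled k-fold splitting, no fold here uses later observations to predict earlier ones.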

To explain ARIMA more clearly, we can write it as (AR, I, MA): in ARIMA(p, d, q), p is the order of the autoregressive (AR) part, d is the degree of differencing (I), and q is the order of the moving-average (MA) part.

There are a few approaches that can be used to reduce the training time of neural networks.

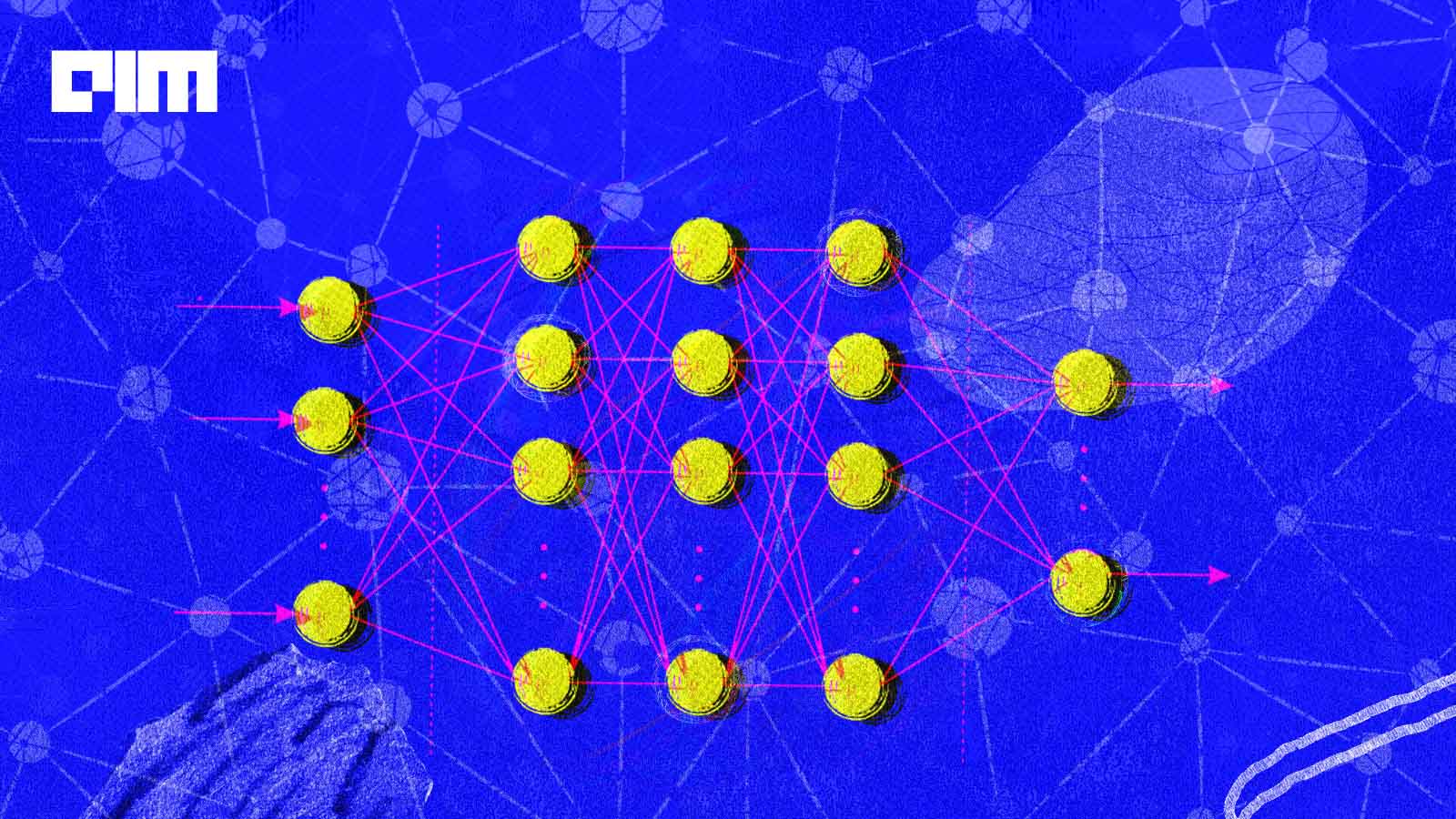

In this post, we discuss overfitting and the methods that can be used to prevent it in a neural network.

Detecting outliers in categorical data comes down to comparing the share of the data that falls into each category.

ARIMA is one of the most popular models used for time series analysis and forecasting. Despite its popularity, it has certain limitations as well.

Exponential smoothing and the moving average are two basic and important techniques used for time series forecasting.
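
A minimal sketch of the two techniques, assuming a simple moving average and simple (single) exponential smoothing initialised with the first observation. The function names and the `demand` series are made up for illustration.

```python
def moving_average(series, window):
    """Simple moving average: mean of each run of `window` consecutive values."""
    if window <= 0 or window > len(series):
        raise ValueError("window must be between 1 and len(series)")
    return [
        sum(series[i - window + 1 : i + 1]) / window
        for i in range(window - 1, len(series))
    ]


def exponential_smoothing(series, alpha):
    """Simple exponential smoothing, started from the first observation."""
    if not 0 < alpha <= 1:
        raise ValueError("alpha must be in (0, 1]")
    smoothed = [series[0]]
    for value in series[1:]:
        # New level = weighted mix of the latest value and the previous level.
        smoothed.append(alpha * value + (1 - alpha) * smoothed[-1])
    return smoothed


demand = [10, 12, 14, 16, 18]  # illustrative data, not from the article
ma = moving_average(demand, window=3)
es = exponential_smoothing(demand, alpha=0.5)
print(ma)
print(es)
```

The moving average weights the last `window` points equally, while exponential smoothing weights all past points with geometrically decaying weights controlled by `alpha`.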

Curriculum learning is a type of machine learning that trains a model in much the way humans are taught through their education system.

There can be various reasons why a neural network fails to converge. Failure to converge can leave us confused about the model's results.

t-SNE is a nonlinear dimensionality reduction technique that can be used when the data is very high-dimensional.

A graph attention network applies the attention mechanism to graph neural networks so that some of their shortcomings can be addressed.

There are many important factors that need to be considered while choosing a machine learning model.

Top2Vec is an algorithm for topic modelling which is used for discovering the topics in a collection of documents.

Everyone working in data science is hungry for better model accuracy, which can be improved using methods related to both the data and the model.

A continuous-time Markov chain is a type of stochastic process that differs from an ordinary Markov chain in that time is continuous. It comes into the picture when the time between state changes follows an exponential random variable.

PyTorchCV helps in building high-performing transfer learning models that have shown better performance than the other existing frameworks.

Steppy is a lightweight Python library that helps in building fast and reproducible machine learning models.

OCR is short for optical character recognition or optical character reader. As the full form suggests, it is about reading the text present in an image. Many images contain characters that humans can read easily; programming a machine to read them is called OCR.

One of the main advantages of Dempster-Shafer theory is that we can use it to derive a degree of belief that takes all the available evidence into account.

In this article, we are going to discuss time series clustering with its key concepts and we will also understand how it can be utilized practically.

ChefBoost is a Python package that provides functions for implementing the regular types of decision trees as well as advanced techniques. One notable thing about the package is that we can build any of these decision tree variants using just a few lines of code.

We can illustrate the significance of chaotic phenomena in neural networks with an example in which a chaotic neural network is used to measure the dynamic characteristics of an artificial neural network.

Detecto is an open-source library for computer vision programming that helps us fit state-of-the-art computer vision and object detection models to our image data. One of the great things about this package is that we can fit these models using very few lines of code.

To train a network faster, it needs to converge faster, and there are various techniques we can follow while building or training neural networks to achieve this.

Various probability theories enable us to calculate and interpret the distribution of random variables. The Poisson process and distribution are mainly used when the number of possible events is large and the probability of each one occurring is very low.
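
The "many events, low probability" condition can be checked numerically. The sketch below compares a binomial distribution with large `n` and small `p` against a Poisson distribution with rate `lam = n * p`. The values of `n` and `p` are assumptions picked for illustration.

```python
import math


def binomial_pmf(k, n, p):
    """Probability of exactly k successes in n independent trials."""
    return math.comb(n, k) * p**k * (1 - p) ** (n - k)


def poisson_pmf(k, lam):
    """Probability of exactly k events when the expected count is lam."""
    return math.exp(-lam) * lam**k / math.factorial(k)


n, p = 1000, 0.002  # many trials, each very unlikely (illustrative values)
lam = n * p         # expected number of events

for k in range(5):
    print(k, binomial_pmf(k, n, p), poisson_pmf(k, lam))
```

For these values the two probability mass functions stay close to each other for small `k`, which is why the Poisson distribution is a convenient stand-in in this regime.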

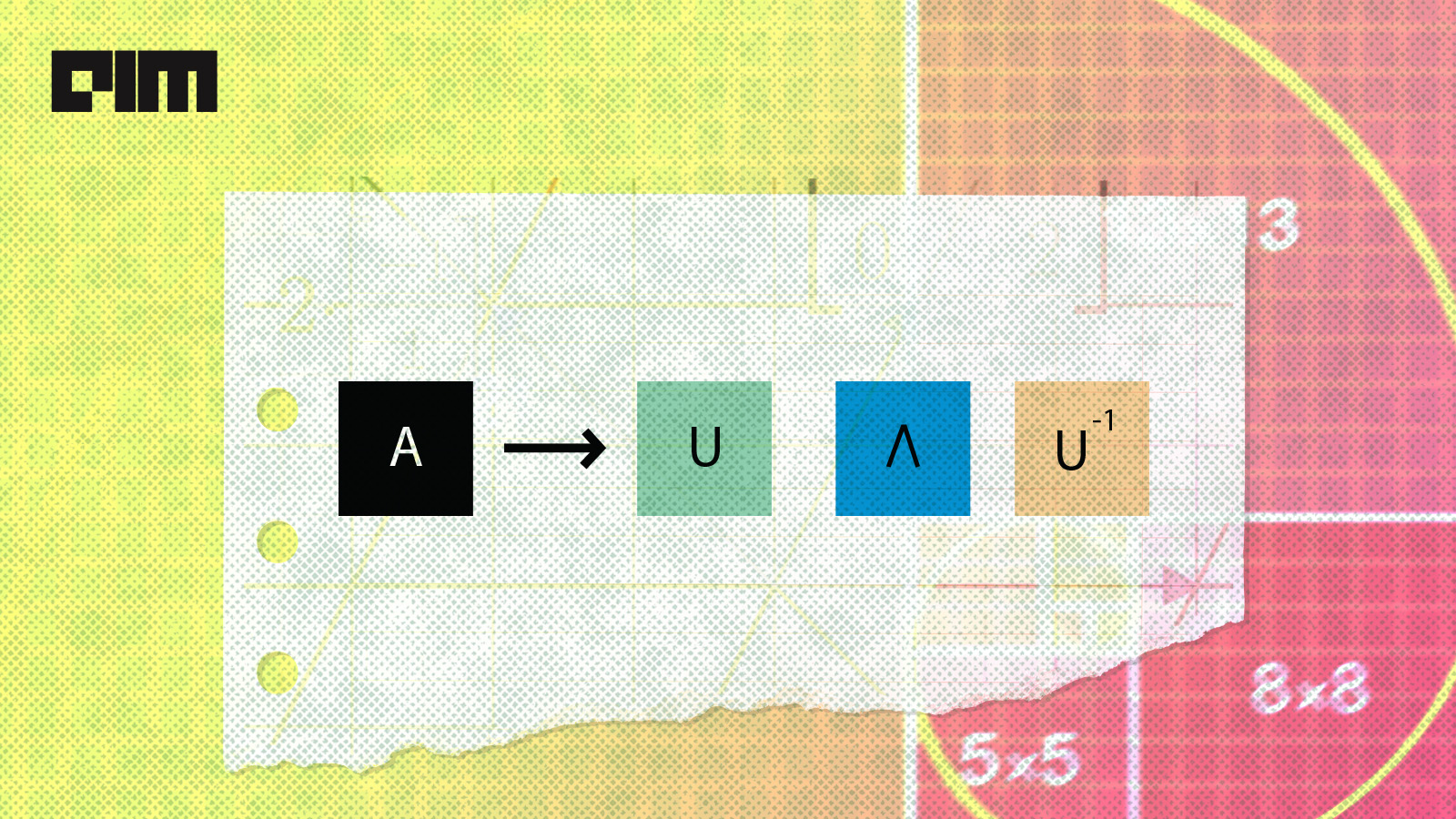

In linear algebra, eigendecomposition is the factorization of a matrix into a canonical form. After the factorization, the matrix is represented in terms of its eigenvectors and eigenvalues.

Developing a high-performing and accurate model is a blessing to a data scientist, but maintaining the privacy of the data while training is one of the tasks

Proplot is a wrapper around the matplotlib library for data visualization.

The base rate fallacy, also known as base rate bias or base rate neglect, arises when both base rate information and specific (individuating) information are available, and the base rate is ignored in favor of the individuating information.
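
A small worked example shows how ignoring the base rate misleads. The numbers are assumptions for illustration only: a condition with a 1% base rate, and a test with 99% sensitivity and a 5% false positive rate. The function name `posterior` is likewise hypothetical.

```python
def posterior(base_rate, sensitivity, false_positive_rate):
    """Bayes' rule: probability of the condition given a positive test."""
    true_positives = base_rate * sensitivity
    false_positives = (1 - base_rate) * false_positive_rate
    return true_positives / (true_positives + false_positives)


# Illustrative numbers, not taken from the article.
p = posterior(base_rate=0.01, sensitivity=0.99, false_positive_rate=0.05)
print(round(p, 3))  # 0.167
```

Focusing only on the test's 99% sensitivity suggests near certainty, yet with a 1% base rate only about one positive result in six reflects a true case.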

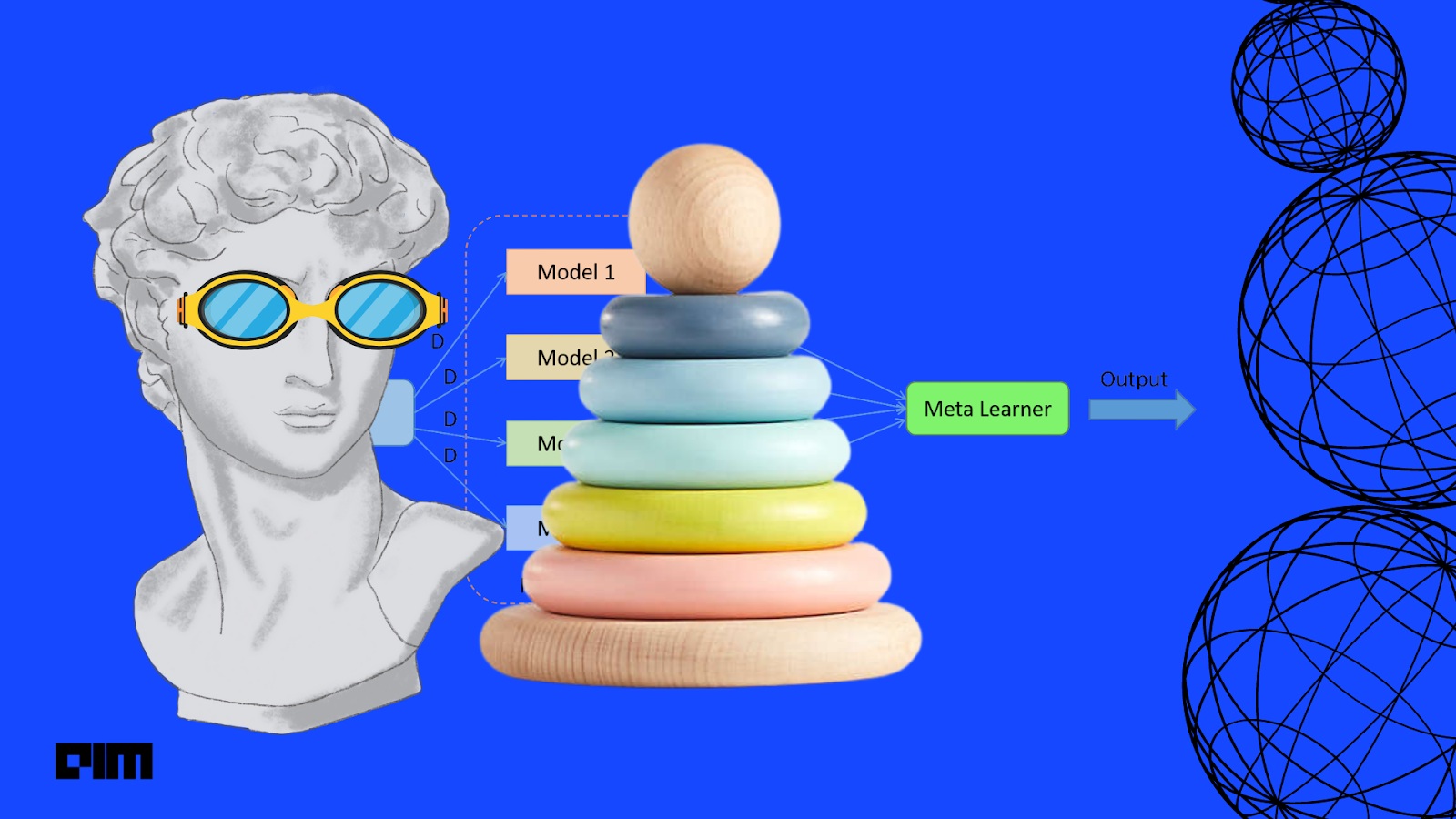

The idea behind the stacking ensemble method is to handle a machine learning problem using different types of models, each capable of learning part of the problem space but not the whole of it. These models make intermediate predictions, and a new model is then added that learns from those intermediate predictions.

© Analytics India Magazine Pvt Ltd & AIM Media House LLC 2024