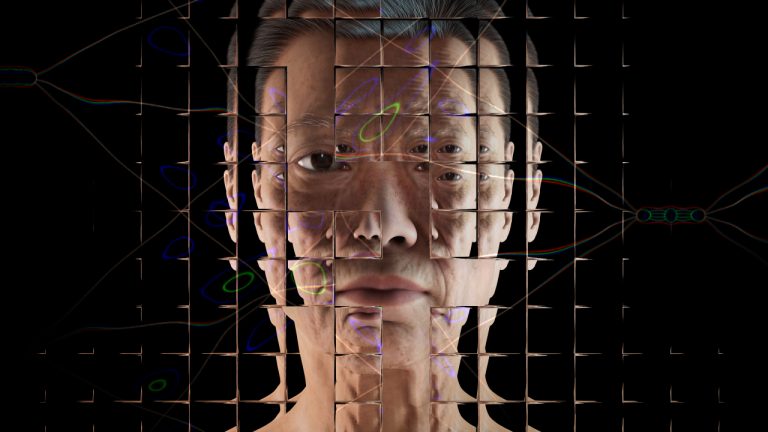

Clearview AI, a facial recognition startup, has been in the news lately for all the wrong reasons. Ever since its practices were widely reported, attention has focused on its controversial use of publicly available photos (its database reportedly holds 3 billion of them) and on what such AI tools mean for privacy as we know it today.

A Bit Of Background

According to its website, Clearview AI develops tools that help law enforcement agencies accurately, reliably and lawfully identify suspects, as well as the victims upon whom they prey.

Clearview was founded by Hoan Ton-That, who is also the CEO of the New York City-based startup. Ton-That, an Australian of Vietnamese descent, and his colleagues built a computer vision model that was later used to catch criminals. Clearview's models search a large database of images showing millions of people in various settings. Police departments have had access to facial recognition tools for many years, but until now those tools were limited to searching images provided by governments.

With Clearview AI, law enforcement agencies can now track down an anonymous suspect simply by uploading a photo, which is then matched against the images in the database and the information attached to them.
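Clearview has not published how its matching works. As a rough, generic illustration only: facial recognition systems commonly turn each face into a numeric embedding vector using a neural network and then look for the most similar stored vector. The sketch below assumes the embeddings already exist; the toy three-dimensional vectors, the names `cosine_similarity`, `best_match` and `GALLERY`, and the 0.6 threshold are all hypothetical. It returns no match when even the best score falls below the threshold, which hints at why such results still need human follow-up.

```python
import math
from typing import Dict, List, Optional, Sequence, Tuple


def cosine_similarity(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine of the angle between two vectors (1.0 means same direction)."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # a zero vector has no direction to compare
    return dot / (norm_a * norm_b)


def best_match(
    query: Sequence[float],
    gallery: Dict[str, List[float]],
    threshold: float = 0.6,  # hypothetical cut-off, not any real system's value
) -> Tuple[Optional[str], float]:
    """Return (label, score) of the most similar gallery embedding,
    or (None, score) if even the best score is below the threshold."""
    best_label: Optional[str] = None
    best_score = -1.0
    for label, embedding in gallery.items():
        score = cosine_similarity(query, embedding)
        if score > best_score:
            best_label, best_score = label, score
    if best_score < threshold:
        return None, best_score
    return best_label, best_score


# Hypothetical toy "embeddings"; a real system would use vectors
# produced by a face recognition model, with far more dimensions.
GALLERY = {
    "person_a": [1.0, 0.0, 0.0],
    "person_b": [0.0, 1.0, 0.0],
    "person_c": [0.0, 0.0, 1.0],
}

if __name__ == "__main__":
    print(best_match([0.9, 0.1, 0.0], GALLERY))  # close to person_a
    print(best_match([1.0, 1.0, 1.0], GALLERY))  # equally far from all: no match
```

A linear scan like this is fine for a handful of entries; at the scale of billions of photos, a system would typically rely on an approximate nearest-neighbour index instead.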

Clearview's tools have also been praised by law enforcement officials for revolutionising victim identification.

The existence of a service like Clearview AI in the US is perplexing, given that several states have passed laws regulating the use of this technology. Clearview tries to address scepticism about facial recognition on its website, where it claims the following:

- We search the open web and do not – and cannot – search for any private or protected info

- It is not a surveillance system and is not built like one

- The results legally require follow-up investigation and confirmation

So far, this sounds reassuring. But according to The New York Times, in 2009 Ton-That created a site that was branded a phishing site: it let people share links to videos with all the contacts in their instant messenger accounts.

The bad news for Clearview does not end there. Since the start of the year, the company has come under intense pressure from both the media and the courts. In one case, Clearview was accused of storing the biometric data of Illinois residents without notifying them.

Added to that are recent reports of a security lapse that exposed the source code of Clearview's tools. This is, after all, a company that scrapes images off the web and provides them to law enforcement agencies.

A Swansong To Privacy

Whenever the world is pushed to its limits, measures once thought radical suddenly become the new norm, and policies that sound ridiculous in peacetime are followed religiously. People who vociferously denounced surveillance are now volunteering their data to help enforce public health measures such as social distancing. What happens to all this data once the pandemic ends? Tech companies have, of course, proclaimed the good intentions behind their innovations, but the road to hell is often paved with good intentions.

A security lapse like Clearview's can compromise people's identities. Added to this are the vulnerabilities of machine learning models, which researchers are still working to harden against adversarial attacks. There is a reason why we do not have fully autonomous vehicles yet!

Whatever reasons there were for delaying the deployment of AI-based technologies such as facial recognition, and of other surveillance applications for contact tracing, have suddenly evaporated with the advent of COVID-19. Nation after nation has rolled out its own app to promote public health. With machine learning, there is no such thing as 100% reliability, and the same goes for policymakers' regulations. All one can do going forward is accept that the world has changed for good and learn how one's data can be used.