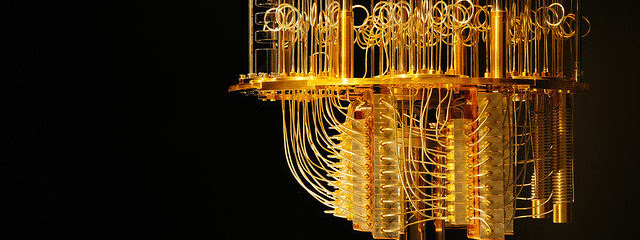

Researchers from the University of Sydney have developed an algorithm that characterises noise, one of the major obstacles to building large-scale quantum computers. The researchers believe the tool is highly scalable, and they have tested it on the IBM Quantum Experience device.

A team of researchers led by Dr Robin Harper noted that noise is the central obstacle to building large-scale quantum computers. Once this challenge is addressed, quantum computers will be more efficient at solving computational problems that are currently a big challenge.

What Did The Researchers Do?

According to the researchers, noise is the main obstacle to building large-scale quantum computers. They have tried to tame the noise, which takes the form of interference and instability, by understanding exactly how it affects the quantum system. While this information has been available for small-scale devices, it has not been understood for large-scale quantum devices to date.

In the research paper, they detail a protocol that completely and efficiently characterises the error rates of quantum noise. The method returns an estimate of the effective noise with relative precision and detects all correlated errors, which enabled the discovery of long-range two-qubit correlations in the 14-qubit device that had never been detected before. Current methods of characterising noise become infeasible as device sizes approach 10 or more qubits.
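To make the idea of "correlated errors" concrete, here is a minimal Python sketch. It is a toy illustration, not the researchers' protocol: it assumes a hypothetical joint distribution of error events on two qubits (the numbers in `joint` are invented) and compares the probability that both qubits fail with the product of their individual error probabilities. A nonzero difference means the errors are not independent.

```python
import numpy as np

# Hypothetical joint probabilities of error events on two qubits.
# joint[a, b] = P(qubit A error == a, qubit B error == b); values are invented.
joint = np.array([
    [0.90, 0.03],   # A ok:    B ok, B error
    [0.03, 0.04],   # A error: B ok, B error
])

def error_covariance(joint):
    """Return P(both err) - P(A err) * P(B err) for a 2x2 joint table."""
    p_a = joint[1, :].sum()      # marginal error probability on qubit A
    p_b = joint[:, 1].sum()      # marginal error probability on qubit B
    return joint[1, 1] - p_a * p_b

cov = error_covariance(joint)
print(f"covariance of error events: {cov:.4f}")
```

For independent errors the covariance would be zero, so a clearly positive value like the one produced by this invented table is the kind of signature a correlation-sensitive method looks for.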

Dr Harper, who is a postdoctoral researcher at the University of Sydney Nano Institute and part of the Australian Research Council Centre of Excellence for Engineered Quantum Systems, said, “The results are the first implementation of provably rigorous and scalable diagnostic algorithms capable of being run on current quantum devices and beyond.” The results are crucial for designing next-gen quantum devices.

Making Quantum Computers Efficient

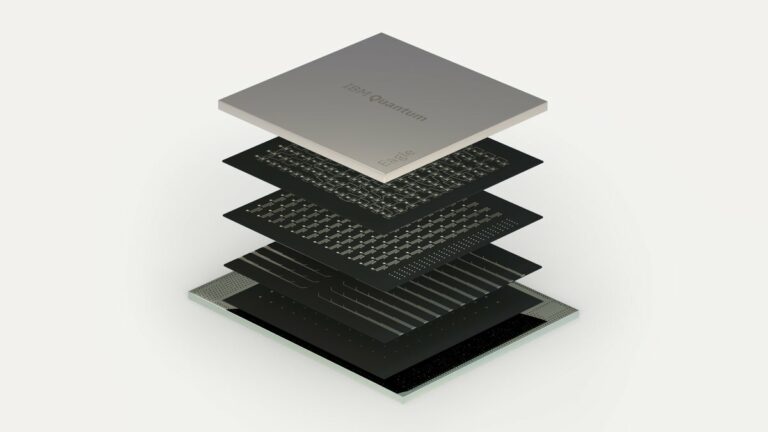

Useful large-scale quantum computers require both careful calibration to reduce errors and some form of error correction before scalable, universal quantum computing can be realised. It is also important to identify error sources.

Error correction routines that are optimised for the specific noise in a system can dramatically outperform generic ones. Identifying these errors depends on the ability to characterise the noise in large quantum systems.

The researchers implemented a protocol that allows them to learn a complete description of the effective qubit error rates in a large-scale quantum device. The method is immune to systematic bias due to state preparation and measurement (SPAM) errors and achieves both high precision and accuracy.
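One common way for benchmarking-style methods to avoid SPAM bias is to fit a decay over sequence length instead of trusting any single measurement. The Python sketch below illustrates that general idea only; it is not the paper's estimator. It assumes an idealised signal of the form `A * p**m` with no shot noise, where the hypothetical prefactor `A` absorbs SPAM errors and `p` is the per-cycle decay rate. The names `fit_decay`, `A` and `true_p` are chosen here for illustration.

```python
import numpy as np

def fit_decay(lengths, signal):
    """Fit signal ~ A * p**m by linear regression on log(signal)."""
    slope, intercept = np.polyfit(lengths, np.log(signal), 1)
    return np.exp(intercept), np.exp(slope)   # (A, p)

lengths = np.array([1, 2, 4, 8, 16, 32])
true_p = 0.98                                  # assumed per-cycle decay rate

for A in (1.0, 0.9, 0.7):                      # different assumed SPAM quality
    signal = A * true_p ** lengths             # idealised data, no shot noise
    A_fit, p_fit = fit_decay(lengths, signal)
    print(f"A = {A:.2f} -> fitted A = {A_fit:.3f}, fitted p = {p_fit:.4f}")
```

Under these assumptions the fitted `p` is the same for every value of `A`, which is why a worse state preparation or readout changes the height of the curve but not the estimated error rate.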

Earlier work, which relied on process tomography and gate set tomography, does not scale past a handful of qubits, even when sophisticated techniques such as compressed sensing are used. Other methods, such as randomised benchmarking, can scale in principle but provide only an incomplete description of the noise.
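A simple parameter count shows why full tomography stops being practical so quickly. A general trace-preserving quantum process on n qubits has 16^n − 4^n free real parameters, a standard result that the article itself does not state. The short Python sketch below tabulates that number for a few device sizes; the function name `process_parameters` is illustrative.

```python
def process_parameters(n_qubits):
    """Free real parameters of a general n-qubit trace-preserving process."""
    d = 2 ** n_qubits            # Hilbert-space dimension
    return d ** 4 - d ** 2       # equals 16**n - 4**n

for n in (1, 2, 5, 10, 14):
    print(f"{n:2d} qubits: {process_parameters(n):.3e} parameters")
```

The count grows by a factor of roughly 16 with every added qubit, so a method that tries to learn every parameter cannot keep up with devices of 10 or more qubits.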

Addressing these challenges, the new protocol developed by the researchers can carry out cycle benchmarking, character benchmarking, and correlation benchmarking. Rather than estimating individual average fidelities one at a time, it can evaluate all of them simultaneously. It also allows correlations to be identified and quantified.

The Way Forward

The researchers said that their experiment gives the first demonstration of a protocol that is practical, relevant, and immediately applicable to characterising error rates and correlated errors in present-day devices with a large number of qubits. They believe that it can open myriad opportunities for novel diagnostic tools and practical applications and increase the scale of quantum computers.

Read the complete paper here.