In the last talk of day one of Computer Vision DevCon 2020, Brandon Gilles, CEO at Luxonis, presented what he described as the world's first embedded spatial AI platform: the OpenCV AI Kit (OAK). An embedded spatial AI platform gives applications an immense capability to imitate human-level perception. This matters because specific perception and interaction tasks that conventionally could only be solved by a person can now be performed by embedded systems.

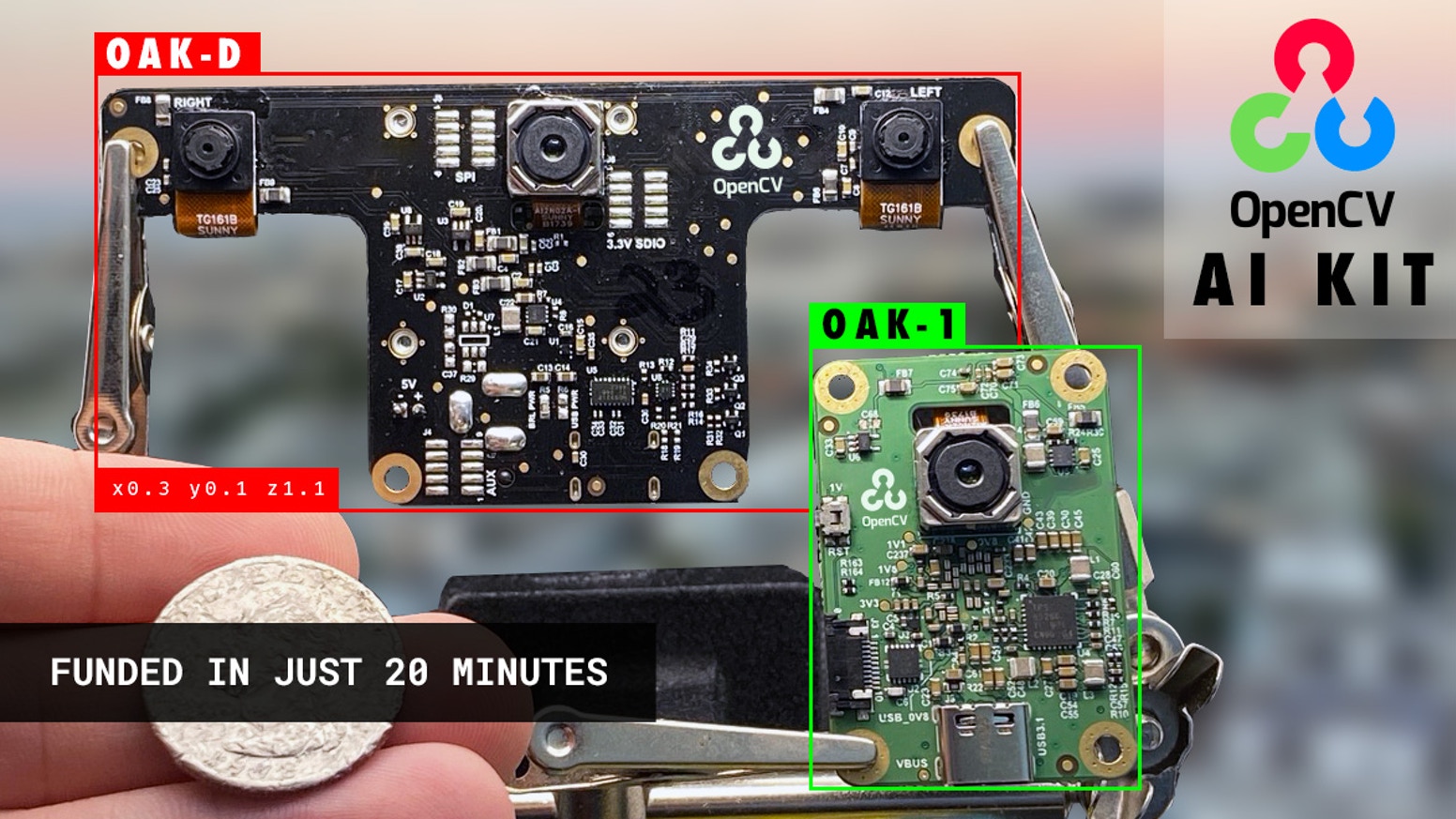

The talk starts with an explanation of OAK-1 and OAK-D and then describes how the OpenCV AI Kit uses two "eyes" (a stereo camera pair) to perceive depth, in particular the distance to objects. Case in point: such a system can quickly tell a good onion from a bad one on a conveyor belt and throw the bad one back into the field.

OAK-D

According to Gilles, OAK-D has a built-in 12-megapixel 4K colour camera with very high resolution and excellent optics. It also has a synchronised pair of 1280 x 800 (one-megapixel) global-shutter cameras for stereo depth, along with an AI processor. These capabilities come in handy when building robotic applications that try to mimic human perception in tiny devices.

OAK-D for visual assistance, including comparison with Azure Kinect DK: https://t.co/hYaBYWogYi

— Luxonis | Embedded Spatial AI + CV (@luxonis) August 8, 2020

It also comes with 20 dedicated processors for common vision operations such as stereo depth, dewarping, edge detection, line detection, motion estimation and background subtraction, plus 16 processors designed explicitly for visual processing, which let users run their own vectorised code.

“The product build-out of this OAK-D is designed to be modular, which is going to disrupt the market, disrupt the technology and is going to be used in all sorts of different ways that we can’t imagine,” said Gilles.

OAK-D comes with three cameras as standard, connected with flexible flat cables (from 6 inches up to 12 inches long) so that users can set a custom stereo baseline: some people use them to put the cameras close together, while others put them far apart.
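The trade-off between close and wide camera spacing follows from the textbook pinhole stereo relation Z = f * B / d, where Z is depth, f the focal length in pixels, B the baseline and d the disparity in pixels. The sketch below is a minimal illustration under that model only; the focal length, the two baselines and the maximum disparity are hypothetical example values rather than OAK-D specifications, and the function names are our own.

```python
def depth_from_disparity(focal_px: float, baseline_m: float, disparity_px: float) -> float:
    """Depth in metres from the pinhole stereo relation Z = f * B / d."""
    if disparity_px <= 0:
        raise ValueError("disparity must be positive")
    return focal_px * baseline_m / disparity_px


def depth_step(focal_px: float, baseline_m: float, depth_m: float,
               disparity_error_px: float = 1.0) -> float:
    """Approximate depth error at depth_m caused by a disparity error: dZ ~ Z^2 / (f * B) * dd."""
    return depth_m ** 2 / (focal_px * baseline_m) * disparity_error_px


def min_depth(focal_px: float, baseline_m: float, max_disparity_px: float) -> float:
    """Closest measurable depth when the disparity search is capped at max_disparity_px."""
    return depth_from_disparity(focal_px, baseline_m, max_disparity_px)


if __name__ == "__main__":
    FOCAL_PX = 800.0        # hypothetical focal length in pixels
    MAX_DISPARITY_PX = 96   # hypothetical disparity search range in pixels
    for baseline in (0.075, 0.30):  # hypothetical narrow and wide baselines, in metres
        print(
            f"B={baseline:.3f} m: "
            f"error at 2 m ~ {depth_step(FOCAL_PX, baseline, 2.0):.3f} m, "
            f"min depth {min_depth(FOCAL_PX, baseline, MAX_DISPARITY_PX):.3f} m"
        )
```

With these assumed numbers, widening the baseline from 7.5 cm to 30 cm shrinks the depth error caused by a one-pixel disparity error at 2 m from about 6.7 cm to about 1.7 cm, but pushes the closest measurable distance out from 0.625 m to 2.5 m. That is why close-together cameras suit near-range work and far-apart cameras suit long-range work.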

Our Kickstarter campaign for OpenCV AI Kit (OAK) surpassed $1M in funding with 40 hours to go!https://t.co/iofFlZQjmR

— Satya Mallick (@LearnOpenCV) August 11, 2020

OAK-1 and OAK-D will come in a beautiful aluminium case. Thanks all our backers, and people who have supported us!#AI #ComputerVision #OpenCV #DeepLearning pic.twitter.com/eFsonjxZSl

Furthermore, the system offers 3D object tracking with unique IDs, automatic motion-based lossless zooming, motion estimation, and JPEG and MPEG encoding.
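Gilles did not describe how OAK assigns its tracking IDs. As an illustration of what "3D tracking with unique IDs" means, here is a minimal greedy nearest-neighbour tracker in Python. The class name, the 0.5 m matching radius and the matching strategy are assumptions for this sketch; production trackers typically add motion models and keep tracks alive across missed frames.

```python
import itertools
import math


class CentroidTracker3D:
    """Hypothetical greedy nearest-neighbour tracker giving each 3D detection a persistent ID."""

    def __init__(self, max_distance_m: float = 0.5):
        self.max_distance_m = max_distance_m  # assumed matching radius in metres
        self.tracks = {}                      # track ID -> last (x, y, z) position
        self._next_id = itertools.count()     # source of fresh unique IDs

    def update(self, detections):
        """detections: list of (x, y, z) in metres. Returns one track ID per detection."""
        ids = [None] * len(detections)
        unmatched_tracks = set(self.tracks)
        # Score every (track, detection) pair and match the closest pairs first.
        pairs = sorted(
            (math.dist(self.tracks[t], d), t, i)
            for t in self.tracks
            for i, d in enumerate(detections)
        )
        for dist, t, i in pairs:
            if dist > self.max_distance_m:
                break  # remaining pairs are even farther apart
            if t in unmatched_tracks and ids[i] is None:
                ids[i] = t
                unmatched_tracks.discard(t)
        # Detections that matched no existing track start new tracks.
        for i in range(len(detections)):
            if ids[i] is None:
                ids[i] = next(self._next_id)
        # Tracks not seen in this frame are dropped (no re-identification here).
        self.tracks = {ids[i]: d for i, d in enumerate(detections)}
        return ids


if __name__ == "__main__":
    tracker = CentroidTracker3D()
    print(tracker.update([(0.0, 0.0, 2.0), (1.0, 0.0, 3.0)]))
    # The same two objects, moved slightly and reported in a different order:
    print(tracker.update([(1.1, 0.0, 3.05), (0.05, 0.0, 2.0)]))
```

The second call returns the IDs in swapped order because each object keeps its identity even though the detector listed it in a different position.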

Difference between OAK-1 & OAK-D

- OAK-1 is a smart camera with real-time neural inference, whereas OAK-D is a smart camera with real-time neural inference as well as depth perception.

- OAK-1 is a USB3C device with an onboard camera, whereas OAK-D is equipped with Power over Ethernet (PoE) and modular cameras.

- In terms of camera specifications, OAK-1 has a 4056 x 3040 colour camera, whereas OAK-D pairs the same 12-megapixel colour camera with two 1280 x 800 stereo cameras.

- OAK-1's colour camera has an 81° diagonal field of view (DFOV) and a 68.8° horizontal field of view (HFOV), while OAK-D's stereo cameras have a 71.8° HFOV.
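The resolutions and fields of view above can be cross-checked with a little arithmetic: the pixel count gives the megapixel figure, and the pinhole camera model gives the focal length in pixels as f = (W / 2) / tan(HFOV / 2). The helper names below are our own, and treating the lenses as ideal pinholes (ignoring distortion) is an assumption.

```python
import math


def megapixels(width_px: int, height_px: int) -> float:
    """Sensor resolution in megapixels."""
    return width_px * height_px / 1e6


def focal_length_px(width_px: int, hfov_deg: float) -> float:
    """Ideal pinhole model: f = (W / 2) / tan(HFOV / 2), in pixels."""
    return (width_px / 2) / math.tan(math.radians(hfov_deg) / 2)


if __name__ == "__main__":
    print(f"colour camera: {megapixels(4056, 3040):.2f} MP")
    print(f"stereo camera: {megapixels(1280, 800):.2f} MP")
    print(f"stereo focal length: {focal_length_px(1280, 71.8):.0f} px")
```

4056 x 3040 works out to about 12.3 MP and 1280 x 800 to about 1.0 MP, matching the 12-megapixel and one-megapixel figures quoted earlier; a 71.8° HFOV across 1280 pixels corresponds to a focal length of roughly 884 pixels under the pinhole assumption.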

Use Cases

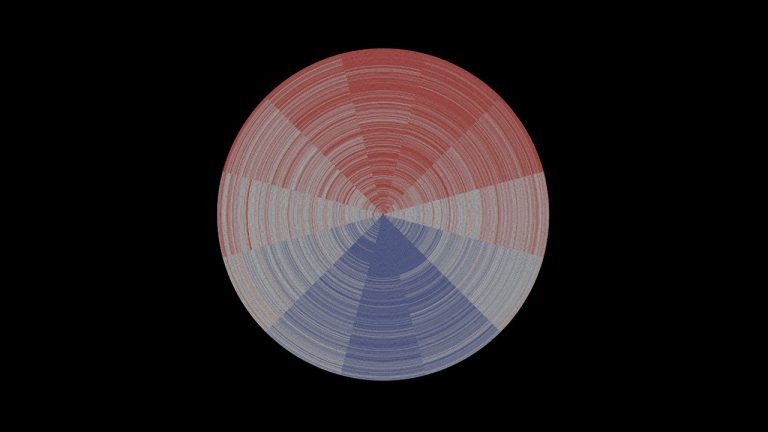

Although the system can be used for a variety of applications, its best use so far has been a computer vision pipeline for visually impaired people. The system allows visually impaired and completely blind people to perceive the world in real time through shaped audio, a form of aided echolocation.

“So a fully blind person can start training using neuroplasticity and can train their occipital lobe to pull information from the ears,” said Gilles. “They can now visualise the world leveraging computer vision, which can produce the sounds that then gives the blind person the visualisation, just like a sighted person.”
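The talk does not specify how the shaped audio is generated. One simple way to turn a detected object's 3D position into a directional sound cue is to pan it left or right according to its bearing and make it louder as it gets closer. The Python sketch below does this with constant-power stereo panning; the coordinate convention (x to the right, z forward, in metres), the 5 m range and the linear loudness falloff are all assumptions, not details of the system Gilles described.

```python
import math


def spatial_cue(x_m: float, z_m: float, max_range_m: float = 5.0):
    """Map an object's position (x right, z forward, metres) to (left_gain, right_gain)."""
    # Bearing of the object: 0 = straight ahead, positive = to the right.
    azimuth = math.atan2(x_m, z_m)
    # Clamp to the frontal half-plane; objects behind are treated as fully left/right.
    azimuth = max(-math.pi / 2, min(math.pi / 2, azimuth))
    # Constant-power panning: pan angle 0 is full left, pi/2 is full right.
    pan = (azimuth + math.pi / 2) / 2
    # Assumed loudness model: 1 at the camera, fading linearly to 0 at max_range_m.
    distance = math.hypot(x_m, z_m)
    loudness = max(0.0, 1.0 - distance / max_range_m)
    return loudness * math.cos(pan), loudness * math.sin(pan)


if __name__ == "__main__":
    print(spatial_cue(0.0, 2.0))   # straight ahead: equal gain in both ears
    print(spatial_cue(2.0, 0.0))   # hard right: almost all gain in the right ear
```

Constant-power panning keeps left_gain² + right_gain² equal to loudness², so an object does not seem to get quieter as it moves across the listener's field of view.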

The second use case is precision agriculture, where robots are deployed to perceive and harvest fruits and vegetables, and to quantify ripeness and other metrics so that produce can be sorted in-process. Here, spatial information is critical.

Explaining this, Gilles said that automated strawberry picking, zero-cost sorting with a robotic arm, estimating ripeness with a neural model, and detecting weeds on the farm can have tremendous business value and increase revenue. Low-cost embedded spatial AI will also help farmers practise organic farming, not only by killing pests with lasers but also by controlling pesticide usage on the farm.

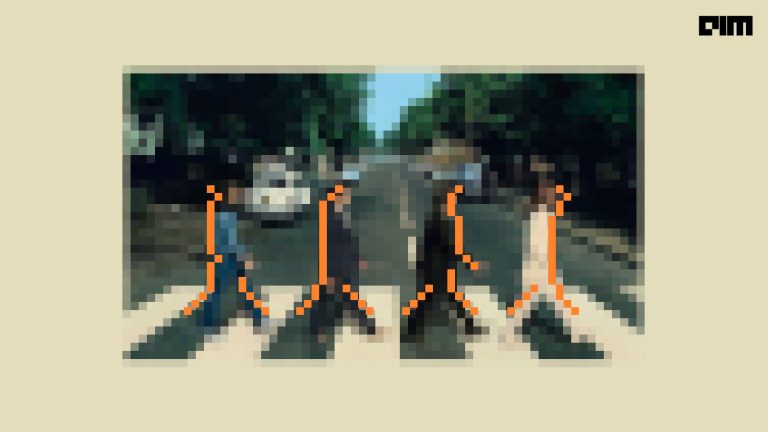

The third use case is health and safety, which is critical in the current COVID era. The system provides real-time, AI-based spatial awareness for safety solutions, including identifying people's positions in real time to check that they are maintaining social distancing.

“So whether it’s cleaning with UV robots or monitoring social distancing, with spatial AI, users can see a bird’s eye view of individual people maintaining social standards,” concluded Gilles.
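A bird's-eye view like the one Gilles describes can be approximated by dropping each person's height coordinate and measuring distances on the ground plane. The sketch below assumes person positions in metres in a camera frame where y is the vertical axis and the camera is roughly level, and uses a hypothetical 2 m threshold; it is an illustration, not the system's actual implementation.

```python
import itertools
import math


def to_birds_eye(points_xyz):
    """Project camera-frame (x, y, z) points onto the ground plane by dropping y."""
    return [(x, z) for x, _y, z in points_xyz]


def distancing_violations(points_xyz, min_distance_m=2.0):
    """Return (i, j, distance) for every pair of people closer than min_distance_m."""
    ground = to_birds_eye(points_xyz)
    violations = []
    for (i, a), (j, b) in itertools.combinations(enumerate(ground), 2):
        d = math.dist(a, b)
        if d < min_distance_m:
            violations.append((i, j, d))
    return violations


if __name__ == "__main__":
    # Hypothetical spatial detections (x, y, z) in metres.
    people = [(0.0, 0.1, 2.0), (1.0, -0.2, 2.5), (4.0, 0.0, 6.0)]
    for i, j, d in distancing_violations(people):
        print(f"person {i} and person {j} are {d:.2f} m apart")
```

Ignoring the vertical axis means that a tall person and a seated person standing at the same spot on the floor count as the same ground position, which is what a top-down distancing check needs.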