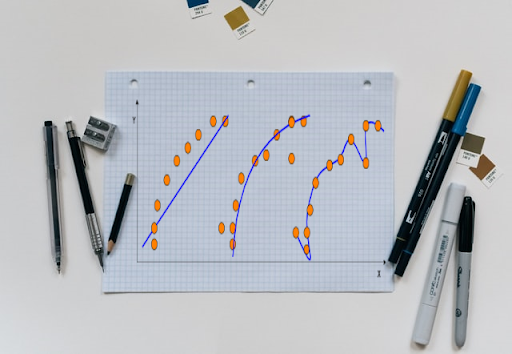

A machine learning model is only as good as the data it’s trained on, and as how well it learns from that data. Poor model performance can usually be traced to one of two problems: overfitting or underfitting. Overfitting happens when the model fits the training data ‘too well’. Underfitting refers to a model that can neither fit the training data nor generalize to new data.

Overfitting can be the result of an ML expert’s effort to make the model ‘too accurate’. In overfitting, the model learns the details and the noise in the training data to such an extent that its performance on new data suffers. The model picks up the noise and random fluctuations in the training data and learns them as concepts.

However, in real-world use, these concepts do not apply to new data, which hinders the model’s ability to generalize. Overfitting is more common with nonparametric and nonlinear models, which have more flexibility when learning a target function.
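
To make this concrete, here is a minimal sketch using k-nearest-neighbours regression, a nonparametric model. The toy target (a noisy sine curve), the sample sizes, and the helper names `make_data`, `knn_predict`, and `mse` are assumptions chosen purely for illustration. With k=1 the model memorizes every training point, noise included, so its training error is zero, yet it still makes errors on fresh data drawn from the same curve.

```python
import math
import random

def knn_predict(train, x, k):
    """Average the targets of the k training points closest to x."""
    nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
    return sum(y for _, y in nearest) / k

def mse(train, data, k):
    """Mean squared error of a k-NN regressor built on `train`, scored on `data`."""
    return sum((knn_predict(train, x, k) - y) ** 2 for x, y in data) / len(data)

def make_data(rng, n, noise=0.3):
    """Sample n points from y = sin(x) plus Gaussian noise (an assumed toy target)."""
    xs = [rng.uniform(0, 2 * math.pi) for _ in range(n)]
    return [(x, math.sin(x) + rng.gauss(0, noise)) for x in xs]

rng = random.Random(0)
train = make_data(rng, 200)
test = make_data(rng, 200)  # fresh data the model has never seen

for k in (1, 9):
    train_err = mse(train, train, k)
    test_err = mse(train, test, k)
    print(f"k={k}: train MSE={train_err:.3f}, test MSE={test_err:.3f}")
```

The gap between training and test error for k=1 is the signature of overfitting. A larger k averages over neighbours and so cannot memorize individual noisy points; on data like this it will typically trade a little training accuracy for better test accuracy, though the exact numbers depend on the seed and noise level.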

So, what’s wrong with overfitting?

An overfitted model is shaped to accommodate the eccentricities of a particular dataset; it produces good results on that dataset but fails to generalize to other data. In many cases, what the model has learned is a ‘non-data’ aspect of the training dataset: an oddity that might not repeat in other real-world datasets.

“Due to overfitting, a model will not be able to achieve generalization, which means the model(s) trained on one dataset has limited applicability on unseen data in real situations. This, in turn, reduces the ability of the model to discriminate patterns and predict correct results and can lead to drastic consequences especially in domains like healthcare, automotive and industrial,” said Sindhu Ramachandran, Practice Leader – Deep Learning & Analytics, QuEST Global.

Moreover, since the model is so closely fitted to a given training dataset, it may replicate that dataset’s inherent biases against community, gender, and race. Many mistakenly believe overfitting can be solved simply by making the model more flexible, giving it more parameters and adding more features. Though simple, this is not an effective resolution to the problem; extra flexibility tends to make overfitting worse.

Famed mathematician John von Neumann once said, “With four parameters I can fit an elephant, and with five I can make him wiggle his trunk.”

Von Neumann meant that one should not be impressed when a complex model fits the data. With enough parameters, a model can be made to fit any data set.
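
A small sketch of that idea, under assumed conditions: five data points whose targets are pure random noise, and a degree-four polynomial (five coefficients) built by Lagrange interpolation. The helper `lagrange_fit` and the data are hypothetical, made up for this illustration. The polynomial passes through every point, even though there is no pattern to learn.

```python
import random

def lagrange_fit(points):
    """Return the polynomial of degree len(points) - 1 that passes through all points."""
    def poly(x):
        total = 0.0
        for i, (xi, yi) in enumerate(points):
            term = yi
            for j, (xj, _) in enumerate(points):
                if j != i:
                    term *= (x - xj) / (xi - xj)
            total += term
        return total
    return poly

rng = random.Random(42)
# Five targets that are pure noise: nothing here generalizes.
points = [(float(x), rng.uniform(-1, 1)) for x in range(5)]
model = lagrange_fit(points)

max_train_error = max(abs(model(x) - y) for x, y in points)
print(f"largest training error: {max_train_error:.2e}")
print(f"prediction just outside the data, at x=5.0: {model(5.0):.2f}")
```

A perfect fit on the training points says nothing about the prediction at x=5.0, which is determined entirely by the noise the polynomial was forced through.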

Dr. Siba Panda, Assistant Professor, SVKM’s NMIMS Mukesh Patel School of Technology Management & Engineering, said, “If your aim is to build an overfitting model, you could take more features into consideration or build a complex model. There would be two outcomes: First, the significant additional features may cause the processing to be too costly; second, a complex model will deliver the overfitting results in the training data set but not in the test data set. So always go for a simple path, step by step, using different techniques, such as hold-out, data augmentation, feature selection and regularization, if you have an overfitting model. To avoid the overfitting problems while building models to solve real-life issues faced by industries, there are two concepts that students should pay attention to: normalization/standardization and regularization.”

But, is it always bad?

In a discussion on trends to watch out for in computer vision, both Vahan Petrosyan, CTO at Sweden-based SuperAnnotate AI, and Louis Coopey, VC at Point Nine Land, agreed overfitting might have useful applications in computer vision in the future.

Taking the example of traffic management analysis, the duo wrote: “If your camera is fixed, you would rather build slightly different models for each camera and overfit your CV model for each camera. Although overfitting in machine learning has negative connotations, in such cases you would create far more accurate models for each fixed camera, rather than building a generic model for all cameras.”